Agentic AI just had a week. I watched five signals line up on Feb 14, 2026 and it changed what I’m building and how I’m shipping.

Quick answer: Agentic AI moved from hype to execution on Feb 14, 2026. Money went into governance, risk, and compliance (GRC), public markets reacted to an agentic pivot, security aligned on OWASP-style controls, infra added model options, and consumer bots crossed into romance. My takeaway is simple: tie agents to KPIs, ship with guardrails, keep models swappable, and design with consent upfront.

Enterprise money is voting

On Feb 14, 2026, Complyance raised a $20M Series A led by GV to modernize enterprise GRC with agentic AI. If you’ve ever lived inside governance, risk, and compliance, you know it is repetitive, rule-heavy, and evidence-obsessed. It is perfect terrain for agents that monitor, log, summarize, and nudge humans.

Why it matters

GRC is a safe, data-rich on-ramp for agent builders. The rules are explicit, the ROI is measurable, and the blast radius is small. If you want your first paid pilot, pick a single control, automate the checks, and output an audit-ready report. Think vendor risk intake, policy gap summaries, or evidence pulls from ticketing systems.

What I’m shipping

I’m building a weekly status agent for one control framework that reads tickets, logs, and docs, then drafts a paste-ready audit brief. Not flashy, very billable.

Markets are whipsawing

Also on Feb 14, 2026, UiPath’s stock fell 12.2% after the company leaned harder into agentic AI and announced a deal involving WorkFusion, as covered by Simply Wall St. I’m not here to do stock calls. The signal is what matters to me: moving from rules-first RPA to agent-first orchestration is bumpy, even for incumbents.

Lesson for builders

Enterprises buy outcomes, not philosophy. Pitch cycle time instead of hours saved and SLAs instead of prompts, and make the migration invisible to the business while you blend classic automations with agent patterns behind the scenes.

How I frame projects

I promise a conservative baseline using traditional automations, then layer agents for exception handling and cross-system reasoning. If the agent layer wobbles, the baseline still delivers.
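A minimal sketch of that layering, under stated assumptions: the routing rules, the queue names, and the `manual-review` fallback are all hypothetical, and `agent` stands in for whatever agent call you actually use. The point is only that known cases never depend on the agent.

```python
from typing import Callable, Optional


def baseline_route(ticket: dict) -> Optional[str]:
    """Rules-first automation: handles only the categories it recognizes."""
    rules = {"password_reset": "it-helpdesk", "invoice": "finance"}  # illustrative rules
    return rules.get(ticket.get("category", ""))


def handle(ticket: dict, agent: Callable[[dict], str]) -> dict:
    """Try the baseline first and use the agent only for exceptions."""
    queue = baseline_route(ticket)
    if queue is not None:
        return {"queue": queue, "handled_by": "baseline"}
    try:
        return {"queue": agent(ticket), "handled_by": "agent"}
    except Exception:
        # Assumption: when the agent layer fails, the ticket goes to a human queue.
        return {"queue": "manual-review", "handled_by": "fallback"}
```

Because the baseline runs first, an agent outage only affects the exception path, which is what makes the promise conservative.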

Security got a shared checklist

On Feb 14, 2026, Zenity highlighted an OWASP-aligned framework for securing agentic AI in enterprises, per TipRanks. Finally, a common language that security, platform, and app teams can point to without debating definitions.

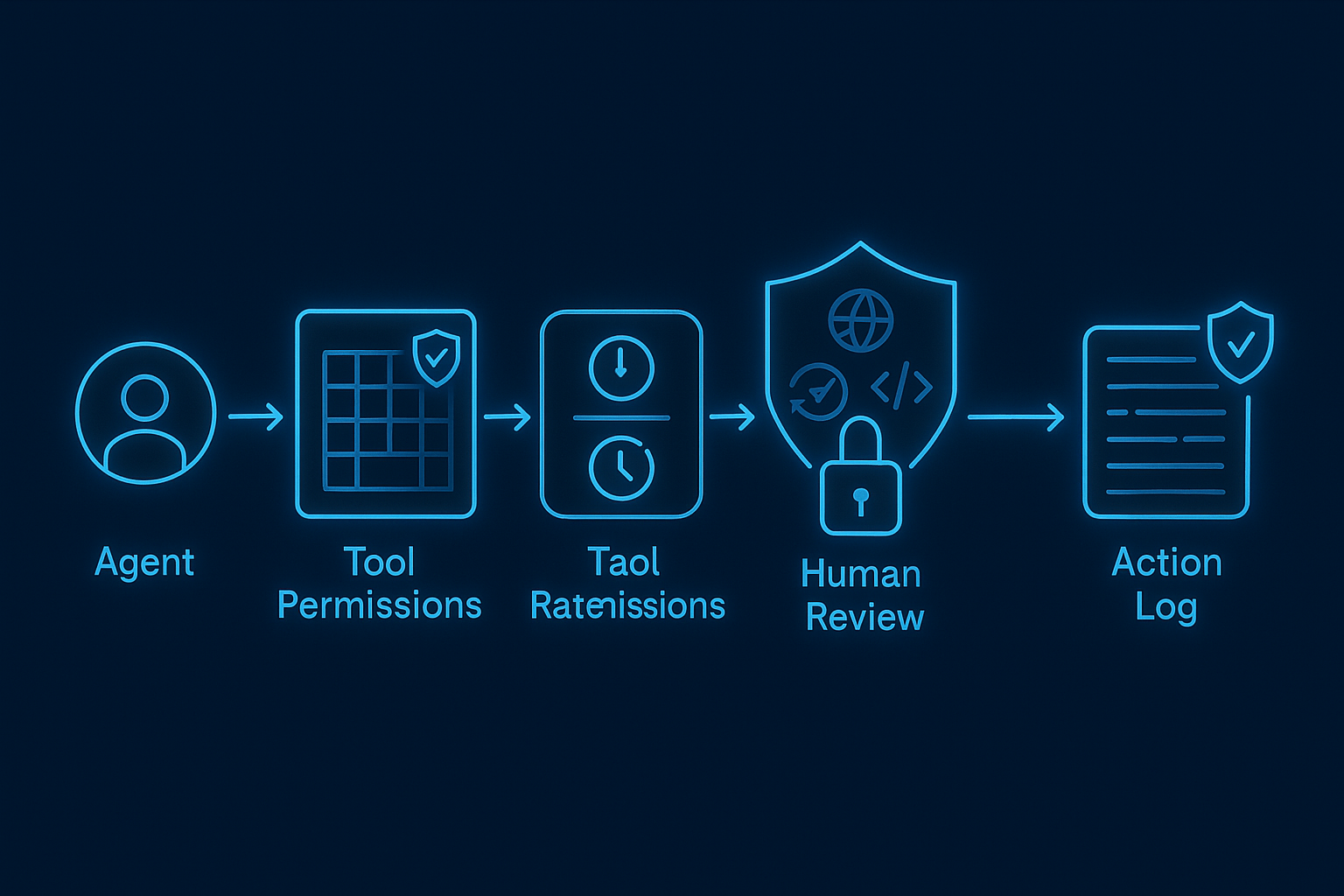

How I’m applying this today

I treat tools like production integrations. I log every tool call, scope and rate-limit access, and prove I can revoke permissions quickly. If an agent can browse, write code, or move money, I add human-in-the-loop steps with auditable justification. I also put a small policy shim in front of the agent that approves or denies actions using scoped JSON rules. Boring, effective, and it helps the CISO sleep.
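One way such a shim could look, as a rough sketch rather than a reference implementation. The rule schema (`allow`, `max_calls_per_minute`, `needs_human`), the tool names, and the `PolicyShim` class are assumptions made up for illustration. They do not come from OWASP or any vendor framework.

```python
import json
import time
from collections import defaultdict, deque

# Hypothetical scoped rules; in practice these would live in a reviewed config file.
RULES_JSON = """
{
  "search_tickets": {"allow": true, "max_calls_per_minute": 30, "needs_human": false},
  "send_payment":   {"allow": true, "max_calls_per_minute": 2,  "needs_human": true}
}
"""


class PolicyShim:
    def __init__(self, rules_json: str):
        self.rules = json.loads(rules_json)
        self.calls = defaultdict(deque)  # tool name -> timestamps of allowed calls
        self.audit_log = []              # every decision, allowed or denied

    def revoke(self, tool: str) -> None:
        """Fast revoke: drop the tool from scope so later calls are denied."""
        self.rules.pop(tool, None)

    def check(self, tool: str, args: dict, approved_by=None, now=None) -> bool:
        now = time.time() if now is None else now
        rule = self.rules.get(tool)
        if rule is None or not rule.get("allow", False):
            allowed, reason = False, "tool not in scope"
        else:
            window = self.calls[tool]
            while window and now - window[0] >= 60:  # keep a 60-second sliding window
                window.popleft()
            if len(window) >= rule["max_calls_per_minute"]:
                allowed, reason = False, "rate limit exceeded"
            elif rule.get("needs_human") and not approved_by:
                allowed, reason = False, "human approval required"
            else:
                window.append(now)
                allowed, reason = True, "ok"
        self.audit_log.append({"ts": now, "tool": tool, "args": args,
                               "approved_by": approved_by,
                               "allowed": allowed, "reason": reason})
        return allowed
```

The agent runtime would call `check` before every tool invocation and refuse to run anything that returns `False`. Denials are logged with a reason, so the audit trail shows what was blocked as well as what ran.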

Infra is getting more portable

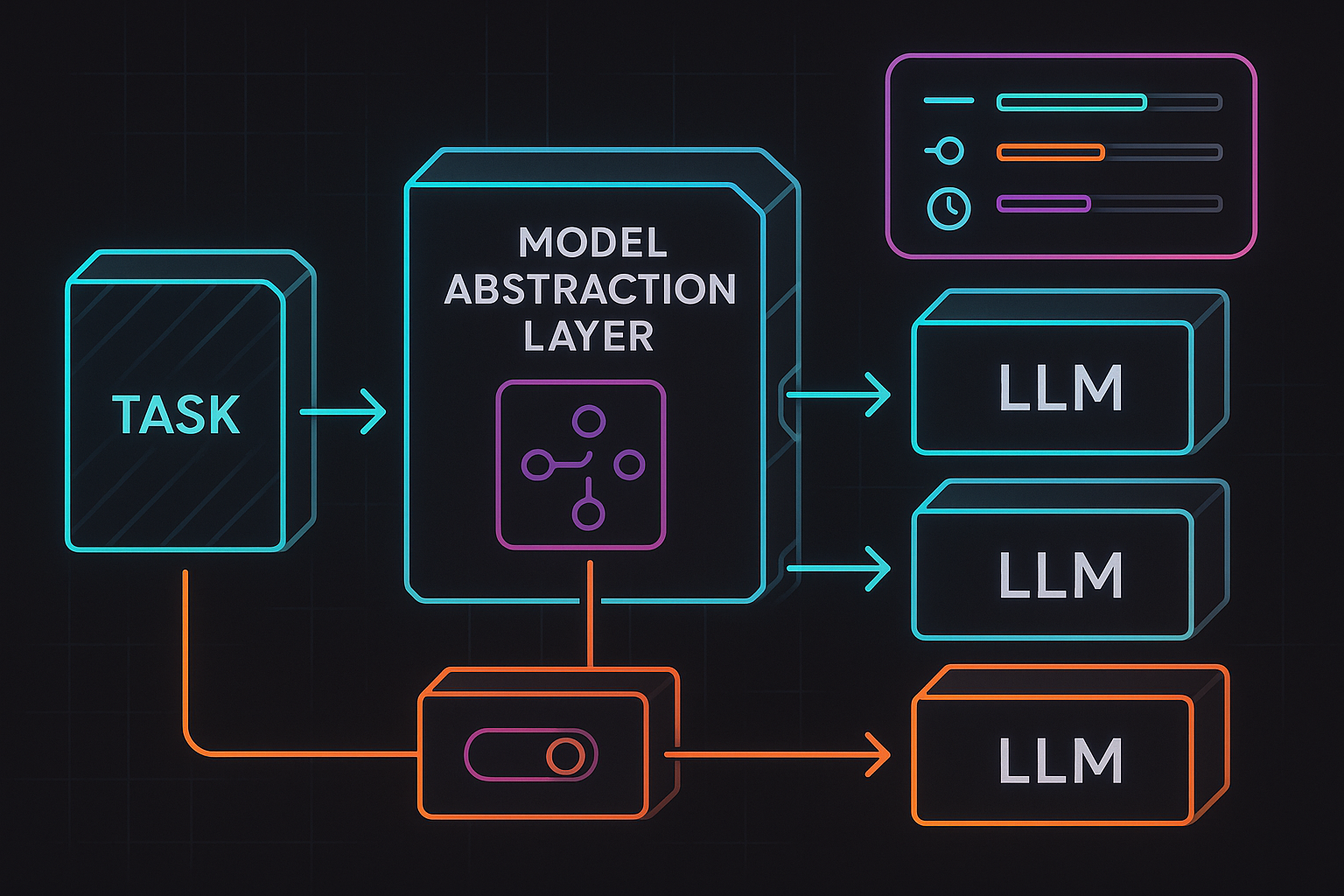

Also reported on Feb 14, 2026, GMI Cloud added MiniMax M2.5 to strengthen its agentic AI stack. I care less about the logo and more about options. Pricing shifts, latency matters, function-calling quality varies, and some tasks need longer context. My jobs rarely live on one model forever.

What I changed in my stack

I treat models as pluggable dependencies and talk through an abstraction that lets me swap providers without touching business logic. I run a tiny eval suite nightly on my own tasks, not generic benchmarks. If a new model wins on a narrow skill, I route that skill there. If it regresses, I fail back with one config change.
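A small sketch of what that abstraction might look like. The provider names (`model_a`, `model_b`), the skill names, and the config shape are placeholders, and each provider is reduced to a plain function from prompt to text. A real adapter would wrap a vendor SDK behind the same signature.

```python
from typing import Callable, Dict

ModelFn = Callable[[str], str]  # prompt in, completion out


class ModelRouter:
    """Business logic asks for a skill; config decides which provider serves it."""

    def __init__(self, providers: Dict[str, ModelFn], config: dict):
        self.providers = providers
        self.config = config

    def complete(self, skill: str, prompt: str) -> str:
        name = self.config["routes"].get(skill, self.config["default"])
        return self.providers[name](prompt)


# Stub providers standing in for real SDK adapters.
providers = {
    "model_a": lambda prompt: "A:" + prompt,
    "model_b": lambda prompt: "B:" + prompt,
}
config = {"default": "model_a", "routes": {"summarize": "model_b"}}
router = ModelRouter(providers, config)

router.complete("summarize", "weekly report")  # served by model_b
config["routes"].pop("summarize")              # fail back with one config change
router.complete("summarize", "weekly report")  # served by model_a again
```

Callers only ever name a skill, so promoting or demoting a model never touches business logic.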

Consumer reality check

On Feb 14, 2026, coverage flagged that bots are stepping into romance. If you’re building consumer agents, this is your line in the sand. You need intent transparency, consent, and boundaries baked into the product. This does not stop at dating. Your sales agent can cross lines if you do not design for it.

My rule for responsible UX

If an autonomous action could change a relationship, a record, or a reputation, make it opt-in, label it clearly, and offer a human handoff. I also make it easy to export every agent-authored message. People will want receipts. Give them up front.
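As a rough illustration of that rule, here is a sketch of an outbox that refuses to send without opt-in, labels what it sends, and can export everything. The `AgentOutbox` class, the label text, and the return values are invented for this example.

```python
import json
from dataclasses import dataclass, field


@dataclass
class AgentOutbox:
    user_opted_in: bool
    sent: list = field(default_factory=list)

    def send(self, recipient: str, text: str) -> str:
        if not self.user_opted_in:
            # No consent on file: do nothing autonomous, route to a person instead.
            return "handoff_to_human"
        labeled = f"[Sent by an AI agent] {text}"  # disclose autonomy in the message itself
        self.sent.append({"to": recipient, "text": labeled})
        return "sent"

    def export(self) -> str:
        """Return every agent-authored message as JSON: the receipts."""
        return json.dumps(self.sent, indent=2)
```

Putting the consent check inside `send` means no caller can skip it by accident.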

What I’m doing this week

Here is my exact plan so I stay ahead of the agentic AI curve without breaking trust or KPIs:

- Prototype a GRC micro-agent that assembles weekly audit evidence from tickets and docs, gated by human approval and a small policy shim.

- Add a nightly eval harness that compares my top two LLMs plus a rotating third, scored only on my tasks.

- Instrument per-tool guardrails with explicit scopes, rate limits, full action logs, and a fast revoke in admin.

- Write a one-page agent SLA that maps outputs to existing KPIs like cycle time, error rate, and review steps.

- Ship UX copy that discloses autonomy, asks for consent, and offers a human fallback where it matters.
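The nightly eval item in the plan above could start as small as the sketch below. The task format (a prompt plus a pass/fail check), the 0.05 promotion margin, and the function names are assumptions. The models are plain callables, so any provider adapter fits.

```python
from typing import Callable, Dict, List, Tuple

Model = Callable[[str], str]
Task = Tuple[str, Callable[[str], bool]]  # (prompt, check on the model's output)


def run_evals(models: Dict[str, Model], tasks: List[Task]) -> Dict[str, float]:
    """Return the pass rate per model on your own tasks."""
    if not tasks:
        raise ValueError("need at least one task")
    scores = {}
    for name, model in models.items():
        passed = 0
        for prompt, check in tasks:
            try:
                passed += bool(check(model(prompt)))
            except Exception:
                pass  # a crash or timeout counts as a failed task
        scores[name] = passed / len(tasks)
    return scores


def pick_winner(scores: Dict[str, float], incumbent: str, margin: float = 0.05) -> str:
    """Switch only if a challenger beats the incumbent by a clear margin."""
    best = max(scores, key=scores.get)
    if best != incumbent and scores[best] - scores[incumbent] >= margin:
        return best
    return incumbent
```

The margin is there so that noise from a small task set does not flip the routing every night.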

If you’re new to agentic AI, start small

Pick one well-documented workflow inside a team that already writes everything down. Compliance, IT ops triage, or support escalation are ideal. Keep the tool belt tiny. One knowledge source, one ticketing system, one messaging channel. Prove the loop before you chase fancy autonomy.

My favorite first loop right now: the agent reads a narrow knowledge base, watches a queue for missing info, asks one clarifying question, then drafts a resolution note that a human can approve with one click. Small blast radius, obvious value.

FAQ

What is agentic AI and why does it matter now?

Agentic AI refers to systems that can plan, call tools, and act with guardrails to deliver outcomes, not just text. It matters now because on Feb 14, 2026 we saw funding, security alignment, infra options, and consumer behavior all move at once. That combination usually precedes real adoption.

How do I measure ROI for agentic AI?

Tie agents to existing KPIs. Track cycle time, error rate, rework, and time to resolution. Set a conservative baseline with classic automation, then measure what the agent layer adds for exceptions and cross-system reasoning. If the agent adds risk without ROI, fall back fast.
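To make that comparison concrete, here is a small sketch that compares median cycle time and error rate between the baseline and the agent layer. The function name, the choice of median, and the keep-or-fall-back rule are illustrative assumptions. The numbers in the usage lines are made up.

```python
from statistics import median
from typing import List


def agent_value(baseline_hours: List[float], agent_hours: List[float],
                baseline_error_rate: float, agent_error_rate: float) -> dict:
    """Compare the agent layer against the conservative baseline."""
    base = median(baseline_hours)
    agent = median(agent_hours)
    improvement_pct = round(100 * (base - agent) / base, 1)
    return {
        "baseline_median_h": base,
        "agent_median_h": agent,
        "improvement_pct": improvement_pct,
        # Assumed rule: keep the agent only if it is faster and no more error-prone.
        "keep_agent": improvement_pct > 0 and agent_error_rate <= baseline_error_rate,
    }


# Made-up cycle times in hours for three tickets each.
report = agent_value([10, 12, 14], [6, 9, 12],
                     baseline_error_rate=0.04, agent_error_rate=0.03)
print(report["improvement_pct"], report["keep_agent"])  # prints: 25.0 True
```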

How do I keep agentic AI safe in production?

Treat tools like production integrations. Scope and rate-limit every permission, log all calls, and enable instant revoke. Put a human in the loop for high-impact actions and add a policy shim that approves or denies actions based on clear rules. Align your controls with OWASP-style guidance to get security onside.

Should I commit to one LLM for my agents?

No. Keep a model abstraction, run task-specific evals nightly, and route skills to the best performer. Pricing and quality change. Portability is leverage, and a single config switch to fail back spares you outages and meetings.

Closing thought

Ship something boring that saves someone an hour a week. Wrap it in guardrails your security team can explain back to you. Keep your model layer swappable. Label autonomy like it matters, because it does. Do just those four things and you will be ahead of most teams by the time the next wave of headlines hits.