Agentic AI is finally doing real work. On February 19, 2026, I watched five concrete moves land across healthcare, storage, security, fintech, and networks, and it clicked for me.

Quick answer

On Feb 19, 2026, agentic AI showed up in oncology research, enterprise storage ops, credit union collections, network testing, and governance. The practical pattern is the same: pick a narrow goal, connect a planner LLM to a few safe tools, add strict guardrails, and track one outcome. If you copy this pattern, you can ship a useful, safe agent in a week.

Cancer drug discovery just got an agentic co-pilot

PharmaMar and Globant push agentic workflows into oncology R&D

What stood out to me is how well agents fit the grindy parts of research: literature triage, hypothesis drafts, experiment prep, data cleanup, and adjusting the next run based on results. That orchestration overhead slows human experts down; agents tighten the loop without replacing wet-lab judgment.

If I were learning from scratch, I’d build a tiny version: watch a folder of new papers, extract key findings, cross-reference trial registries, then ship a weekly “what changed” brief. You’ll touch tool use, retrieval, scheduling, and basic guardrails in one loop.
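Here's a minimal Python sketch of that tiny version. It assumes each new paper has already been converted to a `.txt` file and that key findings are tagged with a `Finding:` prefix; `extract_findings` is a hypothetical stand-in for the LLM extraction step, and `registry` is a hypothetical local snapshot of trial statuses keyed by ClinicalTrials.gov-style `NCT` IDs, not a live registry API.

```python
import re
from pathlib import Path

# Assumption: trial IDs follow the ClinicalTrials.gov pattern "NCT" + 8 digits.
TRIAL_ID = re.compile(r"\bNCT\d{8}\b")

def extract_findings(text: str) -> list[str]:
    """Hypothetical stand-in for an LLM extraction step: keep lines tagged 'Finding:'."""
    return [line.split(":", 1)[1].strip()
            for line in text.splitlines()
            if line.startswith("Finding:")]

def weekly_brief(paper_dir: Path, seen: set[str], registry: dict[str, str]) -> str:
    """Summarize papers not yet in `seen`, cross-referencing trial IDs against `registry`."""
    sections = []
    for path in sorted(paper_dir.glob("*.txt")):
        if path.name in seen:
            continue  # already covered in an earlier brief
        text = path.read_text(encoding="utf-8")
        findings = extract_findings(text)
        trials = sorted(set(TRIAL_ID.findall(text)))
        lines = [f"## {path.stem}"]
        # Guardrail: an empty extraction is flagged for a human, not silently skipped.
        lines += [f"- {f}" for f in findings] or ["- (no tagged findings; flag for human review)"]
        for trial in trials:
            status = registry.get(trial, "not found in registry snapshot")
            lines.append(f"- Trial {trial}: {status}")
        sections.append("\n".join(lines))
        seen.add(path.name)  # remember what this brief covered
    return "\n\n".join(sections) if sections else "No new papers this week."
```

Persisting `seen` between runs (a small JSON file is plenty) is what turns this into a "what changed" brief rather than a full re-summary every week.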

Enterprise storage is moving to autopilot

IBM details agentic operations for storage

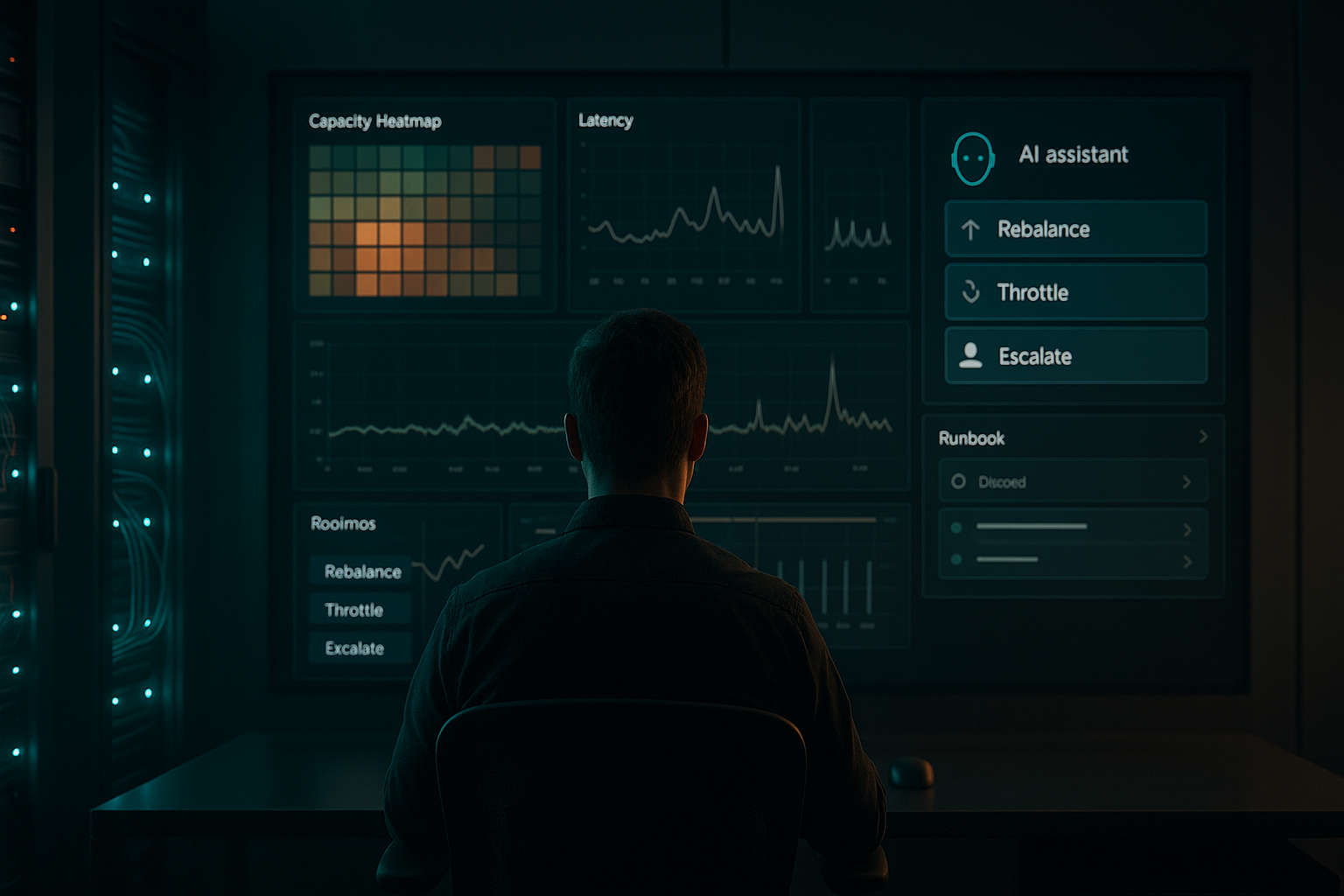

Also on Feb 19, 2026, IBM highlighted agentic AI for storage ops. Storage has predictable telemetry, clear SLAs, and recurring events that call for fast, consistent actions. An agent that watches metrics, predicts hotspots, escalates smartly, and runs playbooks is basically SRE with a co-pilot. Forbes covered the direction for autonomous storage operations.

My tip if you’re new: attach a planner to logs and metrics in a home lab or a few cloud volumes with synthetic load. The aha moment is when your agent correlates noisy symptoms and proposes the right remediation, including asking you when uncertain.
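A minimal sketch of the correlate-then-propose step, with a rule table standing in for the LLM planner. The metric names, thresholds, and playbook names are hypothetical. The idea is that a symptom only counts if it persists across most of the sample window, and low-confidence or unmapped cases get flagged for a human instead of guessed at.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical thresholds and playbook names; set these from your own SLAs.
THRESHOLDS = {"latency_ms": 20.0, "capacity_pct": 85.0}
PLAYBOOKS = {
    ("latency_ms", "capacity_pct"): "rebalance_volume",
    ("latency_ms",): "check_host_queue_depth",
    ("capacity_pct",): "expand_volume",
}

@dataclass
class Proposal:
    action: str
    confidence: float  # lowest breach ratio among the correlated symptoms
    needs_human: bool

def propose(samples: list[dict[str, float]], min_confidence: float = 0.8) -> Optional[Proposal]:
    """Correlate persistent threshold breaches in a window and map them to a playbook."""
    if not samples:
        return None
    breached, ratios = [], []
    for metric, limit in THRESHOLDS.items():
        ratio = sum(s[metric] > limit for s in samples) / len(samples)
        if ratio >= 0.5:  # must persist in at least half the window; one-off spikes are noise
            breached.append(metric)
            ratios.append(ratio)
    if not breached:
        return None
    action = PLAYBOOKS.get(tuple(breached), "escalate_to_oncall")
    confidence = min(ratios)
    # Fail safe: weak evidence or no matching playbook means ask a human.
    needs_human = confidence < min_confidence or action == "escalate_to_oncall"
    return Proposal(action, confidence, needs_human)
```

With synthetic load, you can check both paths: a sustained latency plus capacity breach maps straight to `rebalance_volume`, while latency that breaches in only 3 of 5 samples still gets a proposal but with `needs_human=True`.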

Security leaders are tightening governance

Production agents need real guardrails

The governance drumbeat got louder on Feb 19, 2026. When models can act, not just answer, the blast radius of a bad prompt or flaky tool widens. Here’s the simple kit I keep reaching for:

- Scope agents with a capabilities allowlist and give them only the tools they need.

- Wrap every tool in policy checks, with thresholds that trigger human approval.

- Log prompts, tool calls, parameters, and results so audits are possible.

- Test with simulated scenarios and verify plans match policy before production.

- Fail safe. Uncertainty should trigger human-in-the-loop, not a guess.

None of this is fancy. It’s table stakes and easy to learn on day one.
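The kit maps onto a small wrapper around each tool. This is a sketch, not any framework's API: `guarded`, `NeedsApproval`, `AUDIT_LOG`, and the `issue_refund` tool are hypothetical names, and the confidence score is assumed to come from your planner.

```python
import json
from typing import Any, Callable

AUDIT_LOG: list[str] = []  # in production, ship these lines to durable storage

class NeedsApproval(Exception):
    """Raised instead of acting when a call needs a human decision."""

def guarded(tool: Callable[..., Any], name: str, allowlist: set[str],
            policy: Callable[[dict], bool], min_confidence: float = 0.7) -> Callable[..., Any]:
    """Wrap a tool with an allowlist check, a confidence gate, a policy check, and audit logging."""
    def wrapper(confidence: float, **params: Any) -> Any:
        entry: dict[str, Any] = {"tool": name, "params": params, "confidence": confidence}
        try:
            if name not in allowlist:
                raise PermissionError(f"{name} is not on the allowlist")
            if confidence < min_confidence:
                raise NeedsApproval(f"{name}: confidence {confidence:.2f} below {min_confidence}")
            if not policy(params):
                raise NeedsApproval(f"{name}: policy threshold exceeded")
            result = tool(**params)
            entry["result"] = result
            return result
        except Exception as exc:
            entry["error"] = f"{type(exc).__name__}: {exc}"
            raise
        finally:
            AUDIT_LOG.append(json.dumps(entry, default=str))  # every call is logged, pass or fail
    return wrapper

def issue_refund(account: str, amount: float) -> str:
    """Hypothetical tool used for the simulated scenarios."""
    return f"refunded {amount:.2f} to {account}"

# Policy: refunds above 100 need a human.
refund = guarded(issue_refund, "issue_refund", allowlist={"issue_refund"},
                 policy=lambda p: p["amount"] <= 100)
```

Raising instead of returning a fallback value is the fail-safe choice: the caller has no way to proceed except routing the case to a person. Running a handful of calls like `refund(0.9, account="A1", amount=500)` before launch is a cheap simulated scenario that checks policy actually bites.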

Credit unions got a collections agent that actually follows up

Interface.ai launches multi-channel collections

Also on Feb 19, 2026, Interface.ai rolled out an agent for credit unions that works across text, email, and more. The multi-channel part matters because it forces real decision-making: when to text, when to email, how to read tone, and when to hand off to a human. PYMNTS covered the launch.

If you’re learning, vertical agents like this are ideal. One job, one user, a few tools. Think CRM lookup, a messaging tool, a doc generator, and a simple rules engine for offers. Ship that and you’ve basically built a reusable back-office template.
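Here's a sketch of the rules-engine piece, assuming the CRM lookup has already produced a `Member` record and that a separate tone classifier scores the member's last reply. Field names, day cutoffs, offer names, and the balance limit are all hypothetical; your own policy team would set the real ones. The messaging and doc-generator tools would consume the returned decision.

```python
from dataclasses import dataclass

@dataclass
class Member:
    member_id: str
    days_past_due: int
    balance: float
    preferred_channel: str             # "sms" or "email"
    opted_out_sms: bool = False
    hardship_flag: bool = False
    last_reply_sentiment: float = 0.0  # -1 (upset) .. 1 (positive), from a tone classifier

def next_step(m: Member) -> dict:
    """Pick a channel and offer, or hand off to a human."""
    # Handoffs come first: hardship or a clearly upset member goes to a person.
    if m.hardship_flag or m.last_reply_sentiment < -0.5:
        return {"action": "handoff", "reason": "hardship or negative tone"}
    # Respect opt-outs before preferences.
    channel = "email" if m.opted_out_sms else m.preferred_channel
    # Hypothetical offer ladder: longer delinquency unlocks a bigger concession.
    if m.days_past_due >= 60:
        offer = "payment_plan_3_months"
    elif m.days_past_due >= 30:
        offer = "fee_waiver"
    else:
        offer = "friendly_reminder"
    # Large balances get a human, with the agent's suggestion attached.
    if m.balance > 5000:
        return {"action": "handoff", "reason": "balance above auto-offer limit",
                "suggested_offer": offer}
    return {"action": "send", "channel": channel, "offer": offer}
```

Keeping the handoff checks at the top means no offer logic can ever run for a member who should be talking to a person.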

Network testing just hired an always-on test engineer

Keysight’s Spirent adds an agentic layer

Same pattern, different stakes. Networks change constantly, and on Feb 19, 2026 Keysight’s Spirent unveiled agentic AI to plan tests, run them, parse results, then queue the next best set. SDxCentral covered the announcement. If you’ve ever babysat flaky test rigs, you know why this is huge.
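The plan, run, parse, and requeue loop can be sketched as a scoring function over test history. This is a hypothetical heuristic for learning purposes, not how Spirent's product works: tests touching changed components go first, then tests with higher observed failure rates, and parsed results feed back into history so the next batch adapts. `NetTest`, the weights, and the 0.5 prior for never-run tests are all assumptions.

```python
import heapq
from dataclasses import dataclass

@dataclass
class NetTest:
    name: str
    components: set[str]  # e.g. {"bgp"}, {"qos"}
    failures: int = 0
    runs: int = 0

def score(t: NetTest, changed: set[str]) -> float:
    """Higher score = run sooner. Weights are illustrative, not tuned."""
    touches_change = 1.0 if t.components & changed else 0.0
    failure_rate = t.failures / t.runs if t.runs else 0.5  # never-run tests get a middling prior
    return 2.0 * touches_change + failure_rate

def plan_batch(tests: list[NetTest], changed: set[str], batch_size: int) -> list[str]:
    """Queue the next best set: the top-scoring tests for this change."""
    ranked = heapq.nlargest(batch_size, tests, key=lambda t: score(t, changed))
    return [t.name for t in ranked]

def record_results(tests: dict[str, NetTest], results: dict[str, bool]) -> None:
    """Feed parsed pass/fail results back into history so the next plan adapts."""
    for name, passed in results.items():
        t = tests[name]
        t.runs += 1
        if not passed:
            t.failures += 1
```

For example, with a BGP change, a BGP test that failed once in four runs scores 2.25 and jumps ahead of a QoS test that failed three times in four (0.75).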

What this means if you’re just starting

I’ll be blunt. The winners won’t be the folks memorizing prompt tricks. They’ll be the ones who stitch a capable planner to three boring tools, wrap it in guardrails, and show a weekly report of wins and handoffs.

If I were starting today, I’d pick a single outcome like reducing overdue invoice age. I’d connect one data source, one comms channel, and a tiny policy engine. I’d teach the agent a short checklist with self-checks before sending anything. I’d keep a human review for risky actions, log everything, and track one metric. That’s enough to earn trust and prove value.
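A minimal sketch of that invoice starter, assuming invoices come from a single ledger export and a hypothetical auto-send limit of 10,000. `self_check` is the short checklist the agent runs before sending anything; any failure routes to human review, and `average_overdue_age` is the one metric to track week over week. All names are illustrative.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Invoice:
    invoice_id: str
    amount: float
    due: date
    disputed: bool = False

def overdue_days(inv: Invoice, today: date) -> int:
    return max((today - inv.due).days, 0)

def average_overdue_age(invoices: list[Invoice], today: date) -> float:
    """The one metric: mean days past due across overdue invoices only."""
    ages = [overdue_days(i, today) for i in invoices if overdue_days(i, today) > 0]
    return sum(ages) / len(ages) if ages else 0.0

def self_check(inv: Invoice, draft: str) -> list[str]:
    """Checklist run before sending; an empty list means the draft passed."""
    problems = []
    if inv.disputed:
        problems.append("invoice is disputed")
    if inv.invoice_id not in draft:
        problems.append("draft does not cite the invoice id")
    if f"{inv.amount:.2f}" not in draft:
        problems.append("draft amount does not match ledger")
    if inv.amount > 10_000:  # hypothetical risk threshold
        problems.append("amount above auto-send limit")
    return problems

def handle(inv: Invoice, today: date) -> tuple[str, str]:
    """Draft a reminder, then either send it or route it to a human with reasons."""
    draft = (f"Hi, invoice {inv.invoice_id} for {inv.amount:.2f} is "
             f"{overdue_days(inv, today)} days past due.")
    problems = self_check(inv, draft)
    return ("human_review", "; ".join(problems)) if problems else ("send", draft)
```

In a real version the draft would come from the LLM, which is exactly why the checklist re-verifies the invoice id and amount against the ledger instead of trusting the text.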

My simple starter stack

What I actually use to prototype

I keep it light: a small agent framework for planning and tool calls, a retrieval layer for context, thin policy middleware for guardrails, and an observability panel that logs every decision. I sketch the workflow as a checklist before I code. If it feels clunky on paper, the agent will struggle too.
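Here's that stack reduced to a sketch. The checklist is the plan (I've deliberately left out the LLM planner), a toy word-overlap `retrieve` stands in for the retrieval layer, `allowed` is the policy hook, and `trace` is what the observability panel would display. Every name here is hypothetical rather than any framework's API.

```python
from typing import Callable

Tool = Callable[[str], str]

def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Toy retrieval layer: rank doc ids by word overlap with the query."""
    words = set(query.lower().split())
    ranked = sorted(docs, key=lambda d: len(words & set(docs[d].lower().split())), reverse=True)
    return ranked[:k]

def run_checklist(checklist: list[tuple[str, str]], tools: dict[str, Tool],
                  allowed: Callable[[str, str], bool], docs: dict[str, str],
                  trace: list[dict]) -> bool:
    """Walk the checklist in order; stop and ask for help on the first problem."""
    for step, tool_name in checklist:
        # Policy middleware: unknown or disallowed tools never run.
        if tool_name not in tools or not allowed(tool_name, step):
            trace.append({"step": step, "tool": tool_name, "outcome": "needs_human"})
            return False
        context = retrieve(step, docs)
        output = tools[tool_name](step)
        ok = not output.startswith("ERROR")
        # Observability: every decision is recorded with its context and output.
        trace.append({"step": step, "tool": tool_name, "context": context,
                      "output": output, "outcome": "ok" if ok else "needs_human"})
        if not ok:
            return False
    return True
```

If the checklist feels clunky to write, that's the same signal the paper sketch gives: the workflow isn't ready for an agent yet.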

Early on, I give the agent as few degrees of freedom as possible. Make it boring and safe before letting it get clever. Strong tool contracts and clear error messages are the underrated superpower here. Agents can only recover from errors they actually understand.
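Here's what I mean by a strong tool contract, sketched with a hypothetical `resize_volume` tool: inputs are validated up front, and every failure returns a machine-readable code, a plain-language message, and a hint about what to try next. The "list-volumes tool" mentioned in one hint is also hypothetical.

```python
from dataclasses import dataclass
from typing import Union

@dataclass
class ToolError:
    code: str      # machine-readable, e.g. "INVALID_ARGUMENT"
    message: str   # what went wrong, in plain words
    fix_hint: str  # what the agent should try next

@dataclass
class ToolOk:
    value: dict

def resize_volume(volume_id: str, new_size_gb: int, current_size_gb: int) -> Union[ToolOk, ToolError]:
    """Hypothetical tool with an explicit contract: grow only, at most 2x per call."""
    if not volume_id.startswith("vol-"):
        return ToolError("INVALID_ARGUMENT", f"volume_id {volume_id!r} must start with 'vol-'",
                         "Look up the id with the list-volumes tool and retry.")
    if new_size_gb <= current_size_gb:
        return ToolError("UNSUPPORTED", "Shrinking or no-op resizes are not allowed.",
                         "Pick a size larger than the current size, or escalate to a human.")
    limit = 2 * current_size_gb
    if new_size_gb > limit:
        return ToolError("LIMIT_EXCEEDED", f"Max growth per call is 2x ({limit} GB).",
                         f"Retry with new_size_gb <= {limit}.")
    return ToolOk({"volume_id": volume_id, "size_gb": new_size_gb})
```

The `fix_hint` field is what lets an agent recover instead of retrying blindly: "Retry with new_size_gb <= 200" is an error it can actually understand.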

What clicked for me on Feb 19

We’re in the do-the-work phase of agentic AI. Everywhere I look it’s the same mechanics: a narrow goal, a handful of tools, a planner, guardrails, and metrics. Whether it’s cancer research, storage ops, collections, or network testing, the rhyme is unmistakable.

If you’re overwhelmed, take that as a good sign. You don’t need every new model. You need one real workflow and an agent that can plan, act, and ask for help. Start small, keep it safe, and keep score. That’s what the grown-ups are shipping right now.

FAQ

What is agentic AI in simple terms?

Agentic AI plans, takes actions with tools or APIs, checks results, and iterates toward a goal. Think of it like a tireless project coordinator that follows a checklist, not just a chatbot that answers questions.

How can I start with agentic AI this week?

Pick one outcome, connect three tools max, add strict guardrails, and log everything. Build a short plan the agent can follow with self-checks. Ship a tiny but safe loop and track one metric so you can prove impact.

Is agentic AI safe for production?

It can be, if you scope capabilities tightly, wrap tools in policy checks, log every decision, and trigger human-in-the-loop on uncertainty. I test with simulated scenarios first to verify plans match policy.

Do I need a big budget or team?

No. A solo builder can prototype with a lightweight agent framework, basic retrieval, simple policy middleware, and decent logging. Start with a narrow workflow and expand only after you see stable wins.

Which industries are moving first?

As of Feb 19, 2026, I saw signals in oncology R&D, enterprise storage operations, credit union collections, and network testing. The common thread is repeatable tasks with clear feedback signals and strong tooling.