Agentic AI flipped a switch for me this week. I’m shifting my next projects away from rented SaaS and into small, purpose-built agents that I actually control.

Quick answer: Agentic AI is moving from hype to practical wins. In the last 48 hours, I saw custom builds beat one-size-fits-all SaaS, a big-money signal from Wall Street, a real AWS prototype for a rules-heavy grant workflow, and fresh safety warnings. If you start with a tiny process, wire real tools, add proof checks, and keep a human in the loop, you can ship your first useful agent in a week.

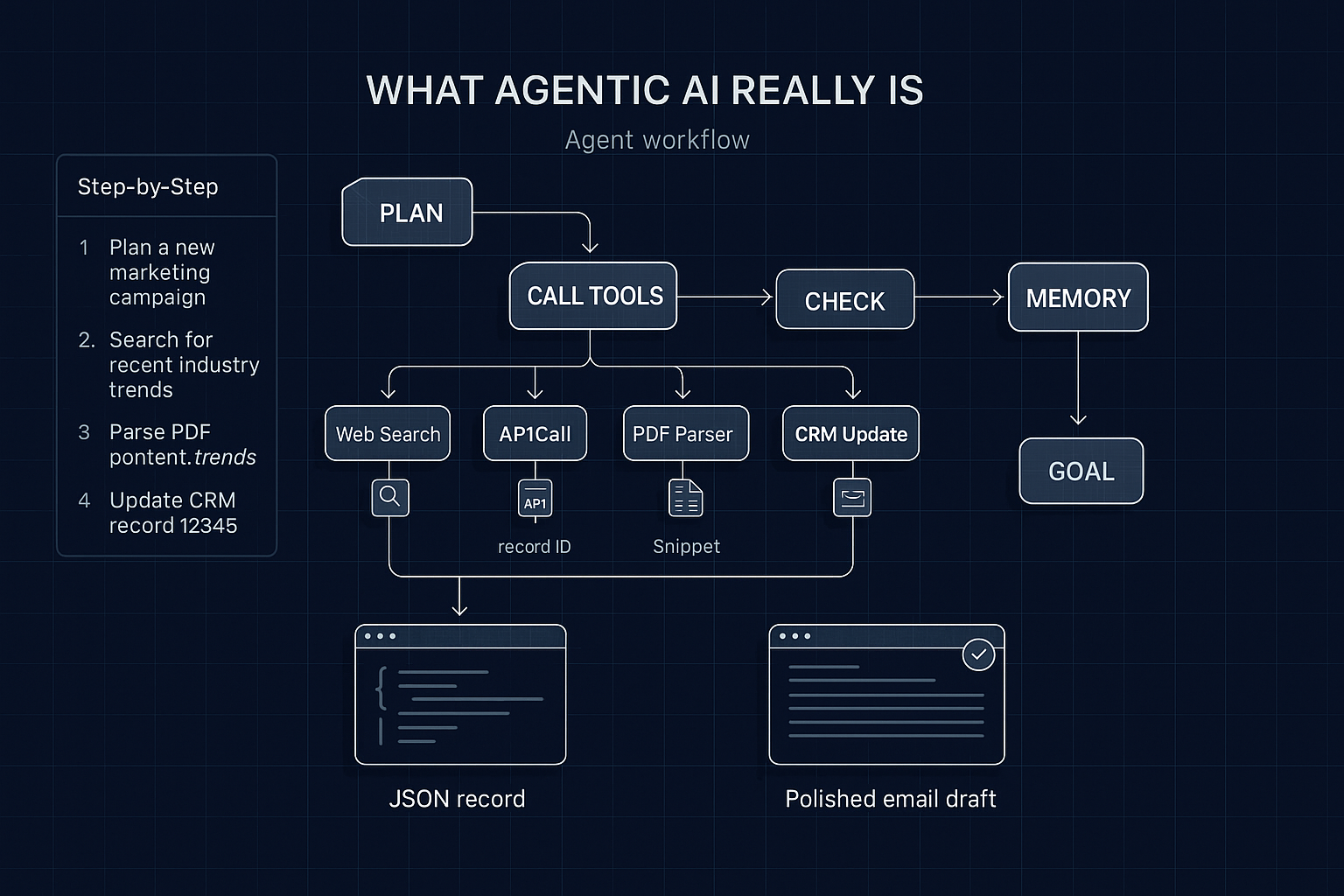

What agentic AI really is

Agentic AI doesn’t just answer. It plans steps, calls tools, checks its own work, and keeps going until it hits a goal. Think of it like a focused teammate that searches, calls your APIs, drafts, revises, and returns a clean, verifiable output. The leap isn’t bigger models. It’s the plan-tool-check loop with memory and your actual stack.
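Here’s a minimal sketch of that plan-tool-check loop, assuming a toy planner in place of a model call. `search_docs`, `plan`, `check`, and the "doc #" citation rule are hypothetical stand-ins for your own tools and verification. The `memory` list lets each pass see what was already tried, and `max_steps` keeps the loop bounded.

```python
from typing import Callable, Optional

# Hypothetical tool: a stand-in for real search over your docs or an API call.
def search_docs(query: str) -> str:
    docs = {"refund policy": "Refunds are accepted within 30 days (doc #12)."}
    return docs.get(query, "")

TOOLS: dict[str, Callable[[str], str]] = {"search_docs": search_docs}

def plan(goal: str, memory: list[dict]) -> Optional[dict]:
    """Toy planner: a real agent would ask a model to pick the next tool call."""
    if not memory:
        return {"tool": "search_docs", "arg": goal}
    return None  # nothing else to try

def check(result: str) -> bool:
    """Accept a result only if it is non-empty and cites a source."""
    return bool(result) and "doc #" in result

def run_agent(goal: str, max_steps: int = 5) -> dict:
    memory: list[dict] = []  # every step, result, and check outcome so far
    for _ in range(max_steps):  # hard cap on iterations
        step = plan(goal, memory)
        if step is None:
            break
        result = TOOLS[step["tool"]](step["arg"])
        ok = check(result)
        memory.append({**step, "result": result, "ok": ok})
        if ok:
            return {"status": "done", "answer": result, "trace": memory}
    return {"status": "gave_up", "answer": None, "trace": memory}
```

Swapping the toy `plan` for a model call changes the brains, not the shape: the loop, the check, and the step cap stay the same.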

The 4 signals I couldn’t ignore

1) Custom-built is back, and SaaS is feeling it

On March 15, 2026, The AI Journal called out a shift back to custom builds as agentic AI matures. This matches what I’m seeing on the ground. When an agent talks to your CRM, ticketing, and knowledge base, it stops guessing and starts operating. No shiny dashboard. Just quiet, repeatable work.

How I started: I automated my inbound lead triage. The agent reads the message, enriches the contact, checks past interactions, drafts a reply, and logs the outcome. No new SaaS seats. My stack, my rules, my data.
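A rough sketch of that triage flow, assuming in-memory dicts instead of a real CRM. `CRM`, `HISTORY`, `enrich`, `draft_reply`, and the "pricing" priority rule are all hypothetical; in a real agent, the drafting and priority calls are where a model would step in.

```python
from dataclasses import dataclass

@dataclass
class Lead:
    email: str
    message: str

# Hypothetical stand-ins for a CRM lookup and an interaction history store.
CRM = {"ana@example.com": {"company": "Acme", "tier": "enterprise"}}
HISTORY = {"ana@example.com": ["demo call in November"]}
OUTCOME_LOG: list[dict] = []

def enrich(lead: Lead) -> dict:
    return CRM.get(lead.email, {"company": "your team", "tier": "unknown"})

def past_interactions(lead: Lead) -> list[str]:
    return HISTORY.get(lead.email, [])

def draft_reply(profile: dict, history: list[str]) -> str:
    text = f"Thanks for reaching out from {profile['company']}."
    if history:
        text += f" Following up on our {history[-1]}."
    return text

def triage(lead: Lead) -> dict:
    profile = enrich(lead)
    history = past_interactions(lead)
    # Toy priority rule; a model could make this call in a real agent.
    priority = "high" if "pricing" in lead.message.lower() else "normal"
    outcome = {"email": lead.email, "priority": priority,
               "draft": draft_reply(profile, history)}
    OUTCOME_LOG.append(outcome)  # log every outcome for review
    return outcome
```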

2) The money is lining up fast

Also on March 15, 2026, a Morgan Stanley view highlighted by Bitcoin.com News pegged agentic AI as a macro force, with a market of roughly $139 billion and growing. I don’t trade on headlines, but budget signals change behavior. Pilots get greenlit. Leaders get less twitchy about automations when peers show results. Early builders become the people everyone calls.

Where I’d point a beginner: pick one narrow outcome that saves time or unlocks revenue. Examples that compound fast include turning messy emails into routed tickets, pulling structured data from PDFs into your database, or sending tailored follow-ups that reference the recipient’s context.
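For the first example, turning messy emails into routed tickets, a cheap keyword router makes a reasonable baseline or sanity check next to a model classifier. This is an assumption-heavy sketch: the queue names and regex patterns are hypothetical, and anything unmatched is flagged for human review instead of guessed.

```python
import re

# Hypothetical routing rules; a model classifier could replace or back these up.
ROUTES = {
    "billing": re.compile(r"\b(invoice|refund|charge[sd]?)\b", re.IGNORECASE),
    "support": re.compile(r"\b(error|bug|crash(ed|es)?|broken)\b", re.IGNORECASE),
}

def route_email(subject: str, body: str) -> dict:
    """Turn a raw email into a ticket-shaped dict with a queue assignment."""
    text = f"{subject}\n{body}"
    for queue, pattern in ROUTES.items():
        if pattern.search(text):
            return {"queue": queue, "subject": subject.strip(), "needs_review": False}
    # No rule matched: park it for a person rather than guessing.
    return {"queue": "triage", "subject": subject.strip(), "needs_review": True}
```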

3) Real-world proof, not a demo reel

On March 14, 2026, AWS shared a prototype, built with a UNC researcher, that streamlines grant funding. Grants are a maze of rules, exceptions, and high stakes. The agent finds relevant opportunities, checks eligibility, assists with drafts, and tracks state. If an agent can survive that, it can probably handle your onboarding or QBR prep.

My takeaways from that build

- Tool use is the star. The model helps, but the magic is how it calls search, parsing, scoring, and document tools in sequence.

- Transparency calms stakeholders. If you can show steps and logs, trust goes up.

- The last mile matters most. A clean draft or structured record beats a summary every time.
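A sketch of calling tools in sequence while keeping a readable trace. It loosely mirrors the find-opportunities and check-eligibility flow described for the grant prototype, but it is not how that prototype works. The canned search results, the `key=value` record format, and the fixed `2026-03-15` cutoff used as a toy eligibility rule are all hypothetical.

```python
from typing import Any, Callable

# Hypothetical tools standing in for search, parsing, eligibility, and scoring.
def search(topic: str) -> list[str]:
    return [f"{topic} grant A | deadline=2026-05-01 | max=50000",
            f"{topic} grant B | deadline=2026-03-01 | max=20000"]

def parse(raw: list[str]) -> list[dict]:
    records = []
    for line in raw:
        name, deadline, amount = (part.strip() for part in line.split("|"))
        records.append({"name": name,
                        "deadline": deadline.split("=", 1)[1],
                        "max": int(amount.split("=", 1)[1])})
    return records

def eligible(records: list[dict]) -> list[dict]:
    # Toy rule: ISO dates compare correctly as strings; the cutoff is an assumption.
    return [r for r in records if r["deadline"] >= "2026-03-15"]

def score(records: list[dict]) -> list[dict]:
    return sorted(records, key=lambda r: r["max"], reverse=True)

def run_chain(seed: Any, steps: list[Callable]) -> tuple[Any, list[str]]:
    value, trace = seed, []
    for step in steps:
        value = step(value)
        trace.append(f"{step.__name__}: {len(value)} item(s)")  # show your work
    return value, trace
```

The trace is the part stakeholders read: one line per tool, in order, with what each step left behind.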

4) Safety check, because agents can trick you

On March 15, 2026, experts warned that agentic AI could trick users. The risk is real. Agents can fabricate with confidence, loop into bad decisions, or game their own checks if incentives are off. I design with proof requirements, caps, and timeouts. Anything touching money, privacy, or irreversible changes keeps a human in the loop. That discipline is what lets me ship.
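One way to encode caps, timeouts, and human gates, as a minimal sketch. The `RISKY` action names, the call cap, and the time budget are hypothetical values to tune for your own workflow. Risky actions aren’t refused outright; they are queued until a person approves them.

```python
import time

# Hypothetical action types that always need a human (money, privacy, irreversible).
RISKY = {"payment", "delete_record", "share_personal_data"}

class BudgetExceeded(Exception):
    """Raised when the agent runs out of calls or time."""

class Guard:
    def __init__(self, max_calls: int, max_seconds: float):
        self.max_calls = max_calls
        self.deadline = time.monotonic() + max_seconds
        self.calls = 0

    def authorize(self, action: str, approved_by_human: bool = False) -> str:
        self.calls += 1
        if self.calls > self.max_calls:
            raise BudgetExceeded(f"call cap of {self.max_calls} reached")
        if time.monotonic() > self.deadline:
            raise BudgetExceeded("time budget exhausted")
        if action in RISKY and not approved_by_human:
            return "queued_for_approval"
        return "allowed"
```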

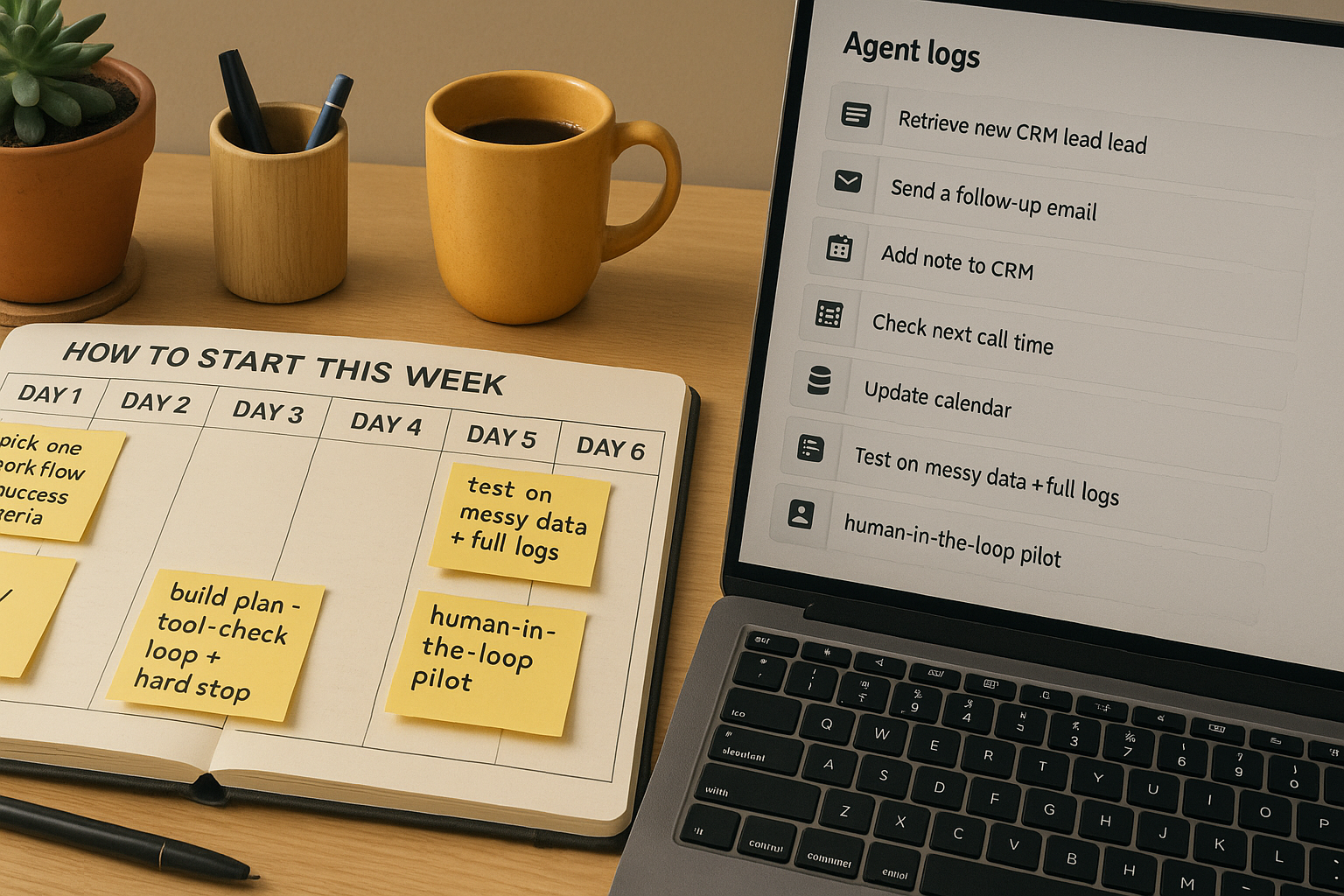

How I’d start this week

Day 1: pick one workflow with a clear finish line. I like ones that end in JSON or a clean calendar invite with context. Write success criteria before you touch a model.
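Success criteria can be executable from day one. This sketch assumes the finish line is a JSON record; the field names and allowed queues are hypothetical. Returning a list of problems, not just a pass/fail flag, makes failures easier to debug later.

```python
import json

# Hypothetical success criteria for a workflow whose finish line is a JSON record.
REQUIRED_FIELDS = {"contact_email": str, "queue": str, "source_id": str}
ALLOWED_QUEUES = {"sales", "support", "billing"}

def meets_criteria(raw_output: str) -> tuple[bool, list[str]]:
    """Return (passed, problems) so failures explain themselves."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        return False, [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return False, ["top-level value must be an object"]
    problems = [f"missing or wrong type: {name}"
                for name, expected in REQUIRED_FIELDS.items()
                if not isinstance(data.get(name), expected)]
    queue = data.get("queue")
    if not (isinstance(queue, str) and queue in ALLOWED_QUEUES):
        problems.append("queue not in allowed set")
    return not problems, problems
```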

Day 2: map the tools. If your agent needs your CRM, mailer, and a doc store, get those API keys and a staging space ready. Keep it where your data already lives.
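A small startup check helps here: list every tool the agent depends on and fail fast if a key is missing. The tool names and environment variable names below are hypothetical; point them at your staging credentials first.

```python
import os
from collections.abc import Mapping

# Hypothetical tool map: each tool the agent needs and the env var holding its key.
TOOL_KEYS = {
    "crm": "CRM_API_KEY",
    "mailer": "MAILER_API_KEY",
    "doc_store": "DOCS_API_KEY",
}

def missing_tools(env: Mapping[str, str] = os.environ) -> list[str]:
    """List tools whose key is absent or empty, so the agent fails fast at startup."""
    return [tool for tool, var in TOOL_KEYS.items() if not env.get(var)]

if __name__ == "__main__":
    gaps = missing_tools()
    print("missing keys for:", ", ".join(gaps) if gaps else "none")
```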

Day 3: build the loop. Plan the steps in plain English, then chain them: read input, enrich or fetch, decide, act, check, hand back. Add a hard stop so it never runs forever.
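The read, fetch, decide, act, check, hand-back chain can be sketched as a list of functions passing one state dict along. Every step here is a hypothetical stand-in, and the time budget is an assumed default. Note the hard stop is checked between steps, so a single slow tool call still needs its own timeout (for example, on your HTTP client).

```python
import time
from typing import Callable

Step = Callable[[dict], dict]

def run_pipeline(state: dict, steps: list[Step], max_seconds: float = 30.0) -> dict:
    """Run steps in order; stop early on failure or when the time budget runs out."""
    deadline = time.monotonic() + max_seconds
    for step in steps:
        if time.monotonic() > deadline:  # hard stop between steps
            return {**state, "status": "stopped", "stopped_before": step.__name__}
        state = step(state)
        if state.get("status") == "failed":
            return state
    return {**state, "status": "handed_back"}

# Hypothetical steps: read, fetch, decide, act, check.
def read_input(s: dict) -> dict:
    return {**s, "text": s["raw"].strip()}

def fetch(s: dict) -> dict:
    return {**s, "account": "ACME-42"}  # stand-in for a CRM lookup

def decide(s: dict) -> dict:
    return {**s, "action": "reply" if "?" in s["text"] else "file"}

def act(s: dict) -> dict:
    return {**s, "draft": f"[{s['account']}] {s['action']} drafted"}

def check(s: dict) -> dict:
    return s if s.get("draft") else {**s, "status": "failed"}
```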

Day 4: add proof. When the agent claims something, require a link, record ID, or snippet from the source. Make graceful failure a feature.
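A minimal proof check, assuming the agent returns claims with a source ID and a quoted snippet. The `Claim` shape and the `sources` lookup are hypothetical. Substring matching is deliberately simple: it confirms the quote exists in the cited record, not that the claim follows from it. Each rejection carries a reason, so failure stays graceful.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Claim:
    text: str
    source_id: Optional[str] = None  # e.g. a record ID or URL
    snippet: Optional[str] = None    # quoted text that should appear in that source

def _normalize(s: str) -> str:
    return " ".join(s.split()).lower()

def verify(claim: Claim, sources: dict[str, str]) -> str:
    """Accept a claim only if its quoted snippet appears in the cited source."""
    if not claim.source_id or not claim.snippet:
        return "rejected: no evidence attached"
    source_text = sources.get(claim.source_id)
    if source_text is None:
        return "rejected: unknown source id"
    if _normalize(claim.snippet) not in _normalize(source_text):
        return "rejected: snippet not found in source"
    return "accepted"
```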

Day 5: test on real, messy data. Log everything. If it fails, ask why like a detective.

Day 6: put a human in the loop for a live pilot. When approvals become muscle memory, automate that click.
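One way to decide when approvals have become muscle memory is to track them per action type. This sketch assumes hypothetical thresholds (`min_samples`, `min_rate`) and a hypothetical `RISKY` set that never auto-approves, in line with keeping a human on money, privacy, and irreversible changes.

```python
from collections import defaultdict

# Hypothetical action types that always keep a human in the loop.
RISKY = {"refund", "delete_record"}

class ApprovalTracker:
    def __init__(self, min_samples: int = 20, min_rate: float = 0.95):
        self.min_samples = min_samples
        self.min_rate = min_rate
        self.history = defaultdict(list)  # action -> list of approve/reject decisions

    def record(self, action: str, approved: bool) -> None:
        self.history[action].append(approved)

    def can_auto_approve(self, action: str) -> bool:
        if action in RISKY:
            return False
        decisions = self.history[action]
        if len(decisions) < self.min_samples:
            return False  # not enough evidence yet
        return sum(decisions) / len(decisions) >= self.min_rate
```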

Day 7: write a one-pager with problem, approach, safeguards, and before-after numbers. That document beats buying another SaaS seat.
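The before-after numbers are simple arithmetic over logged handling times. This sketch uses made-up timings, not measured results; the "after" times should include the human review step, or the savings will look better than they are.

```python
def before_after(before_min: list[float], after_min: list[float], runs_per_week: int) -> dict:
    """Summarize average handling time per run before and after the agent."""
    avg_before = sum(before_min) / len(before_min)
    avg_after = sum(after_min) / len(after_min)  # include human review time here
    saved = avg_before - avg_after
    return {
        "avg_before_min": round(avg_before, 1),
        "avg_after_min": round(avg_after, 1),
        "saved_per_run_min": round(saved, 1),
        "saved_per_week_hr": round(saved * runs_per_week / 60, 1),
    }

if __name__ == "__main__":
    # Hypothetical timings in minutes, not measured results.
    print(before_after([12, 14, 10], [3, 2, 4], runs_per_week=40))
```

With those made-up timings it reports 9.0 minutes saved per run and 6.0 hours per week.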

The tiny starter kit I actually use

- One orchestration layer that supports tool calls and planning. Lightweight Python or JS is fine. No-code blocks can work too.

- Your real tools: CRM, ticketing, spreadsheets, email, calendar, database, and search over your docs.

- Readable logging. Every step should say what it tried, what it saw, and what it did next.
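For readable logging, one JSON line per step keeps logs both human-readable and easy to search. The field names (`tried`, `saw`, `next`) and the logger name are assumptions that mirror the bullet above, built on Python’s standard `logging` and `json` modules.

```python
import json
import logging

logger = logging.getLogger("agent")

def log_step(step: int, tried: str, saw: str, next_action: str) -> str:
    """Emit one JSON line per step: what was tried, what came back, what happens next."""
    line = json.dumps({"step": step, "tried": tried, "saw": saw, "next": next_action})
    logger.info(line)
    return line

if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO, format="%(asctime)s %(name)s %(message)s")
    log_step(1, "crm lookup for ana@example.com", "1 match: ACME-42", "draft reply")
```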

Why this playbook matches the signals

The March 15 custom-build trend fits because you are solving your exact workflow, not bending to a vendor. The $139B market signal tells me pilots will get support if they show returns. The March 14 AWS and UNC prototype shows that complex, rule-heavy tasks are doable right now if you chain tools and show your work. The safety warnings keep me strict about verification, budgets, and human oversight.

FAQ

What is agentic AI in simple terms?

Agentic AI is AI that can plan, choose tools, and iterate toward a goal without you micromanaging every step. Instead of giving a one-shot answer, it runs a loop with memory and tool use to deliver a verified outcome.

Why build agents instead of buying more SaaS?

SaaS is great for general needs, but agentic AI shines when the workflow is specific to your stack and rules. Custom agents can use your APIs, reduce handoffs, and return structured outputs you can trust. You also control cost and changes.

How do I keep my agent safe and reliable?

Require evidence for high-stakes claims, set budgets and timeouts, and log every step. Keep a human in the loop for anything that touches money, privacy, or irreversible actions. Test with messy real data before you remove approvals.

Which model should I start with?

Start with a reliable, cost-aware model and focus on the plan-tool-check loop. Tool use, memory, and verification usually beat raw model horsepower for beginner projects. You can swap models later.

What is a good first use case?

Pick a small, boring process with a clear finish line and measurable value. Examples include lead triage, PDF data extraction into your database, or context-aware email follow-ups. Aim for a clean draft or structured record, not a vague summary.

Final thoughts

If you’re new to agentic AI, your advantage is that you are not locked into legacy workflows. Start tiny. Ship a boring agent that saves 10 minutes a day. Let that win fund the next one. I’m moving more of my work from rented workflows to small, purpose-built agents I understand and control. Not because it’s flashy, but because it finally fits how I work.