Agentic AI moved fast today, and I felt it before I finished my first coffee. On March 25, 2026, five quiet but important updates landed at once, and I changed what I’m building this week because of them.

Quick answer

If you’re just getting into agentic AI, start with one boring weekly task and wrap it in a tight loop: gather inputs, apply rules, produce the output, self-check, and verify once before you see it. Add simple logging and a tiny eval set of 10 real cases. That’s the fastest path from demo to dependable results.

I always start with one boring weekly task and wrap it in a tight loop. Then I add simple logging and a tiny eval set of 10 real cases.

Arm is tuning hardware for the agent loop

Arm unveiled a chip aimed at agentic AI on March 25, 2026, with partners like Meta, Google, and Nvidia showing up. Agents don’t just answer once. They plan, call tools, check themselves, and try again. That loop wants different compute patterns than a single reply.

Why it matters

As the stack underneath agentic AI gets optimized, costs slide down and latency gets friendlier. That makes fast feedback loops feel natural, including on-device or edge scenarios. More tasks become instant and affordable.

I lean into fast feedback loops when latency gets friendly because they feel natural and unlock on-device and edge scenarios.

What I’m doing

I’m designing small loops that benefit from speed, like a research agent that drafts, critiques against a rubric, and refines once before I see it.

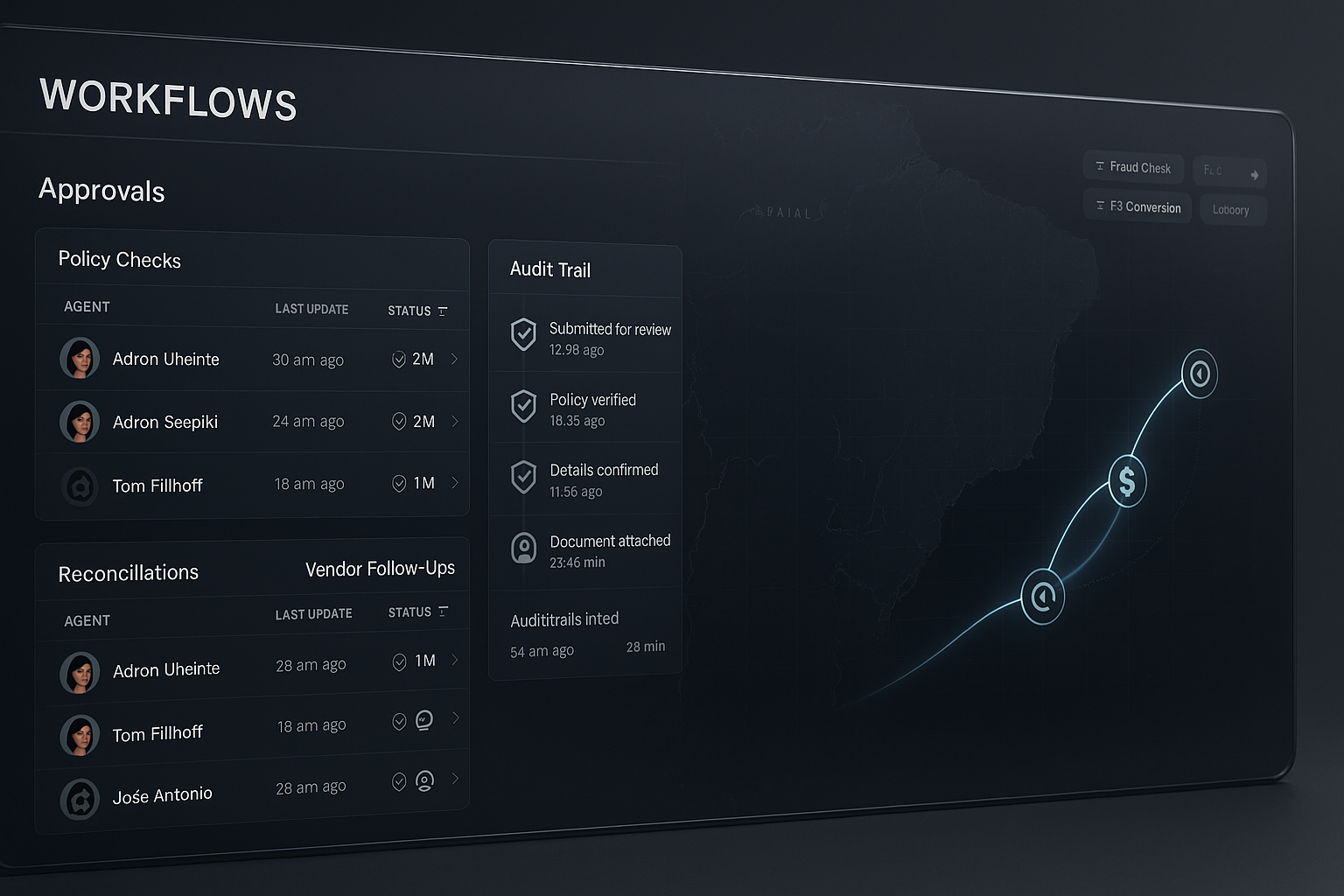

Oracle just put agents inside Fusion Cloud

Also on March 25, 2026, Oracle launched agentic AI apps across Fusion Cloud. When ERP and HCM go agentic, it signals the workflows are stabilizing. Orchestration, approvals, and guardrails are getting baked in, not bolted on.

Why it matters

Routine work finally automates end to end. Think data entry, reconciliation, policy checks, and simple vendor follow-ups. You don’t need exotic tools to see value. Well-scoped, boring tasks are perfect first wins.

I pick well-scoped, boring tasks for my first wins.

What I’m doing

I’m picking a one-hour weekly task I hate and wrapping it with a checklist, a verify step, and a single notification to me when it clears.

Mastercard completed live agentic payments

On March 25, 2026, Mastercard said it ran live agentic transactions across LAC. Not a lab pilot. Real payments with rules, fraud checks, FX quirks, and latency constraints.

Why it matters

If agentic flows survive payments, customer support, billing, collections, and compliance are all fair game. This is where vibes end and verification wins.

I trust verification over vibes when the stakes are high.

What I’m doing

I’m adding confirm steps, rollback paths, and simple audit trails even for small automations like calendar bookings. Log it or don’t ship it.

Solo.io released agentevals

Also on March 25, 2026, Solo.io introduced agentevals, an open source push to close the reliability gap for agentic systems. I’ve wanted practical evals for a while because cherry-picked demos can hide the 30 percent that break.

Why it matters

If you can’t measure stepwise success, you can’t trust the agent. Evals let you stage hard cases, track scores over time, and ship with confidence. Open source means repeatable benchmarks for everyone.

What I’m doing

I’m creating a small eval set of 10 tough cases that reflect my real edge scenarios. I’ll run it weekly and track a single score: completed without human intervention.

Autobrains brought agentic AI to ADAS and automated driving

Same date, March 25, 2026: Autobrains said they applied agentic AI to ADAS and automated driving. I’m not shipping cars, but driving is pure agentic loop energy. Sense, plan, act, check, repeat.

Why it matters

Agents aren’t just chatbots. They’re doers. Your spreadsheet-cleaning agent can learn the same loop: observe, attempt, check, correct, and try again within a budget.

The tiny playbook I actually use

- Pick one weekly task under 60 minutes and define done in one sentence.

- Write the checklist first: inputs, rules, output format, self-review.

- Add one verification loop and allow a single retry if it fails.

- Log every run with status and reason codes in a simple sheet.

- Keep 10 real test cases and track one score week over week.

I log every run with status and reason codes in a simple sheet.

The pattern I see across all five

Compute

Arm is tuning hardware for agentic AI loops, cutting lag and cost. That pulls more real-time use cases into reach.

Workflows

Oracle and Mastercard are putting agents right inside core business processes. Orchestration, approvals, and compliance are table stakes now.

Proof

Agentevals is a push to trust scores, not demos. If autonomous driving can embrace rigorous loop thinking, so can office work.

What I’m shipping by the weekend

I’m building a vendor follow-up agent that pulls due invoices from a sheet, drafts polite reminders with specifics, checks tone and compliance, sends with a unique reference, logs the outreach, and retries once if there’s no reply in 48 hours. I’ll keep 10 test vendors and score it weekly. Boring on purpose, because that’s where agentic AI pays rent.

FAQ

What is agentic AI in simple terms?

Agentic AI is a loop, not a single answer. It plans, calls tools or APIs, observes results, checks against rules, and tries again if needed. That repeatable cycle is what makes it useful for real work.

How do I start without enterprise tools?

Pick one small task and wrap it in a checklist, a verify step, and a log. You can mimic enterprise patterns with simple docs, spreadsheets, and off-the-shelf LLMs. The habit of measuring beats fancy tooling at the start.

How do I add reliable guardrails?

Use hard checks, not vibes. Require confirmations before actions, keep a rollback path where possible, and log inputs, outputs, and decisions. If a step fails a rule, allow one fix and retry, then escalate.

How should I evaluate agent performance?

Create a tiny eval set that reflects real edge cases and run it weekly. Track one meaningful metric, like completion without intervention or time-to-complete. Use the score to guide changes, not a single demo run.

Does latency or hardware really matter for me?

Yes, especially for loops. Lower latency makes critique-and-retry patterns feel natural, which increases reliability and user trust. You don’t need to buy hardware, but you will benefit as the stack gets faster and cheaper.