Agentic AI just clicked for me today

Agentic AI finally had a flip-the-switch day for me. On March 27, 2026, a cluster of real deployments and architecture moves landed at once, and I had to rethink my whole automation roadmap. This wasn’t another flashy demo. It was defense-level reliability, measurable ROI in finance, and security teams putting identity at the top.

Quick answer: On March 27, 2026, agentic AI moved from cool prototypes to governed production. Defense signaled maturity with a BAE Systems and Scale AI partnership, UiPath highlighted 50 percent faster mortgages, Oracle pushed a database-first agent stack, leaders warned against one-model pipelines, and identity-first security became non-negotiable. If you’re starting now, scope tight, log everything, use a real memory layer, keep least privilege, and go multi-model.

What actually changed

Agents are graduating from clever chat to reliable teammates. They’re showing up in safety-critical stacks, shaving time off regulated decisions, and getting a database-first backbone. The industry is also accepting a hard truth I learned the painful way: one model is not a production strategy. And if your agent doesn’t have a real identity, you don’t have real security.

The headlines I couldn’t ignore

Defense is going agentic

On March 27, 2026, BAE Systems said it is partnering with Scale AI to bring agentic capabilities into defense platforms. I don’t take defense news lightly. If a sector obsessed with reliability, audit trails, and latency is leaning in, the tooling is past toy status. You can skim the announcement here: BAE Systems x Scale AI.

What I’m copying: I define explicit roles and rules. Even for a simple operations agent, I scope access narrowly (read-only finance data, draft-only publishing) and log every decision step. It’s boring. It’s why the agent survives first contact with production.
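Here is a minimal sketch of that kind of scoping. It assumes a hypothetical `AgentRole` with string action names like `read:finance` and `draft:publish`. These are illustrative, not any vendor’s API. Every decision, including denials, lands in the log.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentRole:
    """Hypothetical role definition: a name plus the only actions it may take."""
    name: str
    allowed_actions: frozenset[str]


@dataclass
class ScopedAgent:
    role: AgentRole
    decision_log: list[dict] = field(default_factory=list)

    def act(self, action: str, target: str) -> bool:
        allowed = action in self.role.allowed_actions
        # Log every decision step, including denials, so the audit trail is complete.
        self.decision_log.append({
            "ts": datetime.now(timezone.utc).isoformat(),
            "role": self.role.name,
            "action": action,
            "target": target,
            "allowed": allowed,
        })
        return allowed


ops_role = AgentRole(
    name="ops-agent",
    allowed_actions=frozenset({"read:finance", "draft:publish"}),
)
agent = ScopedAgent(ops_role)
agent.act("read:finance", "q1_ledger")   # permitted: read-only finance access
agent.act("publish:live", "blog_post")   # denied: the role is draft-only
```

Unknown actions are denied by default, so the role has to grant access explicitly.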

Mortgages got 50 percent faster

Also on March 27, 2026, UiPath credited agentic AI with cutting mortgage decisioning time in half. That is a document-heavy, audited workflow with edge cases everywhere. If agents can compress that loop, they’re ready for the messy, valuable work most teams avoid. Their note is here: UiPath on 50 percent faster mortgages.

How I’d try this: pick a 3- to 5-step process that always drags. I used vendor onboarding: I wired an agent to parse a W-9, validate fields, draft a vendor profile, and route it for approval. The win wasn’t the model. It was the orchestration and the guardrails.
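The sketch below shows the orchestration and guardrails around that flow. It assumes the model-driven W-9 extraction has already happened and produced a dict with hypothetical field names (`legal_name`, `tin`, `address`). The TIN check only tests EIN or SSN formatting. It does not verify the number.

```python
import re

# Hypothetical field names for text already extracted from a W-9.
REQUIRED_FIELDS = ("legal_name", "tin", "address")
# Format check only: EIN (12-3456789) or SSN (123-45-6789) shape.
TIN_PATTERN = re.compile(r"^(\d{2}-\d{7}|\d{3}-\d{2}-\d{4})$")


def validate(fields: dict) -> list[str]:
    errors = [f"missing {name}" for name in REQUIRED_FIELDS if not fields.get(name)]
    tin = fields.get("tin", "")
    if tin and not TIN_PATTERN.match(tin):
        errors.append("tin has an unexpected format")
    return errors


def draft_profile(fields: dict) -> dict:
    return {
        "vendor_name": fields["legal_name"].strip(),
        "tax_id": fields["tin"],
        "remit_to": fields["address"].strip(),
        "status": "draft",  # never auto-approved
    }


def onboard(fields: dict) -> dict:
    errors = validate(fields)
    if errors:
        # Guardrail: invalid packets go back to a human instead of being drafted.
        return {"route": "needs_fix", "errors": errors}
    return {"route": "approval_queue", "profile": draft_profile(fields)}


packet = {"legal_name": "Acme Supply LLC", "tin": "12-3456789", "address": "1 Main St"}
print(onboard(packet)["route"])  # approval_queue
print(onboard({"legal_name": "No TIN Inc"})["errors"])  # ['missing tin', 'missing address']
```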

The AI database moved to the center

On March 27, 2026, Oracle pushed a clear stance: make the AI database the heart of agentic workloads. Translation in my words: treat memory, tools, events, and policies as first-class data, not scattered scripts. If you’ve watched an agent forget context or loop on the wrong tool, this clicks. Read the take here: Oracle on AI databases for agents.

What I changed: every agent now gets a real home, with a vector store for facts and examples, a relational table for tasks and states, and persistent logs for every tool call. Debugging suddenly feels like debugging an app, not a séance.
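A minimal, stdlib-only sketch of that memory layer follows. `TinyVectorStore` is a toy stand-in for a real vector database, and the 2-D "embeddings" are made up for illustration. In a real setup an embedding model would produce them. The tasks and tool-call log live in SQLite.

```python
import json
import math
import sqlite3


class TinyVectorStore:
    """Toy in-memory stand-in for a real vector database (assumption)."""

    def __init__(self) -> None:
        self.items: list[tuple[list[float], str]] = []

    def add(self, vector: list[float], text: str) -> None:
        self.items.append((vector, text))

    def search(self, query: list[float], k: int = 1) -> list[str]:
        def cosine(a: list[float], b: list[float]) -> float:
            dot = sum(x * y for x, y in zip(a, b))
            norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
            return dot / norm if norm else 0.0

        ranked = sorted(self.items, key=lambda item: cosine(query, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]


db = sqlite3.connect(":memory:")
db.executescript("""
CREATE TABLE tasks (id INTEGER PRIMARY KEY, goal TEXT NOT NULL, state TEXT NOT NULL);
CREATE TABLE tool_calls (
    id INTEGER PRIMARY KEY,
    task_id INTEGER NOT NULL REFERENCES tasks(id),
    tool TEXT NOT NULL,
    args TEXT NOT NULL,
    result TEXT
);
""")


def log_tool_call(task_id: int, tool: str, args: dict, result: str) -> None:
    # Persist every tool call so a run can be replayed and debugged later.
    db.execute(
        "INSERT INTO tool_calls (task_id, tool, args, result) VALUES (?, ?, ?, ?)",
        (task_id, tool, json.dumps(args), result),
    )
    db.commit()


task_id = db.execute(
    "INSERT INTO tasks (goal, state) VALUES (?, ?)", ("draft vendor entry", "running")
).lastrowid
facts = TinyVectorStore()
facts.add([1.0, 0.0], "Vendors need a W-9 before payment.")
facts.add([0.0, 1.0], "Draft entries require human approval.")
print(facts.search([0.9, 0.1]))  # ['Vendors need a W-9 before payment.']
log_tool_call(task_id, "lookup_vendor", {"name": "Acme"}, "not_found")
```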

Avoid the one-model trap

It’s tempting to hand everything to one giant model. It will demo great and fail silently at scale. Different steps want different models and sometimes no model at all. The best next action is often a SQL query, a policy engine, or a cached lookup. I plan with a strong reasoning model, then offload extraction, classification, and critique to smaller specialized models. Deterministic work goes to tools. Policy wraps every step. Cache aggressively.
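One way to express "different steps want different models" is a routing table. In the sketch below, the backend names are hypothetical placeholders, `call_backend` and `policy_allows` are stubs, and `functools.lru_cache` stands in for a real cache. Note that caching model output is only safe where you accept repeated answers for identical inputs.

```python
from functools import lru_cache

# Hypothetical backend names; substitute the models and tools you actually run.
ROUTES = {
    "plan": "large-reasoning-model",
    "extract": "small-extraction-model",
    "classify": "small-classifier-model",
    "critique": "small-critic-model",
    "lookup": "sql",                  # deterministic: no model at all
    "policy_check": "policy-engine",
}


def call_backend(backend: str, payload: str) -> str:
    # Placeholder for a real model or tool call.
    return f"{backend} handled: {payload}"


def policy_allows(step: str, payload: str) -> bool:
    # Placeholder policy: block anything that tries to send external email.
    return "send_email" not in payload


@lru_cache(maxsize=1024)
def run_step(step: str, payload: str) -> str:
    if not policy_allows(step, payload):  # policy wraps every step
        return "blocked by policy"
    backend = ROUTES.get(step)
    if backend is None:
        raise ValueError(f"no route for step {step!r}")
    return call_backend(backend, payload)


print(run_step("lookup", "vendor 42"))  # sql handled: vendor 42
run_step("lookup", "vendor 42")          # identical call is served from the cache
print(run_step.cache_info().hits)        # 1
```

Because routing is just data, swapping a weak extraction model means editing one table entry rather than rewriting the loop.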

Security made agent identity page one

Also called out on March 27, 2026: agent identity is becoming a top enterprise security priority. Agents log into systems, touch sensitive data, send emails, and trigger payments. If they don’t have unique identities, you can’t apply least privilege or audit what happened. I treat agents like service accounts with per-environment vaulted secrets, scoped IAM roles, outbound allowlists, and signed webhooks. Not glamorous. Completely necessary.
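Two of those controls are easy to sketch with the Python standard library alone: an outbound allowlist check and HMAC-SHA256 webhook signing with constant-time verification. The domains and the secret below are placeholders. In practice, the secret would come from your vault, per environment.

```python
import hashlib
import hmac
from urllib.parse import urlparse

# Hypothetical allowlist for one agent in one environment.
OUTBOUND_ALLOWLIST = {"api.internal.example.com", "erp.example.com"}


def outbound_allowed(url: str) -> bool:
    host = urlparse(url).hostname or ""
    return host in OUTBOUND_ALLOWLIST


def sign_webhook(secret: bytes, body: bytes) -> str:
    # HMAC-SHA256 over the raw body; the receiver recomputes and compares.
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def verify_webhook(secret: bytes, body: bytes, signature: str) -> bool:
    expected = sign_webhook(secret, body)
    return hmac.compare_digest(expected, signature)  # constant-time comparison


secret = b"per-environment-secret-from-your-vault"  # placeholder: never hard-code this
body = b'{"event": "vendor.drafted", "id": 17}'
sig = sign_webhook(secret, body)
print(verify_webhook(secret, body, sig))                # True
print(verify_webhook(secret, body + b" ", sig))         # False: body was tampered with
print(outbound_allowed("https://erp.example.com/api"))  # True
print(outbound_allowed("https://evil.example.net/x"))   # False
```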

How I’d start this weekend

I ran this exact playbook to get something real in a few hours without drowning in complexity.

- Pick one narrow workflow with 3 to 5 steps and a clear success signal. Mine was turning a vendor packet into a draft entry ready for approval.

- Define the agent’s role, inputs, outputs, and hard boundaries. Read-only storage, no external emails, must pass schema validation.

- Stand up a tiny memory layer. One vector collection for examples, one table for tasks, states, and tool results.

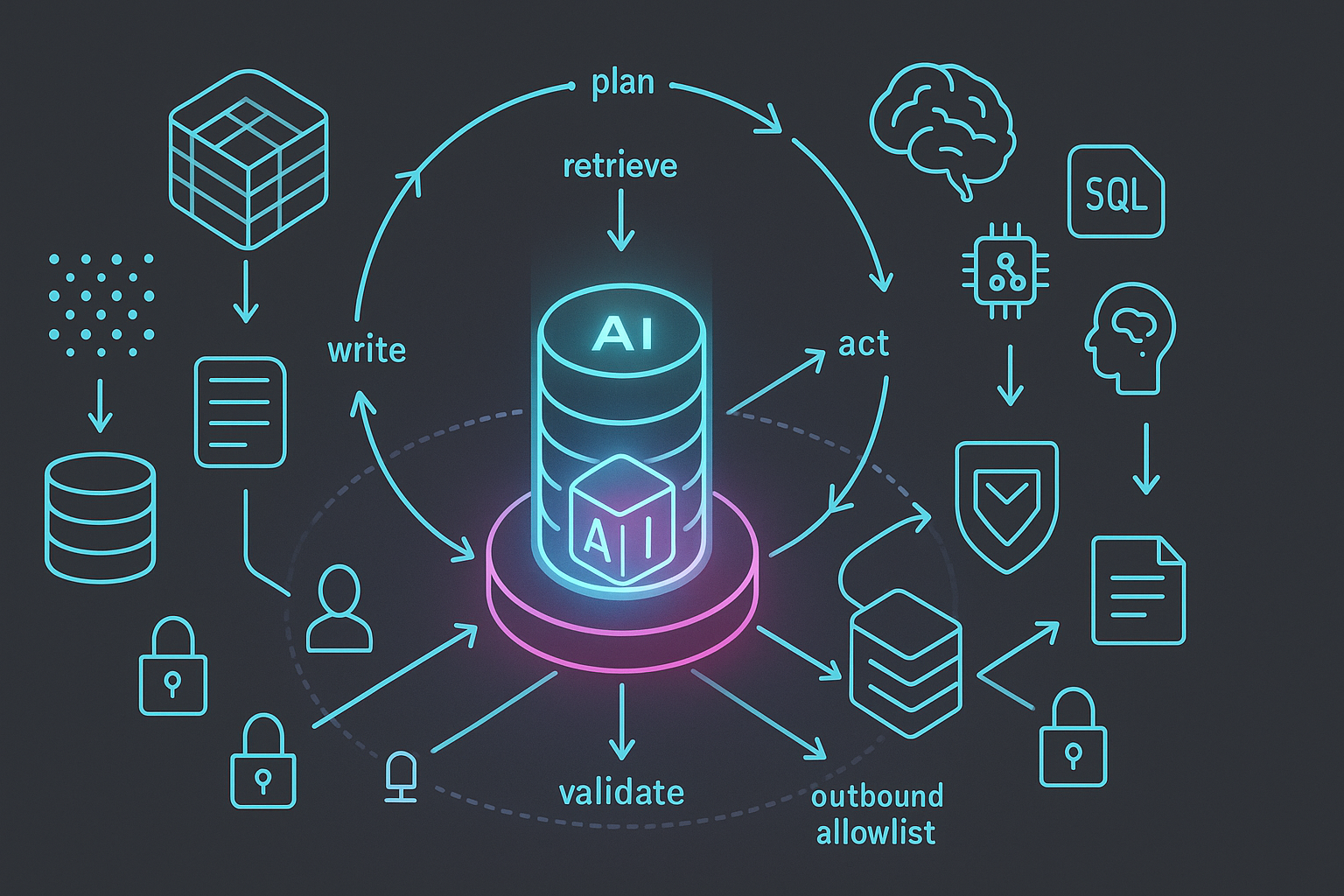

- Compose a simple loop: plan, retrieve, act with tools, validate, write, human review. Log everything.

- Add identity and measure. Give the agent its own keys with least privilege, then track turnaround time and human edit rate. Tighten retrieval or add a critique step if edits are high.
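The loop from the steps above could be skeletoned like this. Every function here is a hypothetical stub. In a real agent, `plan` and `act` would call a model and tools, and `retrieve` would hit the memory layer. The point is the shape: each stage is logged, and the output stops at a draft awaiting human review.

```python
from datetime import datetime, timezone

LOG: list[dict] = []


def log(step: str, detail) -> None:
    # Record every step so a reviewer can reconstruct what the agent did.
    LOG.append({"ts": datetime.now(timezone.utc).isoformat(), "step": step, "detail": detail})


# Hypothetical stubs: in practice these would call a model, a retriever, and real tools.
def plan(goal: str) -> list[str]:
    return ["extract_fields", "draft_entry"]


def retrieve(goal: str) -> list[str]:
    return ["Example: a vendor entry needs legal_name and tin."]


def act(step: str, packet: dict, context: list[str]) -> dict:
    if step == "extract_fields":
        return {k: packet.get(k) for k in ("legal_name", "tin")}
    if step == "draft_entry":
        return {"entry_type": "vendor"}
    return {}


def validate(entry: dict) -> bool:
    return bool(entry.get("legal_name")) and bool(entry.get("tin"))


def run(goal: str, packet: dict) -> dict:
    steps = plan(goal)
    log("plan", steps)
    context = retrieve(goal)
    log("retrieve", context)
    entry: dict = {}
    for step in steps:
        result = act(step, packet, context)
        entry.update(result)
        log("act", {"step": step, "result": result})
    ok = validate(entry)
    log("validate", ok)
    # Write a draft only; a human approves before anything goes live.
    draft = {"entry": entry, "status": "awaiting_review" if ok else "rejected"}
    log("write", draft)
    return draft


result = run("draft vendor entry", {"legal_name": "Acme Supply LLC", "tin": "12-3456789"})
print(result["status"])          # awaiting_review
print([e["step"] for e in LOG])  # ['plan', 'retrieve', 'act', 'act', 'validate', 'write']
```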

Beginner mistakes I still catch myself making

Chasing model upgrades instead of fixing retrieval. Most misses are context problems, not creativity problems. Clean your inputs and index your truth.

Skipping validation. A small JSON schema check or lightweight rules engine prevents mystery errors downstream.
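A schema check can be very small. This sketch hand-rolls one with the standard library as a lightweight stand-in for a full JSON Schema validator. The field names and types in `ENTRY_SCHEMA` are hypothetical.

```python
import json

# Hypothetical schema: field name -> required Python type.
ENTRY_SCHEMA = {"vendor_name": str, "tax_id": str, "amount_cents": int}


def check_output(raw: str) -> tuple[bool, list[str]]:
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return False, [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return False, ["top-level value must be an object"]
    errors = []
    for name, expected in ENTRY_SCHEMA.items():
        if name not in data:
            errors.append(f"missing field {name}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif not isinstance(data[name], expected) or isinstance(data[name], bool):
            errors.append(f"{name} should be {expected.__name__}")
    return not errors, errors


print(check_output('{"vendor_name": "Acme", "tax_id": "12-3456789", "amount_cents": 1500}'))
# (True, [])
print(check_output('{"vendor_name": "Acme", "amount_cents": "15.00"}'))
# (False, ['missing field tax_id', 'amount_cents should be int'])
```

Rejecting bad output at this boundary turns a mystery error three steps later into a named error right here.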

No plan memory. Agents forget. Persist a structured plan and update it as you go. Replanning every step is slow and wobbly.
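Persisting a plan can be as simple as a dataclass saved to JSON. This is a sketch under assumptions: the `Plan`/`PlanStep` names and the three status values are illustrative. A resumed run picks up at the first pending step instead of replanning.

```python
import json
import tempfile
from dataclasses import asdict, dataclass, field
from pathlib import Path
from typing import Optional


@dataclass
class PlanStep:
    name: str
    status: str = "pending"  # pending -> done or failed


@dataclass
class Plan:
    goal: str
    steps: list[PlanStep] = field(default_factory=list)

    def next_step(self) -> Optional[PlanStep]:
        # Resume at the first unfinished step rather than replanning from scratch.
        return next((s for s in self.steps if s.status == "pending"), None)

    def save(self, path: Path) -> None:
        path.write_text(json.dumps(asdict(self), indent=2))

    @classmethod
    def load(cls, path: Path) -> "Plan":
        raw = json.loads(path.read_text())
        return cls(goal=raw["goal"], steps=[PlanStep(**s) for s in raw["steps"]])


path = Path(tempfile.gettempdir()) / "vendor_plan.json"
plan = Plan("draft vendor entry", [PlanStep("parse_w9"), PlanStep("validate"), PlanStep("draft")])
plan.steps[0].status = "done"
plan.save(path)
resumed = Plan.load(path)
print(resumed.next_step().name)  # validate
```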

Trying one agent for everything. Split responsibilities. A tiny reviewer agent can catch many errors cheaply.
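As a sketch of the split, here is a rule-based reviewer that stands in for a small critique model. It cross-checks a drafted entry against the source packet and flags mismatches rather than fixing them. The field mapping is hypothetical.

```python
def review(draft: dict, source: dict) -> list[str]:
    """Hypothetical reviewer: cross-check a drafted entry against its source packet.

    A rule-based stand-in for a small critique model; it flags mismatches
    for a human instead of trying to fix them.
    """
    issues = []
    field_map = {"vendor_name": "legal_name", "tax_id": "tin"}  # draft key -> source key
    for draft_key, source_key in field_map.items():
        if draft.get(draft_key) != source.get(source_key):
            issues.append(f"{draft_key} does not match source {source_key}")
    return issues


source = {"legal_name": "Acme Supply LLC", "tin": "12-3456789"}
draft = {"vendor_name": "Acme Supply LLC", "tax_id": "12-3456780"}
print(review(draft, source))  # ['tax_id does not match source tin']
```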

Shared credentials. The day you need to revoke access or explain an action, you’ll want per-agent identities and clean logs.

FAQ

What is agentic AI in plain English?

Agentic AI is software that can plan, use tools, and take actions to reach a goal, not just answer questions. In production, that means clearly defined roles, a memory layer, strict policies, and observability so you can trust what it does and explain why.

Do I need one big model or multiple smaller ones?

I use a reasoning-capable model for planning and smaller specialized models for extraction, classification, and critique. Deterministic work goes to tools or SQL. This modular approach lets you swap weak parts without rewriting your stack.

How do I give an agent a real identity and least privilege?

Create a dedicated service account per agent with unique keys, narrow IAM roles, and environment-scoped secrets. Allowlist outbound domains and sign webhooks. Rotate credentials and log every action so you can audit quickly.

What should I use for agent memory?

Start simple. A vector store for facts and examples plus a relational table for tasks, states, and results. That gives you stable retrieval, versioning, and debuggability from day one.

How do I prove ROI fast?

Pick a short workflow with a clear success metric like turnaround time or error rate. Ship a minimal loop with strong guardrails, then measure edits and latency. Tighten retrieval and validation before you even think about model upgrades.
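Both metrics are cheap to compute once each run is logged. The sketch below assumes a hypothetical `RunRecord` per run and defines edit rate as the share of drafted fields a human changed. The sample numbers are made up for illustration.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class RunRecord:
    turnaround_minutes: float
    fields_total: int
    fields_edited_by_human: int


def summarize(runs: list[RunRecord]) -> dict:
    total_fields = sum(r.fields_total for r in runs)
    edited = sum(r.fields_edited_by_human for r in runs)
    return {
        # Median is less sensitive to one stuck run than the mean.
        "median_turnaround_min": median(r.turnaround_minutes for r in runs),
        "edit_rate": round(edited / total_fields, 3) if total_fields else 0.0,
    }


# Made-up sample runs, for illustration only.
runs = [RunRecord(12, 10, 1), RunRecord(9, 10, 0), RunRecord(15, 10, 3)]
print(summarize(runs))  # {'median_turnaround_min': 12, 'edit_rate': 0.133}
```

If the edit rate stays high, that points back at retrieval and validation before any model swap.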

Where this leaves us

The March 27, 2026 headlines lined up into a clear message for me. Agentic AI is getting battle tested in defense, generating real ROI in regulated finance, shifting to database-first design, moving beyond one-model stacks, and putting identity-first security at the top. Start small, think like a systems designer, and assume someone will ask you to explain every action your agent took. That mindset unlocks everything else.