Agentic AI finally clicked for me this weekend. It stopped feeling like a demo treadmill and started looking like a workflow I can trust, and I’m adjusting my build plan because of it.

Quick answer: If you’re starting with agentic AI this week, pick one tiny workflow and make it agent-first. Write three scenario evals before prompts, split work into two tight roles, add a kill switch and rollback, then ship to one real user. The biggest lift right now is reliability, not clever prompts.

I always start with one tiny workflow and write three scenario evals before I touch prompts.

Alexa moved from chat to action

On March 28, 2026, TechRadar’s interview with Amazon’s Daniel Rausch made it plain: Alexa is shifting from small talk to actual task execution. Less chatter, more outcomes. That is the first mainstream signal I’ve seen that everyday assistants are becoming real agents.

Why this matters if you’re new

The floor just rose. When a household assistant trends toward autonomy, people expect tools to finish jobs without back-and-forth. If you are starting now, you have no legacy UI or process debt. You can build native agent flows from day one.

Because the floor just rose, I build native agent flows from day one so users get outcomes, not back-and-forth.

What I’m doing

I’m rewriting one tiny workflow to be agent-first: a helpdesk triage agent reads a ticket, checks the knowledge base (KB), proposes a fix, then opens a PR if the fix matches a known patch. No platform migration. Just one measurable, agentized flow.

Evals are the real boss battle

Also on March 28, 2026, The New Stack covered Solo.io’s “agentevals” and called evaluation the biggest unsolved problem in agentic AI. I agree. Demos impress, but production needs repeatable checks. If you cannot test multi-step behavior, you are flying blind with pretty logs.

I treat evals as the boss battle and refuse to ship anything I cannot test end to end.

My read on agentevals

I’m not endorsing a tool. I like the direction: shared scenarios, harnesses you can run daily, and scores that trend up or down. Beginners often skip this and wonder why things melt by day three. Your first win is a small, automated yes or no.

What I’m doing

I wrote three scenarios for helpdesk: empty ticket, duplicate request, and policy-restricted request. If the agent cannot pass them consistently, I do not ship. I track pass rate daily and only celebrate if it improves for a full week.
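
Here is a minimal sketch of what those three scenarios could look like as a runnable harness. Everything in it is an assumption for illustration: `triage_agent` is a hypothetical rule-based stand-in for the real agent, the action names are made up, and `T-101` is an invented ticket ID. The part worth copying is the shape: each scenario is an input plus a yes-or-no check, and each one runs several times so that flaky behavior shows up as a pass rate below 100%.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Scenario:
    name: str
    ticket: dict
    check: Callable[[dict], bool]  # True when the agent's result is acceptable


def triage_agent(ticket: dict) -> dict:
    """Hypothetical stand-in for the real agent; swap in your own call."""
    if not ticket.get("body", "").strip():
        return {"action": "ask_for_details"}
    if ticket.get("duplicate_of"):
        return {"action": "link_duplicate", "target": ticket["duplicate_of"]}
    if ticket.get("restricted"):
        return {"action": "escalate_to_human"}
    return {"action": "propose_fix"}


SCENARIOS: List[Scenario] = [
    Scenario("empty ticket", {"body": ""},
             lambda r: r["action"] == "ask_for_details"),
    Scenario("duplicate request", {"body": "VPN down", "duplicate_of": "T-101"},
             lambda r: r["action"] == "link_duplicate"),
    Scenario("policy-restricted request", {"body": "Grant me admin", "restricted": True},
             lambda r: r["action"] == "escalate_to_human"),
]


def run_evals(agent: Callable[[dict], dict],
              scenarios: List[Scenario],
              runs_per_scenario: int = 3) -> Dict[str, float]:
    """Run every scenario several times and return the pass rate per scenario."""
    results = {}
    for scenario in scenarios:
        passes = sum(
            1 for _ in range(runs_per_scenario)
            if scenario.check(agent(scenario.ticket))
        )
        results[scenario.name] = passes / runs_per_scenario
    return results


if __name__ == "__main__":
    for name, rate in run_evals(triage_agent, SCENARIOS).items():
        print(f"{name}: {rate:.0%}")
```

With a real model behind `triage_agent`, the repeat count matters more than it does here, because the stub is deterministic and a model is not.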

One person with many agents actually ships

Also on March 28, 2026, a Towards Data Science piece on OpenClaw showed how a solo builder ships more with autonomous agents. That snapped me out of the “one giant agent” trap. Roles create clarity, and clarity creates logs you can debug.

What clicked for me

Break work into roles with tight tools and clear checks. Let the agent run until it hits a meaningful gate. Big, do-everything agents look powerful and then fail quietly.

I split work into tight roles with clear gates so failures are visible and debuggable.

What I’m doing

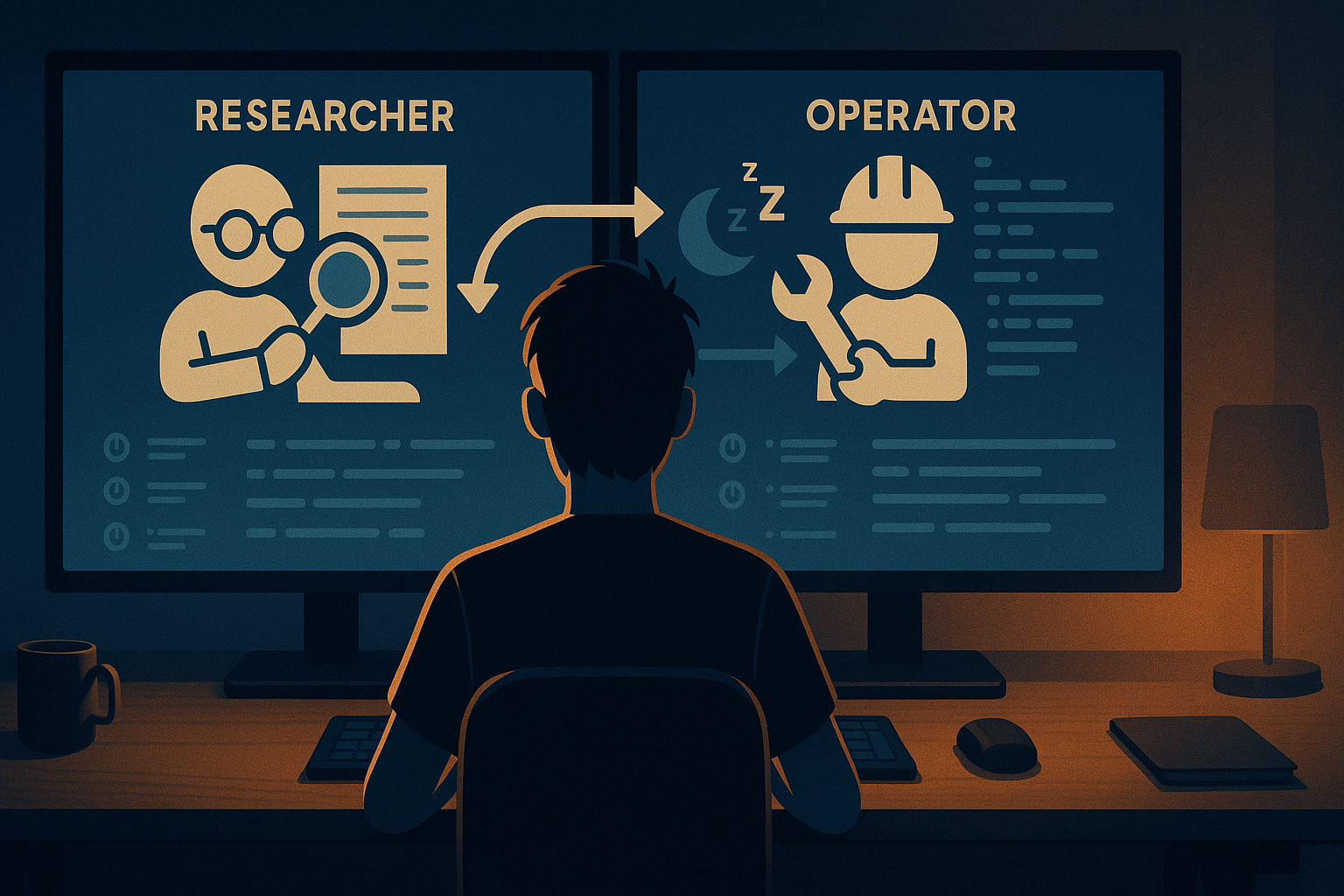

I split helpdesk into two agents: a Researcher that only reads and summarizes, and an Operator that only writes and proposes changes. Each has its own evals. If Researcher fails, Operator sleeps. Debugging already feels saner.
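
A rough sketch of that split, assuming a toy keyword lookup in place of real model calls. The function names, the `Research` type, and the action strings are all hypothetical. The point is the gate in the middle: the Operator never runs unless the Researcher produced something it can act on.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Research:
    summary: str
    kb_match: Optional[str]  # ID of the matching KB article, or None


def researcher(ticket: str, kb: Dict[str, str]) -> Research:
    """Read-only role: looks up the KB and summarizes. It never writes."""
    for article_id, keyword in kb.items():
        if keyword in ticket.lower():
            return Research(summary=f"Matches {article_id}", kb_match=article_id)
    return Research(summary="No known fix found", kb_match=None)


def research_gate(research: Research) -> bool:
    """Gate between the roles: only a KB match lets the Operator run."""
    return research.kb_match is not None


def operator(research: Research) -> dict:
    """Write role: proposes a change based only on the Researcher's output."""
    return {"action": "open_pr", "patch_for": research.kb_match}


def run_pipeline(ticket: str, kb: Dict[str, str]) -> dict:
    research = researcher(ticket, kb)
    if not research_gate(research):
        # The Researcher failed the gate, so the Operator sleeps.
        return {"action": "handoff_to_human", "reason": research.summary}
    return operator(research)
```

Because each role is its own function with its own input and output, each can have its own scenarios, and a failure points at one role instead of at the whole pipeline.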

Vendors sprint, enterprises crawl

On March 28, 2026, SiliconANGLE highlighted the gap between vendor velocity and enterprise adoption. That tracks with what I see. Big shops need governance, audits, and sign-offs. Small teams can move now if they make reliability visible.

How I’m leaning into it

I document risk and rollback like I am in a regulated org. One page: what tools the agent can call, where it can write, and how I revert in under a minute. It is not bureaucracy. It is how I earn the next level of automation.

Who in Big Tech feels ready

On March 29, 2026, The Motley Fool asked who is ready for agentic AI. I do not care about stocks here. I care about where beginners should learn. My filter is simple: tool use, memory, and safe actions with permissioning.

How I’m choosing platforms

I want sane tool-calling APIs, eval-friendly workflows I can afford to run daily, and a permission model that keeps agents out of places they should not touch. If every iteration feels like a cloud bill cliff, I am out.

A quick note on lock-in

I keep prompts and scenarios in my repo and wrap external calls behind a thin interface. If I need to swap models or hosts, I do not rewrite my life.
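
One way to picture that thin interface, as a sketch under assumptions: `ModelClient`, `StubClient`, and `summarize_ticket` are names I made up, and no real provider SDK appears here. Workflow code depends only on the one-method protocol, so a provider adapter can be swapped without touching the workflow.

```python
from typing import Protocol


class ModelClient(Protocol):
    """The only surface my workflow code is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class StubClient:
    """Hypothetical local stub for tests; a real provider adapter would
    implement the same single method."""

    def complete(self, prompt: str) -> str:
        return f"[stub] {prompt}"


def summarize_ticket(client: ModelClient, ticket: str) -> str:
    # The prompt lives in my code (and my repo), not inside a vendor console.
    prompt = f"Summarize this helpdesk ticket in one line:\n{ticket}"
    return client.complete(prompt)


if __name__ == "__main__":
    print(summarize_ticket(StubClient(), "VPN drops every 10 minutes"))
```

Swapping models then means writing one new class with a `complete` method, and the stub doubles as a cheap backend for the daily eval runs.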

My 5-step starter plan for this week

This is the exact plan I wrote for myself. Steal it if it helps.

- Pick one tiny workflow and write three scenario tests before you write prompts.

- Split that workflow into two agents max. Name their roles and limit their tools.

- Add a kill switch and a rollback you can run in under 60 seconds.

- Track daily pass rates. If stability drops, stop adding features.

- Demo to one real user and change one thing they actually cared about.
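
For step three, here is a small sketch of a kill switch plus rollback for an agent that edits a single file. It assumes a flag file as the kill switch and a `.bak` copy as the snapshot. Both conventions are my own illustration, not a standard, and the function names are hypothetical.

```python
import shutil
import tempfile
from pathlib import Path
from typing import Optional


def guarded_write(target: Path, new_text: str, kill_switch: Path) -> Optional[Path]:
    """Write new_text to target unless the kill switch file exists.

    Returns the backup path on success, or None if the agent is halted.
    """
    if kill_switch.exists():
        return None
    backup = target.with_name(target.name + ".bak")
    if target.exists():
        shutil.copy2(target, backup)  # snapshot before touching anything
    elif backup.exists():
        backup.unlink()  # drop a stale snapshot so rollback deletes the new file
    target.write_text(new_text)
    return backup


def rollback(target: Path, backup: Path) -> None:
    """Restore the snapshot, or delete the file if nothing existed before."""
    if backup.exists():
        shutil.copy2(backup, target)
    else:
        target.unlink()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        reply = Path(tmp) / "reply.txt"
        reply.write_text("original macro")
        backup = guarded_write(reply, "agent-edited macro", Path(tmp) / "STOP")
        rollback(reply, backup)
        print(reply.read_text())
```

Creating the flag file halts every later write, and the rollback is a single file copy, which is what keeps it comfortably under the 60-second target.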

Red flags I’m watching

Shiny demos with no repeatable evals or logs. Agents that can write broadly with no rollback. Costs that hide in tool calls or retries with no limits. If any of that shows up, I pause and tighten scope.

If I see missing evals, broad write access, or hidden costs, I pause and tighten the scope immediately.

What this means for beginners

Here is my honest read after this weekend. Consumer tools are normalizing autonomy. Tooling is waking up to evals. Enterprises are slowed by process more than tech. Solo builders are quietly shipping. Platforms are racing to make agent stacks safer and cheaper.

If you are new to agentic AI, you do not need to master everything. You need one reliable agent that proves value without drama. Pick a workflow, write the evals, and ship a small slice. Let that win fund the next move.

My stack stays boring on purpose: a model that can call tools, two tight roles, a test harness I can run with one command, and permissions that treat writes as a privilege. Boring scales.

FAQ

What is agentic AI in simple terms?

Agentic AI is a system that plans and executes multi-step tasks using tools, memory, and rules. Instead of only replying to prompts, it takes actions toward a goal with guardrails you define. Think less chat, more doing.

How do I evaluate an agent without a big setup?

Create 3 to 5 realistic scenarios that represent edge cases and common paths. Run them daily and log pass or fail with short explanations. Track a rolling success rate and only add features when that trend is stable or rising.
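
A sketch of the "stable or rising" rule, assuming one pass rate per day and a 7-day window. The window size, the function names, and the "never with too little history" rule are my own choices, not a standard.

```python
from typing import Sequence


def rolling_rate(daily_rates: Sequence[float], window: int = 7) -> float:
    """Mean pass rate over the most recent `window` days."""
    recent = list(daily_rates)[-window:]
    return sum(recent) / len(recent) if recent else 0.0


def ok_to_add_features(daily_rates: Sequence[float], window: int = 7) -> bool:
    """True only when the rolling rate is stable or rising.

    Compares today's rolling window with yesterday's. With too little
    history to compare two full windows, the answer is always no.
    """
    if len(daily_rates) <= window:
        return False
    current = rolling_rate(daily_rates, window)
    previous = rolling_rate(daily_rates[:-1], window)
    return current >= previous


if __name__ == "__main__":
    history = [0.6, 0.7, 0.7, 0.8, 0.8, 0.9, 0.9, 1.0]
    print(f"rolling rate: {rolling_rate(history):.2f}")
    print(f"ok to add features: {ok_to_add_features(history)}")
```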

What permissions should I give a beginner agent?

Start with read-only access plus a sandboxed write path you can roll back in under a minute. Limit visible tools and require confirmation for any irreversible action. Expand rights only after the evals hold steady.
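
As a sketch, that rule fits in one small function. The tool names and the `confirm` callback are hypothetical, and the default is to deny anything irreversible unless a human-backed callback says yes.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet


@dataclass(frozen=True)
class Tool:
    name: str
    irreversible: bool = False


def deny(tool: Tool) -> bool:
    """Default confirmation handler: nobody approved, so the answer is no."""
    return False


def authorize(tool: Tool,
              allowed: FrozenSet[str],
              confirm: Callable[[Tool], bool] = deny) -> bool:
    """Allow a call only if the tool is on the allowlist, and require an
    explicit confirmation for anything that cannot be undone."""
    if tool.name not in allowed:
        return False
    if tool.irreversible:
        return confirm(tool)
    return True


if __name__ == "__main__":
    allowed = frozenset({"read_kb", "write_sandbox_draft", "close_ticket"})
    for tool in (Tool("read_kb"),
                 Tool("close_ticket", irreversible=True),
                 Tool("delete_repo", irreversible=True)):
        print(tool.name, authorize(tool, allowed))
```

Expanding rights later is then a one-line change to the allowlist, which is easy to review and easy to revert.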

How do I control costs with tool-heavy agents?

Put hard limits on retries and depth of planning. Cache reads, cap parallel calls, and surface per-run costs in the logs. If an experiment spikes spend, reduce scope before you scale.
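
A minimal sketch of those limits, with made-up numbers: the retry cap, the dollar ceiling, and the per-call cost are placeholders, and `read_kb` is a hypothetical read-only tool. Every attempt is charged before it runs, so retries cannot hide spend, and repeated reads are served from a cache.

```python
from functools import lru_cache
from typing import Callable, TypeVar

T = TypeVar("T")


class BudgetExceeded(RuntimeError):
    """Raised when a run would spend more than its ceiling."""


class RunBudget:
    def __init__(self, max_retries: int = 2, max_cost_usd: float = 0.50):
        self.max_retries = max_retries
        self.max_cost_usd = max_cost_usd
        self.spent_usd = 0.0  # surface this number in the run log

    def charge(self, cost_usd: float) -> None:
        self.spent_usd += cost_usd
        if self.spent_usd > self.max_cost_usd:
            raise BudgetExceeded(
                f"spent ${self.spent_usd:.2f}, limit ${self.max_cost_usd:.2f}"
            )


def call_with_retries(tool: Callable[[], T], budget: RunBudget, cost_usd: float) -> T:
    """Call a tool with a hard retry cap, charging every attempt."""
    last_error = None
    for _ in range(budget.max_retries + 1):
        budget.charge(cost_usd)  # retries cost money too
        try:
            return tool()
        except Exception as err:  # tool failure: retry until the cap
            last_error = err
    raise RuntimeError(
        f"gave up after {budget.max_retries + 1} attempts"
    ) from last_error


@lru_cache(maxsize=256)
def read_kb(article_id: str) -> str:
    """Hypothetical read-only lookup; repeated reads hit the cache."""
    return f"contents of {article_id}"
```

The budget check sits outside the `try`, so hitting the ceiling stops the run immediately instead of being swallowed and retried.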

Should I pick one platform or stay flexible?

Stay portable early. Keep prompts, test cases, and policies in your repo, and wrap provider calls behind a thin interface. That way you can switch models or hosts without breaking your workflows.

The bottom line

On March 28 and 29, 2026, multiple corners of the ecosystem pointed the same way: agents that do more, with better guardrails, moving from demo to dependable. If you have been hesitating, this is a great week to start small and build something you can trust.