Agentic AI just hit fast-forward for me this morning. I sat down with coffee and realized costs dropped, capability jumped, and the guardrails finally look copyable.

Quick answer: On Feb 16, 2026, Alibaba launched Qwen3.5 tuned for agents, OpenAI and Cisco backed standards for safer scaling, and real pilots landed in retail and healthcare. If you start now, pick one daily workflow, cap scope and tools, add clear review gates, and measure time saved. You can get a reliable win in two weeks.

The day agentic AI got real

Everything I read on Feb 16, 2026 lined up. Reuters reported Alibaba’s Qwen3.5 built for the agent era. PYMNTS covered OpenAI and Cisco backing standards to scale agents. And pilots started showing up in places that print money or save lives.

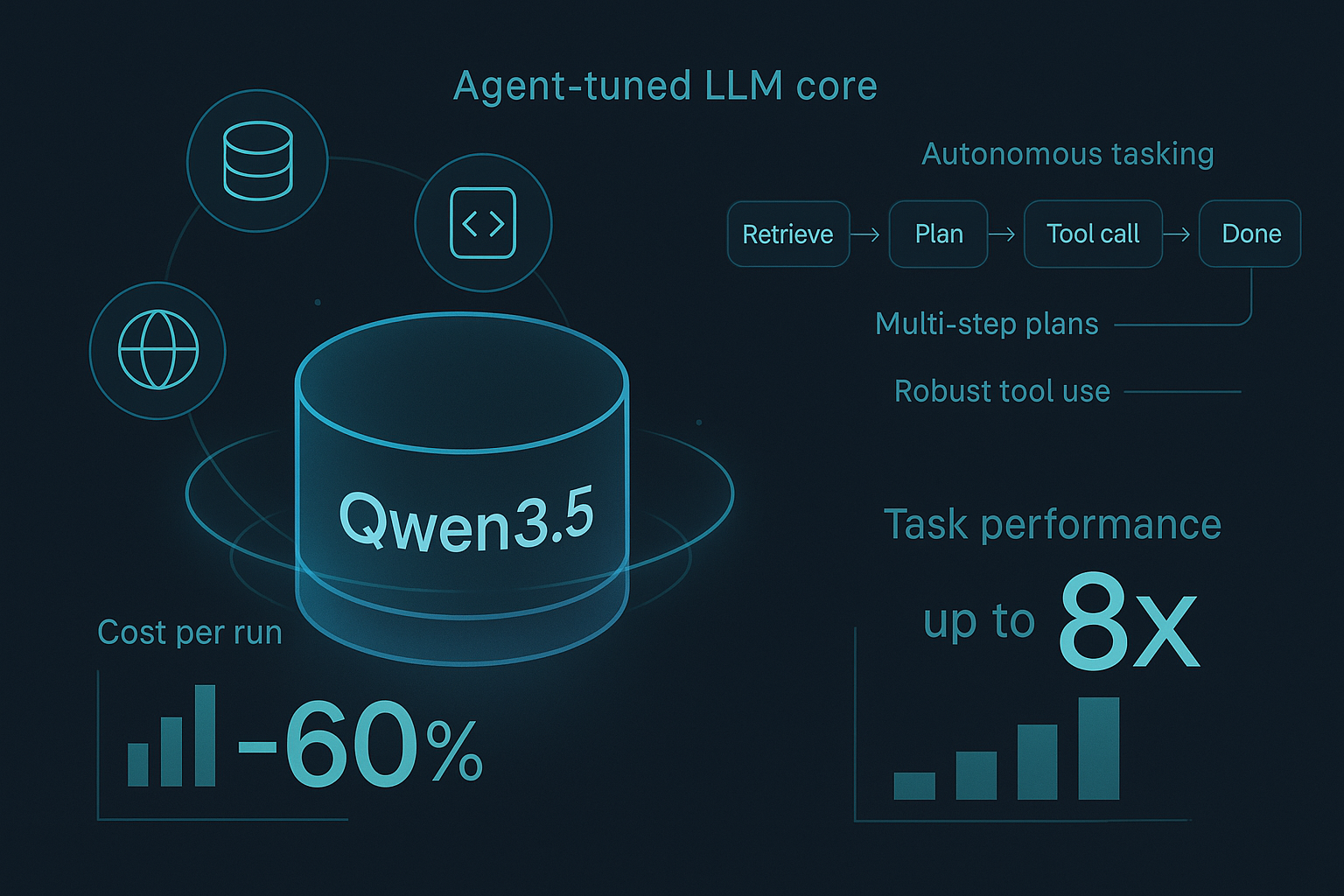

Qwen3.5 shifts the model math

Alibaba didn’t just ship another chat model. The Qwen3.5 pitch is built around autonomous tasking, tool use, and multi-step plans. Several rundowns I trust put it at roughly 60% cheaper to run and up to 8x more capable than prior baselines on certain tasks. Benchmarks will vary, but the signal is clear.

Why that matters if you’re starting from zero

Agentic AI burns tokens. It retrieves, plans, calls tools, checks itself, and retries. Cheaper runs change behavior. I prototype more, let agents explore, and fail faster without sweating the bill. For utility agents that don’t need frontier-level creativity, this is the green light.
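To make "cheaper runs change behavior" concrete, here is a back-of-the-envelope estimator. The step list, token counts, and per-million-token prices are all hypothetical placeholders, not Qwen3.5 pricing. The only number taken from above is the roughly 60% cut, and you should swap in your own figures.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    input_tokens: int
    output_tokens: int


# Hypothetical token profile for one agent run: retrieve, plan, call tools, self-check, retry.
RUN = [
    Step("retrieve", 6_000, 300),
    Step("plan", 2_000, 800),
    Step("tool_calls", 4_000, 1_200),
    Step("self_check", 3_000, 400),
    Step("retry", 4_000, 1_200),
]


def run_cost(steps: list[Step], usd_per_m_in: float, usd_per_m_out: float) -> float:
    """USD cost of one run, given prices per million input and output tokens."""
    tokens_in = sum(s.input_tokens for s in steps)
    tokens_out = sum(s.output_tokens for s in steps)
    return tokens_in / 1e6 * usd_per_m_in + tokens_out / 1e6 * usd_per_m_out


def runs_per_budget(budget_usd: float, cost_per_run: float) -> int:
    """How many full runs a fixed experiment budget buys."""
    return int(budget_usd // cost_per_run)


baseline = run_cost(RUN, usd_per_m_in=1.00, usd_per_m_out=4.00)  # placeholder prices
cheaper = baseline * (1 - 0.60)  # applying the ~60% cut some rundowns cite
for label, cost in [("baseline", baseline), ("60% cheaper", cheaper)]:
    print(f"{label}: ${cost:.4f}/run, {runs_per_budget(50, cost)} runs per $50")
```

Same workload, same budget, and you get 2.5x as many experiments. That multiplier is what shifts how freely I prototype.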

Standards finally have momentum

Also on Feb 16, 2026, OpenAI and Cisco backed efforts to standardize how companies scale agentic AI. The value for me is practical. Agents are small systems now, not single prompts. With shared patterns for identity, scope, tools, memory, and logging, I can walk into a risk review with a common language instead of bespoke glue code.

If you’ve struggled to explain orchestration to your CIO, this is your bridge. Expect clearer role definitions for agents, consistent tool interfaces, and permission models security teams can approve without rewriting your stack.
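Here is one way I'd write those shared patterns down so a security team can read them. This is a hypothetical descriptor. The field names are my own and are not taken from any published standard. It records identity, scope, tools, memory, and logging, and answers a simple permission question.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolGrant:
    name: str
    access: str  # "read" or "write"


@dataclass
class AgentManifest:
    """Hypothetical agent descriptor: identity, scope, tools, memory, logging.
    Field names are illustrative, not from any published standard."""
    agent_id: str
    owner: str                       # accountable human or team
    role: str
    data_scopes: set[str]
    tools: list[ToolGrant]
    memory: str = "session"          # "none", "session", or "persistent"
    log_sink: str = "audit.jsonl"    # where tool calls are recorded

    def allows(self, tool: str, access: str) -> bool:
        """True only if this exact tool/access pair was granted."""
        return any(g.name == tool and g.access == access for g in self.tools)

    def validate(self, max_tools: int = 3) -> list[str]:
        """Return a list of problems; an empty list means the manifest passes."""
        problems = []
        if not self.owner:
            problems.append("no accountable owner")
        if len(self.tools) > max_tools:
            problems.append(f"{len(self.tools)} tools exceeds limit of {max_tools}")
        if self.memory not in {"none", "session", "persistent"}:
            problems.append(f"unknown memory mode {self.memory!r}")
        return problems


report_agent = AgentManifest(
    agent_id="morning-report-01",
    owner="ops-team@example.com",
    role="reporting",
    data_scopes={"pos.daily_sales"},
    tools=[ToolGrant("sql_query", "read"), ToolGrant("slack_draft", "write")],
)
print(report_agent.validate())                     # []
print(report_agent.allows("sql_query", "write"))   # False
```

Because the permission check is data rather than prompt text, a reviewer can approve the manifest without reading your orchestration code.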

Security wakes up to agent risk

The same day, I saw movement on the defensive side too. When agents can click buttons, send emails, move money, or tweak configs, classic DLP is not enough. You need intent analysis, tool-call allowlists, policy injection, and human checkpoints for anything high risk. The good news is the market is starting to meet you there, instead of making you duct-tape it yourself.
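Two of those controls, tool-call allowlists and human checkpoints, fit in a few lines. This is a minimal sketch that assumes a hypothetical policy table with made-up tool names and risk tiers. Intent analysis and policy injection would sit in front of this gate and are not shown.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN = "needs_human"
    DENY = "deny"


# Hypothetical policy: the only tools the agent may call, tagged by risk.
ALLOWLIST = {
    "read_report": "low",
    "draft_email": "low",
    "send_email": "high",
    "transfer_funds": "high",
}


def gate(tool: str, args: dict) -> Decision:
    """Decide what happens to a proposed tool call before it executes."""
    risk = ALLOWLIST.get(tool)
    if risk is None:
        return Decision.DENY          # not on the allowlist: block outright
    if risk == "high":
        return Decision.NEEDS_HUMAN   # pause for a human checkpoint
    return Decision.ALLOW             # low risk: auto-approved


for tool, args in [("read_report", {}), ("send_email", {"to": "cfo@example.com"}), ("delete_repo", {})]:
    print(tool, gate(tool, args).value)
```

The default is deny. A tool nobody thought about is blocked, not quietly allowed.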

Real pilots you can copy this week

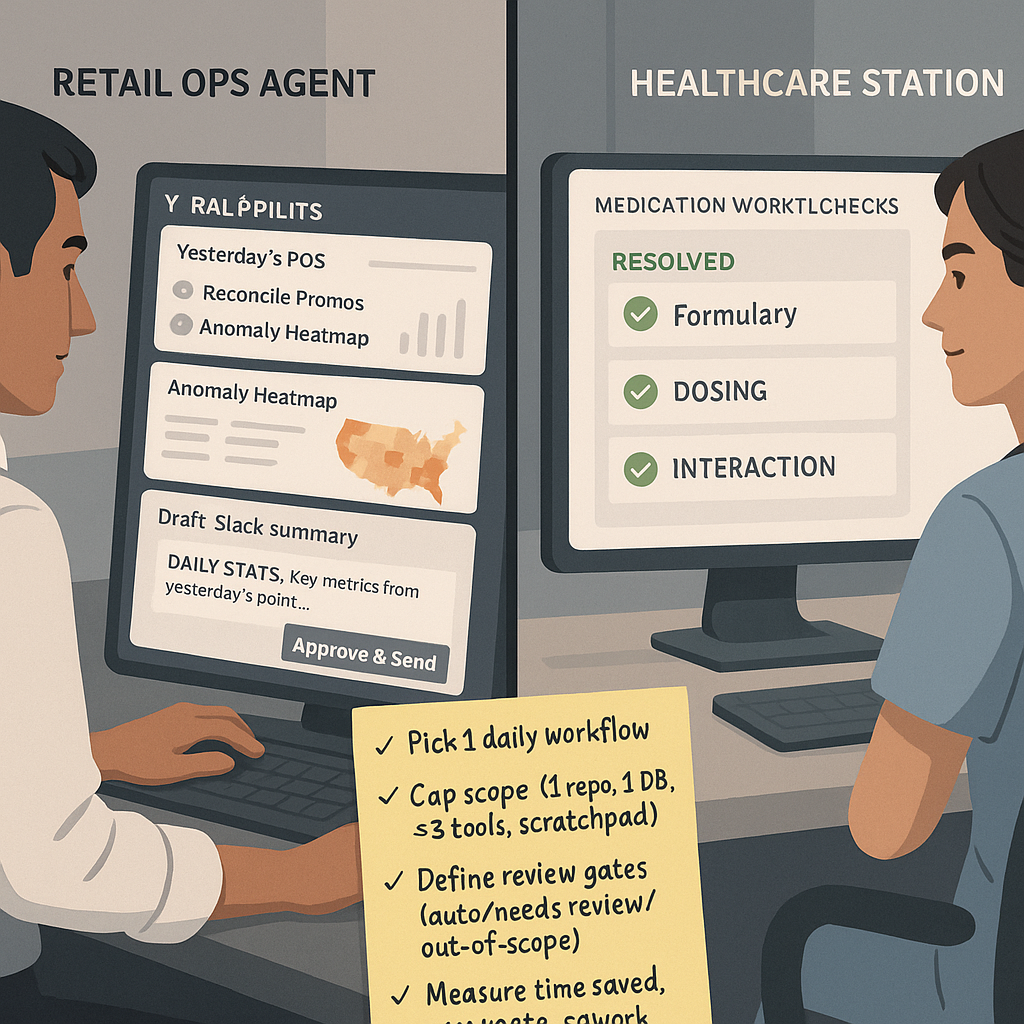

Retail reporting without the 6 am spreadsheets

Seeing URBN test agentic AI for retail reporting on Feb 16, 2026 made me nod. An agent that pulls yesterday’s POS data, reconciles promos, flags anomalies by region, and then posts a 7 am Slack summary is a boring win that stacks fast. If I were cloning it, I’d start with one report the team already trusts, then keep a human as the final sender for two weeks while I measure accuracy and coverage.

There is momentum here, and it is not theoretical. AI News called it out directly.
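During those two weeks with a human as the final sender, I'd score the agent's flags against what the reviewer catches. This is a minimal sketch that assumes "accuracy" means the share of agent flags the reviewer agreed with (precision) and "coverage" means the share of the reviewer's flags the agent also caught (recall). The region:metric keys are made up.

```python
def shadow_scores(agent_flags: set[str], human_flags: set[str]) -> dict[str, float]:
    """Compare agent-flagged anomalies with what the human reviewer flagged.

    accuracy: share of agent flags the reviewer agreed with (precision)
    coverage: share of reviewer flags the agent also caught (recall)
    Empty sets score 1.0, since there was nothing to get wrong or miss.
    """
    agreed = agent_flags & human_flags
    accuracy = len(agreed) / len(agent_flags) if agent_flags else 1.0
    coverage = len(agreed) / len(human_flags) if human_flags else 1.0
    return {"accuracy": accuracy, "coverage": coverage}


# Hypothetical day of flags, keyed as "region:issue".
day = shadow_scores(
    agent_flags={"northeast:promo_mismatch", "west:sales_drop", "south:returns_spike"},
    human_flags={"northeast:promo_mismatch", "west:sales_drop", "midwest:stockout"},
)
print(day)  # accuracy 2/3, coverage 2/3
```

Low accuracy means the summary is noisy. Low coverage means the human still has to do the report by hand. Both need to climb before the agent sends on its own.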

Healthcare that feels safer and faster

Medication workflows are prime agent territory. Finite tools, strict rules, and clear definitions of done. Think formulary checks, dosing guidance, interaction scanning, and structured handoffs. The secret is not just a strong model. It is clean integrations. If your tools are wired, you get speed and safety. If not, you get a chatbot with a stethoscope.
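"Clear definitions of done" can be enforced in code, not just in the prompt. This is a structural sketch only, not clinical logic. The check names, sources, and patient reference are hypothetical placeholders. The point is that the handoff cannot be marked ready unless every wired tool actually answered, and even then it goes to a human.

```python
from dataclasses import dataclass, field

# Hypothetical definition of done: each check must come back from an integrated tool.
REQUIRED_CHECKS = ("formulary", "dosing", "interactions")


@dataclass
class CheckResult:
    name: str
    source: str   # which integrated system answered, e.g. "formulary_api"
    passed: bool
    note: str = ""


@dataclass
class Handoff:
    patient_ref: str
    checks: list[CheckResult] = field(default_factory=list)

    def missing(self) -> list[str]:
        """Required checks that no tool has answered yet."""
        done = {c.name for c in self.checks}
        return [name for name in REQUIRED_CHECKS if name not in done]

    def status(self) -> str:
        if self.missing():
            return "incomplete"              # a tool never answered: not done
        if all(c.passed for c in self.checks):
            return "ready_for_pharmacist"    # still a human sign-off, just faster
        return "needs_clinician_review"


h = Handoff("patient-123", [
    CheckResult("formulary", "formulary_api", True),
    CheckResult("dosing", "dosing_service", True),
])
print(h.status(), h.missing())   # incomplete ['interactions']
h.checks.append(CheckResult("interactions", "interaction_checker", False, "flagged pair"))
print(h.status())                # needs_clinician_review
```

If the interaction tool isn't wired, the status stays "incomplete". That is the difference between an agent and a chatbot with a stethoscope.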

Start small: my 2-week starter kit

I wish someone had handed me this a year ago. Don’t overbuild. Succeed once, then branch.

- Pick one daily workflow with a crisp definition of done. Reporting, reconciliation, or ticket triage work great.

- Hard-bound the agent’s world. One repo, one database, three tools max. Give it working memory and a scratchpad, not your whole lake.

- Decide safety gates up front. What is auto-approved, what needs review, and what is out of scope. Treat it like an API contract.

- Measure boring stuff. Time to complete, error rate, rework rate. If it saves 30 minutes a day, ship it.
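Here is a minimal sketch of the "measure boring stuff" step, with one record per daily run. The 30-minute bar comes from the list above. The 5% error ceiling in `should_ship` is my own added assumption, so tune or drop it. The sample numbers are made up.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Run:
    """One daily run of the workflow."""
    minutes_agent: float    # time to complete with the agent, including human review
    minutes_manual: float   # baseline: how long the same task takes by hand
    had_error: bool         # output was wrong
    reworked: bool          # a human had to redo part of it


def summarize(runs: list[Run]) -> dict[str, float]:
    n = len(runs)
    return {
        "avg_minutes_saved": mean(r.minutes_manual - r.minutes_agent for r in runs),
        "error_rate": sum(r.had_error for r in runs) / n,
        "rework_rate": sum(r.reworked for r in runs) / n,
    }


def should_ship(summary: dict[str, float], min_minutes_saved: float = 30.0,
                max_error_rate: float = 0.05) -> bool:
    # 30 min/day is the bar from the list; the error ceiling is an assumed extra guard.
    return (summary["avg_minutes_saved"] >= min_minutes_saved
            and summary["error_rate"] <= max_error_rate)


runs = [Run(10, 45, False, False), Run(15, 45, False, True),
        Run(12, 45, False, False), Run(20, 50, False, False)]
s = summarize(runs)
print(s, should_ship(s))
```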

How it all connects

Here is how I see it. Qwen3.5 lowers the price of learning, so you iterate more. Standards give you shared language your security team can bless. Pilots in retail and healthcare prove the pattern outside a slide deck. Put together, you get the tech, the playbook, and the guardrails arriving at once. That does not happen often.

What I’d actually build this month

I’d ship a morning ops agent for my team. Inputs are yesterday’s transactions, a KPI config, and access to a messaging channel. Steps are simple: pull data, calculate KPIs, compare to the last 7 days, flag outliers, create two action items if thresholds trip, and ask for human sign-off before posting externally. Guardrails are read-only on prod, write-only to a draft channel, and a 5-minute execution cap. I’d track on-time delivery and human edit count, then fix the top three failure modes over two weeks.
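Here is a sketch of that morning agent's core loop, assuming the data has already been read from prod (so this code never writes there) and using plain lists as stand-ins for the draft and external channels. The z-score outlier rule and its threshold of 2.0 are my own assumptions. The elapsed-time check only runs before posting. A real 5-minute cap should also interrupt hung tool calls, for example with a process-level timeout.

```python
import time
from statistics import mean, pstdev
from typing import Optional

EXECUTION_CAP_S = 5 * 60   # the 5-minute cap from the plan
Z_THRESHOLD = 2.0          # assumed outlier threshold


def flag_outliers(today: dict[str, float], history: dict[str, list[float]]) -> list[str]:
    """Flag KPIs more than Z_THRESHOLD std devs away from their last-7-day mean."""
    flags = []
    for kpi, value in today.items():
        window = history.get(kpi, [])[-7:]
        if len(window) < 2:
            continue                      # not enough history to judge
        mu, sigma = mean(window), pstdev(window)
        if sigma == 0:
            if value != mu:
                flags.append(kpi)         # any change from a flat series is notable
        elif abs(value - mu) / sigma > Z_THRESHOLD:
            flags.append(kpi)
    return flags


def run_morning_report(today, history, draft_channel: list, deadline_s=EXECUTION_CAP_S):
    started = time.monotonic()
    flags = flag_outliers(today, history)
    if time.monotonic() - started > deadline_s:
        raise TimeoutError("execution cap exceeded; nothing posted")
    actions = [f"Investigate {kpi}" for kpi in flags[:2]]   # at most two action items
    draft = {"kpis": today, "flags": flags, "actions": actions, "approved": False}
    draft_channel.append(draft)           # only ever writes to the draft channel
    return draft


def publish_external(draft: dict, approver: Optional[str], external_channel: list) -> bool:
    """Post externally only after a named human signs off."""
    if not approver:
        return False
    draft["approved"], draft["approved_by"] = True, approver
    external_channel.append(draft)
    return True


history = {"revenue": [100, 102, 98, 101, 99, 100, 100],
           "orders": [50, 52, 49, 51, 50, 48, 50]}
drafts, external = [], []
d = run_morning_report({"revenue": 70, "orders": 51}, history, drafts)
print(d["flags"], d["actions"])                  # ['revenue'] ['Investigate revenue']
print(publish_external(d, None, external))       # False: no sign-off, nothing posted
```

Counting how often `publish_external` is called with an edited draft gives you the "human edit count" metric almost for free.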

FAQ

Do I need frontier models for useful agentic AI work?

Usually not. Planning, tool use, and clean retrieval matter more than peak creativity for business workflows. Cheaper, capable models like Qwen3.5 make iteration affordable. Save the heavy hitters for rare edge cases or high-stakes reasoning.
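"Save the heavy hitters for rare cases" works best as an explicit routing rule. This is a minimal sketch. The model identifiers are hypothetical placeholders. The set of routine task kinds and the two-failure escalation rule are my own assumptions.

```python
from dataclasses import dataclass

# Hypothetical model identifiers; substitute whatever your provider exposes.
DEFAULT_MODEL = "cheap-agent-model"
HEAVY_MODEL = "frontier-model"

ROUTINE_KINDS = {"report", "reconcile", "triage"}   # assumed everyday workflows


@dataclass
class Task:
    kind: str
    high_stakes: bool = False
    prior_failures: int = 0


def pick_model(task: Task) -> str:
    if task.high_stakes:
        return HEAVY_MODEL    # high-stakes reasoning goes to the heavy hitter
    if task.kind not in ROUTINE_KINDS or task.prior_failures >= 2:
        return HEAVY_MODEL    # rare edge case, or the cheap model keeps failing
    return DEFAULT_MODEL      # everything else stays cheap


print(pick_model(Task("report")))                  # cheap-agent-model
print(pick_model(Task("triage", prior_failures=2)))  # frontier-model
```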

How do I avoid vendor lock-in with agents?

Keep orchestration thin and hide tools behind stable interfaces. If you can swap a model by changing an adapter and your tests still pass, you are already portable. The standards momentum from Feb 16, 2026 should make this even easier to justify.
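Here is what "swap a model by changing an adapter" can look like, using Python's `typing.Protocol`. The two adapters are toy stand-ins. A real one would wrap a vendor SDK, and the "SUMMARY:" output contract is a hypothetical example of what your tests might check.

```python
from typing import Protocol


class ChatModel(Protocol):
    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Toy adapter; a real one would call a vendor SDK here."""
    def complete(self, prompt: str) -> str:
        return f"SUMMARY: {prompt[:40]}"


class ShoutModel:
    """A second toy adapter, to show swapping without touching orchestration."""
    def complete(self, prompt: str) -> str:
        return "SUMMARY: " + prompt.upper()[:40]


def summarize_kpis(model: ChatModel, kpis: dict[str, float]) -> str:
    # Orchestration depends only on the ChatModel interface, never on a vendor SDK.
    prompt = ", ".join(f"{k}={v}" for k, v in sorted(kpis.items()))
    out = model.complete(f"Summarize: {prompt}")
    if not out.startswith("SUMMARY:"):
        raise ValueError("adapter broke the output contract")
    return out


def contract_test(model: ChatModel) -> bool:
    """The same test runs against every adapter; passing it means swappable."""
    return summarize_kpis(model, {"revenue": 100.0, "orders": 50.0}).startswith("SUMMARY:")


for adapter in (EchoModel(), ShoutModel()):
    print(type(adapter).__name__, contract_test(adapter))
```

The orchestration function never imports a vendor library. Switching providers means writing one new adapter class and rerunning `contract_test`.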

What do I show security to get a fast yes?

Bring a one-pager. Scope, allowed tools, data boundaries, review thresholds, and a full log of tool calls. Ask for help defining red lines. That posture turns a hard no into a design session.
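The "full log of tool calls" is the easiest item on that one-pager to automate. This is a minimal sketch using a standard-library decorator that appends one JSON line per call. The field names are my own choice, and `read_report` is a hypothetical tool. In the demo the log goes to an in-memory stream. In practice you would pass an open append-mode file.

```python
import functools
import io
import json
import time


def audited(tool_name: str, stream):
    """Wrap a tool so every call appends one JSON line: tool, args, status, duration."""
    def wrap(fn):
        @functools.wraps(fn)
        def inner(*args, **kwargs):
            record = {"tool": tool_name, "args": args, "kwargs": kwargs, "ts": time.time()}
            start = time.monotonic()
            try:
                result = fn(*args, **kwargs)
                record["status"] = "ok"
                return result
            except Exception as exc:
                record["status"] = f"error: {exc}"
                raise                                  # log the failure, then re-raise it
            finally:
                record["ms"] = round((time.monotonic() - start) * 1000, 2)
                # default=str keeps non-JSON arguments from crashing the logger
                stream.write(json.dumps(record, default=str) + "\n")
        return inner
    return wrap


# Demo with an in-memory log; in practice: open("tool_calls.jsonl", "a", encoding="utf-8")
log = io.StringIO()


@audited("read_report", log)
def read_report(day: str) -> str:
    return f"report for {day}"


read_report("2026-02-16")
print(log.getvalue())
```

Failed calls are logged too, which is usually the first thing a security reviewer asks about.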

What kills agent pilots the most?

It is rarely the model. It is messy data, flaky tools, or fuzzy definitions of done. Clean those up and your agent starts to look smart very quickly.

My take

The agent era rewards boring workflow wins. Daily reports, reconciliations, queue grooming, policy checks. Feb 16, 2026 felt like a line in the sand for agentic AI. Pick one workflow, give the agent a tiny sandbox, and you will learn more in 10 days than in 10 whitepapers.