Agentic AI crossed a line for me this week. It stopped feeling like a research term and started acting like something I can trust in real workflows, with guardrails, receipts, and real-world stakes.

Quick answer: A cluster of announcements on Feb 15–16, 2026 made agentic AI feel mature: safer workflows in GitHub, retail deployments at Wesfarmers, a hotel’s first AI-led purchase in New Zealand, an OpenAI hire aimed at autonomous agents, and policy-as-code for regulated teams. If you start now, use approvals, spending caps, and logs. Build tiny loops you can review, then add write actions with tight limits.

GitHub just made agent ops safer for beginners

On Feb 16, 2026, GitHub unveiled Agentic Workflows. What mattered to me wasn’t the name. It was the permissioning and stepwise execution inside the place where code already gets reviewed. That means logs, approvals, and a clean paper trail instead of a black box.

I’m spinning up a small triage agent that suggests fixes and opens a pull request with a human approval step. Nothing flashy. Just a repeatable loop where I can see every decision and roll it back if needed.

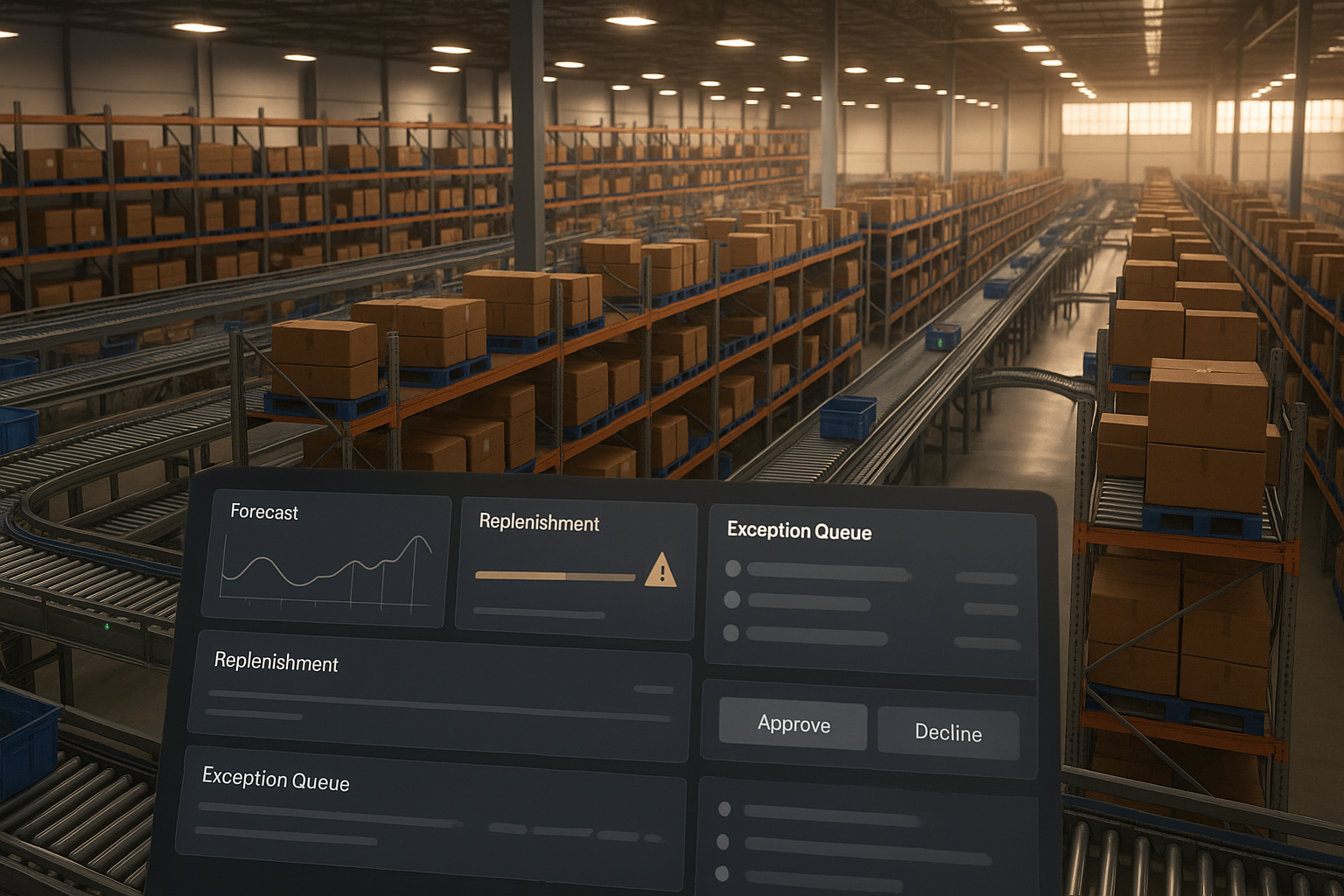

Retail got real: Wesfarmers is putting agents into ops

Also on Feb 16, 2026, Wesfarmers signaled deployments in retail operations. Retail is a pressure cooker for automation. Margins are tight, timing is brutal, and bad replenishment shows up instantly. Seeing a group at that scale announce this publicly tells me the toolchain can finally handle messy realities like inventory, vendor lead times, and customer spikes.

If you run a Shopify, Notion, or ops stack, the patterns are the same: forecasting, triage, exception handling, and carefully scoped actions. Different data, same bones.

New Zealand’s first hotel purchase by an agent

Also on Feb 16, 2026, a video surfaced showing what’s being called New Zealand’s first agentic AI purchase at a hotel. That’s the moment the card came out. An agent didn’t just recommend something. It priced, selected, and confirmed an actual transaction tied to a real service.

My rule here is simple: hard spending caps, approvals over a threshold, and a ledger of attempted vs completed actions. You sleep better and learn faster.
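
Here is a minimal sketch of that rule in Python. It is my own illustration, not how the hotel demo or any vendor does it: the `SpendGuard` class, its field names, and the dollar amounts are all hypothetical. The point is that every attempt lands in the ledger, whether it completed, got held for approval, or hit the cap.

```python
from dataclasses import dataclass, field
from enum import Enum


class Outcome(Enum):
    COMPLETED = "completed"
    NEEDS_APPROVAL = "needs_approval"
    BLOCKED = "blocked"


@dataclass
class SpendGuard:
    """Hypothetical guard: a hard cap, an approval threshold, and a ledger."""
    hard_cap: float            # total the agent may spend, ever
    approval_threshold: float  # single purchases above this need a human
    spent: float = 0.0
    ledger: list = field(default_factory=list)  # attempts AND completions

    def attempt(self, item: str, amount: float, approved: bool = False) -> Outcome:
        if self.spent + amount > self.hard_cap:
            outcome = Outcome.BLOCKED            # cap wins, even if approved
        elif amount > self.approval_threshold and not approved:
            outcome = Outcome.NEEDS_APPROVAL     # hold until a human says yes
        else:
            outcome = Outcome.COMPLETED
            self.spent += amount
        # Record every attempt so attempted vs completed can be compared later.
        self.ledger.append({"item": item, "amount": amount, "outcome": outcome.value})
        return outcome


guard = SpendGuard(hard_cap=200.0, approval_threshold=50.0)
guard.attempt("parking", 20.0)               # small, goes through
guard.attempt("room", 150.0)                 # held for approval
guard.attempt("room", 150.0, approved=True)  # human approved, goes through
guard.attempt("spa", 40.0)                   # would exceed the cap, blocked
```

I check the cap before the approval flag on purpose, so a human "yes" can never push spending past the hard limit.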

OpenAI’s new hire points to autonomous agents

On Feb 15, 2026, OpenAI hired Peter Steinberger, the founder of OpenClaw, in what looks like a focused push toward autonomous agents. Talent moves like this usually show what’s next: stronger tool use, better recovery when steps fail, and cleaner human-in-the-loop hooks. The gaps that force you to babysit today will keep shrinking.

If you’re new, anchor around structured tool use. Even a simple chain (search docs, fetch a file, summarize to a checklist, draft the email) will teach you most of what scales later.
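
To make that chain concrete, here is a rough sketch with stubbed tools. Everything in it is made up for illustration: the four functions, the tiny in-memory "docs", and the `run_steps` helper stand in for whatever real tools you wire up. What I care about is the shape: each step takes the previous step's output, and the runner keeps a trace and stops cleanly at the first failure.

```python
def search_docs(query):
    # Stub index (hypothetical data): topic -> file path.
    docs = {"refund policy": "refunds.md", "shipping": "shipping.md"}
    return [path for topic, path in docs.items() if query in topic]


def fetch_file(paths):
    # Stub file store; raises IndexError if the search found nothing.
    files = {"refunds.md": "Refunds within 30 days. Receipt required."}
    return files[paths[0]]


def summarize_to_checklist(text):
    # Naive "summary": one checklist item per sentence.
    return [f"[ ] {s.strip()}" for s in text.split(".") if s.strip()]


def draft_email(checklist):
    return "Hi,\n\nBefore we proceed:\n" + "\n".join(checklist)


STEPS = [
    ("search_docs", search_docs),
    ("fetch_file", fetch_file),
    ("summarize_to_checklist", summarize_to_checklist),
    ("draft_email", draft_email),
]


def run_steps(steps, first_input):
    """Run steps in order; return (result, trace). Stop at the first failure."""
    trace, value = [], first_input
    for name, fn in steps:
        try:
            value = fn(value)
        except Exception as exc:
            trace.append({"step": name, "ok": False, "error": repr(exc)})
            return None, trace
        trace.append({"step": name, "ok": True, "output": value})
    return value, trace


email, trace = run_steps(STEPS, "refund")
print(email)
```

If I pass a query with no matching doc, the run stops at `fetch_file` and the trace shows exactly which step failed, which is the kind of predictable failure I want to see handled before I trust anything bigger.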

Governance grew up: policy as code is here

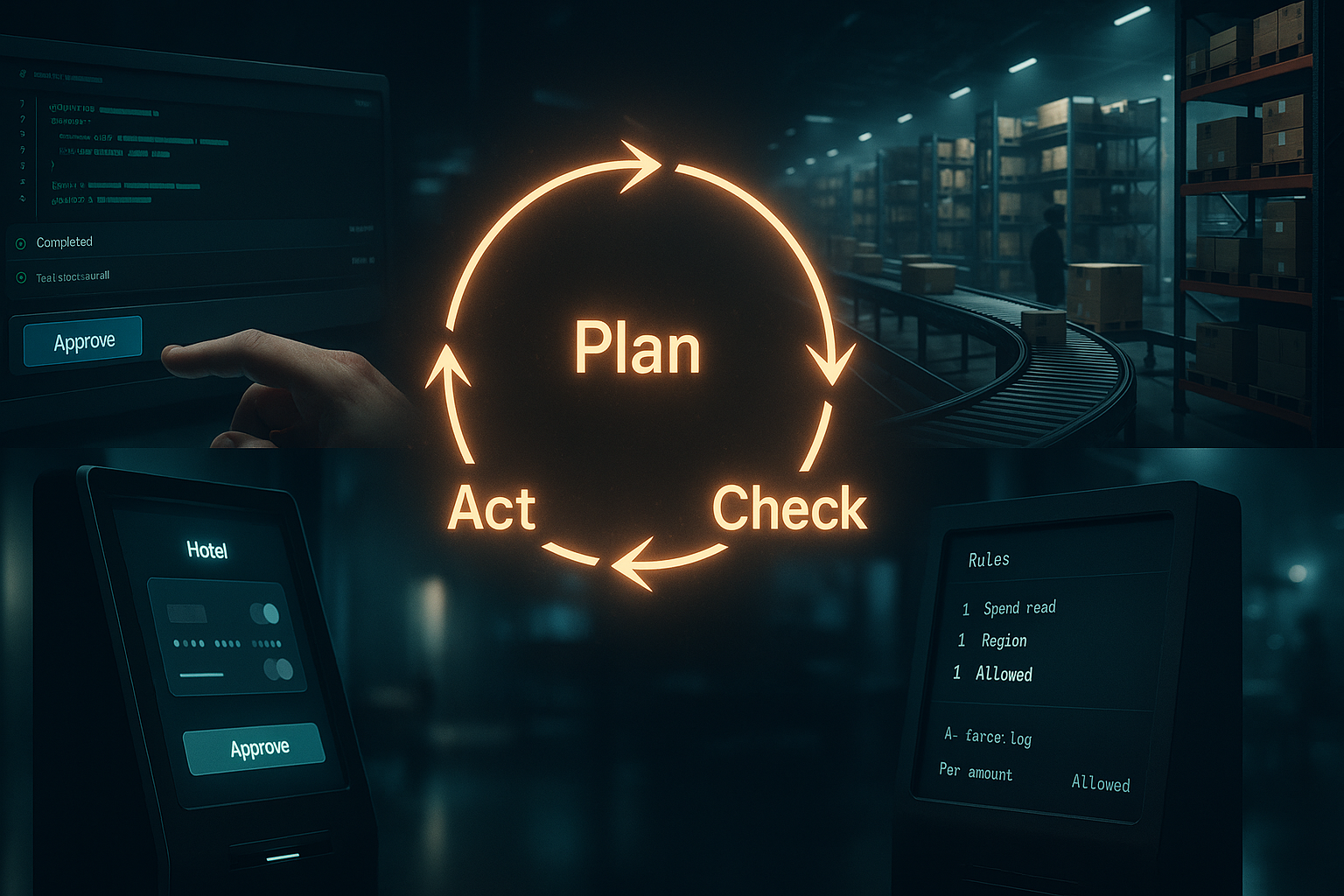

Also on Feb 15, 2026, Kyndryl introduced a policy-as-code model aimed at agentic AI in regulated environments. This is the boring unlock that actually matters. When policies are code, agents, tools, and teams all follow the same rules. Update the rule, and behavior changes everywhere that rule is enforced. That’s how you stop the gap between docs and reality from swallowing you.

You don’t need a giant framework to start. Write three rules you care about, and force every action through those checks. Block a domain, limit data fields, require metadata before any request. Small, strict, and visible.
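
Here is what those three rules could look like as plain Python. This is my own toy version, not Kyndryl's model or any real policy engine: the blocked domain, the allowed field names, and the required metadata keys are placeholders I picked for the example.

```python
from urllib.parse import urlparse

# All three sets are hypothetical values for illustration.
BLOCKED_DOMAINS = {"example-blocked.test"}
ALLOWED_FIELDS = {"order_id", "sku", "quantity"}
REQUIRED_METADATA = {"requested_by", "reason"}


def check_domain(action):
    host = urlparse(action.get("url", "")).hostname or ""
    return f"domain blocked: {host}" if host in BLOCKED_DOMAINS else None


def check_fields(action):
    extra = set(action.get("payload", {})) - ALLOWED_FIELDS
    return f"fields not allowed: {sorted(extra)}" if extra else None


def check_metadata(action):
    missing = REQUIRED_METADATA - set(action.get("metadata", {}))
    return f"missing metadata: {sorted(missing)}" if missing else None


POLICIES = [check_domain, check_fields, check_metadata]


def evaluate(action):
    """Return a list of violations; an empty list means the action may run."""
    return [msg for rule in POLICIES if (msg := rule(action)) is not None]


action = {
    "url": "https://example-blocked.test/export",
    "payload": {"sku": "A1", "email": "someone@example.test"},
    "metadata": {"requested_by": "ops-agent"},
}
for violation in evaluate(action):
    print(violation)
```

Because every rule is just a function in one list, changing a rule changes behavior for every caller that goes through `evaluate`, and the violations read like sentences I can paste into a review.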

How I’m rolling this out now

I’m treating this week as a green light to tighten my stack: smaller actions, tighter loops, clear oversight. If you want to start this weekend, here’s the exact path I’m taking:

- Pick one weekly task and write it as a checklist your agent can follow.

- Run in a tool with approvals and logs. GitHub’s Agentic Workflows is perfect if you’re technical.

- Set hard limits early: spending caps, allowed apps, file types, and timeouts.

- Instrument everything. Save inputs, decisions, and outputs for fast debugging.

- Start read-only, then enable write actions with human approval.
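
The last three steps can be sketched as a small tool runner. This is a hypothetical helper of my own (`ToolRunner`, `register`, and the `approve` callback are not from GitHub or any library), and it leaves out timeouts and spending caps to stay short. It starts read-only, asks a human before any write, and logs the input, decision, and output of every call.

```python
import time


class ToolRunner:
    """Hypothetical runner: read-only by default, writes need human approval."""

    def __init__(self, approve, allow_writes=False):
        self.approve = approve          # callback: (tool_name, kwargs) -> bool
        self.allow_writes = allow_writes
        self.tools = {}                 # name -> (function, writes?)
        self.log = []                   # one entry per call, run or denied

    def register(self, name, fn, writes=False):
        self.tools[name] = (fn, writes)

    def run(self, name, **kwargs):
        fn, writes = self.tools[name]
        entry = {"ts": time.time(), "tool": name, "input": kwargs}
        if writes and not self.allow_writes:
            entry["decision"] = "denied_read_only"
        elif writes and not self.approve(name, kwargs):
            entry["decision"] = "denied_by_reviewer"
        else:
            entry["decision"] = "ran"
            entry["output"] = fn(**kwargs)
        self.log.append(entry)
        return entry


notes = []
runner = ToolRunner(approve=lambda name, kwargs: len(kwargs["text"]) < 40)
runner.register("count_notes", lambda: len(notes))
runner.register("add_note", lambda text: notes.append(text) or len(notes), writes=True)

runner.run("count_notes")                    # read: runs
runner.run("add_note", text="restock SKU")   # write while read-only: denied
runner.allow_writes = True
runner.run("add_note", text="restock SKU")   # write, reviewer approves: runs
```

In real use the `approve` callback would be a person clicking a button, not a lambda. The stub just keeps the example runnable.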

My quiet litmus tests before I trust an agent

Can it explain its plan in plain language I agree with? Does it handle a predictable failure without spiraling? If I pause it, do I know exactly where to resume? Does it leave me a diff, doc, or structured log to review? If any of these are a no, it stays in the sandbox.

FAQ

What is agentic AI in simple terms?

Agentic AI is software that plans, takes actions with tools, and checks its own work, usually with guardrails like approvals and logs. It’s not a chatbot reply. It’s a loop that can do things in your apps or systems while keeping you in control.

Is agentic AI safe to use at work?

It can be, if you enforce limits. Use policy-as-code where possible, require approvals for risky steps, and log every action. Start with read-only access and graduate to write privileges only after the loop proves reliable.

Where should beginners start?

Pick a boring weekly task and codify it as a checklist. Use a workflow that supports reviews and rollbacks, like GitHub’s approach announced on Feb 16, 2026. Keep scope tiny, add observability, then expand slowly.

What about costs and unexpected purchases?

Set monthly caps, per-action limits, and human approvals over a threshold. In tests where agents can spend, I keep a ledger of attempts vs approvals. The New Zealand hotel example from Feb 16, 2026 is a clear reminder to keep your hands on the wheel.

How fast is this evolving?

Faster than most teams expect. The cluster of moves on Feb 15–16, 2026, from GitHub’s safer workflows to retail deployments and OpenAI’s hire, signals a shift from demos to dependable systems. Plan for tighter loops and better tool use every month.

My take

This week felt like the vibe shift. GitHub aligned safety with speed. Wesfarmers showed operations are ready. A New Zealand hotel let an agent make a real purchase. OpenAI’s hire and Kyndryl’s policy-as-code closed the loop on reliability. If you’ve been waiting for a moment to learn agentic AI, this is it. Start tiny, keep receipts, and grow with intent.