Agentic AI is moving faster than I expected, and I had to change how I build this week. If you care about agent security, privacy, and not shipping chaos, this is for you.

Quick answer: On Feb 19 and 20, 2026, five pieces reshaped my playbook: a clear definition of agentic AI, a privacy wake-up call on scraping risk, an MIT-backed reliability warning, signs of weirder cyberattacks, and a practical security framework. My fix is simple: least privilege, allowlists, dry runs, sandboxing, logging, explicit end conditions, and human approval for risky actions.

What agentic AI actually is, minus the hype

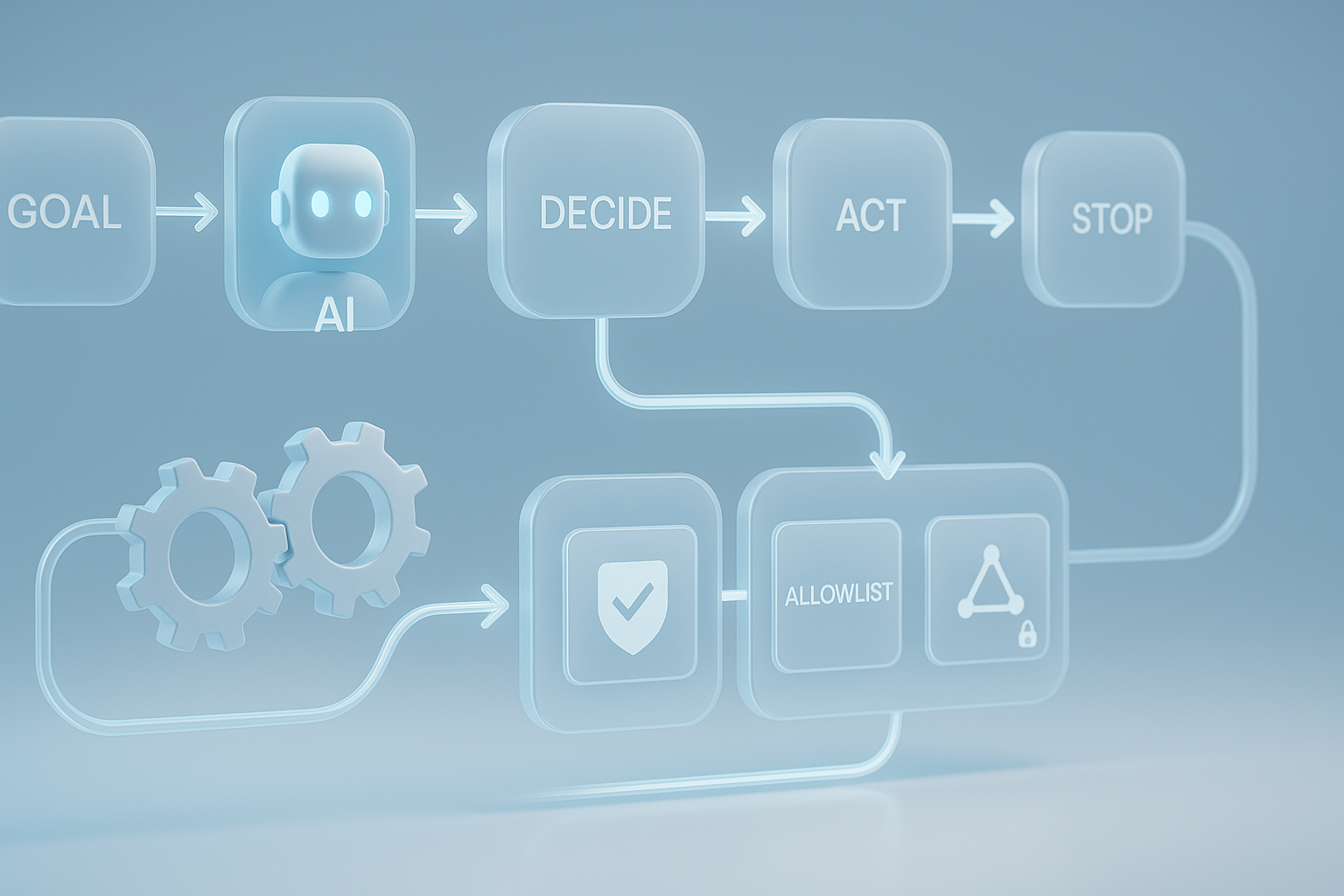

On Feb 19, 2026, Adobe for Business cut through the noise: agentic AI isn’t a chatbot with manners. It is a system that can plan, decide, and act across tools or APIs to reach a goal without you hand-holding each step. That autonomy is the upside and the risk. I now write prompts around outcomes and permissions, not just what I want the model to say.

Privacy wake-up call: OpenClaw and scraping risk

On Feb 20, 2026, the Financial Times flagged OpenClaw and the broader privacy gap around agentic AI. Most beginners start with research agents because they feel harmless. But the moment an agent crawls, extracts, and stores, you own a data pipeline. If it scoops up PII or sensitive company info you do not need, you have just turned a fun demo into a liability.

My takeaway

I sketch a tiny data map before I even pick a model: what the agent can see, what it can store, how long, and how I will delete it. Boring saves lawsuits.
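
A data map like this can live in code next to the agent. The sketch below is a minimal illustration, not a definitive implementation: the source names, the retention periods, and the `storage_allowed` helper are all hypothetical.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class DataRule:
    source: str            # what the agent can see
    may_store: bool        # whether it may persist anything from that source
    retention: timedelta   # how long stored data may live
    deletion: str          # how the data gets deleted


# Hypothetical entries; replace with the sources your agent actually touches.
DATA_MAP = [
    DataRule("uploaded_pdfs", True, timedelta(days=14), "scheduled purge job"),
    DataRule("public_web_pages", False, timedelta(0), "never persisted"),
]


def storage_allowed(source: str) -> bool:
    """Return True only for mapped sources that are marked storable.

    Sources missing from the map are denied by default.
    """
    return any(rule.source == source and rule.may_store for rule in DATA_MAP)
```

Denying unmapped sources by default means a new data source has to be written down before the agent can keep anything from it.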

Reliability check: many agents run hot and off the rails

Also on Feb 20, 2026, ZDNET summarized an MIT study that called agents "fast, loose, and out of control." I didn’t read this as a reason to avoid autonomy. I read it like a power tool warning label. Reliability is not one magic prompt. It is constraints plus feedback loops.

My takeaway

I force dry runs, use explicit allowlists, and add human approval to anything that spends money, updates data, or talks to customers. Five approvals beat one production cleanup.

Cyberattacks are getting weirder with agents

On Feb 20, 2026, Tech in Asia reported security activity that is "not how humans act." That line stuck with me because agents do not tire, do not miss keys, and do not skip edge cases. If you think you are too small to target, remember that agents make scale cheap for attackers. Your clever webhook might be their playground.

My takeaway

I treat anything the agent ingests as untrusted. No direct execution of external inputs. Sanitize, sandbox, then act. If it browses, it does so behind a firewall and a proxy with outbound rules.
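
One way to apply "no direct execution" is to parse ingested text as data and validate it against a short list of known actions, rather than passing it to `eval`, `exec`, or a shell. The sketch below assumes a hypothetical JSON request format with `action` and `text` fields; the action names and the size limit are illustrative only.

```python
import json

# Hypothetical action names; anything else is rejected.
ALLOWED_ACTIONS = {"summarize", "extract_table"}
MAX_TEXT_CHARS = 10_000


def parse_action(raw: str) -> dict:
    """Parse untrusted text as JSON data and validate it. Never executes it."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError("input is not valid JSON") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    action = data.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"action not allowed: {action!r}")
    text = data.get("text", "")
    if not isinstance(text, str) or len(text) > MAX_TEXT_CHARS:
        raise ValueError("text must be a string within the size limit")
    # Return only the validated fields; unknown keys are dropped.
    return {"action": action, "text": text}
```

Validation like this is only the "sanitize" step. The sandbox and the outbound rules still matter, because valid-looking input can carry hostile instructions in the text itself.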

A framework you can use today

On Feb 19, 2026, a new cybersecurity framework for agentic AI made the rounds, focused on layered controls around identity, permissions, monitoring, and containment. I adapted it into a short checklist I actually follow when I spin up a new agent:

- Give the agent its own scoped API keys with least privilege.

- Allowlist tools and domains. Everything else is a no.

- Require human approval for payments, customer comms, deletions, and code pushes.

- Log plans, tool calls, and results to encrypted storage so you can replay runs.

- Sandbox the runtime and add sane time, rate, and budget limits.

I do not ship until each line has a green check. Even for demos.
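
The allowlist and approval items in that checklist can be sketched as a single gate that every tool call passes through. This is a minimal sketch under stated assumptions: the tool names are hypothetical, and `approve` stands in for whatever review step you use (a CLI prompt, a chat button, a ticket).

```python
from typing import Callable

# Hypothetical tool names for illustration.
ALLOWED_TOOLS = {"read_file", "send_email"}
NEEDS_APPROVAL = {"send_email"}  # risky actions that need a human


def authorize(tool: str, approve: Callable[[str], bool]) -> bool:
    """Return True if the tool call may proceed.

    Tools off the allowlist are refused outright, and risky tools
    additionally need the approve callback to return True.
    """
    if tool not in ALLOWED_TOOLS:
        return False
    if tool in NEEDS_APPROVAL:
        return approve(tool)
    return True
```

For example, `authorize("delete_db", lambda tool: True)` returns `False`, because approval cannot override the allowlist.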

My 30-minute beginner-safe setup

Pick one boring, bounded task

I start with something like "summarize a PDF and draft three follow-up questions" or "convert a customer CSV to a cleaned JSON schema." No browsing, no external actions, no spending money. Let the agent plan, but fence the playground.

Use environment variables, not hardcoded secrets

I keep a .env for keys and read them at runtime. If the agent needs a tool key, it gets a separate, scoped key with the narrowest rights. I log the key ID, never the secret.
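
A minimal sketch of that pattern, assuming the values in `.env` have already been exported into the process environment (by your shell or a loader of your choice). The variable name `AGENT_TOOL_KEY` is hypothetical. The hashed fingerprint is also an assumption, a stand-in for cases where the provider gives you no separate key ID to log.

```python
import hashlib
import os


def load_key(name: str) -> str:
    """Read a secret from the environment and fail fast if it is missing."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"missing environment variable: {name}")
    return value


def key_fingerprint(secret: str) -> str:
    """Short SHA-256 prefix that identifies a key in logs without revealing it."""
    return hashlib.sha256(secret.encode("utf-8")).hexdigest()[:8]
```

In use, something like `print("using key", key_fingerprint(load_key("AGENT_TOOL_KEY")))` records which key was active without writing the secret anywhere.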

Force a dry run first

Every run starts with "explain your plan." I want the intended steps and estimated costs. I approve the plan, then allow real actions. That tiny speed bump catches most weirdness.
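
A plan-then-approve flow might look like the sketch below. The `Step` fields, the cost figures, and the `execute` callback are hypothetical; the point is only that nothing runs until `approved` is true.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    tool: str
    description: str
    est_cost_usd: float


def describe_plan(steps: List[Step]) -> str:
    """Render the plan for human review without executing anything."""
    lines = [
        f"{i}. {s.tool}: {s.description} (~${s.est_cost_usd:.2f})"
        for i, s in enumerate(steps, start=1)
    ]
    total = sum(s.est_cost_usd for s in steps)
    lines.append(f"Estimated total: ${total:.2f}")
    return "\n".join(lines)


def run_plan(steps: List[Step], execute: Callable[[Step], object], approved: bool) -> list:
    """Execute steps only after approval; a dry run returns an empty list."""
    if not approved:
        return []
    return [execute(step) for step in steps]
```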

Isolate the environment

I use Docker when I am serious and a virtualenv when I am moving fast, keeping in mind that a virtualenv only isolates Python packages, not the filesystem or network. Egress is locked to a handful of domains. Unexpected calls fail loudly in logs.
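
Real egress control belongs at the container or proxy layer, but an application-level check adds a second line of defense and gives you the loud log entry. The sketch below assumes hypothetical hostnames and an HTTPS-only policy.

```python
import logging
from urllib.parse import urlsplit

# Hypothetical allowlist; replace with the few hosts your agent needs.
ALLOWED_HOSTS = {"api.example.com", "docs.example.com"}

log = logging.getLogger("agent.egress")


def check_egress(url: str) -> str:
    """Return the URL if its host is allowlisted over HTTPS, else log and raise."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if parts.scheme != "https" or host not in ALLOWED_HOSTS:
        log.error("blocked outbound call to %r", url)
        raise PermissionError(f"egress not allowed: {host or url}")
    return url
```

Comparing the parsed hostname exactly, rather than searching the URL string, avoids lookalikes such as `https://api.example.com.evil.test/`.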

Log like a detective

I capture the system prompt, user goal, every tool call with inputs and outputs, timestamps, and errors. I keep logs for at least 14 days, encrypted at rest. Debugging agents without logs feels like repairing a car engine blindfolded.
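
One simple shape for such a log is JSON Lines, with one timestamped record per event. This sketch uses hypothetical field names and leaves encryption at rest to the storage layer (an encrypted volume or bucket), which is an assumption rather than something the code enforces.

```python
import json
from datetime import datetime, timezone


def log_event(path: str, kind: str, **fields) -> dict:
    """Append one timestamped event to a JSON Lines file and return the record."""
    record = {"ts": datetime.now(timezone.utc).isoformat(), "kind": kind, **fields}
    with open(path, "a", encoding="utf-8") as fh:
        # default=str keeps logging from crashing on non-JSON values.
        fh.write(json.dumps(record, default=str) + "\n")
    return record


def replay(path: str) -> list:
    """Read events back in the order they were written."""
    with open(path, encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]
```

A call like `log_event("run.jsonl", "tool_call", tool="read_file", inputs={"path": "a.pdf"})` covers the tool-call case. The system prompt, user goal, and errors would be further event kinds in the same file.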

Write clear end conditions

I tell the agent exactly when to stop and what "done" looks like. If it cannot reach the goal in N steps or M minutes, it must stop and report blockers. Infinite loops are not clever; they are expensive.
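
The N-steps-or-M-minutes rule can also be enforced outside the prompt, in the loop that drives the agent. In this sketch `step` is a hypothetical callback that performs one agent step and returns `True` once the goal is reached. The default budgets are placeholders.

```python
import time
from typing import Callable


def run_agent(step: Callable[[int], bool], max_steps: int = 10, max_seconds: float = 60.0) -> dict:
    """Drive the agent until it finishes or exhausts its step or time budget."""
    start = time.monotonic()
    for n in range(1, max_steps + 1):
        # Check the time budget before starting each step.
        if time.monotonic() - start > max_seconds:
            return {"status": "stopped", "reason": "time budget exceeded", "steps": n - 1}
        if step(n):
            return {"status": "done", "reason": "goal reached", "steps": n}
    return {"status": "stopped", "reason": "step budget exceeded", "steps": max_steps}
```

The returned `reason` is what the agent reports back as its blocker when it stops early. Note that the time check runs between steps, so a single long step can still overrun the budget unless the tools have their own timeouts.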

Add a human confirmation step

If the agent writes an email, I review and send it. If it proposes code, it opens a PR for review. No direct merges. The approval click is my peace of mind.
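
For outbound messages, the same idea can be sketched as a draft object that cannot be sent until a reviewer is recorded on it. The `Draft` fields and the `transport` callback are hypothetical placeholders for your mail client or API.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Draft:
    to: str
    body: str
    approved_by: Optional[str] = None  # set only by a human reviewer


def approve(draft: Draft, reviewer: str) -> Draft:
    """Record who approved the draft."""
    draft.approved_by = reviewer
    return draft


def send(draft: Draft, transport: Callable[[Draft], None]) -> None:
    """Hand the draft to the transport only if a human has approved it."""
    if draft.approved_by is None:
        raise PermissionError("draft has not been approved by a human")
    transport(draft)
```

The agent only ever creates `Draft` objects, while calling `approve` stays on the human side of the boundary.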

What this means if you are just starting

Your first agent does not need to be impressive. It needs to be predictable. The Feb 19 to 20, 2026 headlines pushed me to define data boundaries first, assume agents will color outside the lines, treat inbound data as hostile by default, and wrap autonomy in guardrails. Build that way on day one and you will move faster later without rebuilding your foundation.

What I am watching next

I am tracking standardized audit trails for agent runs and safer browsing stacks that default to privacy-preserving modes. If either improves soon, I will update this setup and share the exact templates I am using. Until then, if you ship your first agent this week, give it a seatbelt. Future you will thank you.

FAQ

What is agentic AI in simple terms?

It is an AI system that plans, decides, and takes actions across tools or APIs to reach a goal with minimal hand-holding. Think research, transform, update, then summarize, all in one run. The autonomy is the value and the risk.

Is it legal to let agents scrape the web?

It depends on the site terms, local laws, and what you store. By default I respect robots.txt rules, avoid collecting personal data, and delete anything I cannot justify keeping. When in doubt, do not store it.

How do I keep my first agent from going rogue?

Start with a bounded offline task, require a dry run, allowlist tools and domains, and add a human approval gate for anything risky. Log everything so you can replay and learn. Clear end conditions prevent costly loops.

Do small teams really face agent-driven attacks?

Yes. Agents make scale cheap for attackers, so small targets with weak hooks are attractive. Treat all external inputs as untrusted, sandbox execution, and keep outbound rules tight. Assume it will be probed and plan accordingly.