Agentic AI just clicked for me in a very real way. On March 16, 2026, four updates dropped within hours and forced me to change my plan for the rest of the year. If you’ve been agent-curious, this was the day the training wheels came off.

Quick answer: Agentic AI went mainstream on March 16, 2026, with PCs leaning into “Agent Computers” (ITPro), enterprise-scale tooling from Nutanix (Techzine Global), and a consumer Health AI Agent from Amazon (HLTH). My move: start a tiny local agent with tight permissions, full logging, and human review, then scale when it’s boringly reliable.

PCs are quietly becoming “Agent Computers”

On March 16, ITPro reported AMD’s view that AI on the PC has crossed an important line and that “Agent Computers” are the next big breakthrough. That hit me. This isn’t just chat on a laptop. It’s task-driven agents running locally with real tools, memory, and plans.

Local agents feel different. They’re faster, they keep more data on your machine, and they unlock everyday workflows that actually finish the job. Think inbox triage that files notes, research that saves citations as you read, or meeting helpers that summarize and schedule without spraying data across the internet.

If agents sounded like complicated cloud orchestration, this brings the starting line back to your desk. You don’t need a monster GPU to learn the patterns. You need one focused problem, a small agent, and good defaults.

Enterprises just got a scaling button

Also on March 16, Techzine Global covered Nutanix introducing a platform for large-scale agentic AI. That’s the boring but vital stuff I care about: orchestration, permissioning, observability, cost controls, and rollback when an agent drifts.

Why I care even as a solo builder: the stack is maturing. If I instrument a small workflow agent properly now, those habits translate when the question becomes “can we roll this to the team?” It’s not just prompts anymore. It’s versioning, logs, metrics, and safe defaults.

A consumer health agent just went live

HLTH reported that Amazon launched a Health AI Agent on its website on March 16 and expanded free virtual care to as many as 200 million Prime members. That puts a real agent in a high-stakes domain, right where people already are.

I don’t read this as replacing doctors. I see a workflow pattern that will spread: intake, triage, smart routing, and follow-up, with clear escalation. Expect the same approach in legal intake, benefits navigation, IT support, and complex shopping.

The safety clock is ticking a little louder

On the same day, IMD’s AI Safety Clock reportedly moved closer to midnight, citing agentic AI going mainstream and increasing weaponization. I’m not here to be dramatic. I’m here to be careful. Autonomy multiplies outcomes, good and bad.

My rule now is simple: define hard limits, log every run, scope access narrowly, and treat credentials like they’re radioactive. Safety is a feature, not a tax.
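
Here is a minimal sketch of how that rule could look in code. It is not tied to any particular agent framework: `ToolGate`, `LimitExceeded`, and the `max_steps` budget are hypothetical names I made up for illustration. The idea is that every tool call passes through one gate that checks an allowlist, enforces a hard step limit, and writes a log line. It deliberately logs the tool name only, never the arguments, so credentials don't leak into logs.

```python
import logging

logger = logging.getLogger("agent.runs")


class LimitExceeded(Exception):
    """Raised when the agent tries to go past a hard limit."""


class ToolGate:
    """Wraps tool calls with an allowlist, a step budget, and one log line per call."""

    def __init__(self, allowed_tools, max_steps):
        self.allowed_tools = dict(allowed_tools)  # tool name -> callable
        self.max_steps = max_steps                # hard limit for one run
        self.steps_used = 0

    def call(self, name, *args, **kwargs):
        # Narrow scope: anything not explicitly allowed is refused.
        if name not in self.allowed_tools:
            logger.warning("blocked tool=%s reason=not_allowed", name)
            raise PermissionError(f"tool {name!r} is not on the allowlist")
        # Hard limit: stop once the step budget is used up.
        if self.steps_used >= self.max_steps:
            logger.warning("blocked tool=%s reason=step_budget", name)
            raise LimitExceeded(f"step budget of {self.max_steps} used up")
        self.steps_used += 1
        # Log the tool name and step count only, not the arguments.
        logger.info("call tool=%s step=%d", name, self.steps_used)
        return self.allowed_tools[name](*args, **kwargs)
```

With something like this in place, adding a capability means adding one entry to the allowlist, which keeps each new permission a visible, reviewable change.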

How I’d start this week

My goal isn’t a sci-fi butler. I want a reliable hour back every day. I picked an annoying loop I do three times a week: pull new customer emails, tag by intent, draft quick replies, and create tasks for the tricky ones.

The tiny system I shipped

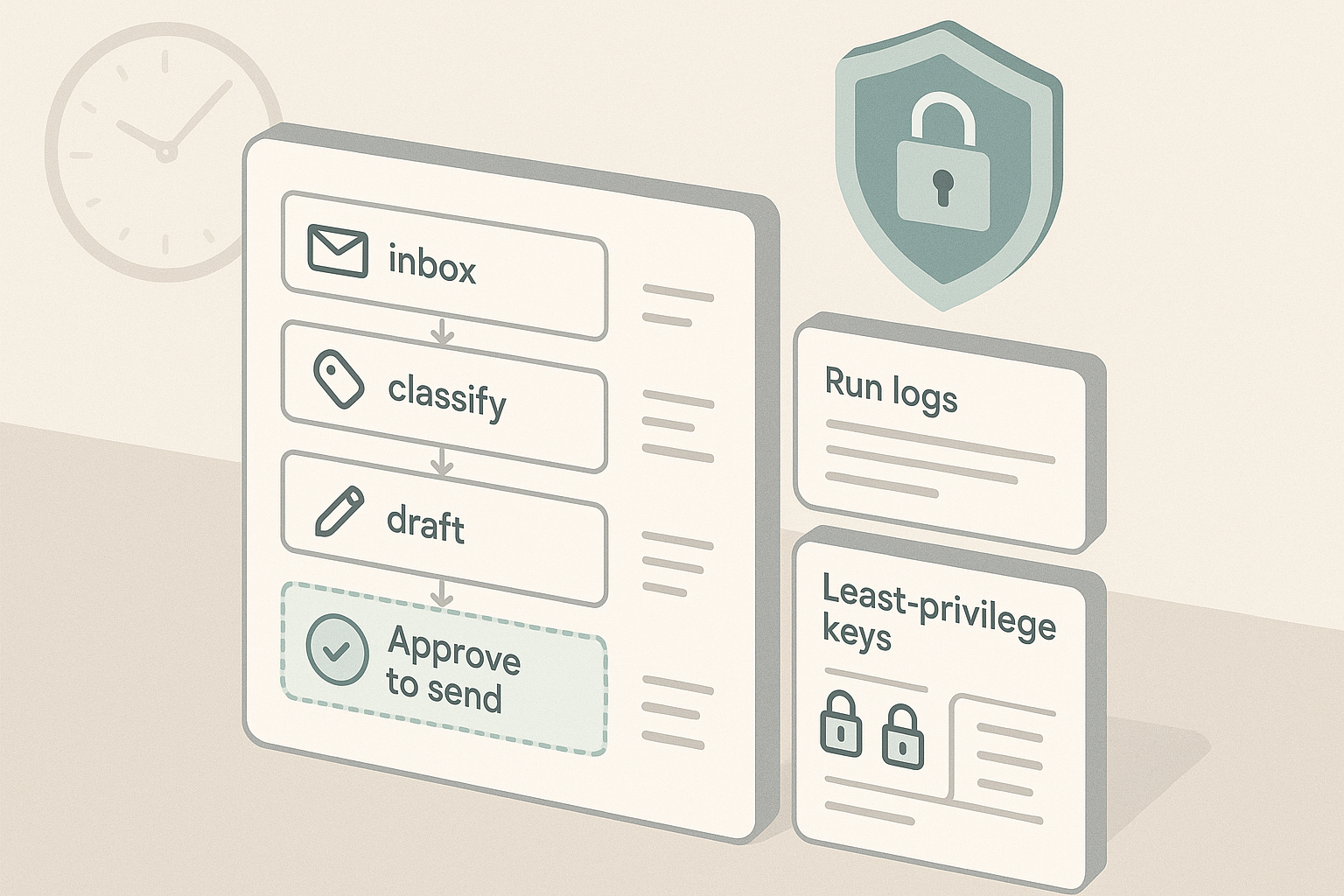

I run a small local agent that watches a single inbox label during work hours. It classifies, drafts, and files. Permissions are minimal. Every run is logged. Nothing sends without a click. That’s it. Day one felt lighter, and I didn’t worry about a rogue send.
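
To make the shape concrete, here is a rough sketch of the classify, draft, and approve-before-send flow. It is not my actual code. The keyword table stands in for a model call, and `Draft`, `Outbox`, `classify`, and `triage` are hypothetical names. The part that matters is that the only path to sending checks a flag that only a human sets.

```python
from dataclasses import dataclass, field

# Hypothetical keyword table standing in for a model call.
INTENT_KEYWORDS = {
    "billing": ("invoice", "refund", "charge"),
    "bug": ("error", "crash", "broken"),
}


@dataclass
class Draft:
    email_id: str
    intent: str
    body: str
    approved: bool = False  # set to True only by a human click


@dataclass
class Outbox:
    sent: list = field(default_factory=list)

    def send(self, draft):
        # The only path to sending checks the approval flag first.
        if not draft.approved:
            raise PermissionError("draft needs human approval before sending")
        self.sent.append(draft.email_id)


def classify(text):
    """Return the first intent whose keywords appear in the text, else 'other'."""
    lowered = text.lower()
    for intent, words in INTENT_KEYWORDS.items():
        if any(word in lowered for word in words):
            return intent
    return "other"


def triage(email_id, text):
    """Tag an email by intent and prepare an unapproved draft reply."""
    intent = classify(text)
    body = f"Thanks for your message. I've tagged it as '{intent}' and will follow up."
    return Draft(email_id=email_id, intent=intent, body=body)
```

Calling `Outbox().send(triage("m1", "Please refund my invoice"))` raises `PermissionError` until someone sets `approved = True` on the draft, which is the whole point.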

When it held steady, I added one step up the chain: drop a CRM note or place a calendar hold. Each capability is boring, reversible, and logged.

The tooling I reach for

You don’t need a fancy stack. A lightweight agent framework, a dependable model, and tools you already trust will do. If you’re non-technical, pair a general AI assistant with automation tools and start read-only. If you write code, pick a framework with tool use, retries, and memory, but avoid premature complexity. Confidence beats cleverness.

Beginners’ checklist I wish I had

- Define a narrow mission and a hard stop, including when to hand off to a human.

- Keep a human in the loop for anything customer-facing until you’ve reviewed 100-plus runs without surprises.

- Log everything. Even a plain text log is gold when things get weird.

- Start with least-privilege access and add one permission at a time.

- Run a weekly agent retro to prune prompts and remove steps that don’t earn their keep.
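
The human-in-the-loop item is the easiest one to let slide, so here is one way it could be enforced in code. This is a sketch under my own assumptions: `ReviewTracker` is a hypothetical helper, and resetting the streak after any surprise is my choice, not something the checklist requires.

```python
from dataclasses import dataclass

CLEAN_RUNS_REQUIRED = 100  # threshold taken from the checklist above


@dataclass
class ReviewTracker:
    """Counts consecutive human-reviewed runs that had no surprises."""

    clean_streak: int = 0

    def record_review(self, surprised):
        # Assumption: any surprise resets the streak to zero.
        self.clean_streak = 0 if surprised else self.clean_streak + 1

    @property
    def needs_human(self):
        # Stay in human-review mode until the streak reaches the threshold.
        return self.clean_streak < CLEAN_RUNS_REQUIRED
```

The agent would check `needs_human` before any customer-facing action and route to review whenever it is `True`.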

My take on the March 16 cluster

AMD’s “Agent Computers” framing told me to expect better local UX. Nutanix signaled the backend is ready enough that my effort here compounds. Amazon’s health agent proved high-friction, privacy-heavy workflows are in play now, not someday. Together, they point to a simple roadmap: start local, learn to make agents predictable, then promote the workflow when it proves itself.

What I’m doing this week

I’m shipping one narrow inbox agent with a hard rule: it can draft and tag, but it cannot send. I set up run logs and a daily digest of actions. If it’s stable for a week, I’ll let it file support tickets and create follow-up tasks. No new hardware spree. No orchestra of agents. Just one helper that earns trust.
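
For the run logs and daily digest, a plain JSON-lines file goes a long way. The sketch below assumes one JSON record per action, with a timestamp, an action name, and an email ID. `log_action` and `daily_digest` are hypothetical helper names, and the record fields are my own choice.

```python
import json
from collections import Counter
from datetime import datetime


def log_action(log_file, action, email_id, when):
    """Append one action as a JSON line to an open, writable file object."""
    record = {"ts": when.isoformat(), "action": action, "email_id": email_id}
    log_file.write(json.dumps(record) + "\n")


def daily_digest(lines, day):
    """Count logged actions per type for one calendar day."""
    counts = Counter()
    for line in lines:
        if not line.strip():
            continue  # skip blank lines
        record = json.loads(line)
        if datetime.fromisoformat(record["ts"]).date() == day:
            counts[record["action"]] += 1
    return dict(counts)
```

In practice `log_file` would be a file opened in append mode, and the digest would run once at the end of the day over that file's lines.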

FAQ

What is agentic AI in plain English?

Agentic AI is software that can plan, use tools, and take steps toward a goal with your rules in place. It is more than chat. It is an assistant that can act, within clear boundaries you set.

Do I need a powerful GPU to start?

No. For most starter workflows, a decent laptop is enough. Start local with tight scopes and logging. If your use case grows, you can move to stronger infrastructure later.

How do I keep agentic AI safe at work?

Limit scope, use least-privilege credentials, log every run, and keep a human in the loop for anything customer-facing. Treat safety like a product feature and review it weekly.

What is a good first project?

Pick a repetitive workflow you do three times a week. Inbox triage, basic research capture, or meeting summaries with calendar holds are great. Keep permissions minimal and require manual approval to send or publish.

When should I scale beyond my laptop?

Scale when the workflow is boringly reliable and you can answer basic questions with logs and metrics. That is when enterprise platforms designed for agentic AI start to make sense.