Agentic AI is having a moment, and I felt it this weekend. I caught up on what dropped between March 21 and March 22, 2026, and four updates shifted how I’m thinking about building, shipping, and securing real agents.

Quick answer: The fastest path to useful Agentic AI right now is to pair AI-assisted code reviews with basic prompt-injection testing, keep state portable for future decentralization, and invest in strong CPU-first local rigs. The news this week made it clear: focus on quality, safety, identity, and compute. If you’re starting fresh, you can get something reliable running in a weekend.

I move fastest by pairing AI-assisted code reviews with basic prompt-injection tests, keeping state portable for future decentralization, and investing in a CPU-first local rig.

Why this week mattered

In 48 hours we saw four puzzle pieces click: AI code review stepping into high-stakes Rust in the Linux kernel, prompt-injection testing going mainstream, a serious decentralized bet for agent identity and value, and a reminder that CPUs still power a ton of agent workflows. That’s a clean roadmap for beginners: build for quality, ship with safety, keep your data portable, and don’t starve your compute.

AI code reviews just hit the Linux kernel

On March 22, 2026, Phoronix reported that Sashiko is now providing AI reviews on Rust code in the Linux kernel. That’s a tough bar, and it signals something important: AI-assisted review is getting trusted where it actually matters. If it can nudge maintainers toward safer patterns and reduce reviewer load there, everyday teams can benefit too.

How I’m using it as a beginner-friendly boost

I treat AI like a tireless junior reviewer. It’s great at enforcing style, suggesting tests, and flagging obvious footguns before I even open a PR. I still keep domain logic and architectural calls human-owned, but the speed-up on sanity checks and test scaffolding is real.

I treat AI as a tireless junior reviewer for style, test suggestions, and footguns while I keep domain logic and architecture decisions human-owned.

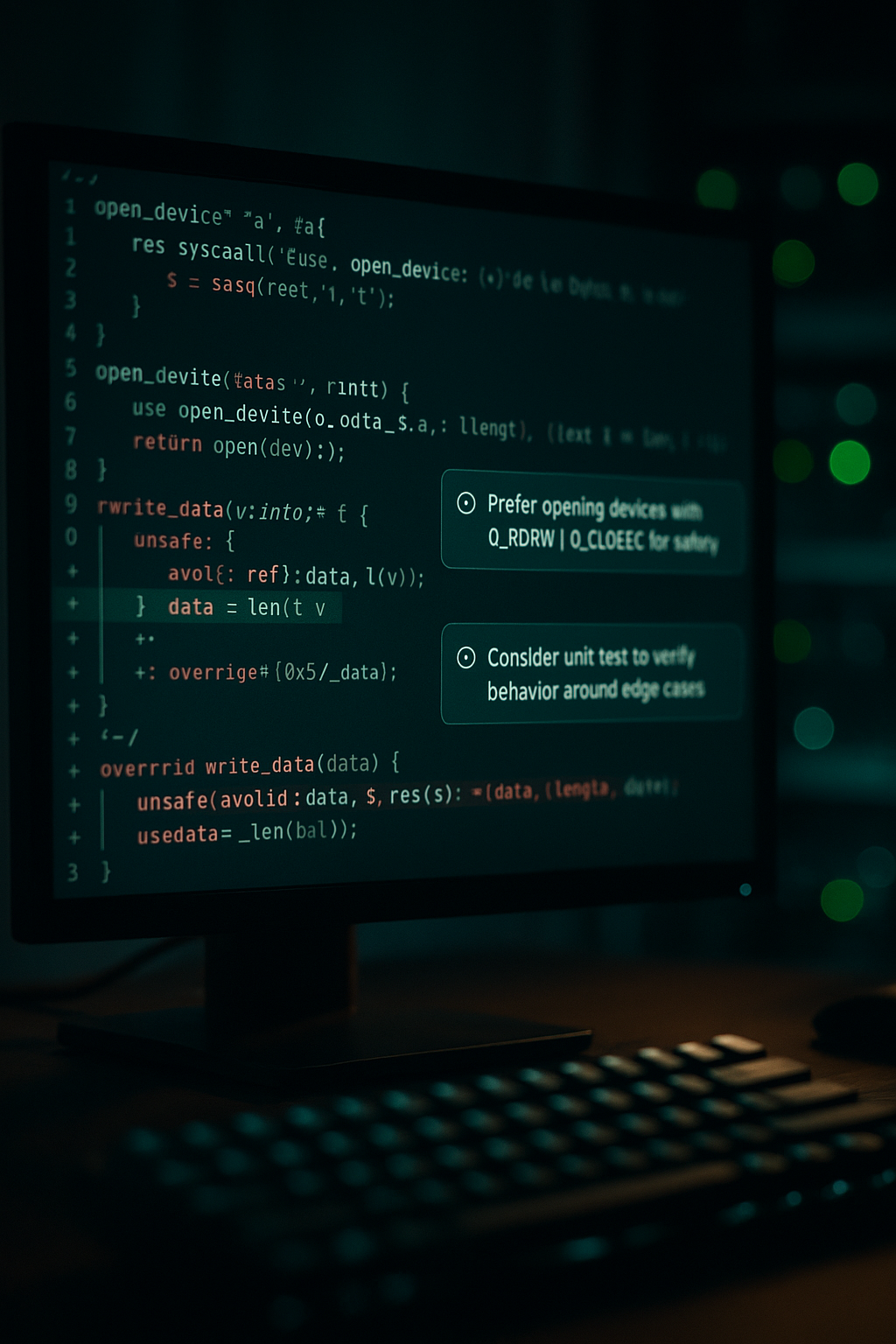

Security finally steps up: prompt-injection testing

On March 21, 2026, Cybersecurity Insiders covered HackerOne’s new agentic prompt-injection testing. If your agent reads the web, parses PDFs, or acts on semi-trusted notes, this is the failure mode that bites. It isn’t a weird sentence in a chat. It’s an agent executing hidden instructions and doing real damage.

How I red-team my own agent

I start with a tiny threat model: what inputs can an attacker influence, and what tools can my agent run without me? Then I craft a couple of harmless adversarial payloads and see if anything slips through. I also force a human checkpoint for actions that write to disk, email users, change config, or spend money. Tool contracts help too: the agent must request exactly what it needs, parameters get validated, and every call is logged. Boring, yes. But when something goes sideways, logs save hours.

I add a human checkpoint for risky actions and log every tool call so I can trace what happened in minutes, not hours.

The decentralized bet: a blockchain built for agents

Also on March 21, 2026, 0G positioned itself as the blockchain for AI agents, pointing at a projected trillion-dollar agentic economy. Whether or not that number lands, the signal is clear: agents will need durable identities, reputations, and wallets that can live beyond any single vendor.

How I’m designing for it without going full crypto

I keep my agent’s state behind a clean interface so I can swap identity and storage later. Payloads get signed. Audit trails are a feature, not an afterthought. And if an agent can hold value or privileges, I plan for key rotation, multi-sig approvals, and rate limits. It’s the difference between a cool demo and something someone will trust.

I sign important payloads, keep audit trails, and design state behind a clean interface so I can swap identity or storage later.

Local compute is back: CPUs matter more than you think

On March 21, 2026, GadgetMatch highlighted AMD’s angle on the agentic era with high-performance CPUs. So much agent work is CPU-heavy: orchestrating subprocesses, vectorizing embeddings, scraping, running lightweight local models, juggling context. If I’m building a desk-side rig, more cores and fast storage usually beat chasing a halo GPU on day one.

How I’d spec a beginner-friendly agent box today

For a snappy build that can run multiple tools at once without melting, I like 16 to 32 CPU cores for parallel jobs, 64 to 128 GB RAM for embeddings and RAG caches, and at least 2 TB of NVMe so vector databases and local search feel instant. A mid-range GPU is nice for embeddings or small-model inference, but it isn’t mandatory to learn the ropes. The real goal is a machine that keeps you building instead of waiting.

I prioritize many CPU cores, lots of RAM, and fast NVMe so my agent tools feel instant and I keep building instead of waiting.

OK, but what should I actually build first

If I were starting this weekend and wanted the right lessons fast, here’s the plan I’d follow.

- Pick one narrow workflow you do 3 times a week. Use an off-the-shelf model and a local vector DB. Keep it boring and shippable.

- Add a tiny AI code review step. Let it suggest tests and flag obvious mistakes, then track how often you accept its changes.

- Create two prompt-injection tests tailored to your exact tools. Run them in CI. If either slips through, add a human checkpoint.

- Design state like it might live elsewhere later. Even a simple JSON ledger with signatures gets you thinking the right way.

What this week tells me about where Agentic AI is going

Quality is getting real if AI is touching Rust in the kernel. Security is moving from Twitter threads into test harnesses you can actually run. Infrastructure is exploring ways agents can outlive a single vendor. And hardware is reminding us that CPUs carry a lot of the day-to-day load.

If you’re new to Agentic AI, that’s good news. You don’t need to chase every paper or the biggest model. Pick a tight workflow, wire in safety from day one, keep state portable, and give yourself enough compute to iterate without friction.

FAQ

What is Agentic AI in simple terms?

Agentic AI refers to systems that can plan, use tools, and act on goals with some autonomy. Instead of just answering questions, they execute steps, call APIs, read files, and report back. The value shows up when you give them a repeatable workflow and clear guardrails.

How do I stop prompt injection without expensive tools?

Start small. Add a human checkpoint for risky actions, validate tool parameters, and log every call. Create two adversarial test payloads that target your real tools and run them in CI. You’ll learn fast where the gaps are and fix the biggest risks first.

Do I need a GPU to build useful agents?

Not at the start. Most orchestration, scraping, embedding lookups, and context juggling is CPU-heavy. A many-core CPU, lots of RAM, and fast NVMe will carry you far. Add a mid-range GPU later if you want faster embeddings or small local models.

Should beginners care about blockchains for agents?

You don’t need tokens to learn from the pattern. Design your agent so identity and storage can be swapped later, sign important payloads, and keep an audit trail. If decentralized rails mature, you can plug in. If not, you still have a clean architecture.

Where should I use AI in code review?

Lean on it for style, linting, test suggestions, and catching obvious bugs. Keep core domain logic and architecture decisions human-owned. This balance speeds you up without outsourcing judgment.

I’ll keep testing these pieces in my own projects and share what actually sticks. Build for real tasks, treat safety as a feature, and ship the simplest thing that works. Momentum beats perfection.