Enterprise agentic AI is here, and I felt it this morning while combing through the March 27, 2026 updates. I sat down with coffee, opened way too many tabs, and kept seeing the same story: agents are leaving toy demos and moving into governed, auditable enterprise workflows.

Quick answer: The clearest path to enterprise-grade agents right now is a database-centered memory and control plane, a small router model plus specialists instead of one giant model, tight zero-trust guardrails, and a simple replayable eval set. The March 27, 2026 news cycle confirmed this with database-first architectures, new agent-focused silicon, a research foundry push, and a warning to avoid the one-model trap.

I anchor agents in a database control plane and route with a small model to specialists; it keeps costs sane and memory reliable.

Why this week actually matters

Four separate announcements and articles landed on the same day. That’s rare. Oracle is anchoring agents in data, Arm showed silicon tuned for agent patterns, Salesforce is formalizing the research-to-prod bridge, and CIO hammered the risks of betting on a single model. For me, that combination reads like a green light to get serious about architecture, not just prompts.

Signal 1: Your database should be the agent control plane

On March 27, 2026, SiliconANGLE reported Oracle’s push to center agentic workloads on its AI database. That clicked for me because most fragile agent projects die on state and coordination. If vectors, events, policies, and transactions live side by side, an agent can plan, act, and verify without losing context.

How I’d start

Pick a database that gives you vectors, JSON, and events in one engine. Treat it as the agent’s memory and control plane. Store tool calls, plans, and results there. Fewer moving parts, fewer mystery bugs.

I pick one database with vectors, JSON, and events and treat it as the agent’s memory and control plane to kill mystery bugs.
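Here is a minimal sketch of the "store every plan, tool call, and result" idea. It uses Python's built-in `sqlite3` purely as a stand-in: stock SQLite has no vector search or eventing, so this only illustrates the transactional log part. The `agent_events` table and the `open_control_plane`, `record`, and `history` helpers are names I made up for illustration, not any vendor's API.

```python
import json
import sqlite3
import time


def open_control_plane(path=":memory:"):
    """Open the database and create the (hypothetical) agent_events table."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS agent_events (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               run_id TEXT NOT NULL,
               kind TEXT NOT NULL
                   CHECK (kind IN ('plan', 'tool_call', 'result')),
               payload TEXT NOT NULL,      -- JSON-encoded details
               created_at REAL NOT NULL    -- Unix timestamp
           )"""
    )
    return conn


def record(conn, run_id, kind, payload):
    """Append one event; the with-block commits on success, rolls back on error."""
    with conn:
        cur = conn.execute(
            "INSERT INTO agent_events (run_id, kind, payload, created_at) "
            "VALUES (?, ?, ?, ?)",
            (run_id, kind, json.dumps(payload), time.time()),
        )
    return cur.lastrowid


def history(conn, run_id):
    """Return one run's events in insertion order as (kind, payload) pairs."""
    rows = conn.execute(
        "SELECT kind, payload FROM agent_events WHERE run_id = ? ORDER BY id",
        (run_id,),
    )
    return [(kind, json.loads(payload)) for kind, payload in rows]
```

Calling `record(conn, "run-1", "plan", {...})` before each step and `history(conn, "run-1")` afterward gives the agent, and me, one place to reconstruct what happened. In a real deployment the same shape would sit in whichever engine also holds the vectors and events.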

Signal 2: Arm’s AGI CPU hints at lower-latency agent loops

Also on March 27, 2026, Arm announced an AGI CPU for the agentic AI cloud era. Agentic patterns are chatty and tool-heavy, so latency and efficiency matter. I’m reading this as a nudge to design for heterogeneous hardware now, not later.

My takeaway

Don’t overfit to one GPU workflow. Use batching, streaming, and smart tool routing so CPUs, GPUs, and accelerators become levers you can swap without refactoring your whole stack.

I design for heterogeneous hardware with batching, streaming, and smart tool routing so I can swap CPUs, GPUs, and accelerators without refactors.
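One way to keep hardware swappable is to hide it behind a plain "batch in, batch out" callable and let a small helper handle batching and streaming. The sketch below assumes exactly that; `Backend`, `batched`, `run_stream`, and `toy_cpu_backend` are illustrative names, and the toy backend just uppercases text in place of a real model call.

```python
from typing import Callable, Iterable, Iterator, List

# A backend is any callable that maps a batch of inputs to a batch of outputs.
# A CPU, GPU, or accelerator implementation only has to honor this signature.
Backend = Callable[[List[str]], List[str]]


def batched(items: Iterable[str], size: int) -> Iterator[List[str]]:
    """Group items into lists of at most `size`, preserving order."""
    if size < 1:
        raise ValueError("size must be at least 1")
    batch: List[str] = []
    for item in items:
        batch.append(item)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:  # emit the final partial batch
        yield batch


def run_stream(items: Iterable[str], backend: Backend,
               batch_size: int = 8) -> Iterator[str]:
    """Send work to the backend in batches and stream results back in order."""
    for batch in batched(items, batch_size):
        outputs = backend(batch)
        if len(outputs) != len(batch):
            raise RuntimeError("backend returned the wrong number of outputs")
        yield from outputs


def toy_cpu_backend(batch: List[str]) -> List[str]:
    """Placeholder for a real model call; it only uppercases the input."""
    return [text.upper() for text in batch]
```

Swapping hardware then means passing a different `backend` to `run_stream`; the calling code and the batch size knob stay the same.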

Signal 3: Salesforce is building the research-to-prod bridge

On March 27, 2026, IT Pro covered Salesforce spinning up an agentic AI foundry. When a CRM giant says it needs a foundry, it means shared tooling, repeatable evals, and clean handoffs from research to production. That’s the part most of us skip until it hurts.

What I’m copying

I keep a tiny personal foundry repo: prompts, tools, fixtures, and a script that replays yesterday’s real tasks after every change. If today’s agent can’t beat last week’s on the same jobs, I don’t ship.
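The replay check can be very small. This sketch assumes each fixture is a dict with a unique `"input"` and an `"expected"` string, that an agent is just a function from input to output, and that exact string match is a good enough score. All three are simplifications, and the function names are mine. I also treat a tie as shippable as long as nothing regressed; tighten `>=` to `>` if you want a strict "must beat" rule.

```python
from typing import Callable, Dict, List, Set

Agent = Callable[[str], str]
Fixture = Dict[str, str]  # {"input": ..., "expected": ...}


def passed_inputs(agent: Agent, fixtures: List[Fixture]) -> Set[str]:
    """Inputs for which the agent's output exactly matches the expectation."""
    return {f["input"] for f in fixtures if agent(f["input"]) == f["expected"]}


def regressions(candidate: Agent, baseline: Agent,
                fixtures: List[Fixture]) -> List[str]:
    """Inputs the baseline got right that the candidate now gets wrong."""
    lost = passed_inputs(baseline, fixtures) - passed_inputs(candidate, fixtures)
    return sorted(lost)


def should_ship(candidate: Agent, baseline: Agent,
                fixtures: List[Fixture]) -> bool:
    """Ship only if the candidate passes at least as many tasks, with no regressions."""
    if not fixtures:
        raise ValueError("need at least one fixture to replay")
    candidate_score = len(passed_inputs(candidate, fixtures))
    baseline_score = len(passed_inputs(baseline, fixtures))
    return (candidate_score >= baseline_score
            and not regressions(candidate, baseline, fixtures))
```

Run it after every change with last week's agent as `baseline`, and print `regressions(...)` when `should_ship` returns `False` so you know which real tasks broke.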

Signal 4: The one-model trap will stall your rollout

Also on March 27, 2026, CIO warned about the one-model trap. I’ve done this. It works until cost, latency, or reliability hits a wall. A router-plus-specialists mindset fixes most of it: mixture-of-experts in spirit, but applied across separate models rather than inside one.

What works for me

Use a small, fast model for routing and easy extraction. Keep a mid-size model fine-tuned for your domain. Save your heavyweight model for hard reasoning or rare fallbacks. Add retrieval for facts and make routing decisions auditable.

I route easy tasks to a small model, use a domain-tuned mid-size for depth, and reserve the heavyweight for tough cases with auditable routing.
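To show what "auditable routing" can look like, here is a sketch where simple keyword rules stand in for the small router model. The tier names, the hint lists, and the `RouteDecision` record are all assumptions for illustration; a real router would call a small model, but it could log the same kind of record.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RouteDecision:
    task: str
    tier: str    # "small", "domain", or "heavyweight"
    reason: str  # human-readable explanation kept for audits


# Hypothetical keyword hints; a real router model would replace these rules.
HARD_HINTS = ("prove", "reconcile", "multi-step")
DOMAIN_HINTS = ("invoice", "contract", "refund")


def route(task: str, audit_log: List[RouteDecision]) -> RouteDecision:
    """Pick a model tier for the task and append the decision to the audit log."""
    text = task.lower()
    hard = [hint for hint in HARD_HINTS if hint in text]
    domain = [hint for hint in DOMAIN_HINTS if hint in text]
    if hard:
        decision = RouteDecision(task, "heavyweight", f"hard hint: {hard[0]}")
    elif domain:
        decision = RouteDecision(task, "domain", f"domain hint: {domain[0]}")
    else:
        decision = RouteDecision(task, "small", "no hints matched")
    audit_log.append(decision)
    return decision
```

Every call leaves a `RouteDecision` behind, so "why did this go to the expensive model?" becomes a lookup instead of a guess.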

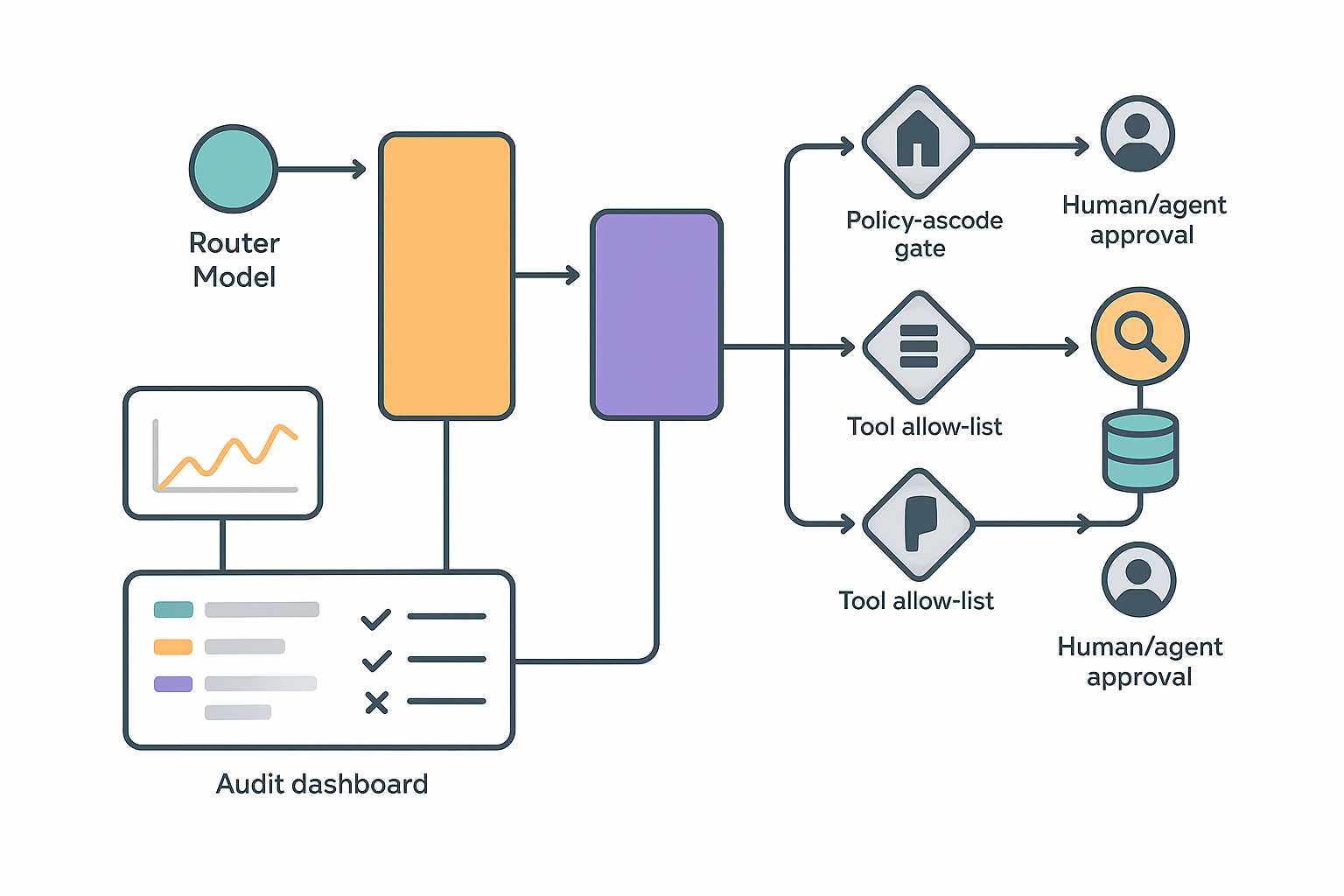

Signal 5: Zero-trust isn’t optional for agents

Agents chain actions, touch sensitive data, and can produce confident nonsense. That needs policy, not faith. I scope credentials, allow-list tools, enforce policy-as-code, and keep immutable logs so I can answer why something happened without guesswork. If an agent can send money or change records, it gets an approval step with a human or a checker agent.
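A rough sketch of that gate, assuming tools are plain Python callables and the policy is just two sets: which tools are allowed at all, and which need approval first. `Policy`, `PolicyError`, and `guarded_call` are hypothetical names, and the audit list here is append-only by convention only. Truly immutable logs need storage that enforces it.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, FrozenSet, List, Optional


@dataclass(frozen=True)
class Policy:
    allowed_tools: FrozenSet[str]   # the tool allow-list
    needs_approval: FrozenSet[str]  # risky tools that require sign-off


class PolicyError(Exception):
    """Raised when a tool call is denied or an approval is refused."""


def guarded_call(policy: Policy,
                 tools: Dict[str, Callable[..., Any]],
                 name: str,
                 args: Dict[str, Any],
                 audit: List[Dict[str, Any]],
                 approver: Optional[Callable[[str, Dict[str, Any]], bool]] = None):
    """Run a tool only if policy allows it, logging every outcome."""
    if name not in policy.allowed_tools or name not in tools:
        audit.append({"tool": name, "args": args, "outcome": "denied"})
        raise PolicyError(f"tool not allowed: {name}")
    if name in policy.needs_approval:
        # The approver can be a human prompt or a checker agent.
        if approver is None or not approver(name, args):
            audit.append({"tool": name, "args": args, "outcome": "rejected"})
            raise PolicyError(f"approval refused for: {name}")
    result = tools[name](**args)
    audit.append({"tool": name, "args": args, "outcome": "allowed"})
    return result
```

The important design choice is that the agent never holds a direct reference to a tool; it can only ask `guarded_call` to run one by name, so denied and rejected attempts show up in the log too.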

If I were starting this weekend

I’d pick one workflow I actually run weekly, like summarizing support tickets, drafting replies, and filing follow-ups in my CRM. I’d build it on a database backbone, route easy tasks to a small model, escalate when needed, and pull facts from retrieval. Then I’d prove it with a replayable eval set before turning it into a service.

I only ship after a replayable eval set shows today’s agent beats last week’s on the same real tasks.

Starter kit I actually use

- Memory and control: one database with vectors, JSON, and events. Store every plan, tool call, and result.

- Model mix: small router for easy wins, domain-tuned mid-size for routine depth, heavyweight only for tough cases.

- Security: short-lived tokens, tool allow-lists, policy-as-code, immutable audit logs, and approvals for risky actions.

- Evaluation: 10 to 50 real tasks with expected outputs and a script that flags regressions on every change.

- Shipping: start as a cron or CLI, promote to a service after a clean week of replays.

The bigger picture I can’t unsee

Oracle is pushing agents into data gravity, Arm is making agent loops faster, Salesforce is standardizing the pipeline, and CIO reminded us to split workloads across models. I’m not bullish because demos look cool. I’m bullish because the plumbing is finally real, and plumbing is what enterprise automation actually runs on.

My 7-day plan

I’m migrating agent memory to a database with vectors next to events. I’m splitting my single giant model into a router plus two specialists. I’m wiring policy checks in front of API calls that spend money. I’m also adding a tiny dashboard that shows the last 50 actions with reasons and results so I can sleep at night.

FAQ

What is agentic AI in an enterprise context?

It’s an AI system that plans, calls tools and services, verifies results, and repeats until a task is done. In enterprise use, it must be auditable, policy-aware, and resilient. That means real memory, guardrails, and evaluation loops, not just a clever prompt.

Do I need GPUs to start with agentic AI?

No. Start hardware-agnostic. Many routing and extraction steps run great on CPUs. Keep your design flexible with batching, streaming, and tool routing so you can add GPUs or accelerators when latency and scale demand it.

How do I choose a database for agent memory?

Look for vectors, JSON, and eventing in one engine so memory, context, and actions live together. You want transactions, policies, and logs in the same place your agent reads and writes, which simplifies debugging and governance.

What’s the fastest way to avoid the one-model trap?

Define boundaries first. Use a small router, a domain-tuned mid-size model, retrieval for facts, and reserve a large model for edge cases. Make routing decisions auditable so you can prove why the agent chose a path.

How do I implement zero-trust for agents?

Scope credentials tightly, allow-list tools, write policy-as-code for what the agent can do, and keep immutable logs. Add approvals for high-risk actions. That keeps automation tied to real-world accountability.