Agentic AI just hit fast-forward for me. In 24 hours I changed how I build, evaluate, and secure agents, and I’m sharing exactly what I’m doing this week so you can move with me.

Quick answer: Add a real control layer, make your agents portable, bake in scenario-based evals, start with one small repeatable workflow, and treat every tool call as a privilege. These shifts clicked for me after the March 28–29, 2026 updates from Inc42, Arm, and Solo.io’s agentevals coverage.

I start with one small, repeatable workflow this week and ship it before chasing anything bigger.

The stack finally has a control layer

What changed

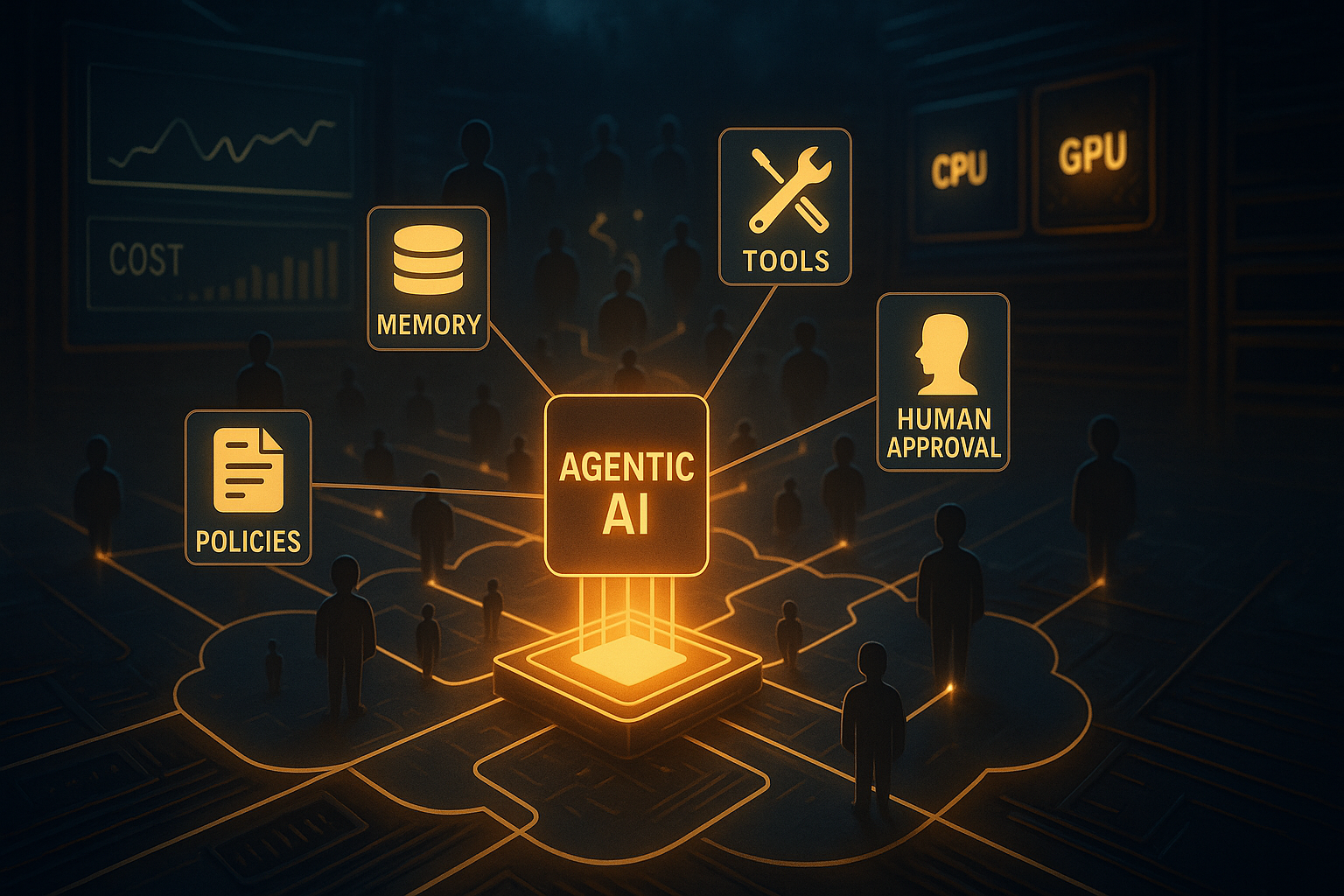

On March 29, 2026, Inc42 called the control layer the next big thing for agentic AI stacks. It’s the missing brain that coordinates tools, memory, policies, and human approvals so your system does not collapse into callback spaghetti.

How I’m adjusting

I’m treating orchestration as a product decision. If an agent needs more than two tools or touches a database, it gets a controller with explicit policies, event handling, and human-in-the-loop. I sketch flows first with triggers, states, approvals, fallbacks, and cost caps before I write code. Clever prompts are great until you need replanning, waiting, or safe retries. Then you want a control layer.

Beginner tip: pick one orchestration approach and keep it shallow. You only need predictable hooks for tools, memory, review, and logging. Build for day two: errors, retries, rollbacks.

Silicon showed up: Arm’s AGI CPU is a signal

What changed

Also on March 29, 2026, Arm announced its AGI CPU as the silicon foundation for agentic AI in the cloud. Translation: long-running, tool-heavy, memory-aware workloads are getting first-class CPU support, not just GPU cycles.

How I’m adjusting

I’m making agents portable. Containers over bespoke runners, minimal native dependencies, and zero assumptions about chip type. If cloud providers start slotting Arm instances for cost or efficiency, I do not want a 2 a.m. rewrite. I’m also tracking cost because agents idle, fetch, and reason in loops. That screams for right-sized hardware and autoscaling that actually saves money.

Beginner tip: test locally on x86 and on an Arm dev box or preview instance. If your Python stack behaves the same on both, future you will be very happy.

I make agents portable with containers and zero assumptions about chip type so I never face a 2 a.m. rewrite.

Evals are finally getting grown-up attention

What changed

On March 28, 2026, Solo.io’s agentevals framed evaluation as the biggest unsolved problem for Agentic AI. Agents are about behavior over time, not a single accuracy score. Did it choose the right tool, ask for help, and stop on budget?

How I’m adjusting

I’m baking scenario-based evals in before any demo. Three tests cover most risk: a happy path success, a forced failure with recovery, and a strict budget ceiling. If I cannot read the logs without squinting, it is not ready for users. I’m not chasing a perfect metric. I just want to catch regressions, tune policies, and surface cost early.

The adoption gap is real, so I’m starting small

What changed

On March 28, 2026, SiliconANGLE wrote about the agentic AI gap where vendors sprint and enterprises crawl. I feel it in every client call. People want outcomes, not slide decks.

How I’m adjusting

I’m narrowing scope to the same data, same tools, every day. That is where Agentic AI shines without spooking compliance. Skip the giant autonomous assistant pitch and ship one boring, measurable loop this week:

- Inbox triage to one system of record with human approval before write-back

- Daily status rollup that pulls two APIs, writes a doc, and pings a channel

- Data hygiene bot that flags issues and drafts fixes behind a one-click confirm

These are safe, traceable, and actually useful. When they work, the next greenlight is easy.

I ship one boring, measurable loop this week to earn trust and unlock the next greenlight.

Security is shifting to active defense

What changed

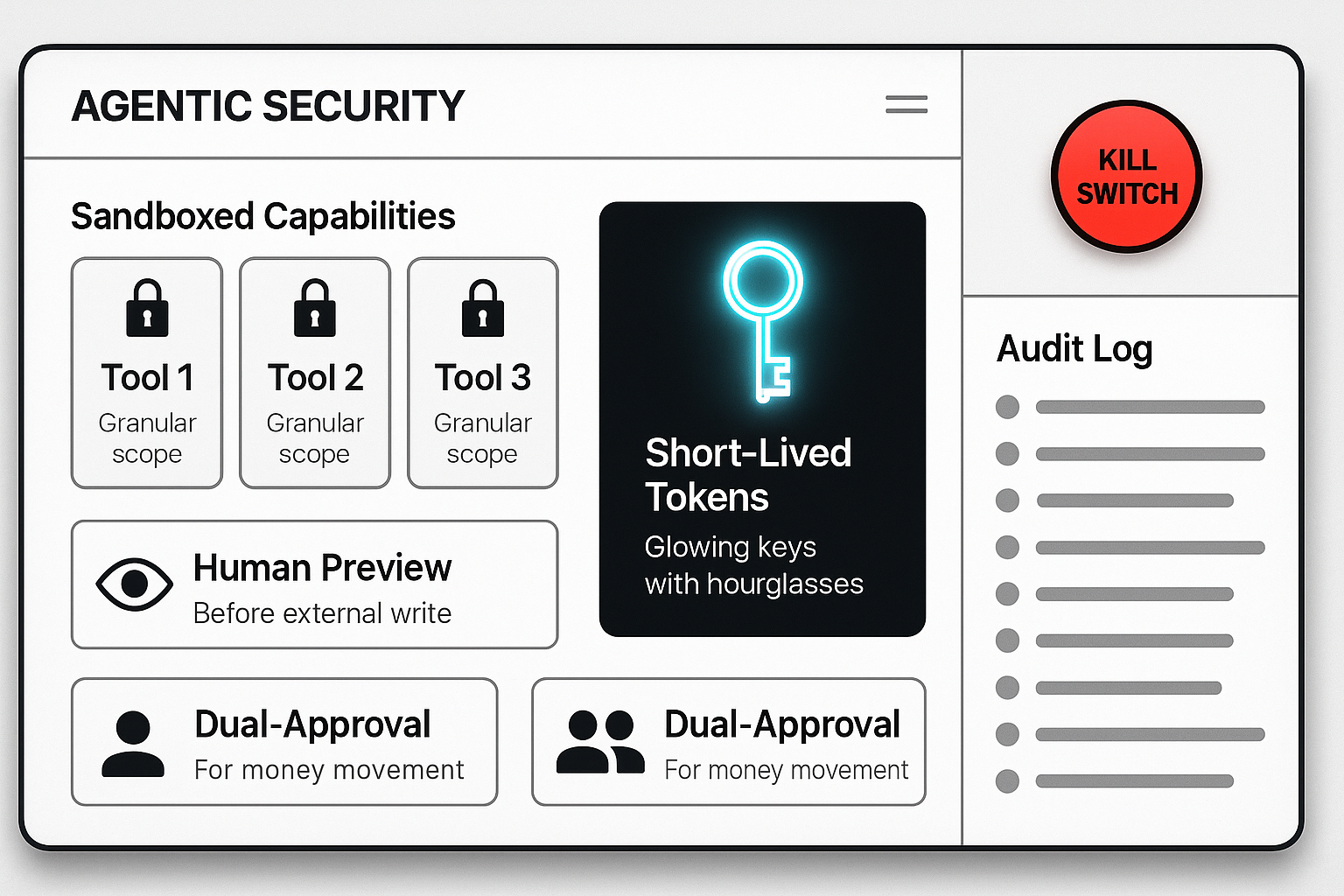

GovTech’s RSAC 2026 highlights on March 29, 2026 put it plainly: Agentic AI can act, so security must assume action. This is not just another SaaS login problem.

How I’m adjusting

I’m treating every tool call and external write as a privilege. Granular scopes, short-lived tokens, visible previews, and structured logs at the control layer. If an agent wants to send email, it needs a send capability with a human preview and a log entry. Moving money requires dry runs and dual approval, always. None of this is fancy. It is just the default now.

My starter stack for this week

Use case: a daily partner-report prep agent that pulls yesterday’s metrics, drafts a brief, and hands it to me for review.

I sketch the control layer first: a scheduler trigger, a fetch-metrics state with retry, a summarize state with a token and cost ceiling, and a review state that blocks any outbound email until I approve. Tools are narrow and scoped: one read-only analytics API, one doc writer in a sandbox folder, one email draft capability that cannot send. Memory is minimal and short-lived. Logs go to one table with timestamps and costs. For evals, I run three nightly scenarios: happy path with fixed data, API failure that forces a retry then human handoff, and a stress case that enforces a budget cap.

I block outbound email until I approve and log every step to a single table for traceability.

The result is an agent that behaves like a teammate I trust, not a clever demo I’m afraid to show my boss.

FAQ

What is the Agentic AI control layer and why should I care?

It is the coordination brain that manages tools, memory, policies, and human approvals. Without it, systems turn into brittle prompt chains. With it, you get predictable behavior, safer actions, and easier debugging.

How do I evaluate an Agentic AI system without a single accuracy score?

Test scenarios, not just answers. Start with a happy path, a known failure that requires recovery, and a strict budget limit. Log decisions, tool calls, and costs. You are looking for stable behavior and clear reasoning, not a perfect number.

I test scenarios, not just answers, and I log every tool call and cost to catch regressions early.

Should I optimize for GPUs or CPUs for agents?

Agents often mix waiting, fetching, and light reasoning. After Arm’s AGI CPU news on March 29, 2026, I’m assuming more Arm-friendly CPU options in the cloud. Write portable code and let autoscaling choose the right mix per workload.

What is a safe first agentic use case for an enterprise?

Pick a daily, scoped loop with the same data and tools. Inbox triage with human approval, a daily report rollup, or a data hygiene pass are great starters. They are measurable, low risk, and fast to ship.

How do I handle security for agents that can act?

Default to least privilege. Scope every capability, keep tokens short-lived, preview irreversible actions, and log every tool call. Add a real kill switch in your control layer and test it.

What I’m watching next

Across March 28–29, 2026 the signal is clear: Agentic AI is professionalizing. Control layers are getting real, hardware is aligning to workloads, evaluation is maturing, and security is moving to active defense. If you start small, add a control layer, bake in evals, and put guardrails in today, you will be ready for the bigger drops coming next.