Agentic AI stopped being hype for me this week. In 48 hours, five announcements made it look usable, auditable, and worth copying.

Quick answer: On Feb 18 and 19, 2026, Sea and Google moved agents into commerce, Travelers shipped a claims assistant with OpenAI, Calix said 500 ISPs run its agentic platform, AWS shared evaluation lessons, and NIST kicked off an agent standards initiative. If you’re starting now, pick one repeatable workflow, define the end state, log everything, and scale gradually.

Sea and Google are rolling out agents across an ecosystem

On Feb 19, 2026, Sea Ltd signed a deal with Google to deploy agentic AI across its ecosystem. That’s a strong signal this is past slideware and into ROI territory. You can read the report here.

What I’m copying: design for outcomes, not prompts. For a tiny project, I’d set a goal like this: find 3 comparable products, validate prices on two sites, then write a 100-word summary with links. Build the shortest toolchain that can hit that end state on its own.
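To make “design for outcomes” concrete, here’s a minimal Python sketch that encodes that example goal as a checkable end state instead of a prompt. The `Product` and `ResearchResult` shapes and the `is_done` check are hypothetical. The thresholds come from the example above, and I read “100-word summary” as “at most 100 words”. How the agent actually gathers the data is left out.

```python
from dataclasses import dataclass, field


@dataclass
class Product:
    name: str
    url: str
    # Prices observed for this product, keyed by the site they were checked on.
    prices_by_site: dict[str, float] = field(default_factory=dict)


@dataclass
class ResearchResult:
    products: list[Product]
    summary: str


def is_done(result: ResearchResult) -> list[str]:
    """Return the unmet parts of the end state; an empty list means done."""
    problems = []
    if len(result.products) < 3:
        problems.append(f"need 3 comparable products, have {len(result.products)}")
    for p in result.products:
        if len(p.prices_by_site) < 2:
            problems.append(f"{p.name}: price validated on {len(p.prices_by_site)} site(s), need 2")
    words = len(result.summary.split())
    if words > 100:
        problems.append(f"summary is {words} words, limit is 100")
    # Every product's link must appear in the summary.
    missing_links = [p.name for p in result.products if p.url not in result.summary]
    if missing_links:
        problems.append(f"summary missing links for: {', '.join(missing_links)}")
    return problems
```

The agent keeps working until `is_done` returns an empty list. Each problem string doubles as a specific next step, which beats re-prompting with “try again”.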

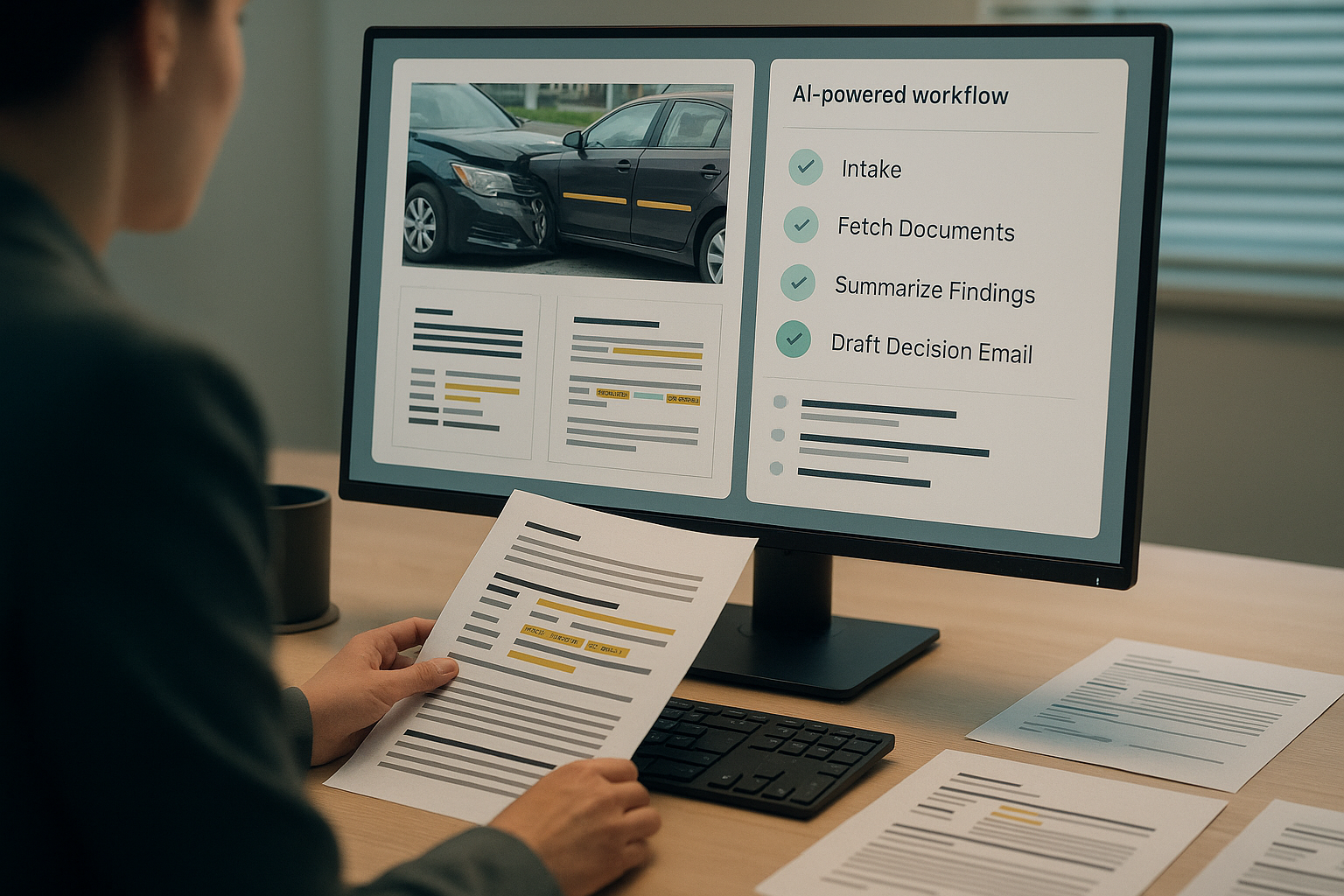

Travelers shipped a claims assistant with OpenAI

Also on Feb 19, 2026, Travelers announced an agentic AI claims assistant built with OpenAI, covered here. Insurance is paperwork-heavy and compliance-heavy. If an agent holds up there, it can probably handle my messy backlog.

How I’d start: pick one process with clear steps and a definition of done. For example: take in a request, gather 3 docs, summarize the findings, and draft the decision email. Humans still decide; the agent does the boring parts.
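Here’s a minimal Python sketch of that split. The agent handles intake, gathers the documents, writes the summary, and drafts the email, and nothing goes out until a human approves. Every name here is hypothetical. `fetch_documents` and the summary text are stubs standing in for real tool or model calls, and `approve` is a callback standing in for the human reviewer.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ClaimDraft:
    request_id: str
    documents: list[str]
    summary: str
    draft_email: str
    approved_by: Optional[str] = None  # stays None until a human signs off


def fetch_documents(request_id: str) -> list[str]:
    # Stub: a real agent would call document-store tools here.
    return [f"{request_id}-policy.pdf", f"{request_id}-photos.zip", f"{request_id}-report.pdf"]


def prepare_claim(request_id: str) -> ClaimDraft:
    """Agent side: gather 3 docs, summarize, and draft the decision email."""
    docs = fetch_documents(request_id)
    if len(docs) < 3:
        raise ValueError(f"expected 3 documents, got {len(docs)}")
    summary = f"Reviewed {len(docs)} documents for claim {request_id}."
    email = f"Draft decision for claim {request_id}: pending reviewer decision."
    return ClaimDraft(request_id, docs, summary, email)


def send_decision(draft: ClaimDraft, reviewer: str, approve: Callable[[ClaimDraft], bool]) -> bool:
    """Human side: the email goes out only if the reviewer approves it."""
    if not approve(draft):
        return False
    draft.approved_by = reviewer
    # Stub: a real system would send draft.draft_email here.
    return True
```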

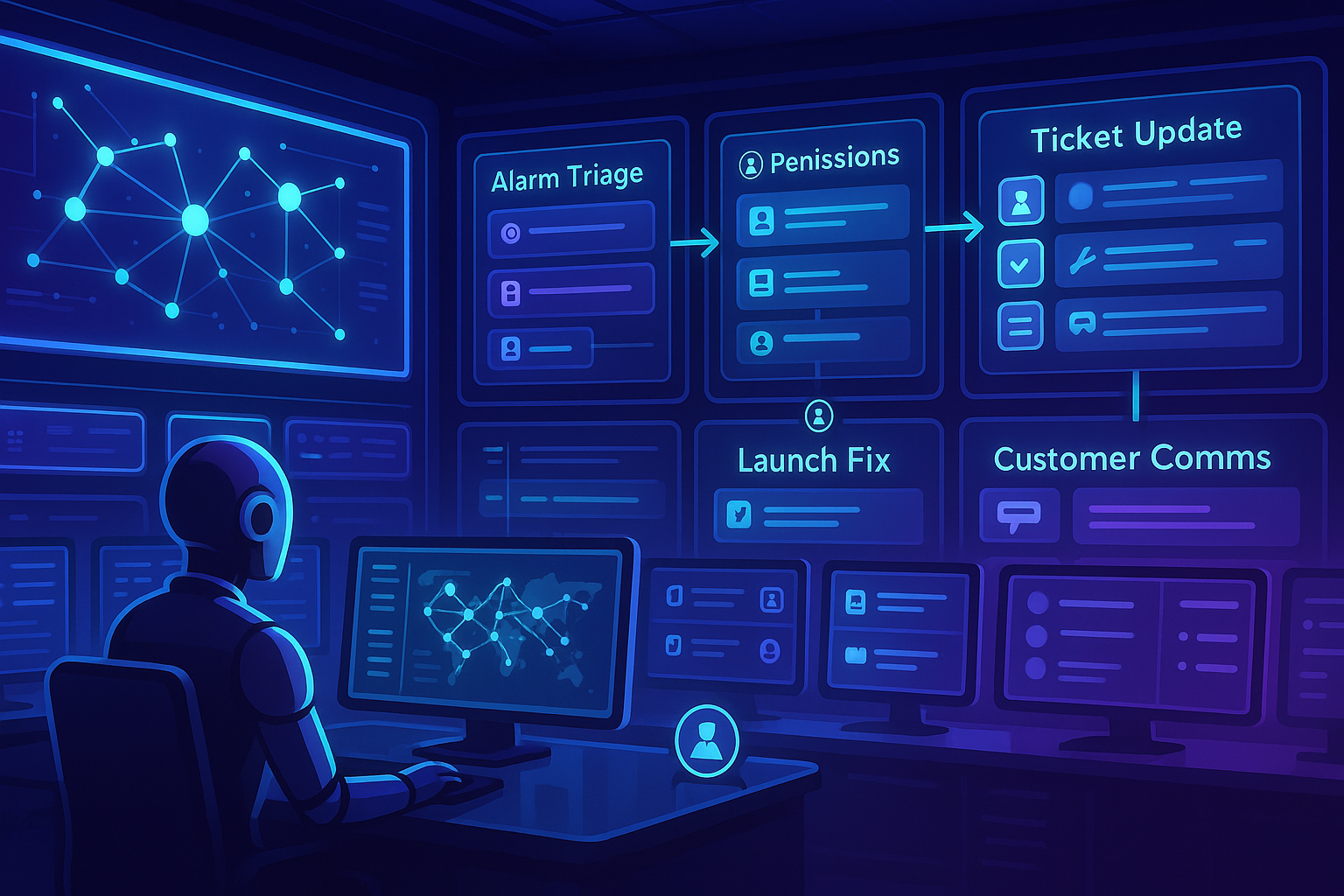

500 ISPs are already running an agentic AI platform

On Feb 18, 2026, Calix said 500 internet service providers are using its agentic AI platform, reported here. That’s real ops, not a demo. The first wins are the boring ones: alarms, ticket updates, common fixes, and customer comms.

My playbook here: define a clear trigger, give the agent the right tools and read-only access first, review transcripts, then expand scope once trust builds. You do not need the biggest model for 80 percent of the lift. You need clean handoffs.
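Below is a minimal Python sketch of that playbook. It assumes a hypothetical alarm trigger and a tool registry where every tool is tagged as read or write. Write tools stay hidden until someone flips `allow_writes` after reviewing transcripts. The tool names are illustrative, not Calix’s API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Tool:
    name: str
    writes: bool  # True if the tool changes state (tickets, configs, customer messages)
    run: Callable[[dict], str]


@dataclass
class OpsAgent:
    tools: list[Tool]
    allow_writes: bool = False  # start read-only; flip only after transcript review
    transcript: list[str] = field(default_factory=list)

    def available_tools(self) -> list[Tool]:
        return [t for t in self.tools if self.allow_writes or not t.writes]

    def handle(self, event: dict) -> None:
        # Clear trigger: only act on the one event type this agent owns.
        if event.get("type") != "alarm":
            return
        # For brevity this runs every permitted tool in order;
        # a real agent would choose among them.
        for tool in self.available_tools():
            result = tool.run(event)
            self.transcript.append(f"{tool.name}: {result}")


tools = [
    Tool("read_device_status", writes=False, run=lambda e: f"device {e['device']} degraded"),
    Tool("update_ticket", writes=True, run=lambda e: f"ticket updated for {e['device']}"),
]
agent = OpsAgent(tools)
agent.handle({"type": "alarm", "device": "olt-7"})
```

On the first run, the transcript only contains the read-only status check. The ticket update appears once `allow_writes` is set, which keeps the expansion of scope an explicit, reviewable decision.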

What Amazon learned about evaluating agents

On Feb 18, 2026, Amazon Web Services shared hard-won lessons from building agentic systems, and it finally clicked for me. The gist: evaluate the whole loop, not a single clever reply. Their post is worth a read here.

- Test end-to-end and measure outcomes, not wordplay.

- Seed real edge cases like missing data and timeouts.

- Keep toolboxes small and well defined.

- Track retries and tool calls to spot confusion.

- Review failures weekly and fix the biggest pattern first.
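The checklist above can be sketched as a tiny end-to-end harness in Python. It scores whole runs against an expected outcome and seeds edge cases such as missing data and a timeout. It also counts tool calls and retries, and tallies failure reasons so the biggest pattern stands out. `run_agent` is a hypothetical stand-in for your agent, and none of this is AWS’s own evaluation code.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class RunResult:
    output: Optional[str]
    tool_calls: int
    retries: int
    error: Optional[str] = None


@dataclass
class Case:
    name: str
    inputs: dict
    expected: str


def run_agent(inputs: dict) -> RunResult:
    # Hypothetical stand-in for a real agent run.
    if inputs.get("simulate_timeout"):
        return RunResult(None, tool_calls=3, retries=2, error="timeout")
    if "customer_id" not in inputs:
        return RunResult(None, tool_calls=1, retries=0, error="missing_data")
    return RunResult(f"resolved {inputs['customer_id']}", tool_calls=2, retries=0)


def evaluate(cases: list[Case]) -> dict:
    """Score end-to-end outcomes and aggregate the confusion signals."""
    failures: Counter = Counter()
    passed = total_calls = total_retries = 0
    for case in cases:
        r = run_agent(case.inputs)
        total_calls += r.tool_calls
        total_retries += r.retries
        if r.output == case.expected:
            passed += 1
        else:
            failures[r.error or "wrong_output"] += 1
    return {
        "success_rate": passed / len(cases),
        "avg_tool_calls": total_calls / len(cases),
        "avg_retries": total_retries / len(cases),
        "top_failure": failures.most_common(1)[0][0] if failures else None,
    }


# Seeded edge cases sit next to the happy path.
cases = [
    Case("happy path", {"customer_id": "42"}, "resolved 42"),
    Case("missing data", {}, "asked for customer_id"),
    Case("timeout", {"customer_id": "7", "simulate_timeout": True}, "resolved 7"),
    Case("missing data again", {"note": "no id"}, "asked for customer_id"),
]
report = evaluate(cases)
```

In this toy run, `missing_data` tops the tally. An ask-for-missing-info step is built to close exactly that kind of gap.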

When I tightened tool schemas and added a simple ask-for-missing-info step, my tiny internal agent’s failure rate dropped. No magic, just observability and iteration.
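Here’s a minimal Python sketch of both fixes, assuming a hypothetical `lookup_invoice` tool. The first fix is a tight schema that lists the required fields and their types. The second is a step that asks a clarifying question instead of letting the agent guess when a field is missing or malformed. The schema format is illustrative and doesn’t follow any particular framework.

```python
from typing import Any

# Tight schema for a hypothetical tool: required fields and their expected types.
LOOKUP_INVOICE_SCHEMA: dict[str, type] = {"invoice_id": str, "customer_id": str}


def check_args(schema: dict[str, type], args: dict[str, Any]) -> list[str]:
    """Return problems with the arguments; an empty list means they're valid."""
    problems = []
    for field_name, expected_type in schema.items():
        if field_name not in args or args[field_name] in (None, ""):
            problems.append(f"missing {field_name}")
        elif not isinstance(args[field_name], expected_type):
            problems.append(f"{field_name} should be {expected_type.__name__}")
    # Reject fields the schema doesn't know about instead of silently passing them on.
    unknown = set(args) - set(schema)
    problems.extend(f"unexpected field {name}" for name in sorted(unknown))
    return problems


def next_step(args: dict[str, Any]) -> dict[str, Any]:
    """Call the tool if the args are complete; otherwise ask instead of guessing."""
    problems = check_args(LOOKUP_INVOICE_SCHEMA, args)
    if problems:
        return {"action": "ask_user", "question": "Could you provide: " + "; ".join(problems) + "?"}
    return {"action": "call_tool", "tool": "lookup_invoice", "args": args}
```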

NIST kicked off an AI Agent Standards Initiative

Also on Feb 18, 2026, NIST launched a standards effort focused on AI agents, noted here. Translation for me and you: build with audit trails, role boundaries, and escalation paths. Expect more scrutiny on data provenance, tool permissions, and outcome-based evaluation.

My move now: log transcripts, tool calls, timestamps, and decisions. Separate read and write scopes so I can safely dial up autonomy.
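One way to sketch this in Python is to write every tool call and decision, with a timestamp, to an append-only JSON Lines audit log. Each call is also checked against a scope set, so write access can be granted separately from read access. The scope names and log fields are assumptions for illustration, not anything NIST has specified.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


class AuditLog:
    """Append-only JSON Lines log: one record per tool call or decision."""

    def __init__(self, path: Path):
        self.path = path

    def record(self, run_id: str, kind: str, **details) -> dict:
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "run_id": run_id,
            "kind": kind,  # e.g. "tool_call", "decision", "denied"
            **details,
        }
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(entry) + "\n")
        return entry


def call_tool(log: AuditLog, run_id: str, scopes: set[str], tool: str, needs: str, args: dict) -> bool:
    """Refuse any call whose required scope ("read" or "write") wasn't granted; log either way."""
    if needs not in scopes:
        log.record(run_id, "denied", tool=tool, needs=needs, args=args)
        return False
    log.record(run_id, "tool_call", tool=tool, needs=needs, args=args)
    # A real system would dispatch to the tool here.
    return True
```

Denied calls are logged too, so the same file shows what the agent tried to do before you widen its scopes.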

What I’d actually build first

I don’t chase the biggest use case. I chase the clearest one. The fastest wins repeat daily, touch multiple sources of truth, and have an obvious definition of done. Think weekly reporting, lead qualification, invoice checks, or Tier 1 support replies. Define the end state in plain English, give the agent the fewest tools needed, and iterate with your team in the open.

My simple 7-day starter plan

Day one, I pick a workflow with 5 to 10 steps and write its one-sentence definition of done. From there:

- List only the top two or three tools and data sources.

- Draft the agent’s goal and guardrails, including what a human must confirm.

- Build a read-only prototype with full logging and run 10 real cases.

- Fix the top two friction points and add a fallback that asks for missing info.

- Ship to a tiny group with single-click approval, then review the logs together.
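To pin the plan down, here’s a hypothetical Python spec that checks a draft agent against the plan’s own limits. Those limits are 5 to 10 steps, a one-sentence definition of done, two or three tools, a read-only prototype, and at least one action reserved for human confirmation. The field names are my own, and the one-sentence check is a rough heuristic.

```python
from dataclasses import dataclass, field


@dataclass
class AgentSpec:
    workflow: str
    steps: list[str]
    definition_of_done: str
    tools: list[str]
    write_tools: list[str] = field(default_factory=list)
    human_confirms: list[str] = field(default_factory=list)


def plan_problems(spec: AgentSpec) -> list[str]:
    """Check a draft spec against the starter plan; an empty list means it's ready to prototype."""
    problems = []
    if not 5 <= len(spec.steps) <= 10:
        problems.append(f"workflow has {len(spec.steps)} steps, aim for 5 to 10")
    # Rough one-sentence check: split on ". " and count non-empty pieces.
    sentences = [s for s in spec.definition_of_done.split(". ") if s.strip()]
    if len(sentences) != 1:
        problems.append("definition of done should be one sentence")
    if not 2 <= len(spec.tools) <= 3:
        problems.append(f"{len(spec.tools)} tools listed, keep it to 2 or 3")
    if spec.write_tools:
        problems.append("prototype should be read-only")
    if not spec.human_confirms:
        problems.append("list at least one action a human must confirm")
    return problems
```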

FAQ

What is agentic AI in simple terms?

Agentic AI is software that sets sub-goals, calls tools or APIs, and loops until it completes an outcome. It is not just a chat response. It plans, executes, and knows when to ask for help or escalate.
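To make that definition concrete, here’s a toy Python loop. It breaks a goal into sub-goals, calls a tool for each one, and escalates to a human when a tool fails or the step budget runs out. The planner and tool are hypothetical stubs; in a real agent, a model would choose the sub-goals and tool calls.

```python
from typing import Callable, Optional

# A tool takes a sub-goal and returns a result, or None if it failed.
Tool = Callable[[str], Optional[str]]


def plan(goal: str) -> list[str]:
    # Stub planner: a real agent would ask a model to propose sub-goals.
    return [f"{goal}: gather data", f"{goal}: draft result"]


def run(goal: str, tool: Tool, max_steps: int = 5) -> dict:
    """Work through sub-goals with a tool, escalating instead of looping forever."""
    pending = plan(goal)
    done: list[str] = []
    steps = 0
    while pending:
        if steps >= max_steps:
            return {"status": "escalate", "reason": "step budget exhausted", "done": done}
        sub_goal = pending.pop(0)
        steps += 1
        result = tool(sub_goal)
        if result is None:
            return {"status": "escalate", "reason": f"tool failed on '{sub_goal}'", "done": done}
        done.append(result)
    return {"status": "complete", "done": done}
```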

Do I need a frontier model to start?

No. Most early wins come from well-shaped tasks, tight tool design, and clear human handoffs. Start with a capable, cost-efficient model and invest more in evaluation and logging than model hopping.

How do I keep agents safe in regulated workflows?

Log everything, separate read and write roles, and require approvals for sensitive actions. Add guardrails for data provenance and tool permissions, and keep an audit-ready transcript for every run.

What metrics should I track?

Track end-to-end task success, time to completion, retries, and tool call counts. Review failed transcripts weekly to spot patterns and ship small fixes that reduce loops and guesswork.

What is a good first project?

Pick a process with repeatable steps and a clear definition of done, like weekly metric rollups or Tier 1 support. Limit tools to the essentials, keep it read-only first, and scale as trust grows.

Where this is headed

The Sea and Google move on Feb 19 shows marketplaces are ready to orchestrate agents. Travelers’ launch the same day suggests compliance-heavy flows can work. Calix’s Feb 18 number says operational agents are already becoming normal. AWS’s guidance gives us a practical yardstick, and NIST’s initiative is the nudge to build like adults. For me, that’s freeing: small, auditable wins first, then steady autonomy.