Agentic AI is no longer a side project for me. It went from curiosity to roadmap-critical in two days, and I want to show you exactly why, and how to start fast without breaking things.

Quick answer: Between Feb 18 and 19, 2026, standards, evaluations, infrastructure, and real deployments all moved at once. NIST kicked off an agent standards initiative, AWS shared practical eval lessons, Temporal raised $300M for reliability, and enterprises shipped real agents. If you start with one scoped workflow, clear evals, and durable execution in mind, you can ship a safe, auditable agent this weekend.

Why this week flipped the switch for me

I spent two days glued to updates and it felt like someone turned the city lights on. Standards got teeth, operators showed their playbooks, the reliability stack got funded, and customers in hard industries went live. That combination is rare, and it’s exactly what I needed to tighten my 2026 plan.

NIST just set the rules of the road

On Feb 18, 2026, NIST launched its AI Agent Standards Initiative. That sounds dry, but it gives builders like me something critical: shared language for safety, planning, tool use, and auditability. If a regulated buyer asks how my agent decides or what tools it touches, I can answer with logs instead of vibes.

My rule from now on is simple: design as if a customer will ask for a full decision and tool audit. Because they will.

AWS showed how to test agents that actually work

Also on Feb 18, 2026, AWS shared real-world lessons from Amazon’s agentic systems. The core idea hit home for me: test like an operator. Define the task, the tools allowed, the time or token budget, and the success criteria. Track recoveries, not just wins. An agent that asks for help safely beats one that confidently does the wrong thing.

If you’re new, a tiny spreadsheet of scenarios with expected outputs is enough to start. Ship small, observe a lot.
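Here is a minimal sketch of that scenario sheet in Python, assuming the sheet is a CSV with `scenario`, `input`, and `expected` columns. The `toy_agent` function, the column names, and the `escalate` outcome are all hypothetical stand-ins for illustration; swap in your own agent call. It counts safe escalations separately so recoveries show up next to wins.

```python
import csv
import io
from dataclasses import dataclass

# Hypothetical eval sheet; in practice this could be a CSV exported from a spreadsheet.
SHEET = """scenario,input,expected
refund_small,refund order 1001,approve
refund_large,refund order 9999,escalate
unknown_order,refund order 0000,escalate
"""


@dataclass
class Result:
    scenario: str
    passed: bool     # actual outcome matched the expected outcome
    recovered: bool  # the agent asked a human instead of guessing


def toy_agent(text: str) -> str:
    """Stand-in for a real agent call (an assumption, not a real API)."""
    if "1001" in text:
        return "approve"
    if "9999" in text:
        return "approve"  # confidently wrong on purpose, to show a failure
    return "escalate"


def run_evals(sheet: str, agent) -> list[Result]:
    """Run every row of the sheet through the agent and record pass/fail."""
    results = []
    for row in csv.DictReader(io.StringIO(sheet)):
        actual = agent(row["input"])
        results.append(
            Result(
                scenario=row["scenario"],
                passed=actual == row["expected"],
                recovered=actual == "escalate",
            )
        )
    return results


results = run_evals(SHEET, toy_agent)
passes = sum(r.passed for r in results)
recoveries = sum(r.recovered for r in results)
print(f"{passes}/{len(results)} passed, {recoveries} safe escalations")
```

The failing row here is the one where the toy agent approves a refund it should have escalated, which is exactly the confident-and-wrong case worth catching early.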

Temporal raised $300M to make reliability boring

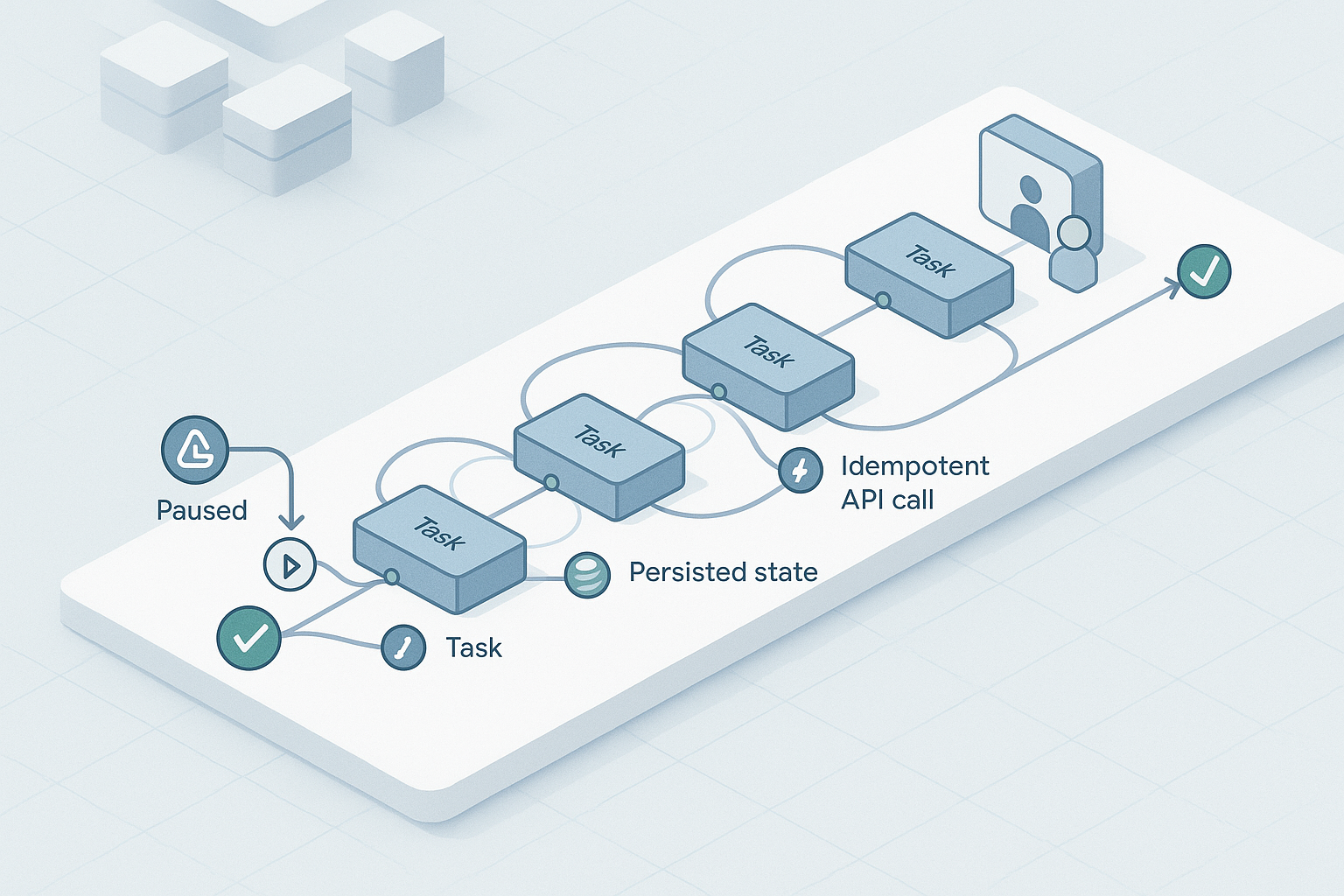

On Feb 18, 2026, Temporal announced a $300M Series D. Temporal builds durable execution, and that matters to me because I’ve wrestled with long-running agents that need to pause for webhooks or approvals, then resume without losing state. Durable execution and idempotent tool calls turn a flashy demo into something I trust while I sleep. I don’t want to reinvent that plumbing again.

Travelers quietly proved real enterprise value

On Feb 19, 2026, Travelers launched an agentic AI claim assistant with OpenAI. Claims work is messy, document-heavy, and heavily audited. If an agent can help there, it can help almost anywhere. The lesson I’m taking forward: the model is not the product. The workflow is.

Calix hit 500 ISPs on one agentic platform

On Feb 18, 2026, Calix said 500 ISPs use its agentic platform. Telco workflows are noisy, high volume, and unforgiving. If operators stick with an agent after the novelty wears off, it means the loop is tight: explain the issue, propose fixes, and open the right ticket when approved. That is the bar.

What this means if you’re starting today

Agentic AI projects win when they are operational, not flashy. Pick a workflow you already own, make success measurable, and design for audit and retries before you write a single prompt. Keep humans in control and let the agent handle prep, retrieval, and coordination.

My weekend starter plan

- Pick one 30- to 90-minute workflow with clear success, like a weekly customer health summary or triaging failed invoices.

- List the tools the agent may use and when each is appropriate, in plain language.

- Set a budget: max runtime, max API calls, and what to do when uncertain. Asking a human is valid.

- Make a 10-row eval sheet with inputs, expected outcomes, and a pass-or-fail column.
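The budget step in the plan above can be sketched in a few lines of Python. This is one possible shape, not a standard API: the `Budget` class, the `run` loop, and the 0.8 confidence threshold are assumptions made up for illustration. Each step is a `(name, confidence)` pair, and the loop stops cleanly when a limit is hit or the agent is unsure.

```python
import time
from dataclasses import dataclass, field


class BudgetExceeded(Exception):
    """Raised when the run goes past its call or time limit."""


@dataclass
class Budget:
    max_seconds: float
    max_calls: int
    calls: int = 0
    started: float = field(default_factory=time.monotonic)

    def charge(self) -> None:
        """Count one API call and stop the run if any limit is exceeded."""
        self.calls += 1
        if self.calls > self.max_calls:
            raise BudgetExceeded(f"call limit of {self.max_calls} reached")
        if time.monotonic() - self.started > self.max_seconds:
            raise BudgetExceeded(f"time limit of {self.max_seconds}s reached")


def decide(confidence: float, threshold: float = 0.8) -> str:
    """Asking a human is a valid outcome when confidence is low."""
    return "proceed" if confidence >= threshold else "ask_human"


def run(steps, budget: Budget):
    """Run (name, confidence) steps until done, out of budget, or unsure."""
    done = []
    for name, confidence in steps:
        try:
            budget.charge()
        except BudgetExceeded as exc:
            return done, f"stopped: {exc}"
        if decide(confidence) == "ask_human":
            return done, f"ask_human: unsure about {name}"
        done.append(name)
    return done, "completed"


capped = run([("fetch", 0.9), ("draft", 0.9), ("send", 0.9)], Budget(60, 2))
unsure = run([("fetch", 0.9), ("draft", 0.5)], Budget(60, 10))
print(capped)
print(unsure)
```

Returning a status string instead of crashing keeps the partial progress visible, which makes the later handoff to a human easier to reason about.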

If you can, simulate durability. Save state at each step, make tool calls idempotent, and log every action with a timestamp and reason. You’ll be ready for audits without slowing down.
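A rough way to simulate this without a workflow engine is a JSON checkpoint file keyed by step. The sketch below is an illustration under stated assumptions, not how Temporal or any other engine works internally: `DurableRun`, the key format, and `send_invoice_reminder` are hypothetical names, and step results must be JSON-serializable.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


class DurableRun:
    """Checkpoint each step to disk so a restarted run can skip finished work."""

    def __init__(self, path: Path):
        self.path = path
        if path.exists():
            self.state = json.loads(path.read_text())
        else:
            self.state = {"done": {}, "log": []}

    def step(self, key: str, reason: str, fn):
        """Run fn once per key; on a re-run, return the saved result instead."""
        if key in self.state["done"]:
            return self.state["done"][key]
        result = fn()
        self.state["done"][key] = result
        self.state["log"].append(
            {
                "at": datetime.now(timezone.utc).isoformat(),
                "step": key,
                "reason": reason,
            }
        )
        self.path.write_text(json.dumps(self.state))
        return result


calls = []


def send_invoice_reminder() -> str:
    """Hypothetical tool call with a side effect we do not want repeated."""
    calls.append("sent")
    return "reminder-queued"


path = Path(tempfile.mkdtemp()) / "run.json"
first = DurableRun(path).step("remind:inv-42", "invoice overdue", send_invoice_reminder)
# Simulate a restart: a fresh object reloads the saved state from disk.
second = DurableRun(path).step("remind:inv-42", "invoice overdue", send_invoice_reminder)
print(first, second, len(calls))
```

One gap to keep in mind: if the process dies after the tool runs but before the checkpoint is written, the step will run again on restart. That is why the tool itself should also accept an idempotency key where the downstream API supports one.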

How I pick a simple stack without overthinking it

I use one reasoning model I trust, add a vector or document store only if retrieval matters, and keep orchestration thin so I can swap it later. If the workflow runs longer than a few minutes, that’s my cue to look at a workflow engine. Temporal’s raise tells me reliability is not optional in 2026, but I still start with what I know and evolve.

For evals, I measure task completion and time first. Then I add cost and tool error rates. Subjective ratings come last; otherwise I just chase vibes.
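To make that ordering concrete, here is a small sketch that rolls a handful of runs into those four numbers. The `Run` fields and the sample values are invented for illustration; real numbers would come from your own logs.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Run:
    completed: bool
    seconds: float
    cost_usd: float
    tool_calls: int
    tool_errors: int


def summarize(runs: list[Run]) -> dict:
    """Completion and time first, then cost and tool error rate."""
    total_calls = sum(r.tool_calls for r in runs)
    return {
        "completion_rate": sum(r.completed for r in runs) / len(runs),
        "median_seconds": median(r.seconds for r in runs),
        "cost_per_run_usd": sum(r.cost_usd for r in runs) / len(runs),
        "tool_error_rate": (
            sum(r.tool_errors for r in runs) / total_calls if total_calls else 0.0
        ),
    }


# Made-up sample data, not real measurements.
runs = [
    Run(True, 10.0, 0.02, 4, 0),
    Run(True, 20.0, 0.04, 6, 1),
    Run(False, 30.0, 0.06, 10, 3),
    Run(True, 40.0, 0.08, 5, 1),
]
summary = summarize(runs)
for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```

I use the median for time because one stuck run can distort an average badly on a sample this small.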

Where this is going next

NIST’s Feb 18 move brings shared expectations. AWS’s operator-grade evals on the same day hand us a playbook. Temporal’s funding points to reliability over raw model size. Travelers showed value in a place that cannot fake it, and Calix proved operators will use this at scale. If you start small now, you’ll have logs and metrics when someone finally asks your agent to own a real outcome.

FAQ

What is agentic AI in simple terms?

Agentic AI is a system that can plan, call tools or APIs, and take multi-step actions toward a goal. It is not just chat. The useful ones are auditable, recover from errors, and keep humans in control for important decisions.

How do I evaluate an AI agent without a big setup?

Define the task, tools allowed, budgets, and a clear success rule. Then run a small set of scenarios in a spreadsheet and record pass or fail. The AWS guidance from Feb 18, 2026 backs this operator-first approach, and it scales well as you add complexity.

Do I need a workflow engine like Temporal on day one?

No. Start simple, but design as if a crash could happen. Save state between steps and make tool calls idempotent. If your workflow regularly runs for more than a few minutes or needs human approvals, a workflow engine starts paying for itself fast.

How do I keep agents safe in regulated teams?

Log every decision and tool call with timestamps and reasons. Limit tools, set budgets, and define what to do when uncertain. Align to emerging guidance like NIST’s Feb 18, 2026 initiative so security reviews become checklists, not debates.
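As one way to picture "limit tools and log every call," here is a minimal allowlist wrapper. It is a sketch under assumptions: `GuardedTools` and the two example tools are hypothetical, and a real deployment would write the log somewhere durable rather than keeping it in memory.

```python
from datetime import datetime, timezone


class ToolNotAllowed(Exception):
    """Raised when the agent requests a tool outside its allowlist."""


class GuardedTools:
    def __init__(self, tools: dict, allowed: set):
        self.tools = tools
        self.allowed = allowed
        self.audit_log = []

    def call(self, name: str, reason: str, **kwargs):
        """Log the attempt first, then run the tool only if it is allowed."""
        permitted = name in self.allowed and name in self.tools
        self.audit_log.append(
            {
                "at": datetime.now(timezone.utc).isoformat(),
                "tool": name,
                "reason": reason,
                "args": kwargs,
                "allowed": permitted,
            }
        )
        if not permitted:
            raise ToolNotAllowed(name)
        return self.tools[name](**kwargs)


# Hypothetical tools: one read-only lookup, one risky write.
tools = {
    "lookup_invoice": lambda invoice_id: {"id": invoice_id, "status": "overdue"},
    "issue_refund": lambda invoice_id: f"refunded {invoice_id}",
}
guarded = GuardedTools(tools, allowed={"lookup_invoice"})

invoice = guarded.call("lookup_invoice", "need status before drafting", invoice_id="inv-42")
try:
    guarded.call("issue_refund", "customer asked for money back", invoice_id="inv-42")
    blocked = False
except ToolNotAllowed:
    blocked = True  # the refund needs a human, so the agent should escalate here

print(invoice, blocked, len(guarded.audit_log))
```

Logging denied attempts as well as successful calls means the audit trail shows what the agent tried to do, not only what it was permitted to do.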

What’s a good first workflow to automate?

Pick something you already do weekly and can measure. Customer health summaries, lead qualification, or invoice triage are great. Let the agent prep data, draft outputs, and ask for confirmation. Keep final approval with a human until trust is earned.