Agentic AI finally clicked for me this week. I went from tinkering to a real agent that ships work without babysitting, and it only happened after I absorbed a few hard lessons on March 20, 2026, when a wave of stories landed at once.

Quick answer: Agentic AI works when you keep plans shallow, tools narrow, and guardrails loud. Budget the agent’s actions, bind every tool with strict schemas, add a fast policy layer for security, and design for queues and warm workers from day one. Start with one closed-loop task and only add depth when the task proves it needs it.

What I mean by agentic AI

When I say agentic AI, I mean a system that can plan, call tools or APIs, read and write data, and adapt from feedback to finish a goal. Less chatbot, more reliable intern that checks a knowledge base, files a ticket, sends the email, and logs the outcome.

Lesson 1: The math really can kill your agent

Why planning explodes and costs spiral

On March 20, 2026, I read “The Math That’s Killing Your AI Agent” and it explained what I was seeing. Naive planning branches like crazy. Two options per step over eight steps is 2^8 = 256 paths, before retries or tool calls. Tokens climb, latency stacks, and the bill hurts.
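To make the arithmetic concrete, here is a tiny Python sketch. It assumes a full tree where every step offers the same number of options; `plan_paths` is an illustrative name, and a real agent will branch unevenly.

```python
def plan_paths(options_per_step: int, steps: int) -> int:
    """Count root-to-leaf paths in a full tree: options_per_step ** steps."""
    return options_per_step ** steps


# Two options per step over eight steps, as in the example above.
print(plan_paths(2, 8))  # 256

# Widening to three options per step over the same eight steps.
print(plan_paths(3, 8))  # 6561
```

Depth is the exponent, which is why trimming the number of planning steps pays off so quickly.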

My fix was not a fancier prompt. I forced a shallow plan, capped exploration, and only reflected when confidence was low. I also tightened tool contracts so the agent could not wander.
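One way to sketch that loop in Python, assuming each step reports its own confidence score. `ActionBudget`, `run_plan`, and the 0.6 threshold are hypothetical names and values chosen for illustration, not the code from the actual build.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ActionBudget:
    """Counts actions (not tokens) and refuses to go past the cap."""
    max_actions: int
    used: int = 0

    def spend(self) -> None:
        if self.used >= self.max_actions:
            raise RuntimeError("action budget exhausted")
        self.used += 1


# Assumption: a step is a callable returning (result, confidence in [0, 1]).
Step = Callable[[], Tuple[str, float]]


def run_plan(steps: List[Step], budget: ActionBudget,
             confidence_floor: float = 0.6) -> List[Tuple[str, str]]:
    """Act on each step of a shallow plan; reflect only when confidence is low."""
    log: List[Tuple[str, str]] = []
    for step in steps:
        budget.spend()                      # every action costs one unit
        result, confidence = step()
        log.append(("act", result))
        if confidence < confidence_floor:
            budget.spend()                  # reflection is an action too
            log.append(("reflect", result))
    return log
```

Charging reflection against the same budget is a design choice in this sketch: it keeps the agent from reflecting its way past the cap.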

- Set an action budget and track it, not just tokens.

- Plan shallow, act, then reflect only when needed.

- Validate tool inputs and outputs with strict schemas.

- Keep beam width tiny so the agent must commit.
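As a rough illustration of the strict-schema bullet, here is a standard-library sketch that rejects unknown fields and malformed values on the way into and out of a tool. The invoice lookup tool, the `INV-` prefix, and the status values are all assumptions made up for this example.

```python
from dataclasses import dataclass

# Assumption: the only statuses this hypothetical tool may return.
ALLOWED_STATUSES = {"paid", "open", "overdue"}


@dataclass(frozen=True)
class InvoiceLookupInput:
    invoice_id: str

    def __post_init__(self) -> None:
        # Reject anything that is not a string shaped like "INV-...".
        if not isinstance(self.invoice_id, str) or not self.invoice_id.startswith("INV-"):
            raise ValueError(f"invalid invoice_id: {self.invoice_id!r}")


def parse_input(raw: dict) -> InvoiceLookupInput:
    """Accept exactly one field; extra or missing keys are errors."""
    if set(raw) != {"invoice_id"}:
        raise ValueError(f"expected only 'invoice_id', got {sorted(raw)}")
    return InvoiceLookupInput(invoice_id=raw["invoice_id"])


def check_output(raw: dict) -> dict:
    """Validate the tool's response before the agent is allowed to see it."""
    if set(raw) != {"invoice_id", "status"}:
        raise ValueError(f"unexpected output fields: {sorted(raw)}")
    if raw["status"] not in ALLOWED_STATUSES:
        raise ValueError(f"unknown status: {raw['status']!r}")
    return raw
```

In a real project a schema library would likely replace the hand-written checks; the point here is only that both directions get validated.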

Lesson 2: Real users want agents that actually do things

Starling Bank raised the bar on March 20, 2026

That same day, Starling Bank’s assistant showed what users actually want: actions, not vibes. So I stopped building a generic helper and shipped one closed loop for billing support. Pull the invoice, check status, draft the message, update the CRM, log the result. It feels small, but it finishes the job.
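The five steps of that billing loop can be sketched as a single function. This is a toy version under heavy assumptions: the invoice store, CRM, and audit log are plain in-memory Python objects, and `run_billing_loop` is a hypothetical name, not a real API.

```python
from typing import Dict, List


def run_billing_loop(invoice_id: str,
                     invoices: Dict[str, dict],
                     crm: Dict[str, dict],
                     audit_log: List[dict]) -> str:
    """Close one billing-support loop end to end and return the drafted message."""
    invoice = invoices[invoice_id]                       # 1. pull the invoice
    status = invoice["status"]                           # 2. check status
    message = f"Invoice {invoice_id} is {status}."       # 3. draft the message
    crm[invoice["customer"]] = {                         # 4. update the CRM
        "last_invoice": invoice_id,
        "status": status,
    }
    audit_log.append({"invoice": invoice_id,             # 5. log the result
                      "outcome": "message_drafted"})
    return message


invoices = {"INV-1": {"customer": "acme", "status": "overdue"}}
crm: Dict[str, dict] = {}
audit_log: List[dict] = []
print(run_billing_loop("INV-1", invoices, crm, audit_log))
# Invoice INV-1 is overdue.
```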

If you are starting, pick a task with clear steps, available APIs, and a measurable outcome. You will learn orchestration without drowning in ambiguity.

Lesson 3: Security is not a checklist, it is architecture

Microsoft’s end-to-end guidance landed on March 20, 2026

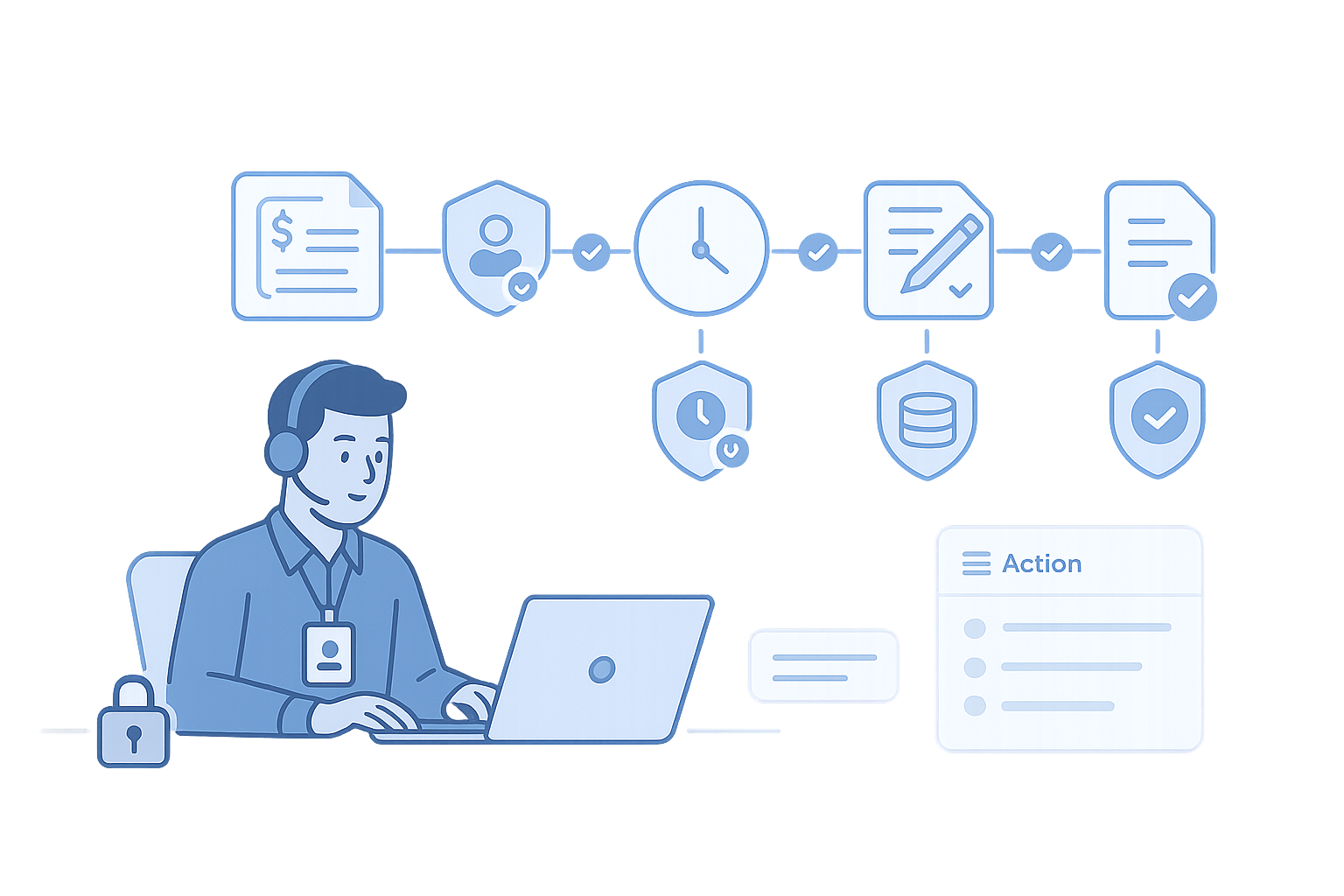

Microsoft published secure agentic AI guidance and it mirrored what finally made my build stable. I gave the agent an identity, signed tools, and a tiny policy layer that can deny or modify a call in real time. The agent proposes, a guard reviews, then the tool runs. With local checks it added milliseconds, not seconds, and it blocked several bad ideas during testing.

If you only change one thing today, stop giving default write access. Make read the default and force a policy-backed escalation for writes that checks user intent, data labels, and a valid change window.
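A minimal sketch of such a gate, assuming a local check with no network calls. The `ToolCall` fields, the blocked labels, and the 09:00 to 17:00 change window are illustrative assumptions, not Microsoft's guidance or the real policy. This version only allows or denies; modifying a call is left out.

```python
from dataclasses import dataclass
from datetime import datetime, time
from typing import FrozenSet, Tuple

# Assumptions for illustration: labels that block writes, and the change window.
BLOCKED_LABELS = frozenset({"pii", "secret"})
CHANGE_WINDOW = (time(9, 0), time(17, 0))


@dataclass(frozen=True)
class ToolCall:
    tool: str
    mode: str                                   # "read" or "write"
    user_intent_confirmed: bool = False
    data_labels: FrozenSet[str] = frozenset()


def review(call: ToolCall, now: datetime) -> Tuple[bool, str]:
    """The agent proposes a call; this guard decides before the tool runs."""
    if call.mode == "read":
        return True, "read is the default"
    if call.mode != "write":
        return False, f"unknown mode: {call.mode}"
    if not call.user_intent_confirmed:
        return False, "write needs confirmed user intent"
    if call.data_labels & BLOCKED_LABELS:
        return False, "write touches restricted data labels"
    start, end = CHANGE_WINDOW
    if not (start <= now.time() <= end):
        return False, "outside the change window"
    return True, "write approved"
```

Because every rule is a local comparison, a gate like this stays cheap enough to run before every tool call.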

Lesson 4: The painful cautionary tale

A Meta engineer trusted an agent and paid for it

Also on March 20, 2026, IT Pro reported that a Meta engineer followed an agent’s advice and exposed user data. The agent was not malicious. It just offered an efficient answer that was unsafe in context, and a rushed human approved it.

That story changed my setup the same afternoon. I moved secrets to a vault, rotated short-lived tokens for every tool, and wired a tiny risk check before any write. It has already saved me from two near misses.
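For the short-lived token part, a rough sketch of the idea: each token is scoped to one tool and expires after a fixed lifetime. `ToolToken`, `issue_token`, `is_usable`, and the five-minute default are hypothetical; a real setup would get tokens from the vault or identity provider rather than minting them locally.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ToolToken:
    tool: str            # the only tool this token is valid for
    value: str           # opaque random secret
    expires_at: float    # Unix timestamp


def issue_token(tool: str, ttl_seconds: float = 300.0,
                now: Optional[float] = None) -> ToolToken:
    """Mint a token bound to one tool that expires after ttl_seconds."""
    issued_at = time.time() if now is None else now
    return ToolToken(tool=tool,
                     value=secrets.token_urlsafe(32),
                     expires_at=issued_at + ttl_seconds)


def is_usable(token: ToolToken, tool: str, now: Optional[float] = None) -> bool:
    """A token works only for its own tool and only before it expires."""
    current = time.time() if now is None else now
    return token.tool == tool and current < token.expires_at
```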

Lesson 5: The model is not your bottleneck

Nutanix called it out on March 20, 2026

ChannelE2E covered Nutanix’s argument that the slow parts are your orchestrator, queues, vector store, and network I/O, not the model. I saw the same thing the moment I turned on real traffic. Backpressure and cold starts show up fast.

The boring fixes worked. I put queues in front of expensive steps, set strict concurrency per tool, cached embeddings aggressively, and kept a small pool of warm workers. Measuring p50 and p95 per tool revealed that a single file retrieval was the actual villain.
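Two of those fixes, per-tool concurrency limits and per-tool p50/p95, fit in a short `asyncio` sketch. `ToolRunner` and `percentiles` are hypothetical names, and the limits you pass in are whatever your own tools can tolerate; queues and warm pools are out of scope here.

```python
import asyncio
import statistics
import time
from collections import defaultdict
from typing import Any, Awaitable, Callable, Dict, List


class ToolRunner:
    """Caps concurrent calls per tool and records each call's latency."""

    def __init__(self, limits: Dict[str, int]) -> None:
        self._limits = dict(limits)
        self._semaphores: Dict[str, asyncio.Semaphore] = {}
        self.latencies: Dict[str, List[float]] = defaultdict(list)

    async def call(self, tool: str, fn: Callable[..., Awaitable[Any]], *args: Any) -> Any:
        # Create the semaphore lazily so it is built inside the running loop.
        sem = self._semaphores.setdefault(tool, asyncio.Semaphore(self._limits[tool]))
        async with sem:
            start = time.perf_counter()
            try:
                return await fn(*args)
            finally:
                # Record latency even when the tool raises.
                self.latencies[tool].append(time.perf_counter() - start)


def percentiles(samples: List[float]) -> Dict[str, float]:
    """p50 and p95 from raw latencies (needs at least two samples)."""
    cuts = statistics.quantiles(samples, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94]}
```

Calling `percentiles(runner.latencies[tool])` for each tool is what surfaces a single slow dependency that an overall average would hide.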

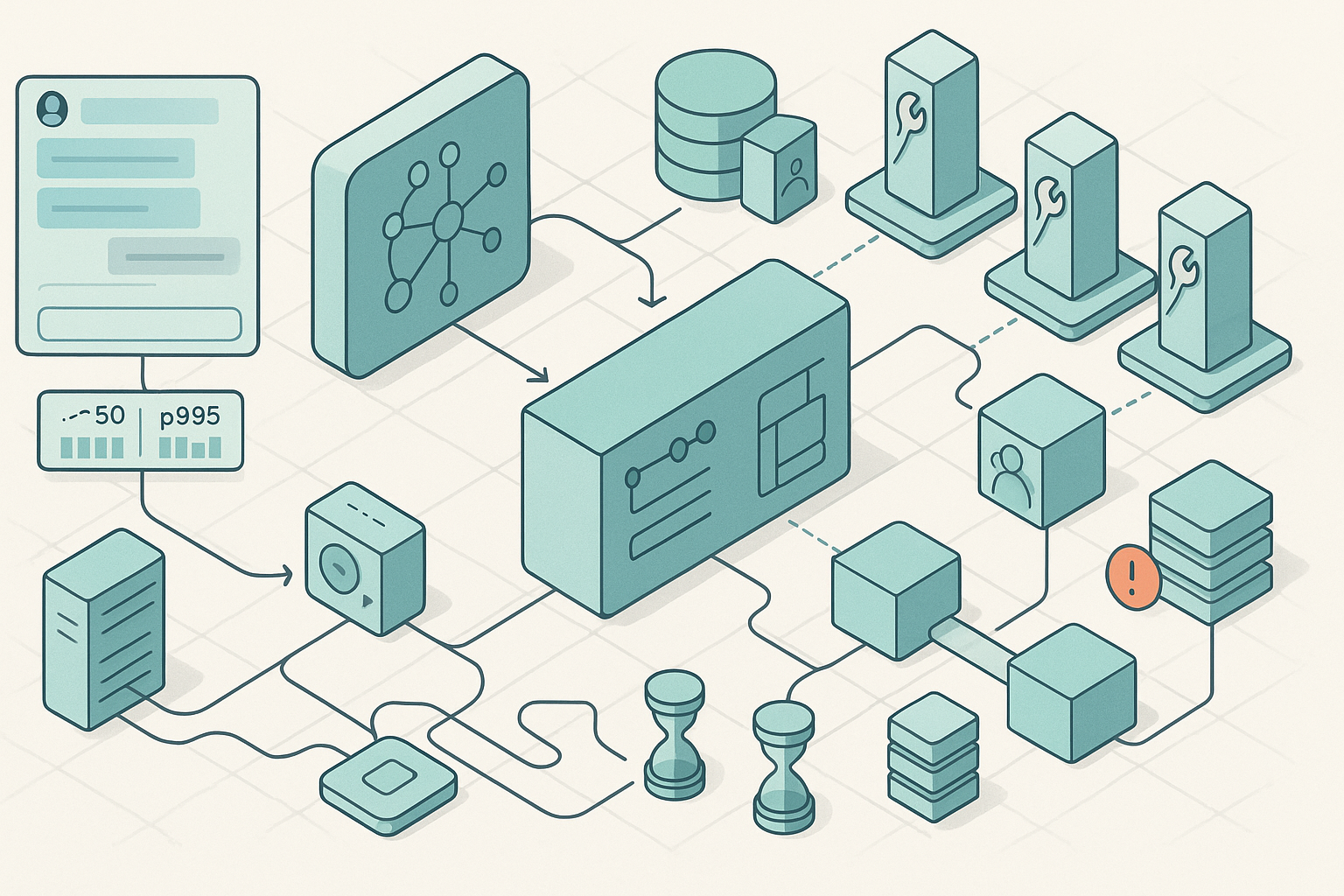

Your first agentic AI, a minimal blueprint that works

Here is the layout I shipped for a small but real use case:

- Front end: a light chat UI with a visible action log so people see what the agent will do.

- Orchestrator: manages planning, budget, and tool picks, and decides when to reflect versus push ahead.

- Tools: narrow, with least privilege, each on its own service account with short-lived tokens.

- Memory: a vector store for retrieval plus a small episodic store tied to a user or ticket, not a dump.

- Policy gate: a fast check on intent, data labels, and time windows before any tool runs.

- Observability: structured logs on every step with a trace ID and easy replays.

- Scale: queues, warm pools, and strict timeouts on external calls.
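The observability piece is the easiest to sketch. Below is one possible shape for structured step logs with a trace ID and a replay helper, assuming logs are JSON lines held in a Python list. The field names and the `log_step` and `replay` functions are illustrative, not a fixed format.

```python
import json
import uuid
from typing import List


def new_trace_id() -> str:
    """One ID per user request, shared by every step it triggers."""
    return uuid.uuid4().hex


def log_step(sink: List[str], trace_id: str, step: int, tool: str,
             inputs: dict, outputs: dict) -> None:
    """Append one structured record as a JSON line."""
    record = {
        "trace_id": trace_id,
        "step": step,
        "tool": tool,
        "inputs": inputs,
        "outputs": outputs,
    }
    sink.append(json.dumps(record, sort_keys=True))


def replay(sink: List[str], trace_id: str) -> List[dict]:
    """Return every record for one trace, in step order."""
    records = (json.loads(line) for line in sink)
    return sorted((r for r in records if r["trace_id"] == trace_id),
                  key=lambda r: r["step"])
```

Swapping the list for a file or a log pipeline changes the sink, not the record shape.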

What March 20, 2026 told me about where this is going

The math forces shallow plans and tight tools. Real value shows up when agents can actually act, like Starling did. Security has to be end to end, not a final gate, and there are public scars when it goes wrong. Most limits are your plumbing, not your model.

If you are on the fence, pick one loop and ship it. Keep the plan shallow, the tools narrow, the guardrails loud, and the logs detailed. If an answer feels too efficient to be true, ask the agent to show its plan. That habit alone has saved me more time than any new model drop this year.

Agentic AI FAQs

What is agentic AI in simple terms?

It is an AI that can plan, call tools or APIs, and adapt from feedback to complete a goal. Think of it like a reliable intern that does tasks end to end instead of just chatting.

How do I stop my agent from overthinking and running up costs?

Set an action budget, keep planning shallow, and cap exploration. Validate every tool call with strict schemas and only allow reflection when confidence is low. Commit early and move.

What security basics should I ship on day one?

Give the agent an identity, sign tools, and wrap every call with a fast policy check. Make read-only the default, use short-lived tokens, and log inputs, outputs, and tool signatures so you can replay any session.

How do I pick my first real use case?

Choose a task with clear steps, existing APIs, and a measurable outcome. Close one loop completely, then widen scope. Users forgive small scope if the loop actually closes.

How do I scale without melting my stack?

Queue expensive steps, limit per-tool concurrency, cache embeddings, and keep a warm worker pool. Measure p50 and p95 per tool to find the real blockers before you scale traffic.