Agentic AI just got very real for me this week. After two days in the weeds, I watched the hype turn into shipping products, real infrastructure, and clear security patterns. If you’re on the fence, this is the kind of week that changes what a beginner project can look like next month.

Quick answer: Agentic AI hit an inflection in late March 2026. Nvidia signaled massive capacity for long-running, tool-using agents, Starling put an action-taking assistant into a regulated banking app, Nutanix shipped an AI factory stack for scale, and Microsoft published end-to-end security patterns. If you start now, design for longer plans, strict tools, and built-in guardrails.

Nvidia just confirmed the compute runway

On March 21, 2026, Yahoo Finance’s conference coverage quoted Jensen Huang saying we’re at an agentic AI inflection point and projecting more than $1 trillion in demand for Blackwell-Rubin systems through 2027. That is not a marketing puff piece. It’s a manufacturing and deployment signal for anyone building agents that plan, call tools, and run for more than a few seconds. Here’s why that matters if you’re new.

- Inference gets faster and cheaper, so chaining more tool calls won’t destroy UX.

- New instance types make memory, retrieval, and function calling feel snappier per action.

- You can design for outcomes, not timeouts, and let agents plan multiple steps.

If you want the source, the quote came from Yahoo Finance’s event coverage on March 21, 2026.

What I’m changing in my builds

I’ve stopped chasing one-shot clever prompts. I let the agent plan 3 to 5 steps with strict tool schemas so calls are fast and unambiguous. With compute getting cheaper, structure and reliability beat trick prompts every time.
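Here’s a minimal sketch of what I mean by strict tool schemas, assuming a plain-Python setup with no agent framework. The tool names (`read_statements`, `draft_change`), their arguments, and the `MAX_STEPS` cap are hypothetical and only there for illustration.

```python
from dataclasses import dataclass

# Hypothetical tool schemas: tool name -> {argument name: expected type}.
TOOL_SCHEMAS = {
    "read_statements": {"account_id": str, "month": str},
    "draft_change": {"merchant": str, "action": str},
}

MAX_STEPS = 5  # assumed cap, matching the 3-to-5-step plans described above


@dataclass(frozen=True)
class ToolCall:
    tool: str
    args: dict


def validate_call(call: ToolCall) -> list[str]:
    """Return a list of problems; an empty list means the call is well-formed."""
    schema = TOOL_SCHEMAS.get(call.tool)
    if schema is None:
        return [f"unknown tool: {call.tool}"]
    problems = []
    for name in sorted(set(schema) - set(call.args)):
        problems.append(f"{call.tool}: missing argument '{name}'")
    for name in sorted(set(call.args) - set(schema)):
        problems.append(f"{call.tool}: unexpected argument '{name}'")
    for name, expected in schema.items():
        if name in call.args and not isinstance(call.args[name], expected):
            problems.append(f"{call.tool}: '{name}' must be {expected.__name__}")
    return problems


def validate_plan(plan: list[ToolCall]) -> list[str]:
    """Reject plans that are empty, too long, or contain any malformed call."""
    problems = []
    if not 1 <= len(plan) <= MAX_STEPS:
        problems.append(f"plan must have 1 to {MAX_STEPS} steps, got {len(plan)}")
    for call in plan:
        problems.extend(validate_call(call))
    return problems
```

Rejecting unexpected arguments, not just missing ones, is the part that keeps calls unambiguous: the model can’t smuggle in a parameter the tool never declared.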

Banking just shipped an agent that does things

On March 20, 2026, Starling Bank rolled out an agentic AI assistant inside its UK app. Multiple outlets covered it that day, and The Next Web’s write-up called out what matters: this isn’t a chatbot that explains your balance. It can take actions on your behalf inside a regulated product.

How I’d clone this safely for a side project

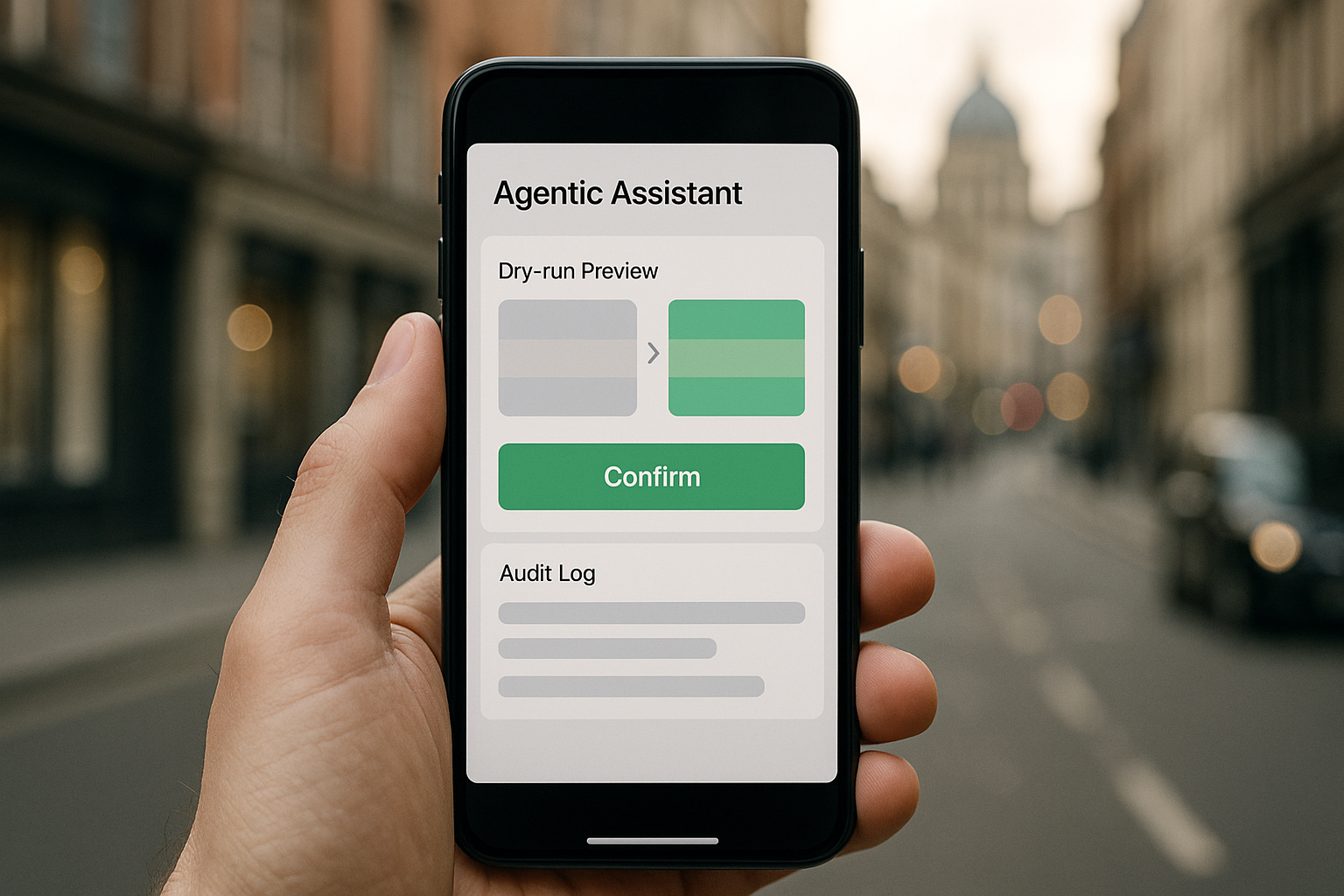

I’d ship three guardrails on day one: a dry-run mode that shows every planned action with diffs, a hard confirmation step for anything money-adjacent, and a human-readable log that explains what changed and why. I’d also time-box execution windows so nothing runs unattended in the background. That mix feels safe and immediately useful.
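To make the first two guardrails and the log concrete, here’s a rough sketch using only the standard library. `PlannedAction`, `render_dry_run`, and `execute` are names I made up for this example, and the `confirm` and `apply` callbacks stand in for whatever UI and backend a real project would have. Time-boxing is left out.

```python
import difflib
from dataclasses import dataclass


@dataclass
class PlannedAction:
    description: str      # human-readable explanation of what changes and why
    before: str           # current state, as text
    after: str            # proposed state, as text
    touches_money: bool = False


def render_dry_run(actions: list[PlannedAction]) -> str:
    """Build a transcript of each planned action and its diff, executing nothing."""
    lines = []
    for i, action in enumerate(actions, start=1):
        flag = " [needs confirmation]" if action.touches_money else ""
        lines.append(f"Step {i}: {action.description}{flag}")
        diff = difflib.unified_diff(
            action.before.splitlines(), action.after.splitlines(),
            fromfile="before", tofile="after", lineterm="",
        )
        lines.extend(diff)
    return "\n".join(lines)


def execute(actions, confirm, apply, log):
    """Apply actions in order; money-adjacent ones run only if confirm() returns True."""
    for action in actions:
        if action.touches_money and not confirm(action):
            log.append(f"SKIPPED: {action.description} (not confirmed)")
            continue
        apply(action)
        log.append(f"APPLIED: {action.description}")
    return log
```

In this sketch the dry run and the real run share the same `PlannedAction` list, so what the user approved is exactly what gets applied.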

The bigger signal to me is trust. Users are ready for assistants that execute, not just suggest. If your app is still “AI that explains,” it’s time to think “AI that resolves.”

Infrastructure grew up with an AI factory stack

Also on March 20, 2026, Nutanix unveiled its Agentic AI full stack for enterprise AI factories. Ignore the buzzword and look at the shape of the problem they’re targeting: state, storage, orchestration, upgrades, rollback, and observability. Agents aren’t a single model call. They’re multi-step workflows with memory, tools, and policies. The moment you have more than one agent or a few thousand users, you need answers for where plans live, how tool credentials are scoped, how you recover mid-chain failures, and how you replay or audit without re-running paid calls.

My simple home-lab version

I practice the same ideas with a small local LLM or hosted API, a lightweight task runner for steps and retries, a vector store for recall, and a tiny SQLite or Postgres table just for agent state. That setup quickly teaches where context drops, how to rehydrate state, and how to log tool results in a way you can audit or visualize later. When you move to cloud, you’ll already be thinking in factories: inputs, staged transforms, validations at tool boundaries, and consistent outputs you can verify.
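For the agent-state table, something like the following SQLite sketch is enough to practice rehydration. The `agent_steps` table and its columns are my own assumption, not a standard layout, and it assumes steps within a run finish in order.

```python
import json
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Create the (hypothetical) agent_steps table if it does not exist yet."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS agent_steps (
               run_id TEXT NOT NULL,
               step   INTEGER NOT NULL,
               tool   TEXT NOT NULL,
               args   TEXT NOT NULL,   -- JSON
               result TEXT,            -- JSON, NULL until the step finishes
               PRIMARY KEY (run_id, step)
           )"""
    )
    return conn


def record_step(conn, run_id, step, tool, args, result=None):
    """Insert or overwrite one step so retries do not create duplicate rows."""
    conn.execute(
        "INSERT OR REPLACE INTO agent_steps VALUES (?, ?, ?, ?, ?)",
        (run_id, step, tool, json.dumps(args, sort_keys=True),
         None if result is None else json.dumps(result)),
    )
    conn.commit()


def rehydrate(conn, run_id):
    """Return finished steps in order plus the index of the next step to run."""
    rows = conn.execute(
        "SELECT step, tool, args, result FROM agent_steps "
        "WHERE run_id = ? AND result IS NOT NULL ORDER BY step",
        (run_id,),
    ).fetchall()
    done = [
        {"step": s, "tool": t, "args": json.loads(a), "result": json.loads(r)}
        for s, t, a, r in rows
    ]
    next_step = done[-1]["step"] + 1 if done else 0
    return done, next_step
```

Because finished results are stored next to the arguments that produced them, I can reread a run later without calling the tool again.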

Security finally has a playbook

On March 20, 2026, Microsoft published guidance titled “Secure agentic AI end-to-end.” It’s the cleanest mainstream write-up I’ve seen that treats agent security as first-class. They outline jailbreaks that try to cross tool boundaries, prompt injection that nudges the wrong APIs, over-permissioned tools that widen blast radius, and memory that quietly leaks sensitive data. You can read it directly on Microsoft’s site.

The guardrails I now add by default

I scope every tool to least privilege with expiring tokens and explicit allowlists per action. I require plan-level approval for anything destructive or money-related, not just a final output confirmation. I keep memory bounded with redaction and a hard separation between user data and system prompts. I run lightweight self-checks on tool outputs, then produce a natural-language rationale the user can understand. And I keep replayable, hashed action logs so I can investigate without re-running paid calls.
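Two of these guardrails are easy to sketch in plain Python: a least-privilege grant with an expiry and an action allowlist, and an action log where each entry’s hash covers the previous one. `ToolGrant` and `ActionLog` are hypothetical names, and this is one possible shape for the idea, not a vetted security implementation.

```python
import hashlib
import json
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ToolGrant:
    """A hypothetical least-privilege grant: one tool, explicit actions, an expiry."""
    tool: str
    allowed_actions: frozenset
    expires_at: float  # Unix timestamp

    def permits(self, tool: str, action: str, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        return tool == self.tool and action in self.allowed_actions and now < self.expires_at


class ActionLog:
    """Append-only log where each entry's hash covers the previous entry's hash."""

    def __init__(self):
        self.entries = []

    def append(self, record: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else ""
        payload = json.dumps(record, sort_keys=True)
        digest = hashlib.sha256((prev + payload).encode("utf-8")).hexdigest()
        self.entries.append({"record": record, "prev": prev, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; an edited or reordered entry breaks it."""
        prev = ""
        for entry in self.entries:
            payload = json.dumps(entry["record"], sort_keys=True)
            expected = hashlib.sha256((prev + payload).encode("utf-8")).hexdigest()
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True
```

The chain only detects tampering after the fact. It doesn’t prevent it, so the log itself still needs to live somewhere the agent can’t write to freely.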

What I’m building next week

If you needed a nudge, this is it. Nvidia’s forecast tells me the runway is there. Starling shows users will trust execution if we nail permissions and clarity. Nutanix reminds me the boring parts matter and finally have better tooling. Microsoft turned security patterns into a checklist.

Here’s my 7-day plan. Days 1 and 2, I pick a workflow I hate. Mine is canceling random subscriptions and cleaning up recurring charges. I write down the exact tool calls I need: read statements, classify merchants, suggest actions, then execute cancellations or alerts. Day 3, I stand up a minimal agent with two tools and a strict schema for each. Tool one reads and summarizes. Tool two drafts a change. No execution yet. I add a dry-run transcript that a non-technical friend can follow.

Day 4, I add a plan validator. It restates the plan without sensitive data and checks three rules: does this touch money, does it modify an account, and will it call an external service. If the answer to any of them is yes, it switches to confirmation mode. Day 5, I add memory the careful way. I only keep context that explains decisions and a short-lived cache of relevant facts. No raw statements, and I redact tokens before anything hits logs. Days 6 and 7, I run it for myself. I wait a day and reread logs before I even think about letting friends try it. Agents age badly without good logs.
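The Day 4 validator and the Day 5 redaction step could look roughly like this. The `Step` flags are assumed to be set by whoever defines each tool, and the token pattern (`sk_` or `tok_` prefixes) is invented for the example, so real secrets would need their own patterns.

```python
import re
from dataclasses import dataclass


@dataclass
class Step:
    tool: str
    touches_money: bool = False
    modifies_account: bool = False
    calls_external: bool = False


# Assumed token shapes, for illustration only.
TOKEN_PATTERN = re.compile(r"\b(?:sk|tok)_[A-Za-z0-9]{8,}\b")


def redact(text: str) -> str:
    """Replace anything that looks like a token before it reaches a log."""
    return TOKEN_PATTERN.sub("[REDACTED]", text)


def review_plan(steps: list[Step]) -> dict:
    """Check the three rules and pick a mode for the whole plan."""
    reasons = []
    for i, step in enumerate(steps, start=1):
        # Reasons mention only the step number and tool name, never arguments,
        # so the restated plan carries no sensitive data.
        if step.touches_money:
            reasons.append(f"step {i} ({step.tool}) touches money")
        if step.modifies_account:
            reasons.append(f"step {i} ({step.tool}) modifies an account")
        if step.calls_external:
            reasons.append(f"step {i} ({step.tool}) calls an external service")
    mode = "confirm" if reasons else "auto"
    return {"mode": mode, "reasons": reasons}
```

A single flagged step puts the whole plan into confirmation mode, which matches approving at the plan level instead of only at the final output.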

FAQ

What is Agentic AI in plain English?

Agentic AI is AI that can plan steps and take actions with tools, not just chat back. Think of it as a workflow runner guided by language, with memory and permissions. It reads, decides, and then does, under rules you set.

Why does Nvidia’s projection matter to beginners?

Because cost and latency shape what you can build. If inference gets faster and cheaper, you can chain more steps, keep structure strict, and deliver outcomes without making users wait. It pushes you toward reliability over prompt hacks.

Is a banking assistant really a big deal?

Yes. When a regulated bank lets an assistant execute actions, it signals that clear permissions, logging, and confirmation flows can earn trust. That pattern translates to finance, ops, support, and beyond.

Do I need enterprise tools to start?

No. Start with a hosted LLM, a small task runner, a vector store, and a humble database table for agent state. You’ll learn the same lessons about context, retries, and audit trails before you spend real money.

How should I approach security on day one?

Design for failure by default. Validate the plan, the tool intent, and the tool output before writing to your source of truth. Scope permissions tightly, redact in memory, and keep replayable logs. Microsoft’s guidance is a solid checklist to follow.

Final thought

If you feel overwhelmed, that’s normal. A year ago we were all trying to get structured JSON back from a model. This week, a bank shipped an action-taking assistant, an enterprise stack landed, a security blueprint went public, and Nvidia said the compute to power it is on the way. Start small. Make it act. Make it safe. Then let the momentum pull you forward.