Agentic AI is here, and I’m finally treating it like a product, not a prototype. This week made it obvious that the runway is clear and the guardrails are showing up in time for real work.

Quick answer: Agentic AI just hit an inflection point. On March 21, 2026, NVIDIA highlighted massive demand for Blackwell-Rubin capacity, Starling shipped agentic features on March 20, and new security tooling landed the same week. If you want in, start with a boring but valuable agent that plans, acts with constraints, and logs everything. I share my exact weekend build below.

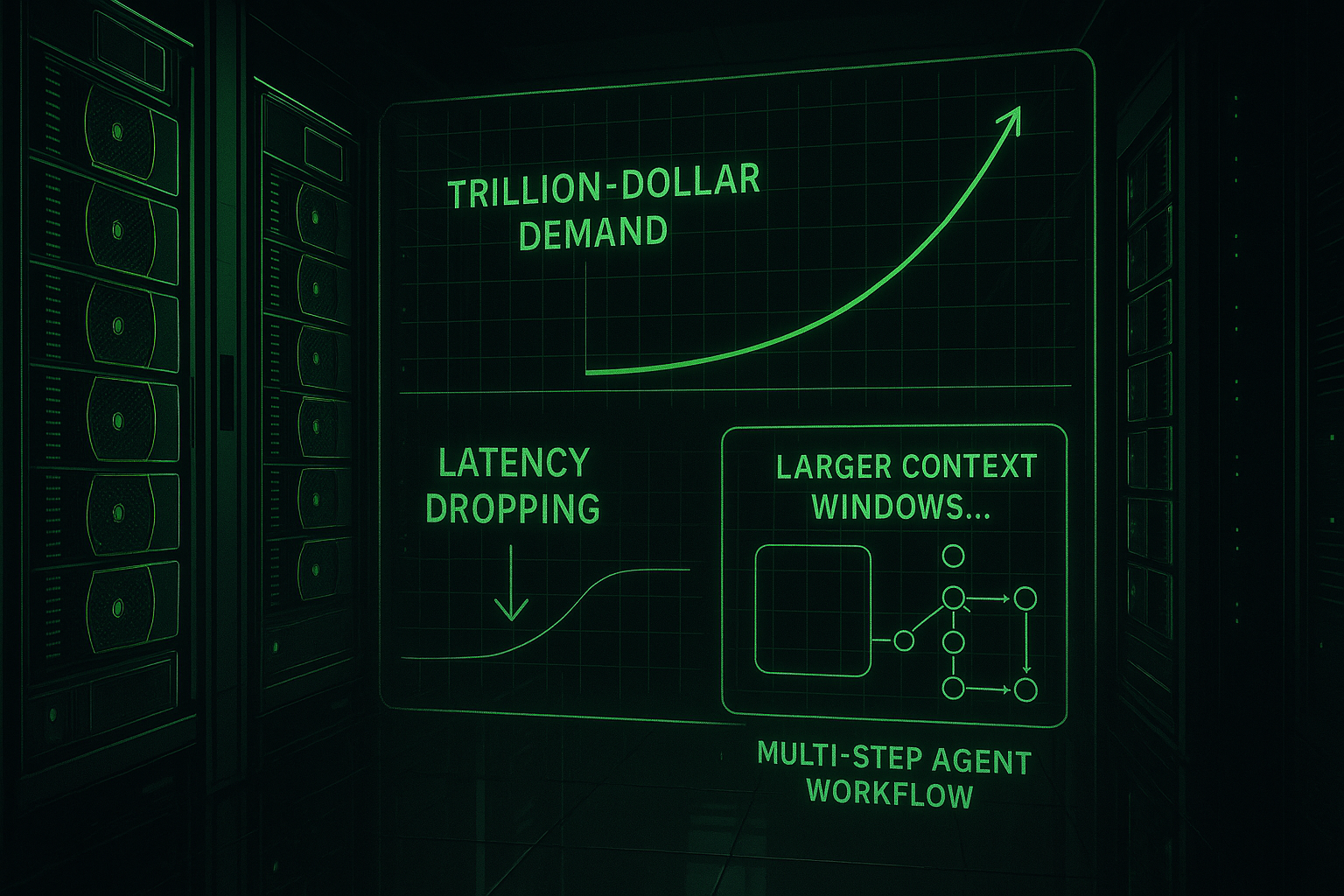

NVIDIA’s signal: the agentic inflection is real

Why the trillion-dollar line matters

On March 21, 2026, Jensen Huang pointed to an agentic AI inflection and over a trillion dollars in demand for Blackwell-Rubin through 2027, as covered by Yahoo Finance. The headline number is wild, but what matters is the assumption baked into it: agents will lean hard on reasoning, tool use, and long contexts. If that capacity arrives, it should mean faster, cheaper runs for the multi-step workflows I actually care about.

For me, this flips the default from wait-and-see to build-and-ship. You don’t need a rack of GPUs to feel the impact. Capacity bets at the platform layer usually show up as lower latency, longer context windows, and more stable planning loops.

Your bank just shipped an agent

Consumer proof beats slide decks

On March 20, 2026, Starling added agentic AI to its UK banking app, reported by PYMNTS. I don’t need their roadmap to know what this unlocks: spend insights that act, nudges that respect intent, and task chains that quietly do the boring parts.

Once a consumer bank goes first, every workflow where information, rules, and intent live together is fair game. If you’re just getting into Agentic AI, you can riff on this pattern without touching a bank API. I’ll show you my no-API weekend build in a minute.

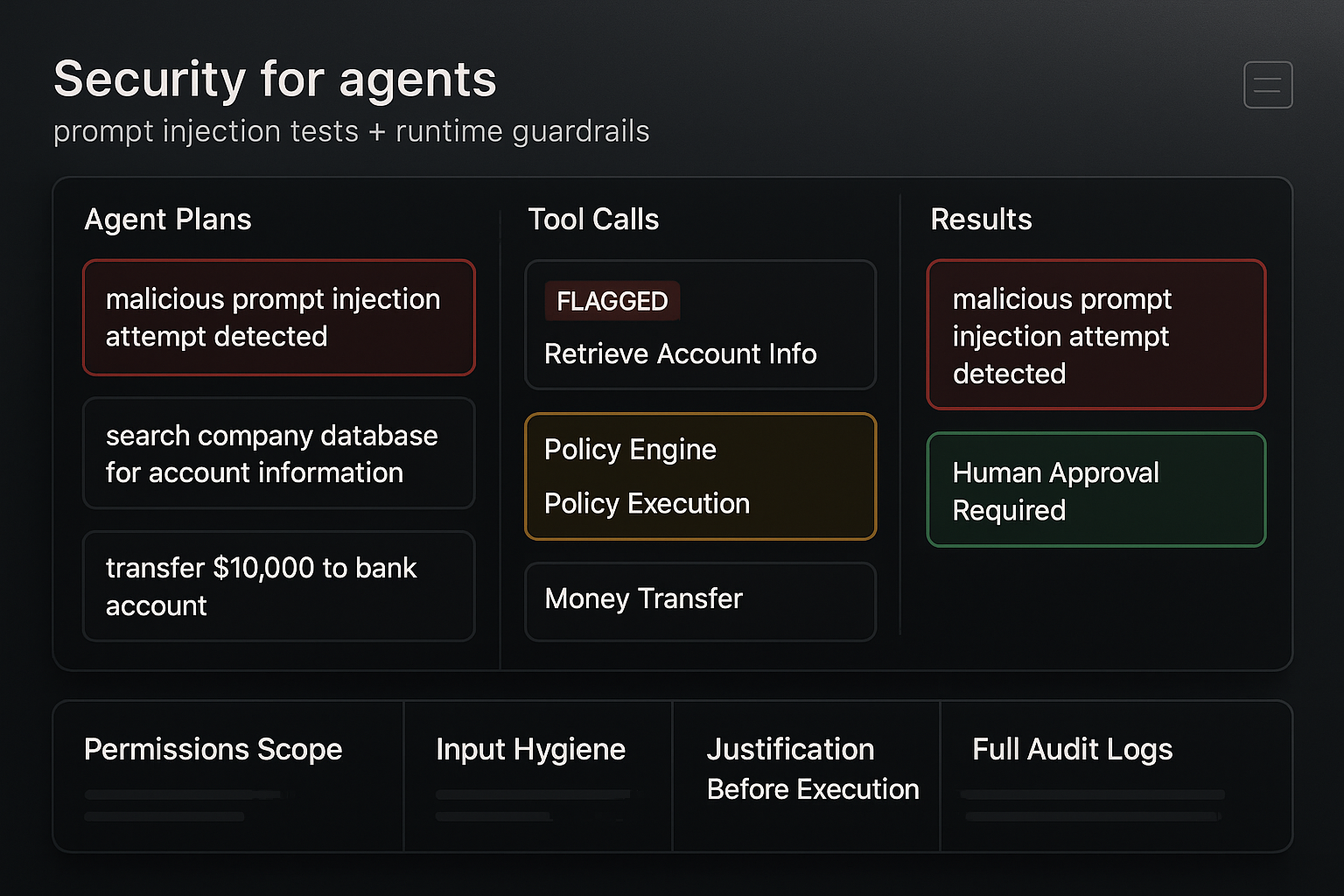

Security finally looks like a category

Prompt injection tests and runtime guardrails

Also on March 21, 2026, HackerOne launched agentic prompt injection testing, covered by Cybersecurity Insiders. The same day, Manifold announced an $8 million seed round to secure autonomous endpoint agents at runtime. The keyword is runtime: not just pre-prompt linting, but watching what the agent actually does and gating risky behavior in the moment.

If you’ve been nervous about giving agents real tools, this is your glide path. Here is the short checklist I run before I let an Agentic AI system touch anything important:

- Scope and permissions only for the task at hand, nothing extra

- Input hygiene with HTML stripping, URL neutralization, and a denylist for risky actions

- Human confirmation for money movement, credentials, and destructive ops

- Full observability with logs for prompts, plans, tool calls, and results
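
To make the input hygiene item concrete, here is a minimal sketch using only the Python standard library. The names `DENIED_ACTIONS`, `strip_html`, `neutralize_urls`, `is_action_allowed`, and `sanitize` are my own placeholders, and the denylist entries are made-up examples, not a recommended set. Treat it as one layer, not a complete defense against prompt injection.

```python
import re
from html.parser import HTMLParser

# Hypothetical denylist; replace with the risky actions your own tools expose.
DENIED_ACTIONS = {"delete_file", "send_payment", "change_password"}

URL_PATTERN = re.compile(r"https?://\S+", re.IGNORECASE)


class _TextOnly(HTMLParser):
    """Collects text nodes and drops tags plus script/style contents."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def strip_html(text: str) -> str:
    """Return visible text only, with whitespace collapsed."""
    parser = _TextOnly()
    parser.feed(text)
    parser.close()
    return " ".join("".join(parser.parts).split())


def neutralize_urls(text: str) -> str:
    """Defang URLs so they stay readable but are not followable as-is."""
    def defang(match):
        return match.group(0).replace("://", "[://]").replace(".", "[.]")
    return URL_PATTERN.sub(defang, text)


def is_action_allowed(action: str) -> bool:
    """Reject any action name that appears on the denylist."""
    return action not in DENIED_ACTIONS


def sanitize(text: str) -> str:
    """Strip markup first, then defang whatever URLs remain in the text."""
    return neutralize_urls(strip_html(text))
```

I would run `sanitize` on every document or web page before it reaches the model, and check `is_action_allowed` before any tool call is dispatched.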

Even without a vendor, you can wrap tools in a simple policy layer, require a justification chain before execution, and log the proposed plan before any step runs.
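
A rough sketch of that policy layer, assuming a hypothetical `GuardedTool` wrapper and `PolicyViolation` exception of my own naming. It refuses calls that lack a justification, logs the proposal before anything runs, and asks a `confirm` callback before sensitive tools execute. The callback denies by default, so forgetting to wire it up fails closed.

```python
import json
import logging
from dataclasses import dataclass
from typing import Any, Callable

logger = logging.getLogger("agent.policy")


class PolicyViolation(Exception):
    """Raised when a tool call is blocked by the policy layer."""


@dataclass
class GuardedTool:
    name: str
    func: Callable[..., Any]
    needs_confirmation: bool = False  # set True for money, credentials, destructive ops

    def call(
        self,
        justification: str,
        confirm: Callable[[str], bool] = lambda prompt: False,
        **kwargs: Any,
    ) -> Any:
        # 1. Require a justification before anything else happens.
        if not justification.strip():
            raise PolicyViolation(f"{self.name}: missing justification")

        # 2. Log the proposed call before execution.
        proposal = {"tool": self.name, "args": kwargs, "justification": justification}
        logger.info("proposed %s", json.dumps(proposal, default=str))

        # 3. Gate sensitive tools behind an explicit human decision.
        if self.needs_confirmation and not confirm(f"Allow {self.name} with {kwargs}?"):
            raise PolicyViolation(f"{self.name}: confirmation denied")

        # 4. Run the tool and log the outcome.
        result = self.func(**kwargs)
        logger.info("result %s", json.dumps({"tool": self.name, "result": result}, default=str))
        return result
```

In an interactive script, `confirm` could be something like `lambda prompt: input(prompt + " [y/N] ").lower() == "y"`.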

The infrastructure bet: 0G and on-chain patterns

Don’t chase tokens. Chase patterns.

On March 21, 2026, 0G pitched itself as a blockchain for AI agents and talked up a future trillion-dollar agent economy. Whether you like crypto or not isn’t the point. Agents produce receipts, meter usage, and sometimes need to pay other services. Signed events, verifiable logs, and revocable permissions make sense. You can do all of that off-chain today and bridge later if it’s actually needed.
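
As an off-chain illustration of signed events and verifiable logs, here is a small hash-chained log signed with HMAC-SHA256 from the standard library. The function names and entry layout are my own assumptions. HMAC uses a shared secret, so this only proves integrity to whoever holds the key. Sharing proofs with outside parties would need asymmetric signatures instead.

```python
import hashlib
import hmac
import json


def _digest(key: bytes, payload: dict) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, body, hashlib.sha256).hexdigest()


def append_event(log: list, key: bytes, event: dict) -> dict:
    """Append an event that is chained to the previous entry's signature."""
    prev = log[-1]["sig"] if log else ""
    entry = {"seq": len(log), "prev": prev, "event": event}
    entry["sig"] = _digest(key, entry)  # signed before "sig" is added
    log.append(entry)
    return entry


def verify_log(log: list, key: bytes) -> bool:
    """Check sequence numbers, chain links, and every signature."""
    prev = ""
    for index, entry in enumerate(log):
        unsigned = {k: v for k, v in entry.items() if k != "sig"}
        if entry.get("seq") != index or entry.get("prev") != prev:
            return False
        if not hmac.compare_digest(entry.get("sig", ""), _digest(key, unsigned)):
            return False
        prev = entry["sig"]
    return True
```

Editing, reordering, or dropping an earlier entry breaks the chain, which covers most of the receipt use case without any ledger.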

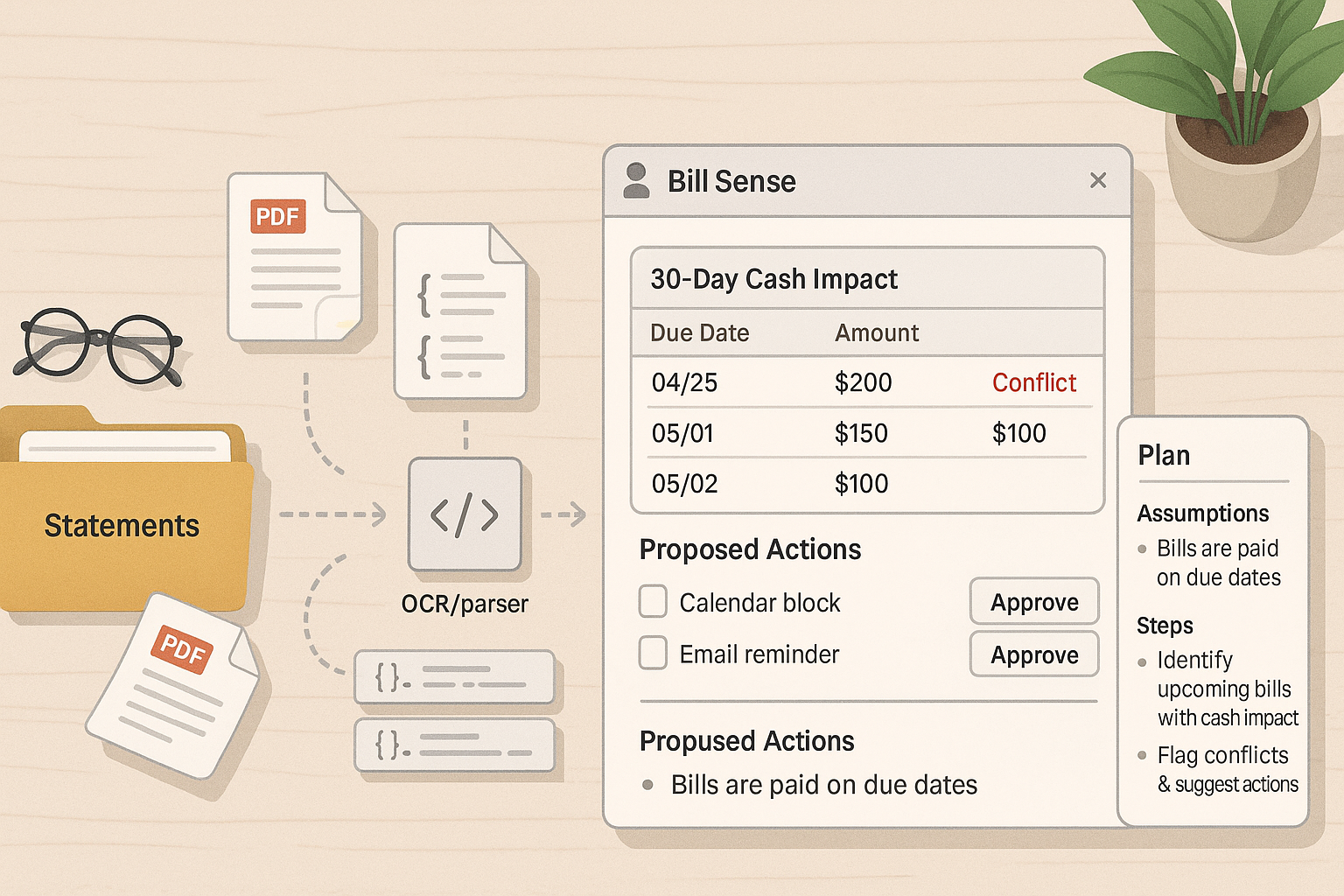

What I’d actually build this weekend

Keep it boring and valuable

Project: a Bill Sense desk agent for personal finance. It watches a folder for new statements, extracts due dates and amounts, projects cash impact for 30 days, then drafts two actions I can approve: a calendar block and an email reminder. No bank API, just files I already have.
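
The 30-day projection is plain arithmetic once the statements are parsed. Below is a sketch that assumes the parser already produced rows with a payee, due date, and amount. The `Bill` type, `project_cash`, and `first_shortfall` are illustrative names, and the starting balance is something you would type in yourself.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from decimal import Decimal


@dataclass(frozen=True)
class Bill:
    payee: str
    due: date
    amount: Decimal


def project_cash(start_balance: Decimal, bills: list[Bill], today: date, days: int = 30):
    """Return (due date, payee, balance after paying) for bills inside the window."""
    horizon = today + timedelta(days=days)
    balance = start_balance
    timeline = []
    for bill in sorted(bills, key=lambda b: b.due):
        if today <= bill.due <= horizon:
            balance -= bill.amount
            timeline.append((bill.due, bill.payee, balance))
    return timeline


def first_shortfall(timeline):
    """Return the first row where the balance goes negative, or None."""
    return next((row for row in timeline if row[2] < 0), None)
```

I use `Decimal` rather than floats so that cents do not drift, and a shortfall row is exactly the kind of conflict the agent should call out before it proposes anything.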

How I’d wire it up: one coordinator agent with two tools. The first is an OCR and parser that turns PDFs into tidy JSON rows. The second is a planner that summarizes exposure, calls out conflicts, and proposes actions. I ask the agent to output a plan object with assumptions, predicted conflicts, and proposed steps. I log the plan, then require a yes or no from me before it touches calendars or email. Cheap insurance, and it teaches you how to gate actions in Agentic AI flows.
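
One possible shape for that plan object and the yes-or-no gate, sketched with dataclasses. The field names mirror the three parts above, while `Step`, `Plan`, `run_with_approval`, and the tool registry are hypothetical. The `ask` and `log` callbacks are injected so the gate can be tested without a terminal.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Callable


@dataclass
class Step:
    tool: str          # key into the tool registry, e.g. "calendar" or "email"
    description: str   # what the step would do, in plain language


@dataclass
class Plan:
    assumptions: list[str] = field(default_factory=list)
    predicted_conflicts: list[str] = field(default_factory=list)
    proposed_steps: list[Step] = field(default_factory=list)


def run_with_approval(
    plan: Plan,
    tools: dict[str, Callable[[Step], str]],
    ask: Callable[[str], str],
    log: Callable[[str], None] = print,
) -> list[str]:
    """Log the whole plan first, then run only the steps the user approves."""
    log(json.dumps(asdict(plan), indent=2))
    results = []
    for step in plan.proposed_steps:
        answer = ask(f"Run {step.tool}: {step.description}? [yes/no] ").strip().lower()
        if answer not in ("y", "yes"):
            log(f"skipped {step.tool}")
            continue
        results.append(tools[step.tool](step))
    return results
```

At the terminal I would pass `ask=input`. Anything other than an explicit yes skips the step, so a stray keypress never sends an email.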

My current starter stack

Use what you have, not what’s trending

I keep it simple so I can iterate fast: a reasoning-capable model with tool use, a lightweight router to decide between planning and acting, a small vector store plus a key-value store for run metadata, and a thin policy wrapper around each tool. If I’m testing security, I add a red-team prompt before each run and log how the agent defends itself.

FAQ

What is Agentic AI in plain English?

Agentic AI is a system that plans steps, calls tools, and acts toward a goal with feedback from results. Think of it as a worker that can read, decide, and do, instead of just chat. The value shows up when those steps are predictable and measurable.

Why does March 20-21, 2026 matter?

Those dates bundled real signals: NVIDIA flagged massive capacity demand, a major consumer bank shipped in-app agents, and security tools moved from blog posts to products. That combo is what made me switch from prototyping to shipping.

Do I need GPUs to start with Agentic AI?

No. You can get far on hosted models and a sane design: clear goals, small tools, and strong logging. The capacity surge highlighted by NVIDIA should make multi-step workflows faster and cheaper over time.

How do I stop prompt injection?

Assume untrusted inputs, sanitize them, and gate risky actions with policies and human approval. Tools like agentic prompt injection testing help, but your architecture matters most: least privilege, justification before execution, and full audit trails.

Should I put agents on a blockchain now?

Usually no. Start with the pattern you actually need: signed events, transparent logs, and revocable permissions. If your agents must coordinate across companies or strangers, you can explore on-chain later without rebuilding everything.

What changed for me

Confidence to ship, not just prototype

NVIDIA’s March 21 signal told me capacity is coming. Starling’s March 20 launch told me consumers will accept agents inside serious apps. HackerOne and Manifold showed me security is maturing in lockstep. So I’m building the boring, valuable stuff now. Ping me next week and I’ll tell you what broke first.