Agentic AI just clicked into production for me this week

Agentic AI is no longer a lab toy. I spent the day pulling updates so you don’t have to, and the pattern is loud: real budgets, real guardrails, real jobs. If you’re starting from zero, these are the exact moves I’d copy right now.

Quick answer: Start tiny and supervised. Build one agent that runs a checklist with 2 to 3 trusted tools, logs every step, and writes clear status updates. Use a queue and a simple state store so you can retry and resume. Give it a service user, keys in a vault, and make it ask before any write. Then pick the model that calls tools cleanly and move on.

Banks quietly gave agents real work

What actually happened

On Feb 17, 2026, b1BANK said it’s partnering with Covecta to deploy agentic AI, and reports called out routine loan and deposit work as the part getting automated. That is regulated, paper-heavy, SLA-driven work moving to agents, not a demo. Source.

Why this matters for beginners

Banks hate chaos. If they’re letting agents touch loans and deposits, the pattern has to be structured: checklists, document pulls, status updates, and validation. That’s perfect for a first build you can supervise without fancy scaffolding.

How I’d copy it

I’d take one boring workflow I already trust, like onboarding or a form check, and wire an agent to read the input, call only the tools I trust, write a plain status back to a sheet or ticket, and tag any exception for a human. Make the agent explain each step in simple language so you can audit fast.
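Here’s a minimal sketch of that loop, assuming a form-check workflow. The tool names (`read_form`, `validate_email`), the `TRUSTED_TOOLS` allowlist, and the `RunReport` shape are all made up for illustration; swap in whatever you already trust.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tools. Real ones would call your own systems.
def read_form(payload: dict) -> dict:
    return {"email": payload.get("email", ""), "name": payload.get("name", "")}

def validate_email(form: dict) -> dict:
    if "@" not in form["email"]:
        raise ValueError(f"email looks invalid: {form['email']!r}")
    return form

# Allowlist: the agent can only call tools registered here.
TRUSTED_TOOLS: dict[str, Callable[[dict], dict]] = {
    "read_form": read_form,
    "validate_email": validate_email,
}

@dataclass
class RunReport:
    status: str = "ok"  # "ok" or "needs_human"
    narration: list[str] = field(default_factory=list)

def run_checklist(steps: list[str], payload: dict) -> RunReport:
    """Run each step in order, narrate it, and stop for a human on any exception."""
    report = RunReport()
    data = payload
    for name in steps:
        tool = TRUSTED_TOOLS.get(name)
        if tool is None:
            report.status = "needs_human"
            report.narration.append(f"Stopped: '{name}' is not a trusted tool.")
            return report
        try:
            data = tool(data)
            report.narration.append(f"Ran '{name}' and it succeeded.")
        except ValueError as exc:
            report.status = "needs_human"
            report.narration.append(f"Ran '{name}' and hit an exception: {exc}")
            return report
    return report
```

The `narration` list is what you would paste into the sheet or ticket, and `needs_human` is the exception tag.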

Security finally wrapped agents in guardrails

What actually happened

Also on Feb 17, 2026, Palo Alto Networks said it intends to acquire Koi, a startup focused on agentic AI security, with coverage framing the goal as keeping AI agents in check. Translation: enterprises now treat agents like users with privileges and a blast radius. Source.

Why this matters for beginners

The difference between a weekend toy and something your team actually uses is permissions and audit. Least privilege wins. Log everything. Make access easy to revoke. It’s not flashy, but it’s what keeps usage from getting shut down.

How I’d copy it

I create a service user for the agent, keep credentials in a vault, and force a permission check before any sensitive tool. The agent must say what it’s trying to do and why. You’ll catch weird loops and bad calls early.
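A rough sketch of that permission gate, under some assumptions: the `GRANTS` table, the tool names, and the `authorize` and `revoke` helpers are hypothetical, and a real setup would load grants from your identity system, not a dict.

```python
import logging

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent.audit")

# Hypothetical grants for the agent's service user: tool name -> allowed actions.
GRANTS = {"crm": {"read"}, "ticketing": {"read", "write"}}

class PermissionDenied(Exception):
    pass

def authorize(tool: str, action: str, intent: str, reason: str) -> None:
    """Check a call against the grants and log what the agent says it is doing."""
    if not intent.strip() or not reason.strip():
        raise PermissionDenied("agent must state what it is doing and why")
    allowed = action in GRANTS.get(tool, set())
    log.info("tool=%s action=%s allowed=%s intent=%r reason=%r",
             tool, action, allowed, intent, reason)
    if not allowed:
        raise PermissionDenied(f"'{action}' on '{tool}' is not granted")

def revoke(tool: str) -> None:
    """Drop every grant for a tool in one call."""
    GRANTS.pop(tool, None)
```

Call `authorize(...)` right before each sensitive tool call. The denied attempts in the log are usually the first sign of a loop or a confused plan.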

Infra money is chasing long-lived, tool-using agents

What actually happened

On Feb 17, 2026, Render announced a $100 million raise to build cloud tech for AI agents: always-on orchestration, events, durable memory, predictable costs. That’s a production bet, not a hobby. Source.

Why this matters for beginners

When agents crash on webhooks or forget state between steps, you’ll think agents don’t work when the real issue is plumbing. Queues, retries, background workers, and timeouts fit how agents actually use tools.
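To make the retry part concrete, here’s a small sketch of a wrapper with exponential backoff. The function name and defaults are my own choices, and it assumes your tool raises `TimeoutError` or `ConnectionError` when a call stalls or drops.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")

def call_with_retries(
    tool: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry a flaky tool call, doubling the wait after each failure."""
    last_error = None
    for attempt in range(attempts):
        try:
            return tool()
        except (TimeoutError, ConnectionError) as exc:
            last_error = exc
            if attempt < attempts - 1:
                sleep(base_delay * 2 ** attempt)  # 0.5s, 1s, 2s, ...
    raise RuntimeError(f"tool failed after {attempts} attempts") from last_error
```

The `sleep` parameter is only there so you can test the backoff without actually waiting.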

How I’d copy it

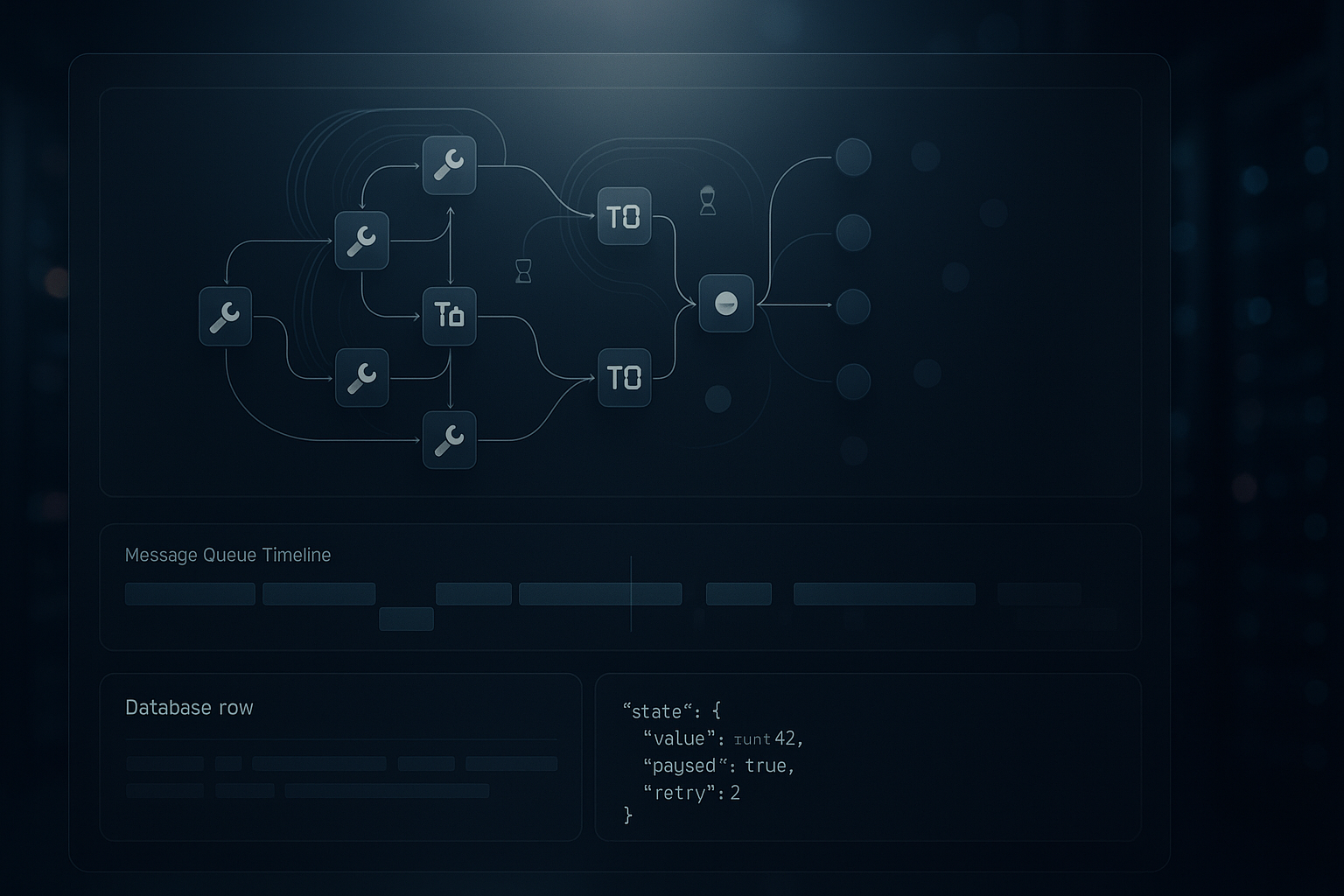

Even on a shoestring, I use a managed queue for tasks and a database row per run with a JSON state dump. That gets me resume, replay, and honest audits without overbuilding.
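A minimal sketch of the state half of that setup, using SQLite as a stand-in for whatever database you have. The `runs` table, its columns, and the state shape (`next_step`, `history`) are assumptions for illustration. The queue is left out; a worker would just call `run` with the run id it pulled.

```python
import json
import sqlite3
from typing import Callable

def open_store(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS runs (run_id TEXT PRIMARY KEY, state TEXT NOT NULL)"
    )
    return conn

def save_state(conn: sqlite3.Connection, run_id: str, state: dict) -> None:
    # One row per run; the whole state is stored as a JSON dump.
    conn.execute(
        "INSERT OR REPLACE INTO runs (run_id, state) VALUES (?, ?)",
        (run_id, json.dumps(state)),
    )
    conn.commit()

def load_state(conn: sqlite3.Connection, run_id: str) -> dict:
    row = conn.execute(
        "SELECT state FROM runs WHERE run_id = ?", (run_id,)
    ).fetchone()
    return json.loads(row[0]) if row else {"next_step": 0, "history": []}

def run(conn: sqlite3.Connection, run_id: str, steps: list[Callable[[], str]]) -> dict:
    """Run steps in order, checkpointing after each so a crash can resume."""
    state = load_state(conn, run_id)
    for index in range(state["next_step"], len(steps)):
        state["history"].append(steps[index]())
        state["next_step"] = index + 1
        save_state(conn, run_id, state)
    return state
```

If a step crashes, calling `run` again with the same `run_id` picks up at the failed step, and the `history` list doubles as the audit trail.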

Enterprises are starting with data, not demos

What actually happened

On Feb 17, 2026, Unilever signed on with Google Cloud to target agentic AI with real enterprise data at scale. That’s pipelines and governance instead of playground prompts.

Why this matters for beginners

Data hygiene and repeatability beat novelty every time. Clear access patterns, lineage, and ownership are boring on purpose, and they’re where the leverage is for small teams too.

How I’d copy it

I pick one data source I control, let the agent read, write, and explain what it did, then add a second tool. No 12-API Frankensteins on day one. Narrow and dependable ships faster.

Models are racing to be agent-native

What actually happened

Alibaba’s Qwen3.5 was flagged for a Feb 18 release with a focus on agentic AI. The point isn’t a leaderboard. It’s that vendors are tuning for planning, tool use, and integration with other systems.

Why this matters for beginners

Agent performance now lives or dies on clean function calls, multi-step reasoning, and refusal boundaries. When a model family markets itself as agentic, I read it as better native support for tools and control, which means less custom scaffolding for you.

How I’d copy it

I test two models on the exact same tool-calling task and keep the one that returns structured outputs reliably, keeps calls tidy, and fails gracefully. No abstract IQ tests needed.
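Here’s roughly what that comparison can look like. It’s a sketch: each "model" is any function that takes a prompt and returns raw text, and the `lookup_invoice` tool and its required argument are invented for the example. You’d wrap your real model clients to fit that shape.

```python
import json
from typing import Callable

# Hypothetical tool schema: tool name -> argument names that must be present.
REQUIRED_ARGS = {"lookup_invoice": {"invoice_id"}}

def is_clean_call(raw: str) -> bool:
    """True if the raw output is valid JSON naming a known tool with its required args."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError:
        return False
    if not isinstance(call, dict):
        return False
    tool = call.get("tool")
    args = call.get("arguments")
    if not isinstance(tool, str) or not isinstance(args, dict):
        return False
    required = REQUIRED_ARGS.get(tool)
    return required is not None and required.issubset(args.keys())

def success_rate(model: Callable[[str], str], prompts: list[str]) -> float:
    clean = sum(is_clean_call(model(prompt)) for prompt in prompts)
    return clean / len(prompts)

def pick_model(candidates: dict[str, Callable[[str], str]], prompts: list[str]) -> str:
    """Return the name of the candidate with the highest clean-call rate."""
    return max(candidates, key=lambda name: success_rate(candidates[name], prompts))
```

This only scores structured output. Graceful failure needs its own prompts, for example ones where the right answer is to refuse or ask for missing input.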

My beginner blueprint after this week

If I had to start from zero today, here’s the shape I’d use for my first agent. It borrows the bank’s discipline, security’s guardrails, infra pragmatism, enterprise data focus, and model sanity.

The 5-step starter plan

- Define the checklist and mark read-only vs write steps.

- Pick one agent-native model and 2 to 3 tools with strict schemas.

- Build the smallest loop, log every step, and persist state per run.

- Add least-privilege permissions, service user, and vault-stored keys.

- Set human handoff on low confidence or rule breaks, then measure time saved and errors.
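The first and last steps of that plan fit in a few lines. This is a sketch with made-up names: `Step`, `next_action`, and the 0.8 confidence floor are assumptions, and how you get a confidence score depends on your model and checks.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Step:
    name: str
    writes: bool  # True if the step changes anything outside the agent

CONFIDENCE_FLOOR = 0.8  # hypothetical threshold; tune it against real runs

def next_action(step: Step, confidence: float, approved: bool = False) -> str:
    """Decide whether to run a step, ask for approval, or hand off to a human."""
    if confidence < CONFIDENCE_FLOOR:
        return "handoff"
    if step.writes and not approved:
        return "ask_approval"
    return "run"

checklist = [
    Step("read_ticket", writes=False),
    Step("draft_summary", writes=False),
    Step("post_summary", writes=True),
]
```

Read-only steps run on their own. Write steps wait for a yes, and anything below the floor goes to a person.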

A tiny case study you can copy

I built a vendor invoice checker in two hours. The agent reads a PDF, extracts totals, cross-checks a Google Sheet of expected amounts, and either flags a mismatch or posts “all good” with a link to the exact row. Two tools, one table for state, one log file.
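The cross-check itself is the simple part. Here’s a sketch of it, assuming the PDF total has already been extracted as a string. The `EXPECTED` dict stands in for the sheet, and the invoice ids, amounts, and row numbers are invented.

```python
from decimal import Decimal

# Hypothetical stand-in for the sheet: invoice id -> (row number, expected total).
EXPECTED = {
    "INV-1001": (2, Decimal("1250.00")),
    "INV-1002": (3, Decimal("89.90")),
}

def check_invoice(invoice_id: str, extracted_total: str) -> str:
    """Compare the total pulled from the PDF with the expected amount."""
    if invoice_id not in EXPECTED:
        return f"FLAG {invoice_id}: no expected amount found"
    row, expected = EXPECTED[invoice_id]
    total = Decimal(extracted_total)  # Decimal avoids float rounding on money
    if total != expected:
        return f"FLAG {invoice_id}: PDF total {total} != expected {expected} (row {row})"
    return f"OK {invoice_id}: all good (row {row})"
```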

What worked: forcing the agent to narrate each step in plain English cut my debugging time in half. What I’d change: I’d add a dry-run mode earlier to test write steps without touching the sheet.
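For the dry-run mode, something like this would have been enough. It’s a sketch: `SheetWriter` and its in-memory `rows` dict are stand-ins for a real sheet client.

```python
class SheetWriter:
    """Wraps write steps so they can be rehearsed without touching the sheet."""

    def __init__(self, dry_run: bool = True):
        self.dry_run = dry_run
        self.rows: dict[int, str] = {}   # stand-in for the real sheet
        self.planned: list[str] = []     # what a dry run would have written

    def set_status(self, row: int, status: str) -> None:
        if self.dry_run:
            self.planned.append(f"would set row {row} to {status!r}")
            return
        self.rows[row] = status
```

Defaulting `dry_run` to `True` means a forgotten flag rehearses the write instead of making it.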

Common pitfalls I see

Trying to make one agent do five jobs at once is the fastest way to stall. Skipping the audit trail is another. If you can’t answer what it did, with what tools, and why, you won’t trust it and neither will anyone else. And if a model can’t call your tools with clean JSON, switch models before you add more glue.

FAQ

What is agentic AI in simple terms?

It’s an AI that can plan steps and use tools to complete a task, not just answer a prompt. Think checklists, API calls, and clear logs instead of one-off replies.

How do I pick the first workflow for an AI agent?

Choose something boring and deterministic with 5 to 10 steps, clear inputs and outputs, and low blast radius. Form checks, ticket summaries, or daily digests are perfect.

What stack do I actually need to start?

One agent-friendly model, 2 to 3 tools with strict schemas, a managed queue, a simple database for state, and logging. Add a service user and a secrets vault for safety.

How do I keep agents from breaking stuff?

Use least privilege, require explicit approvals before writes, log every action, and add a human handoff on low confidence. Make rollback easy by tracking state per run.

How do I compare models for agent work?

Run the same tool-calling task on both. Keep the one that returns structured outputs consistently, handles retries, and fails with useful errors you can act on.

Why I’m optimistic after this week

Feb 17 felt like the moment the story shifted from “cool demos” to “please finish this checklist and write it down.” Banks moved first, security showed up, infra is funding the boring bits, enterprises are anchoring on data, and models are getting agent-native. If you’re a beginner, you’re right on time. Start tiny, log everything, and ship.