Agentic AI finally clicked for me today. After combing through Feb 18, 2026 updates on hardware, models, real deployments, and validation, it all snapped into a simple stack I can actually build on this week.

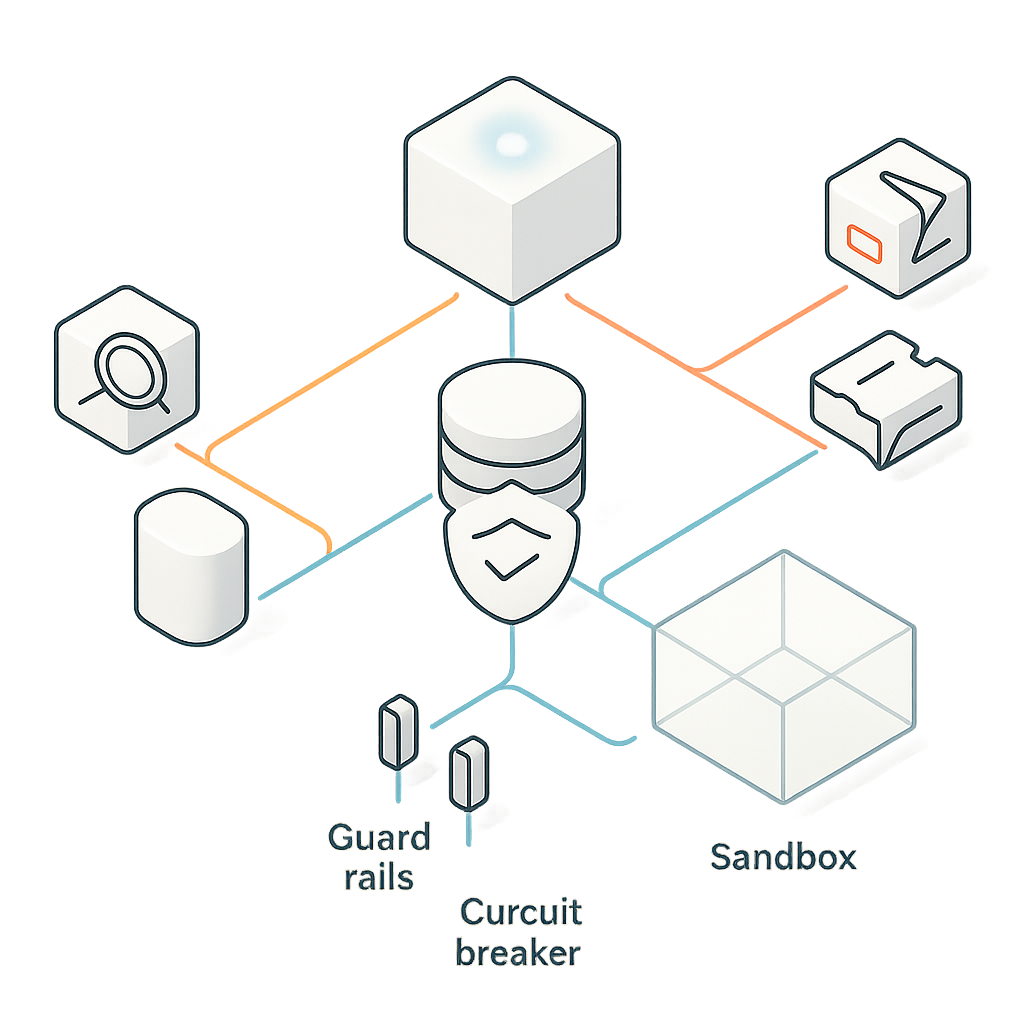

Quick answer: If you want a practical agentic AI setup now, use a reliable planning model for orchestration, keep tools minimal and typed, centralize memory and policy, test in a sandbox with circuit breakers, and ship in phases. Thanks to faster chips, smarter models, and real-world commerce pilots, you can start small this weekend and avoid pricey dead ends.

What I mean by agentic AI

Agentic AI is AI that plans and decides the next step, not just answers a question. It chooses tools, loops until done, and returns a result. I treat it like a junior teammate: give it a clear goal, specific tools, guardrails, and a safe place to practice.

What changed on Feb 18, 2026

Infra speed: NVIDIA Blackwell Ultra gets serious about agent workloads

Coverage on Feb 18, 2026 highlighted NVIDIA’s claims that Blackwell Ultra delivers up to 50x higher performance and up to 35x lower cost for agentic workloads. Agents chew through tokens with planning loops, tool calls, retrieval, and self-checks, so a jump of that size changes what’s affordable to run. I’m not buying GPUs tomorrow, but I am designing so I can swap runtimes easily. If the numbers hold up, local and edge deployments start to look practical for day-to-day agents. Read the coverage I saw here: NVIDIA Blackwell Ultra performance claims.

What I’m doing: cleanly separating my reasoning layer from the tool layer, and avoiding hard locks to any single cloud runtime. Future me gets to pick the cheapest lane.
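
A minimal sketch of that separation, in Python. The names (`Step`, `Planner`, `ScriptedPlanner`, `run_agent`) are hypothetical and not taken from any framework, and the scripted planner only stands in for whatever hosted or local model does the reasoning.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Protocol, Tuple


@dataclass
class Step:
    tool: str   # name of the tool to call
    args: dict  # keyword arguments for that tool


class Planner(Protocol):
    """Reasoning layer: returns the next step, or None when the goal is done."""

    def next_step(self, goal: str, history: List[Tuple[str, object]]) -> Optional[Step]: ...


class ScriptedPlanner:
    """Stand-in planner that replays fixed steps. A model-backed planner
    exposing the same method could replace it without touching the tools."""

    def __init__(self, steps: List[Step]):
        self._steps = list(steps)

    def next_step(self, goal, history):
        done = len(history)
        return self._steps[done] if done < len(self._steps) else None


def run_agent(planner: Planner, tools: Dict[str, Callable], goal: str,
              max_steps: int = 10) -> List[Tuple[str, object]]:
    """Ask the planner for a step, run the matching tool, record the result."""
    history = []
    for _ in range(max_steps):  # hard cap so a confused planner cannot loop forever
        step = planner.next_step(goal, history)
        if step is None:
            break
        result = tools[step.tool](**step.args)
        history.append((step.tool, result))
    return history


# Tool layer: plain functions registered by name.
tools = {"add": lambda a, b: a + b, "shout": lambda text: text.upper()}
planner = ScriptedPlanner([Step("add", {"a": 2, "b": 3}),
                           Step("shout", {"text": "done"})])
history = run_agent(planner, tools, goal="demo")
print(history)
```

Because `run_agent` only depends on the `next_step` method, swapping runtimes should come down to writing another class with that method.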

Smarter brains: Claude Sonnet 4.6 tightens planning and tool use

Also on Feb 18, 2026, Claude Sonnet 4.6 was flagged for better coding, reasoning, and agentic reliability. Beginners usually get tripped up by flaky tool use and plans that wander. Improvements in structured tool calling, longer coherent reasoning, and safer code generation mean fewer duct-tape fixes on my side.

What I’m doing: defaulting to Claude Sonnet 4.6 for orchestration and handing simple fetch-transform tasks to a smaller, cheaper model. I’m asserting schemas on outputs and tightening prompts so it picks tools step by step instead of guessing.
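
Here is one way the schema assertion could look, using only the standard library. The tool names and argument schemas are made up for illustration, and the sketch assumes the model has been prompted to reply with a JSON object shaped like `{"tool": ..., "args": {...}}`.

```python
import json

# Hypothetical tool schemas: tool name -> {argument name: expected type}.
TOOL_SCHEMAS = {
    "get_customer": {"email": str},
    "create_ticket": {"title": str, "priority": int},
}


def parse_tool_call(raw: str) -> dict:
    """Parse a model reply and reject anything that is off-schema."""
    data = json.loads(raw)  # bad JSON raises json.JSONDecodeError, a ValueError
    if not isinstance(data, dict):
        raise ValueError("tool call must be a JSON object")
    tool = data.get("tool")
    if not isinstance(tool, str) or tool not in TOOL_SCHEMAS:
        raise ValueError(f"unknown tool: {tool!r}")
    args = data.get("args")
    if not isinstance(args, dict):
        raise ValueError("args must be a JSON object")
    schema = TOOL_SCHEMAS[tool]
    if set(args) != set(schema):
        raise ValueError(f"expected args {sorted(schema)}, got {sorted(args)}")
    for name, expected_type in schema.items():
        # Exact type match, so a JSON true is not accepted where an int is expected.
        if type(args[name]) is not expected_type:
            raise ValueError(f"{name} must be {expected_type.__name__}")
    return {"tool": tool, "args": args}


call = parse_tool_call(
    '{"tool": "create_ticket", "args": {"title": "Late order", "priority": 2}}'
)
print(call)
```

Anything that raises `ValueError` never reaches a real tool, which makes it the natural place to retry with a tighter prompt or stop.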

Real deployments: Ada’s unified reasoning engine across channels

On Feb 18, 2026, Ada announced a unified reasoning engine that powers agentic customer experiences across channels. This validates the pattern I’ve been using on smaller projects: one reasoning brain up top, multiple task agents underneath, with shared memory and policy. No need to rebuild logic for chat, email, or voice. You can see the announcement here: Ada unified reasoning engine.

What I’m doing: consolidating intents, policies, and persona settings in one place and letting channels be just channels. Shared memory store, consistent guardrails, modular tools.

Money flows: DBS trials Visa’s agentic commerce tools

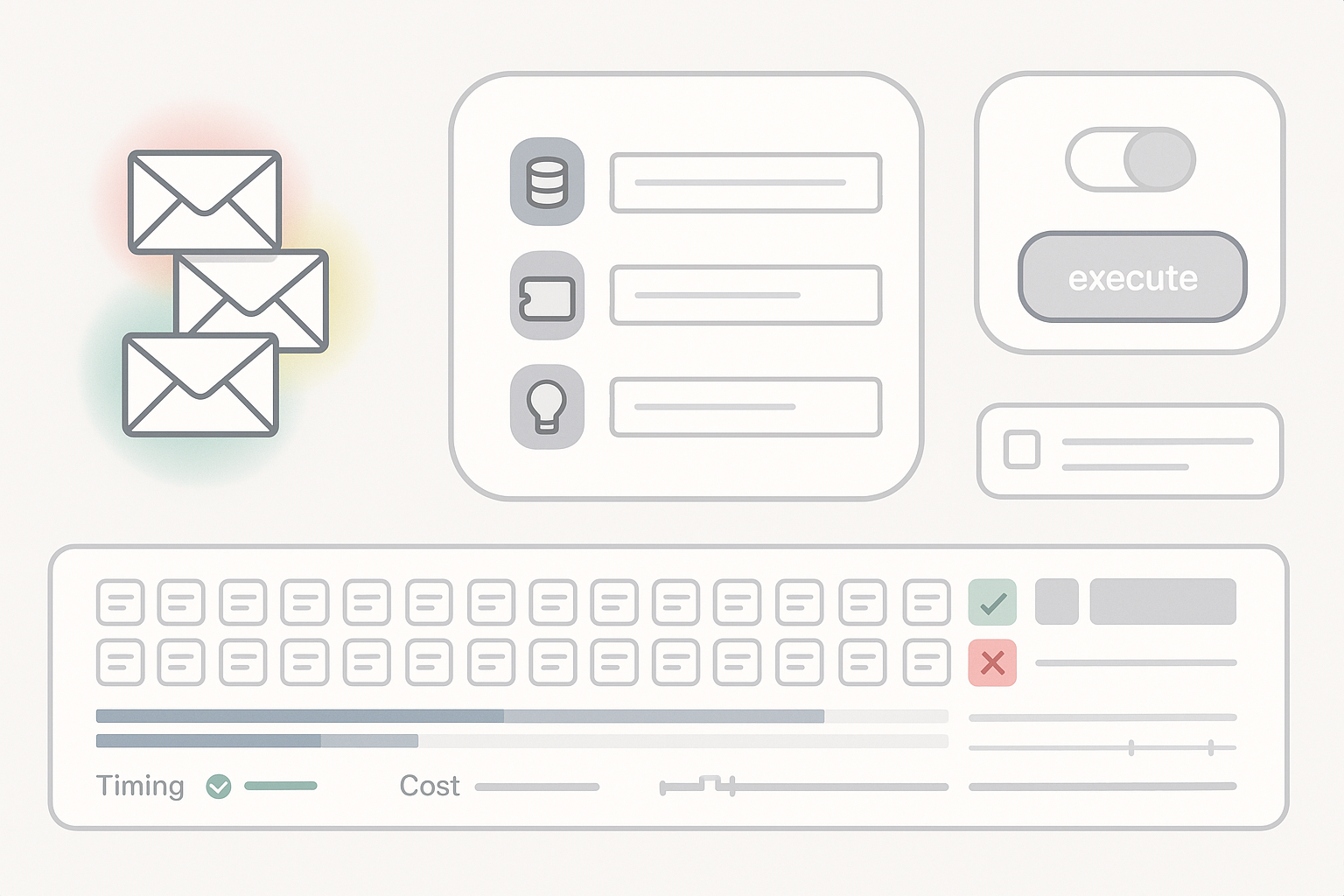

Payments are where agents either shine or scare people. Seeing DBS trial Visa’s agentic commerce tools on Feb 18, 2026 tells me the rails for transactions, compliance, and merchant workflows are getting real pressure testing. That matters if your agent needs to shop, invoice, reconcile, or apply loyalty credits autonomously. Here’s the coverage: DBS x Visa agentic commerce pilot.

What I’m doing: scoping a tiny commerce pilot where the agent builds a cart, fetches shipping options, applies rules, and requests human approval on the first runs. Once it proves itself, approvals taper for low-risk thresholds. Every tool permission is versioned like code.
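
The tapering approval rule can be sketched in a few lines. The thresholds (20 trial runs, a 50.0 auto-approve limit) and the names `ApprovalRule` and `needs_human_approval` are placeholders I picked for the example, not values from any pilot.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovalRule:
    version: str               # bumped on every change, like any other code
    trial_runs: int            # the first N approved runs always go to a human
    auto_approve_limit: float  # cart totals at or below this may skip review later


def needs_human_approval(rule: ApprovalRule, approved_runs: int, cart_total: float) -> bool:
    """Return True when a person has to sign off before the agent proceeds."""
    if approved_runs < rule.trial_runs:
        return True  # still proving itself: every run is reviewed
    return cart_total > rule.auto_approve_limit  # afterwards, only larger carts


RULE_V1 = ApprovalRule(version="1.0.0", trial_runs=20, auto_approve_limit=50.0)

print(needs_human_approval(RULE_V1, approved_runs=0, cart_total=10.0))
print(needs_human_approval(RULE_V1, approved_runs=20, cart_total=10.0))
print(needs_human_approval(RULE_V1, approved_runs=20, cart_total=500.0))
```

Keeping the rule in a frozen dataclass with a version string means a change to the threshold shows up in a diff instead of in a surprise charge.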

Safety nets: Cloud Range adds pre-launch AI validation

Also on Feb 18, 2026, Cloud Range introduced an AI Validation Range so teams can test, validate, and secure agents before deployment. Writing prompts is one thing. Proving your agent won’t leak PII, jailbreak itself, or loop forever on a weird return value is another. A padded room for agents is extremely useful.

What I’m doing: building a small validation harness that simulates prompt injections, missing fields, and hostile tool outputs. I log every call and trip a circuit breaker on repeated failures. Commercial or DIY, the point is the same: ship with receipts.
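
A rough sketch of the circuit-breaker piece, assuming "repeated failures" means consecutive exceptions from tool calls. `CircuitBreaker`, `CircuitOpen`, and `hostile_tool` are hypothetical names, and the limit of three is arbitrary.

```python
class CircuitOpen(RuntimeError):
    """Raised when the breaker refuses to run any more tool calls."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3):
        self.max_failures = max_failures
        self.failures = 0  # consecutive failures so far
        self.log = []      # one entry per attempted call

    def call(self, tool, *args, **kwargs):
        if self.failures >= self.max_failures:
            raise CircuitOpen(f"stopped after {self.failures} consecutive failures")
        name = getattr(tool, "__name__", repr(tool))
        try:
            result = tool(*args, **kwargs)
        except Exception as exc:
            self.failures += 1
            self.log.append(("error", name, repr(exc)))
            raise
        self.failures = 0  # any success resets the count
        self.log.append(("ok", name, result))
        return result


def hostile_tool(payload):
    """Simulated tool that always fails, as if its output could not be parsed."""
    raise ValueError("malformed tool output")


breaker = CircuitBreaker(max_failures=3)
outcomes = []
for _ in range(5):
    try:
        breaker.call(hostile_tool, {"order_id": 1})
    except CircuitOpen:
        outcomes.append("breaker open")
    except ValueError:
        outcomes.append("tool failed")
print(outcomes)
```

After the third failure the tool is no longer called at all, so a looping agent stops burning tokens, and the log holds the evidence of what went wrong.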

If I were starting today

Pick one repeatable workflow with crisp guardrails

Think refunds under 100 dollars, a weekly KPI summary, or triaging support tickets into three buckets. If a human can sketch the process in ten lines, an agent can probably learn it.

Give your agent a good brain and a tiny toolkit

Use a reasoning-first model like Claude Sonnet 4.6 for planning and decisions. Expose only the tools it truly needs: search docs, fetch customer record, create ticket, send email template. Keep outputs structured so you can verify before actions fire.

Wrap everything with memory and policy

Store conversation state, user preferences, and recent tasks in one place. Treat policy like code: what the agent can see, what it can do, what needs approval, and what is never allowed. Future you will say thanks.
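
One possible shape for policy as code, with a default-deny fallback. The action names and the `Policy` and `Decision` types are invented for illustration; a real policy would also cover what the agent is allowed to see, which this sketch leaves out.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class Policy:
    version: str
    allowed: frozenset         # actions the agent may take on its own
    needs_approval: frozenset  # actions that wait for a human
    never: frozenset           # actions that are always refused

    def check(self, action: str) -> Decision:
        if action in self.never:
            return Decision.DENY
        if action in self.needs_approval:
            return Decision.NEEDS_APPROVAL
        if action in self.allowed:
            return Decision.ALLOW
        return Decision.DENY  # anything unlisted is denied by default


SUPPORT_POLICY = Policy(
    version="2026-02-18.1",
    allowed=frozenset({"read_ticket", "draft_reply"}),
    needs_approval=frozenset({"issue_refund"}),
    never=frozenset({"delete_customer"}),
)

for action in ("draft_reply", "issue_refund", "delete_customer", "export_all_data"):
    print(action, SUPPORT_POLICY.check(action).value)
```

The `never` set is checked first, so listing an action there wins even if it also slips into `allowed` by mistake.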

Test like a skeptic

Before any real data touches it, hammer it with worst cases: half-complete profiles, tricky phrasing, malicious prompts. Log it all. If you can, run flows in a validation sandbox so you see what breaks while nothing valuable is at risk.

Ship in phases and learn fast

Start with human-in-the-loop for a week. Move to auto-complete with soft limits. Then allow fully autonomous runs for low-risk paths. It’s not about perfection. It’s about proof.

How today’s updates change the game

- Better models plus faster chips make deeper planning loops affordable, so you do not have to dumb down your use case.

- Real commerce pilots move this past toy demos, giving you patterns for risk and approvals to copy.

- Validation ranges help you prove safety without turning into a security engineer overnight.

Budget notes I’m tracking

NVIDIA’s claims point to a near future where local or hybrid agents make more financial sense. Today I’ll still build on hosted APIs because it’s fastest, but I’m designing for portability. Tool adapters live behind interfaces, prompts are versioned, and I track per-task cost. When infra gets cheaper, I can switch over a weekend, not a quarter.
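
Per-task cost tracking does not need much. In this sketch the per-1,000-token prices are placeholder numbers, not real rates from any provider, and `CostTracker` is a hypothetical helper.

```python
from collections import defaultdict


class CostTracker:
    """Accumulates model spend per task so runtimes can be compared later."""

    def __init__(self, input_price_per_1k: float, output_price_per_1k: float):
        self.input_price_per_1k = input_price_per_1k
        self.output_price_per_1k = output_price_per_1k
        self.by_task = defaultdict(float)

    def record(self, task: str, input_tokens: int, output_tokens: int) -> float:
        """Add one model call to a task's running total and return its cost."""
        cost = ((input_tokens / 1000) * self.input_price_per_1k
                + (output_tokens / 1000) * self.output_price_per_1k)
        self.by_task[task] += cost
        return cost


# Placeholder prices; substitute whatever the current runtime charges.
tracker = CostTracker(input_price_per_1k=0.5, output_price_per_1k=2.0)
tracker.record("ticket-triage", input_tokens=2000, output_tokens=500)
tracker.record("ticket-triage", input_tokens=1000, output_tokens=250)
tracker.record("kpi-summary", input_tokens=4000, output_tokens=1000)
print(dict(tracker.by_task))
```

Running the same tasks against a second tracker with another runtime's prices gives a direct per-task comparison before any switch.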

My quick-start kit for this weekend

I’d pick a support workflow like turning any complaint email into a correctly tagged ticket with a first-reply draft. I’d let Claude Sonnet 4.6 read the email, decide the category, and propose next steps. I’d give it three tools: get customer record by email, create or update ticket, and fetch a knowledge base snippet. If lifetime value is high or sentiment is very negative, it flags for human approval and stops.

Then I’d run a tiny eval harness with 50 anonymized emails, including greeting-only messages and weird edge cases. I’d log tool calls and timing, spot-check outputs, tighten prompts, and push it to a small group. I always learn more in 48 hours of real use than in a month of planning.
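
A tiny eval harness along those lines might look like this. The keyword-based `triage` function is only a stand-in for the model call, and the four cases are invented examples rather than real emails.

```python
import time


def triage(email_text: str) -> str:
    """Stand-in classifier; a real run would call the model here."""
    text = email_text.lower()
    if "refund" in text or "charge" in text:
        return "billing"
    if "crash" in text or "error" in text:
        return "bug"
    return "needs_info"


def run_eval(cases, classify):
    """Run every case, timing each call, and return accuracy plus per-case rows."""
    rows = []
    for text, expected in cases:
        start = time.perf_counter()
        predicted = classify(text)
        elapsed = time.perf_counter() - start
        rows.append({"text": text, "expected": expected, "predicted": predicted,
                     "ok": predicted == expected, "seconds": elapsed})
    accuracy = sum(row["ok"] for row in rows) / len(rows)
    return accuracy, rows


CASES = [
    ("I was charged twice, please refund me", "billing"),
    ("The app crashes when I log in", "bug"),
    ("Hello", "needs_info"),                    # greeting-only edge case
    ("My invoice total is wrong", "billing"),   # the stand-in misses this one
]
accuracy, rows = run_eval(CASES, triage)
failures = [row["text"] for row in rows if not row["ok"]]
print(f"accuracy={accuracy:.2f}", failures)
```

The failing rows are the useful part: each one is a prompt or tool fix to make before the next run.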

What to do before next week

Version everything like code: prompts, policies, and tool schemas. When results shift, you’ll know why. Also, write your agent’s job in one sentence and pin it where you build. Clarity speeds everything up.

FAQ

What is agentic AI in simple terms?

It’s AI that can plan and act. You set a goal, it figures out steps, calls tools, checks its work, and returns results. Think of it as a junior teammate with clear rules and a tight toolkit.

Do I need GPUs to start with agentic AI?

No. Start with hosted APIs for speed. Given the Blackwell Ultra performance claims from Feb 18, 2026, I’m designing for easy runtime swaps so I can move to local or hybrid later, when it’s cost-effective.

How do I test agents safely before launch?

Use a validation harness or a commercial range that simulates prompt injection, missing fields, and bad tool outputs. Log everything, enforce schemas, and add circuit breakers on repeated failures. Prove it in a sandbox first.

Which model should plan vs execute tasks?

I use a strong reasoning model like Claude Sonnet 4.6 for orchestration and a smaller, cheaper model for simple fetch-transform steps. Keep outputs structured so you can verify and audit each action.

Final thought

Feb 18, 2026 felt like a turning point. Sharper brains, cheaper pipes, real companies letting agents touch money, and a padded room for testing. If you’ve been waiting for the water to be warm, this is your nudge. Start small, ship something safe, and let next week’s you handle the fancy stuff.