Agentic AI just hit a real inflection point, and I felt it. It moved from demo talk to shipping reality in a single week, and I don’t want you to miss the signals that actually matter.

Quick answer

If you’re short on time: March 29 delivered five big Agentic AI moves. Arm announced an “AGI CPU” for agent workloads, Prisma AIRS 3.0 was framed as a real security control layer, Fortune reported agents driving roughly 10% of revenue for some brands, Lloyds Banking Group and the University of Glasgow started a four-year research partnership, and the A-Evolve toolkit pushed toward self-correcting systems. Translation: it just got cheaper, safer, and more practical to ship agents.

Arm says it out loud: a CPU for the agentic cloud

On March 29, Arm announced its “AGI CPU,” calling it the silicon foundation for the agentic AI cloud era. The message is pretty direct: treat agents as first-class workloads, not sidecars to model inference. If you’ve ever hacked around timeouts, context limits, and flaky tool calls, this matters. The substrate is shifting to long-running tasks, rapid tool use, and heavy memory.

I expect a wave of SDKs, runtimes, and cloud SKUs tuned for stateful agents. If that happens, long-running experiments get easier and agent workloads hit fewer reliability problems on commodity cloud. If you’re learning, lean into vector stores, event-driven orchestration, and persistent memory. The industry is going there, and Arm basically said the quiet part out loud.

My take

No silver bullets, but when hardware draws the map, software follows it. Design as if your agents will never be stateless again.

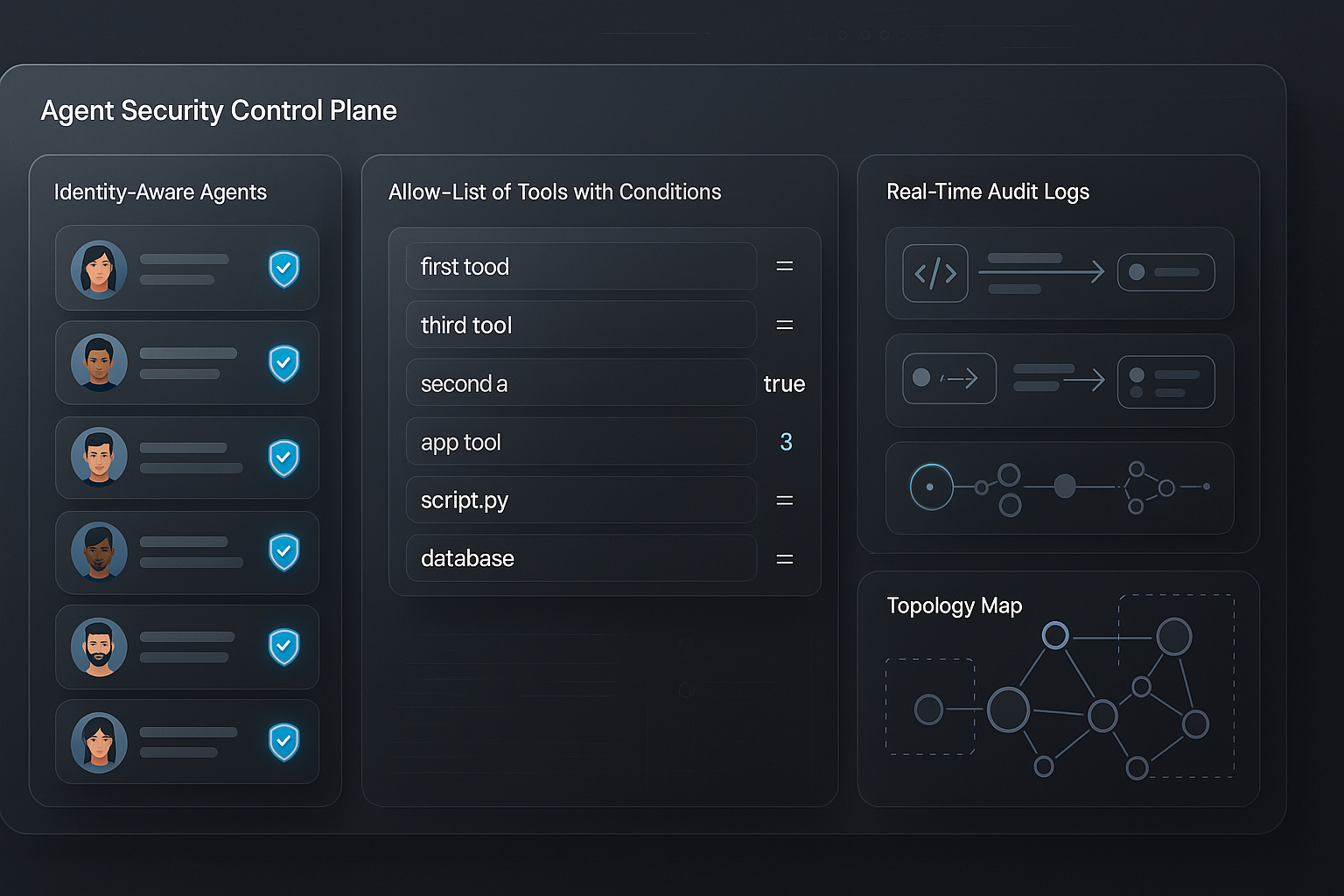

Security grows up: Prisma AIRS 3.0 aims at the agent stack

Also on March 29, a deep-dive asked a sharp question: with Prisma AIRS 3.0, is Palo Alto Networks trying to own the agentic AI security stack? I like the framing because once software starts taking actions, the old scan-and-block mindset falls short. We need identity-aware agents, clear data boundaries, and continuous monitoring that treats agent actions as auditable API calls, not just chat history.

If you’ve watched a toolchain run wild because one policy was missing, you get it. A real control plane turns demos into systems. That is the direction the Prisma AIRS 3.0 coverage highlights: policy, provenance, and runtime oversight stitched together.

Why this matters if you’re new

Agents aren’t just LLM prompts. They act, and actions create risk. Start with explicit allow-lists for tools, log every call, keep secrets out of prompts, and make decisions replayable. You’ll see vendors make these basics far easier over the next few months.
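
Here is a minimal sketch of the first two basics, an explicit allow-list and a log of every call. Everything in it is my own illustration: the tool names (`lookup_order`, `refund_order`), the `call_tool` wrapper, and the in-memory `CALL_LOG` are hypothetical, not part of any vendor's product.

```python
import time

# Hypothetical tools; the names and return values are illustrative only.
def lookup_order(order_id: str) -> dict:
    return {"order_id": order_id, "status": "shipped"}

def refund_order(order_id: str) -> dict:
    return {"order_id": order_id, "refunded": True}

TOOLS = {"lookup_order": lookup_order, "refund_order": refund_order}

# Explicit allow-list: any tool not named here is denied by default.
ALLOWED_TOOLS = {"lookup_order"}

# Append-only record of every attempted call, allowed or not.
CALL_LOG: list[dict] = []

def call_tool(name: str, **kwargs) -> dict:
    """Run a tool only if it is allow-listed, and log the attempt either way."""
    allowed = name in ALLOWED_TOOLS and name in TOOLS
    entry = {"ts": time.time(), "tool": name, "args": kwargs, "allowed": allowed}
    CALL_LOG.append(entry)
    if not allowed:
        raise PermissionError(f"tool {name!r} is not on the allow-list")
    result = TOOLS[name](**kwargs)
    entry["result"] = result  # keep the outcome next to the request
    return result
```

With this in place, `call_tool("lookup_order", order_id="A1")` returns the order, while `call_tool("refund_order", order_id="A1")` raises `PermissionError`. Both attempts end up in `CALL_LOG`, which is the point: denied calls are as interesting as allowed ones.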

The 10% wake-up call: agents already drive real revenue

This one made me pause. On March 29, Fortune reported agents are driving about 10% of revenue for some brands. It tracks with what I’m seeing: agents quietly own boring but profitable loops like renewals, replenishment, triage, and cart recovery. No fireworks, just faster cycles and better context.

If you’re on the fence, treat this as a market signal. Package a single agent with the right data, one or two scoped tools, and crisp rules. The results feel like magic because they’re disciplined automation with better brains.

What I’d do if I were starting today

Pick a high-frequency workflow with a clear success metric. Don’t chase general intelligence. Chase measurable wins you can ship this week.

A 4-year bet on safer, smarter agents

Also on March 29, Lloyds Banking Group and the University of Glasgow kicked off a four-year research partnership focused on agentic AI. I love this because finance has real constraints and real stakes. If agents can be trusted there, they can probably be trusted anywhere. And four years is a proper commitment, the kind that usually produces better test suites, open benchmarks, and evaluation methods the rest of us can use.

To me, this is the shift from cool demos to operational rigor. Expect more public thinking on plan verification, action containment, and risk modeling inside the agent loop.

The toolkit play: a push toward self-correction

Rounding out March 29, a piece on A-Evolve framed a potential PyTorch moment for agent systems: less hand-tuning, more automated state mutation and mid-flight self-correction. In plain English, think of an agent as a learning loop. It runs, finds friction, adjusts, and tries again. You still set guardrails and success criteria, but you’re not babysitting every branch.

What I’d try first

Start tiny but real: summarize a ticket, draft a reply, update the CRM, and log a rationale. Define “good,” track attempts, and let it iterate. You’ll quickly see where memory and policy need structure so improvements actually stick.
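
To make "define good, track attempts, and let it iterate" concrete, here is a small sketch of that loop for the draft-a-reply step. It is not how A-Evolve works. `draft_reply` is a hypothetical stand-in for a model call, and the two criteria in `check` are made-up examples of what "good" could mean.

```python
from dataclasses import dataclass

@dataclass
class Attempt:
    number: int
    draft: str
    problems: list[str]

def draft_reply(ticket: str, hints: list[str]) -> str:
    """Hypothetical stand-in for a model call; applies hints from earlier failures."""
    reply = f"Thanks for reporting: {ticket}."
    if "mention next step" in hints:
        reply += " Next step: we will follow up within one business day."
    if "add greeting" in hints:
        reply = "Hi, " + reply
    return reply

def check(draft: str) -> list[str]:
    """The definition of 'good': one hint per unmet criterion, empty if all pass."""
    problems = []
    if not draft.startswith("Hi"):
        problems.append("add greeting")
    if "Next step" not in draft:
        problems.append("mention next step")
    return problems

def run(ticket: str, max_attempts: int = 3) -> list[Attempt]:
    """Run, find friction, adjust, and try again, keeping every attempt."""
    attempts: list[Attempt] = []
    hints: list[str] = []
    for number in range(1, max_attempts + 1):
        draft = draft_reply(ticket, hints)
        problems = check(draft)
        attempts.append(Attempt(number, draft, problems))
        if not problems:
            break  # success criteria met, stop iterating
        hints = hints + [p for p in problems if p not in hints]
    return attempts
```

Calling `run("login fails")` fails both checks on the first attempt and passes on the second. The list of attempts doubles as the rationale log, and `max_attempts` is the guardrail that keeps the loop from running forever.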

So, did this week change anything?

Yes. It clarified the stack. Hardware is optimizing for agents as agents, not just model inference. Security is consolidating into a real control layer. Revenue is showing up in the unsexy places that run businesses. Research is committing to safety and auditability. And dev tooling is pushing us toward systems that learn and self-correct instead of pipelines we duct-tape by hand.

What I’d do this week

- Ship one end-to-end agent for a repeatable task and keep full, replayable logs.

- Add a simple policy layer that defines allowed tools and conditions to call them.

- Make your product machine-readable with clean docs, predictable APIs, and accurate metadata.

- Start a tiny agent SEO checklist for your brand: links, structured data, and helpful answers agents can surface.

- Keep a rolling postmortem. Every failure is a free unit test for v2.
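
The policy-layer item can start as small as a dictionary. This is a sketch under my own assumptions: the tool names, the `@example.com` rule, and the refund cap of 50 are all invented for illustration.

```python
from typing import Callable

# Hypothetical policy: tool name -> condition the call arguments must satisfy.
Policy = dict[str, Callable[[dict], bool]]

POLICY: Policy = {
    # Only send mail to addresses on our own (made-up) domain.
    "send_email": lambda args: args.get("recipient", "").endswith("@example.com"),
    # Only refund small positive amounts without a human in the loop.
    "issue_refund": lambda args: 0 < args.get("amount", 0) <= 50,
}

def is_allowed(tool: str, args: dict, policy: Policy = POLICY) -> bool:
    """A call is allowed only if the tool is listed and its condition holds."""
    condition = policy.get(tool)
    return condition is not None and condition(args)
```

Here `is_allowed("issue_refund", {"amount": 20})` is `True`, `is_allowed("issue_refund", {"amount": 500})` is `False`, and any tool missing from `POLICY` is denied by default. Every postmortem entry can then become one more assertion against `is_allowed`.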

FAQ: Agentic AI right now

What is Agentic AI, in simple terms?

Agentic AI pairs models with tools, memory, and policies so the system can plan and take actions, not just answer prompts. Think automation that reasons, calls APIs, and keeps state, with guardrails you control.

Why did March 29 matter so much?

Multiple signals landed on the same day: a chip blueprint for agent workloads, a clearer security control layer, a real revenue stat, a multi-year research pact, and a self-correcting toolkit narrative. Together, they lowered the friction to build reliable agents.

How do I pick my first agent use case?

Choose a high-frequency task with obvious success metrics and minimal edge cases. Give the agent a clean knowledge source, one or two tools, and a tight feedback loop. You want fast, measurable learning, not a grand theory of everything.

How should I think about security from day one?

Model what the agent is allowed to do before what it should do. Enforce identity, scope tools with guardrails, log every action, and keep secrets out of prompts. If you can’t replay decisions, you don’t have a system yet.
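
One way to test the "can you replay decisions" bar is to log each decision with its input, then re-run the inputs later and compare. In this sketch `decide` is a hypothetical rule-based step standing in for the agent, and the log is a plain list of JSON lines. With a non-deterministic model you would also need to record the model output itself.

```python
import json

def decide(ticket: dict) -> str:
    """Hypothetical deterministic decision step (rules stand in for a model)."""
    if ticket["priority"] == "high":
        return "escalate"
    return "auto_reply"

def record(ticket: dict, log: list[str]) -> str:
    """Make a decision and append input plus decision to the log as one JSON line."""
    decision = decide(ticket)
    log.append(json.dumps({"input": ticket, "decision": decision}, sort_keys=True))
    return decision

def replay(log: list[str]) -> list[dict]:
    """Re-run every logged input and return the entries whose decision changed."""
    mismatches = []
    for line in log:
        entry = json.loads(line)
        if decide(entry["input"]) != entry["decision"]:
            mismatches.append(entry)
    return mismatches
```

If `replay(log)` returns an empty list, the current logic still agrees with everything it decided before. Any entry it does return is a decision you can no longer explain, which is worth knowing before a customer asks.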

What developer skills help most?

Get comfortable with vector databases, event-driven orchestration, and persistent memory patterns. These are the building blocks of resilient, stateful agents that survive real workloads.
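
If vector stores are new to you, this toy version shows the core idea: store text with a vector, then retrieve by similarity. It is a sketch only. The word-count `embed` function is my simplification (a real system would use a learned embedding model and a proper vector database), and the `Memory` class is hypothetical.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts, used here instead of a real model."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

class Memory:
    """In-memory store: keeps each text with its vector, searches by similarity."""

    def __init__(self) -> None:
        self.items: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self.items.append((text, embed(text)))

    def search(self, query: str, k: int = 1) -> list[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]
```

After adding "customer prefers email contact" and "invoice 42 was paid in march", a search for "how does the customer want contact" returns the first note, because it shares the words "customer" and "contact" with the query. Persistence is left out here; writing `items` to disk between runs would be the next step.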

If you’ve been waiting for a sign to start, this is it. Keep it small, instrument everything, and iterate.