Agentic AI finally clicked for me this week. I blocked 48 hours to cut through the noise and focus on what actually moved, and it changed how I’m planning my next 90 days.

Quick answer: Agentic AI is leaving the lab and getting real. On March 13, 2026, Santander and Visa finalized a pilot for hands-free payments across LatAm. The same day, IBM detailed multi-agent incident investigations in Instana, and practical UK legal guidance for businesses arrived. By March 14, 2026, physically oriented tools were in the spotlight. My takeaway: build small, log everything, and keep a human in the loop.

Hands-free payments are here

On March 13, 2026, Santander and Visa said they finalized an agentic AI payments pilot across Latin America. A payment isn’t just a swipe. It’s KYC checks, fraud scoring, currency conversions, routing, messaging, and settlement. Turning that into an agentic workflow means software plans the steps, calls the right tools, and verifies the result without you nudging it. You can read the announcement via Bitcoin.com News.

Here’s what that signaled to me: agents aren’t chatbots; they’re orchestrators. Picture an agent watching a cart, auto-picking the cheapest compliant route, pinging a fraud model, and asking me only when something looks off. That ask-when-needed loop is the quiet superpower.

How I’d try this in a weekend

I storyboard a tiny checkout. Give the agent three tools: a fraud score API, a currency converter, and a synthetic charge endpoint that returns success or a reason code. Its job: move a transaction from intent to approved while logging evidence for every step. If the charge is denied, it retries once on a second route, then asks me before any further attempt. That ask is the safety rail.
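To make that storyboard concrete, here is a minimal Python sketch. It assumes three fake in-process tools (`fraud_score`, `convert`, `charge`) standing in for real sandbox endpoints; those names, the exchange rates, the `ISSUER_DECLINED` reason code, and the 0.8 fraud threshold are all hypothetical. The point is the control flow: two routes run automatically, a third needs a human, and every step leaves a receipt.

```python
import json
import time
from dataclasses import dataclass, field

# Hypothetical stand-ins for sandbox tools; none of these are real payment APIs.
def fraud_score(txn: dict) -> float:
    """Fake fraud model: bigger amounts look riskier (0.0 to 1.0)."""
    return min(1.0, txn["amount"] / 10_000)

def convert(amount: float, src: str, dst: str) -> float:
    """Fake currency converter with made-up fixed rates."""
    rates = {("USD", "BRL"): 5.0, ("USD", "MXN"): 17.0}
    return amount if src == dst else round(amount * rates[(src, dst)], 2)

def charge(route: str, amount: float) -> dict:
    """Synthetic charge endpoint: 'route_a' always declines, other routes succeed."""
    if route == "route_a":
        return {"ok": False, "reason": "ISSUER_DECLINED"}
    return {"ok": True, "reason": None}

@dataclass
class CheckoutAgent:
    routes: list
    fraud_threshold: float = 0.8
    receipts: list = field(default_factory=list)

    def log(self, step: str, **evidence) -> None:
        # Every step leaves a timestamped receipt a human can audit later.
        self.receipts.append({"step": step, "ts": time.time(), **evidence})

    def run(self, txn: dict, ask_human) -> str:
        score = fraud_score(txn)
        self.log("fraud_check", score=score)
        if score >= self.fraud_threshold and not ask_human(f"Fraud score {score:.2f}. Proceed?"):
            self.log("stopped", by="human")
            return "blocked"
        amount = convert(txn["amount"], txn["currency"], txn["settle_in"])
        self.log("convert", amount=amount, currency=txn["settle_in"])
        for attempt, route in enumerate(self.routes):
            # The first two routes run automatically; anything further needs a human.
            if attempt >= 2 and not ask_human(f"Two routes failed. Try {route}?"):
                self.log("stopped", by="human")
                return "needs_review"
            result = charge(route, amount)
            self.log("charge", route=route, **result)
            if result["ok"]:
                return "approved"
        return "declined"

if __name__ == "__main__":
    agent = CheckoutAgent(routes=["route_a", "route_b", "route_c"])
    txn = {"amount": 120.0, "currency": "USD", "settle_in": "BRL"}
    print(agent.run(txn, ask_human=lambda question: input(question + " [y/N] ") == "y"))
    print(json.dumps(agent.receipts, indent=2))
```

Swapping the fake functions for real sandbox calls should not change the `run` loop; the `receipts` list is what you would persist.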

Even if you never touch payments, the pattern holds: define success, give a small toolbelt, and force the agent to keep receipts.

Self-healing ops stopped being sci-fi

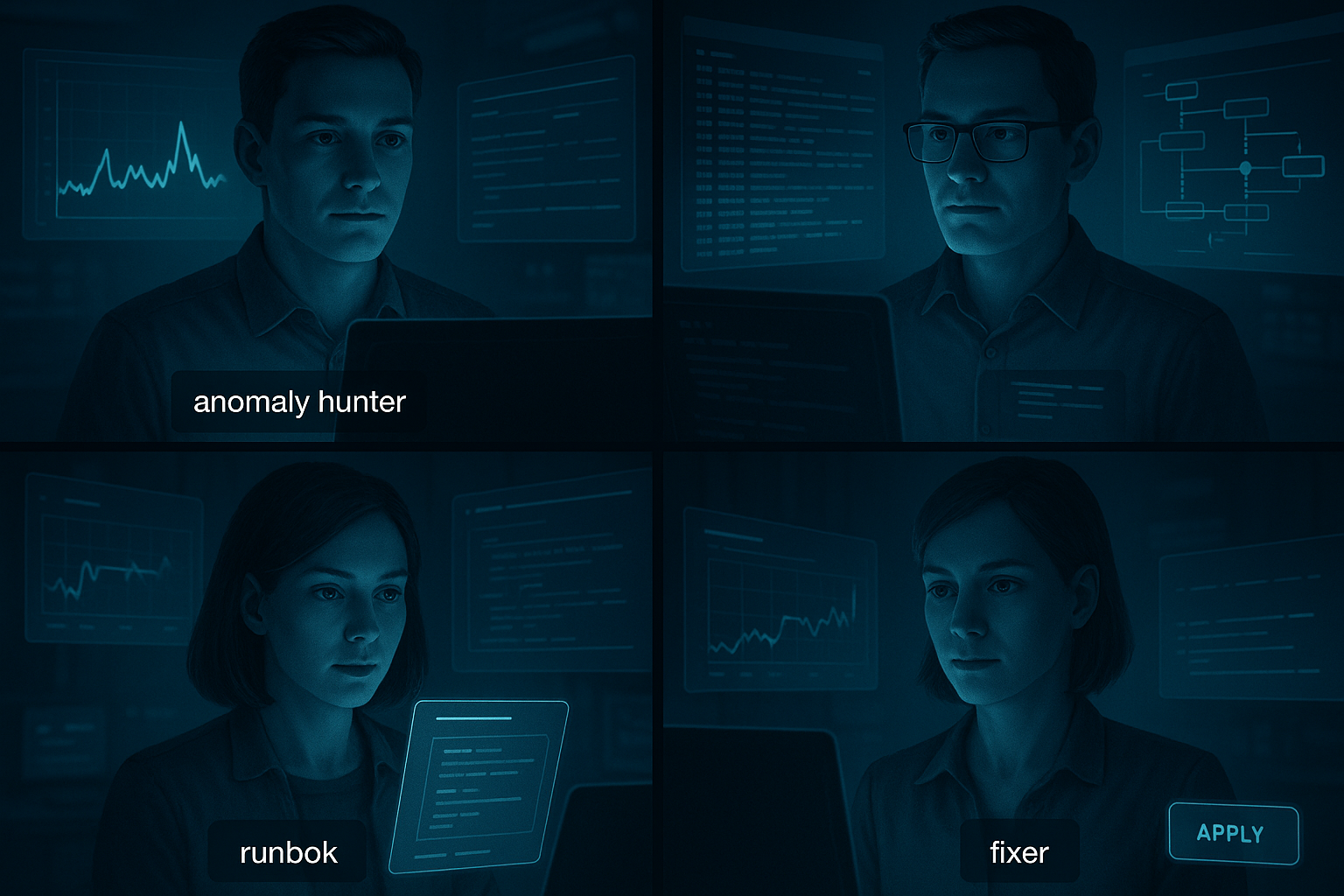

Also on March 13, 2026, IBM shared how Instana uses multi-agent workflows for incident investigation. Instead of one giant model doing everything, smaller agents split the job: one hunts anomalies, another correlates logs and traces, a third drafts a remediation plan, and a fourth runs it only if guardrails pass. That reads like therapy if you’ve been on call. IBM’s own write-up has the full breakdown.
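The role split IBM describes can be illustrated with a toy Python pipeline. This is not Instana's code or API: the four functions, the 5% error-rate threshold, the `OOMKilled` rule, and both guardrails are hypothetical, chosen only to show each role handing a narrower job to the next and the executor refusing to act when a guardrail fails.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Toy illustration of splitting incident work across four roles.
# This is NOT Instana's implementation or API; every name here is hypothetical.

@dataclass
class Incident:
    service: str
    error_rate: float
    evidence: List[str] = field(default_factory=list)
    plan: Optional[str] = None

def anomaly_hunter(metrics: dict, threshold: float = 0.05) -> List[Incident]:
    """Role 1: flag services whose error rate crosses a threshold."""
    return [Incident(svc, rate) for svc, rate in metrics.items() if rate > threshold]

def correlator(incident: Incident, logs: List[str]) -> Incident:
    """Role 2: attach log lines that mention the flagged service."""
    incident.evidence = [line for line in logs if incident.service in line]
    return incident

def planner(incident: Incident) -> Incident:
    """Role 3: draft a remediation plan from a single hard-coded rule."""
    if any("OOMKilled" in line for line in incident.evidence):
        incident.plan = f"restart {incident.service} with a higher memory limit"
    return incident

def executor(incident: Incident, guardrails: List[Callable[[Incident], bool]]) -> str:
    """Role 4: run the plan only if every guardrail passes; otherwise escalate."""
    if incident.plan and all(check(incident) for check in guardrails):
        return f"EXECUTED: {incident.plan}"
    return f"ESCALATED: {incident.service}"

def investigate(metrics: dict, logs: List[str]) -> List[str]:
    guardrails = [
        lambda i: len(i.evidence) >= 2,      # at least two corroborating log lines
        lambda i: i.service != "payments",   # never auto-remediate a critical service
    ]
    return [executor(planner(correlator(i, logs)), guardrails) for i in anomaly_hunter(metrics)]

if __name__ == "__main__":
    metrics = {"checkout": 0.12, "search": 0.01, "payments": 0.09}
    logs = ["checkout pod OOMKilled", "checkout OOMKilled again", "payments upstream timeout"]
    for outcome in investigate(metrics, logs):
        print(outcome)
```

Running it prints an executed restart for `checkout` and an escalation for `payments`, which gets no plan and is fenced off by the second guardrail anyway.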

What I tell beginners: projects stall when the agent’s job is fuzzy. Multi-agent setups force clear roles. Even a simple two-hat flow helps: a detective agent gathers facts, a fixer proposes actions, and I approve or deny. That separation is the cheapest way I know to curb hallucinations under pressure.

A tiny ops starter you can actually build

Spin up a sandbox service that throws a 500 error every 50 requests. Feed logs to a detective agent with three tools: query logs, query metrics, open a runbook. Give the fixer one action: redeploy service. Rule of thumb: the fixer cannot act unless the detective shares a chain of evidence that matches a known runbook step. The first time you see the fixer refuse to act on thin evidence, the pattern clicks.
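A minimal Python sketch of that starter, assuming a simulated service rather than a real one. `flaky_service`, `RUNBOOK`, the evidence labels, and the `redeploy` callback are hypothetical. The detective can only read; the fixer can only act when the detective's evidence covers every requirement of a runbook step.

```python
import itertools

# Hypothetical sandbox service: every 50th request returns HTTP 500.
def flaky_service():
    for n in itertools.count(1):
        yield 500 if n % 50 == 0 else 200

# Hypothetical runbook: each step lists the evidence it requires before anyone acts.
RUNBOOK = {
    "redeploy_service": {"periodic_500s", "no_dependency_errors"},
}

def detective(statuses, dependency_errors):
    """Gathers facts only; it has no way to change the system."""
    evidence = set()
    failures = [i for i, status in enumerate(statuses, start=1) if status == 500]
    gaps = {b - a for a, b in zip(failures, failures[1:])}
    if len(failures) >= 2 and len(gaps) == 1:   # failures recur at a fixed interval
        evidence.add("periodic_500s")
    if dependency_errors == 0:                   # rules out a broken upstream
        evidence.add("no_dependency_errors")
    return evidence

def fixer(evidence, redeploy):
    """Acts only when the evidence covers every requirement of a runbook step."""
    for step, required in RUNBOOK.items():
        if required <= evidence:
            redeploy()
            return f"ran {step}"
    return "refused: evidence too thin"

if __name__ == "__main__":
    statuses = list(itertools.islice(flaky_service(), 200))
    print(fixer(detective(statuses, dependency_errors=0), lambda: print("redeploying...")))
    short = list(itertools.islice(flaky_service(), 60))
    print(fixer(detective(short, dependency_errors=0), lambda: print("redeploying...")))
```

With 200 requests the detective sees failures at a fixed 50-request interval and the fixer redeploys; with only 60 requests there is a single failure, so the fixer refuses.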

Agents need a body, not just a browser tab

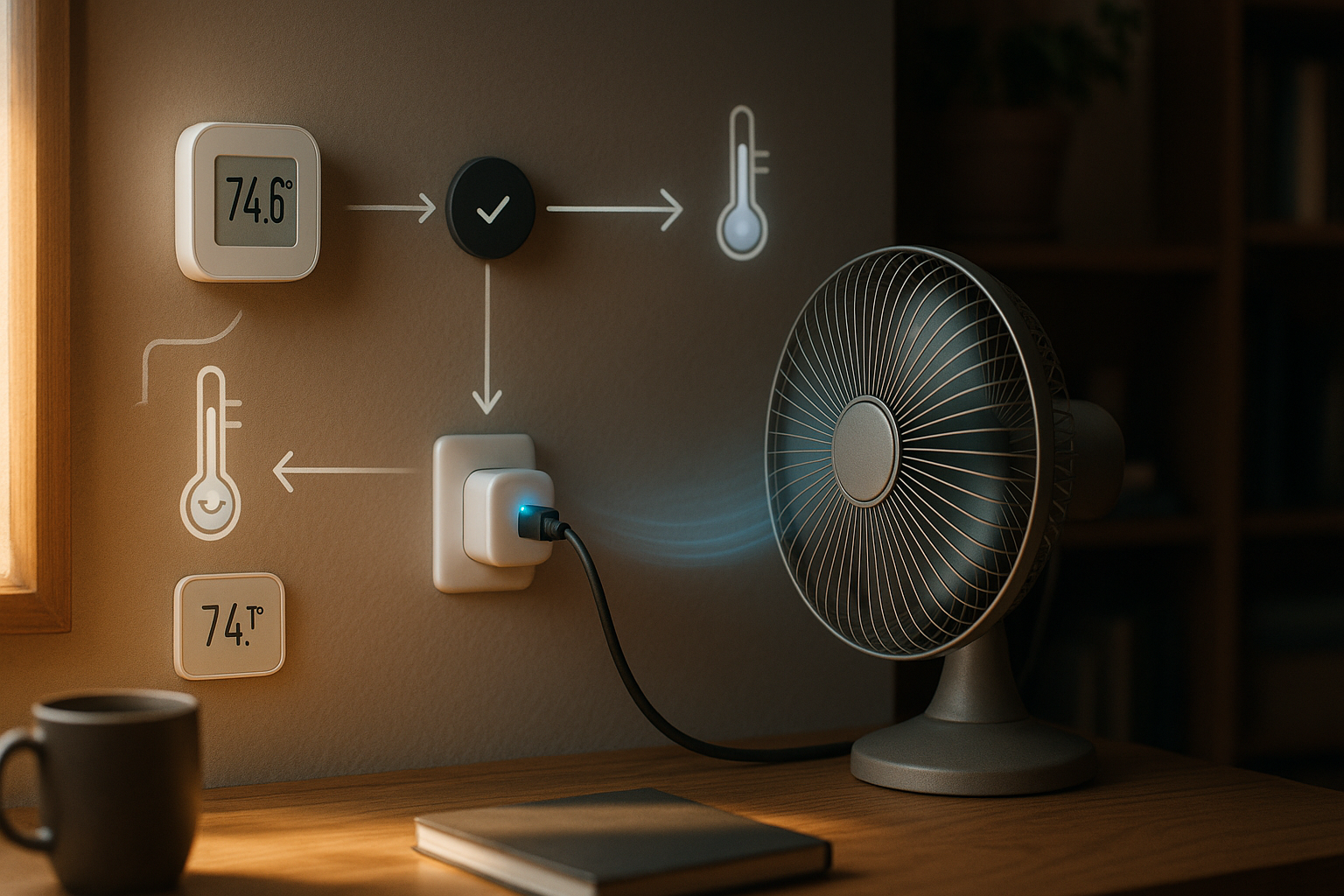

On March 14, 2026, physically oriented tools started getting real attention. I read that as a push to ground agents in the world. Think sensors, home automation, robotics, industrial IoT, and AR surfaces that tie perception to action. If you’ve ever watched a bot say it flipped a switch without checking, you know why grounding matters.

You don’t need a robot dog. Pair one real sensor with one actuator. A temperature sensor and a smart plug teach you most of the loop: read state, plan, act, verify, and revert if the reading looks wrong.

My go-to first experiment

Pick a single room. Give your agent two tools: read temp and toggle fan. Define success as holding the room between two thresholds for two hours. Make the agent explain each action in plain language and confirm it with a second reading. You’ll meet sensor drift and delayed effects on day one, which is exactly where an agent can outperform rigid if-this-then-that scripts.
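Here is a hardware-free Python sketch of the read, plan, act, verify, revert loop for the fan experiment. `SimRoom` and its made-up cooling and warming rates are assumptions standing in for a real temperature sensor and smart plug; the 21 to 23 °C band is an example. The agent uses a simple hysteresis rule (switch on above the band, keep cooling until the low end), writes a plain-language journal entry for each action, and reverts if the second reading moves the wrong way.

```python
from dataclasses import dataclass, field

# Simulated room so the loop runs without hardware. A real setup would replace
# read_temp/toggle_fan with your sensor and smart-plug calls (hypothetical here).
@dataclass
class SimRoom:
    temp: float = 24.0
    fan_on: bool = False

    def read_temp(self) -> float:
        return round(self.temp, 2)

    def toggle_fan(self, on: bool) -> None:
        self.fan_on = on

    def tick(self) -> None:
        # Made-up physics: the fan cools 0.5 C per tick, otherwise the room warms 0.3 C.
        self.temp += -0.5 if self.fan_on else 0.3

@dataclass
class FanAgent:
    low: float
    high: float
    journal: list = field(default_factory=list)

    def step(self, room: SimRoom) -> None:
        t = room.read_temp()                                        # read
        want_on = t > self.high or (room.fan_on and t > self.low)   # plan (hysteresis)
        if want_on == room.fan_on:
            self.journal.append(f"{t:.1f}C: inside policy, no action")
            return
        room.toggle_fan(want_on)                                    # act
        why = "above the band" if want_on else "reached the low end of the band"
        self.journal.append(f"{t:.1f}C: turning fan {'on' if want_on else 'off'}, {why}")
        room.tick()                                                 # let the effect land
        second = room.read_temp()                                   # verify with a second reading
        wrong_way = second >= t if want_on else second <= t
        if wrong_way:
            room.toggle_fan(not want_on)                            # revert
            self.journal.append(f"{second:.1f}C: second reading disagrees, reverted")

if __name__ == "__main__":
    room, agent = SimRoom(), FanAgent(low=21.0, high=23.0)
    for minute in range(120):   # two hours at one step per simulated minute
        agent.step(room)
        room.tick()
    print("\n".join(agent.journal[:8]))
    print(f"final: {room.read_temp():.1f}C")
```

A real sensor adds noise and a lag between switching the fan and seeing the temperature move, so the second reading may need a wait before it is trusted.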

The rulebook arrived for businesses

On March 13, 2026, a practical UK legal update landed with guidance for companies exploring agentic AI. Even if you’re not in the UK, treat it like a checklist: if your AI can act, you need to prove how, when, and under whose authority it acted. Ashurst published the summary.

What I’m baking into every beginner project now

- Every action gets a reason, a tool trace, and a human-responsible owner in a plain JSON record.

- Human-in-the-loop on high-impact steps. If money moves, configs change, or devices are touched, I require approval or a strong, pre-approved policy.

- Easy off-switch and replay. One flag pauses autonomy and I can replay the last 10 decisions to audit what the agent thought.
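The three rules above can be sketched as one small Python wrapper, under a few stated assumptions: the `HIGH_IMPACT` action names, the `approve` callback, and the `preapproved` policy set are hypothetical. A single `autonomy_paused` flag is the off-switch, and a `collections.deque` with `maxlen=10` keeps exactly the last 10 decisions for replay.

```python
from collections import deque

# Hypothetical action kinds that count as high impact in this sketch.
HIGH_IMPACT = {"move_money", "change_config", "touch_device"}

class Governor:
    """Wraps every agent decision with an off-switch, an approval gate, and a replay buffer."""

    def __init__(self, approve, preapproved=()):
        self.autonomy_paused = False          # the single flag that pauses autonomy
        self.approve = approve                # callable(action, reason) -> bool, asks a human
        self.preapproved = set(preapproved)   # high-impact actions covered by a written policy
        self.recent = deque(maxlen=10)        # only the last 10 decisions are kept

    def decide(self, action: str, reason: str, owner: str) -> bool:
        if self.autonomy_paused:
            verdict = "paused"
        elif action in HIGH_IMPACT and action not in self.preapproved:
            verdict = "approved" if self.approve(action, reason) else "denied"
        else:
            verdict = "auto"
        self.recent.append({"action": action, "reason": reason, "owner": owner, "verdict": verdict})
        return verdict in ("approved", "auto")

    def replay(self) -> None:
        for n, d in enumerate(self.recent, start=1):
            print(f"{n:2}. {d['action']} [{d['verdict']}] because {d['reason']} (owner: {d['owner']})")

if __name__ == "__main__":
    gov = Governor(approve=lambda action, reason: False, preapproved={"touch_device"})
    gov.decide("read_logs", "collect evidence", owner="ana")
    gov.decide("move_money", "refund duplicate charge", owner="ana")
    gov.decide("touch_device", "fan on, room above band", owner="ana")
    gov.autonomy_paused = True
    gov.decide("change_config", "raise timeout", owner="ana")
    gov.replay()
```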

Do this from day one and you won’t be rebuilding your stack when legal knocks.

What this week really told me about agentic AI

The March 13 to 14 headlines weren’t blips. Together they point to a clear pattern: agentic AI is learning to play nice with money, machines, and humans who need receipts. Payments show orchestration at scale, observability shows multi-agent teamwork under stress, physical tools show grounding, and the UK guidance shows the paperwork we’ll live with.

If you’re starting out, skip the magic framework hunt. Treat agent design like product design: one clear job to be done, minimal tools, visible constraints, and honest logs.

Your 7-day starter plan I wish I had

Day 1-2: Pick a boring problem and fence it in

Choose a daily, annoying workflow like reconciling invoices, triaging 500s, or toggling a device from a reading. Write a one-sentence success metric. Give your future agent only the tools it needs for that sentence.
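One way to fence a task in code, sketched in Python: write the success sentence, pair it with a machine check, and refuse any tool outside an allowlist. `TaskSpec` and the invoice tools are hypothetical; the in-memory data stands in for whatever your real workflow reads.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet

@dataclass(frozen=True)
class TaskSpec:
    success: str                     # the one-sentence success metric, in plain words
    check: Callable[[dict], bool]    # the same sentence as a machine-checkable test
    allowed_tools: FrozenSet[str]    # only the tools that sentence needs

    def call(self, tools: dict, name: str, *args):
        """Refuse any tool outside the fence, even if it exists in the registry."""
        if name not in self.allowed_tools:
            raise PermissionError(f"{name!r} is not needed for: {self.success}")
        return tools[name](*args)

if __name__ == "__main__":
    # Hypothetical invoice-reconciliation tools, faked with in-memory data.
    tools = {
        "list_invoices": lambda: ["INV-1", "INV-2"],
        "list_payments": lambda: ["INV-1", "INV-2"],
        "send_email": lambda to, body: f"sent to {to}",
    }
    spec = TaskSpec(
        success="Every invoice in today's batch matches exactly one payment.",
        check=lambda state: state["unmatched"] == 0,
        allowed_tools=frozenset({"list_invoices", "list_payments"}),
    )
    invoices = spec.call(tools, "list_invoices")
    payments = spec.call(tools, "list_payments")
    unmatched = [i for i in invoices if payments.count(i) != 1]
    print("success:", spec.check({"unmatched": len(unmatched)}))
    try:
        spec.call(tools, "send_email", "boss@example.com", "done!")
    except PermissionError as err:
        print("blocked:", err)
```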

Day 3: Two agents, two hats

Make a detective for facts and a fixer for actions. The fixer can only act when the detective shares evidence that matches a rule you wrote. It’s the smallest multi-agent pattern that doesn’t collapse into chaos.

Day 4: Put its hands on a tool

Connect exactly one external tool. It could be a payments sandbox endpoint, a CI job, or a smart plug. Force the agent to confirm the effect with a second observation before saying it’s done.

Day 5: Add the receipts

Log every action to a human-readable record: timestamp, tool used, input, output, the agent’s reason, and whether a human approved it.
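A minimal Python sketch of such a record, assuming a JSON Lines file (one JSON object per line) is human-readable enough for your needs. `ActionRecord` and `log_action` are illustrative names, not a library API; the fields mirror the list above.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Any, Dict

@dataclass
class ActionRecord:
    timestamp: str            # ISO 8601, UTC
    tool: str
    input: Dict[str, Any]
    output: Dict[str, Any]
    reason: str               # the agent's own explanation, in plain language
    human_approved: bool

def log_action(path: str, tool: str, inp: dict, out: dict, reason: str,
               human_approved: bool) -> ActionRecord:
    """Append one JSON object per line (the JSON Lines convention) and return the record."""
    record = ActionRecord(
        timestamp=datetime.now(timezone.utc).isoformat(timespec="seconds"),
        tool=tool, input=inp, output=out, reason=reason, human_approved=human_approved,
    )
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record), ensure_ascii=False) + "\n")
    return record
```

Calling `log_action("actions.jsonl", "smart_plug.toggle", {"on": True}, {"status": "ok"}, "Room read 23.4C, above the 23C ceiling.", False)` appends one line that any text editor or JSON viewer can read.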

Day 6: Break it on purpose

Give it bad input. Make a tool return nonsense. Watch how your guardrails behave. Add policies like “ask a human if confidence drops below X” or “roll back if reality disagrees.”
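Those policies can be sketched as a Python guard around a single action. The confidence floor of 0.7, the -40 to 85 plausible-reading range, the 1.0 tolerance, and every name here are assumptions for illustration; substitute your own values for X and for what counts as nonsense.

```python
from dataclasses import dataclass
from typing import Callable

CONFIDENCE_FLOOR = 0.7   # the "X" from the rule above; an arbitrary example value

@dataclass
class Proposal:
    action: str
    confidence: float   # the agent's self-reported confidence, 0.0 to 1.0
    expected: float     # the reading the agent predicts after acting

def is_plausible(value) -> bool:
    """Reject nonsense tool output: non-numbers, booleans, NaN, or out-of-range values."""
    return isinstance(value, (int, float)) and not isinstance(value, bool) and -40 <= value <= 85

def guarded_execute(p: Proposal, act: Callable[[], None], rollback: Callable[[], None],
                    observe: Callable[[], object], ask_human: Callable[[Proposal], bool],
                    tolerance: float = 1.0) -> str:
    # Policy 1: low confidence means a human decides before anything happens.
    if p.confidence < CONFIDENCE_FLOOR and not ask_human(p):
        return "held for human"
    act()
    observed = observe()
    # Policy 2: if the tool returns nonsense, undo rather than trust it.
    if not is_plausible(observed):
        rollback()
        return "rolled back: nonsense reading"
    # Policy 3: if reality disagrees with the prediction, undo.
    if abs(observed - p.expected) > tolerance:
        rollback()
        return "rolled back: reality disagrees"
    return "done"
```

For a fault-injection run, pass an `observe` that returns `"banana"` or `float("nan")` and confirm the result is a rollback rather than `"done"`.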

Day 7: Share it with one trusted user

Let someone else try your tiny system and tell you where they want full autonomy versus approval-needed. That chat surfaces the boundaries you’ll need to document anyway.

FAQ

What is agentic AI in simple terms?

Agentic AI plans steps, uses tools, and verifies results with minimal nudging. Instead of replying to text like a chatbot, it orchestrates actions toward a goal and asks you when it’s uncertain.

How is agentic AI different from a chatbot?

Chatbots talk. Agents act. A chatbot might answer a question about a payment. An agent executes the payment workflow end to end, calls fraud checks, chooses a route, and confirms success with logs.

Is it safe to let agentic AI touch payments or production?

Yes, if you design for it. Use narrow roles, strict tool access, human-in-the-loop on high-impact steps, and auditable logs. Start in sandboxes, prove the guardrails, then expand gradually.

Do I need multiple agents to start?

No. A single agent can work for small tasks, but a two-agent pattern with detective and fixer roles reduces hallucinations and makes approvals clearer. Add more roles only when the job demands it.

What logs do I need for compliance?

Record the reason, tools used, inputs, outputs, timestamps, and the human owner for each action. Keep an easy off-switch and a replay of recent decisions. That’s usually enough to satisfy early audits.

Where I’m betting my time next

After the March 13 to 14 updates, I’m doubling down on three things: agents that show their work as first-class UX, multi-agent teams with narrow, testable roles, and physical grounding even in tiny ways. I’m also treating governance like a feature, not a tax. Build approvals and audit logs in from the start and you’ll ship faster.

If those headlines sparked FOMO, good. Not because you’re late, but because the floor just rose. The new bar for useful agentic AI is simple: can plan, can act, can prove it. Start small, keep the toolbelt tight, and make the logs friendly to a future you running on two hours of sleep.